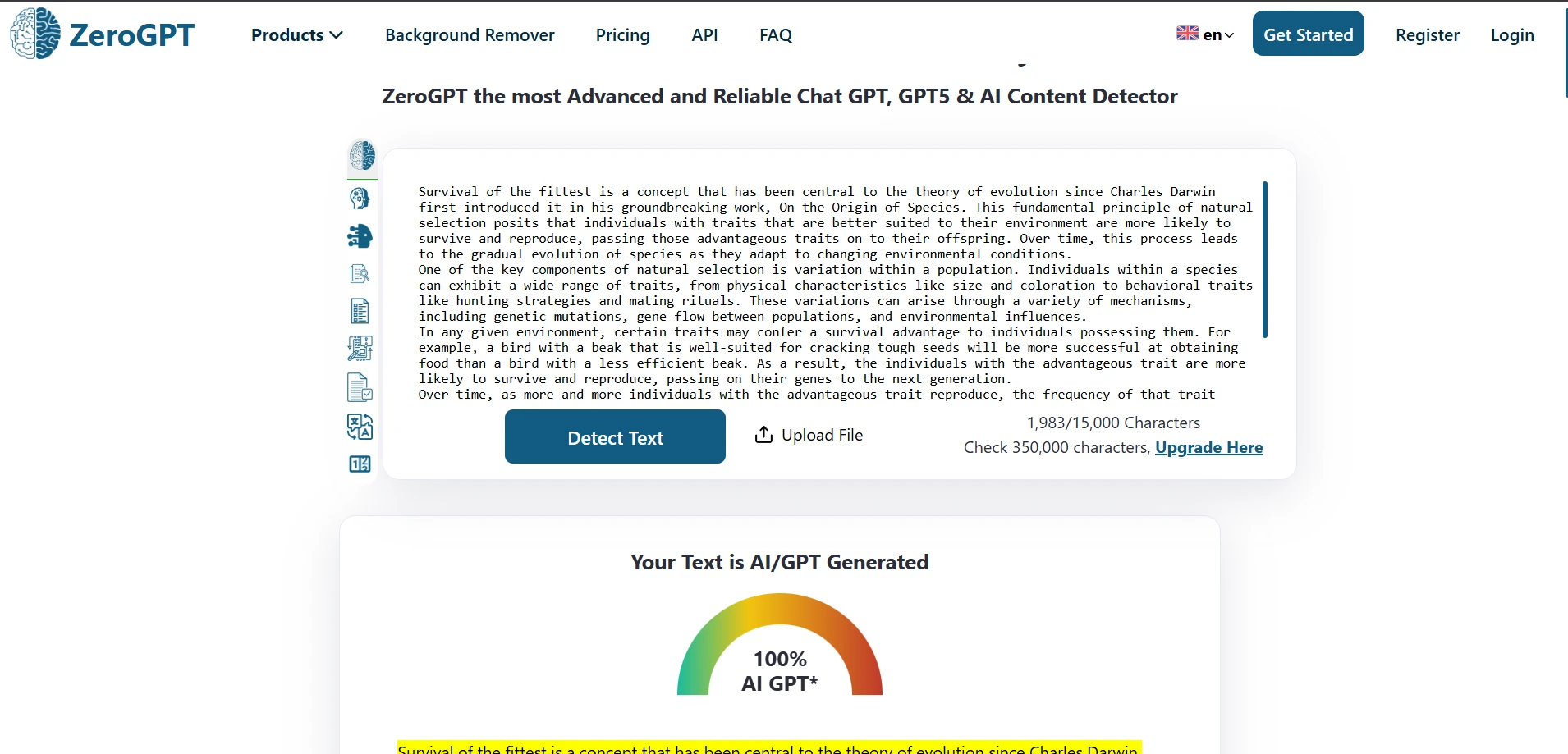

Students hear this promise all the time: paste AI-written text into a rewriter, click one button, and suddenly the result will look “human.” That claim sounds simple. My dataset says otherwise. I tested 100 BypassGPT rewrites against ZeroGPT, converted ZeroGPT’s AI score into a human score, and then looked beyond the headline numbers to see what the rewrites were actually doing to the text.

Why This Test Matters More Than the Marketing

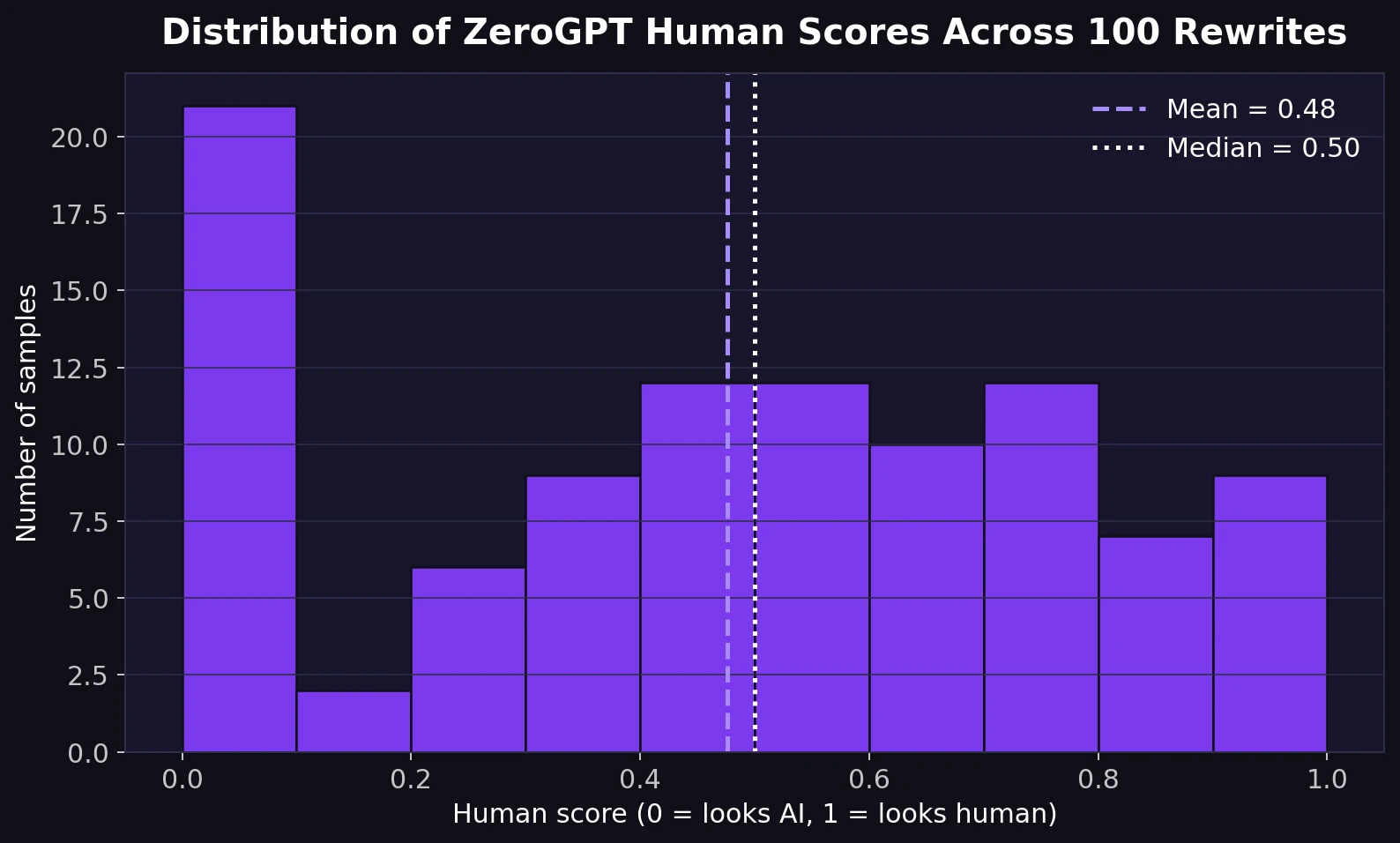

A tool can look impressive in screenshots and still fail once you look past cherry-picked examples. That is why I tested a larger batch of samples instead of one or two lucky wins. In this test, a higher score means the text looked more human to ZeroGPT. A score of 0.80 means 80% human, while 0.20 means only 20% human, so ZeroGPT still leaned strongly toward AI.
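
The article does not spell out how the AI score was converted into a human score. Here is a minimal sketch, assuming ZeroGPT's AI score is stored as a fraction between 0 and 1 and the human score is simply its complement. The function name `to_human_score` is hypothetical.

```python
def to_human_score(ai_score: float) -> float:
    """Convert an AI score (0..1) into a human score (0..1).

    Assumption: the human score is the complement of the AI score.
    """
    if not 0.0 <= ai_score <= 1.0:
        raise ValueError(f"AI score must be between 0 and 1, got {ai_score}")
    # Round to avoid floating-point noise such as 0.19999999999999996.
    return round(1.0 - ai_score, 4)


# An AI score of 0.20 corresponds to a human score of 0.80 (80% human).
print(to_human_score(0.20))
```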

I also wanted this review to be useful for students, so I did not stop at the average. I checked patterns inside the CSV: how often the rewrites passed, how they behaved on structured content such as numbered lists, and whether the rewrite introduced quality problems like broken headings, changed numbers, or awkward wording.

What stood out immediately

- The average human score across all 100 rewrites was 47.63%.

- The median score was 50%. Median simply means the middle value when all scores are lined up from lowest to highest.

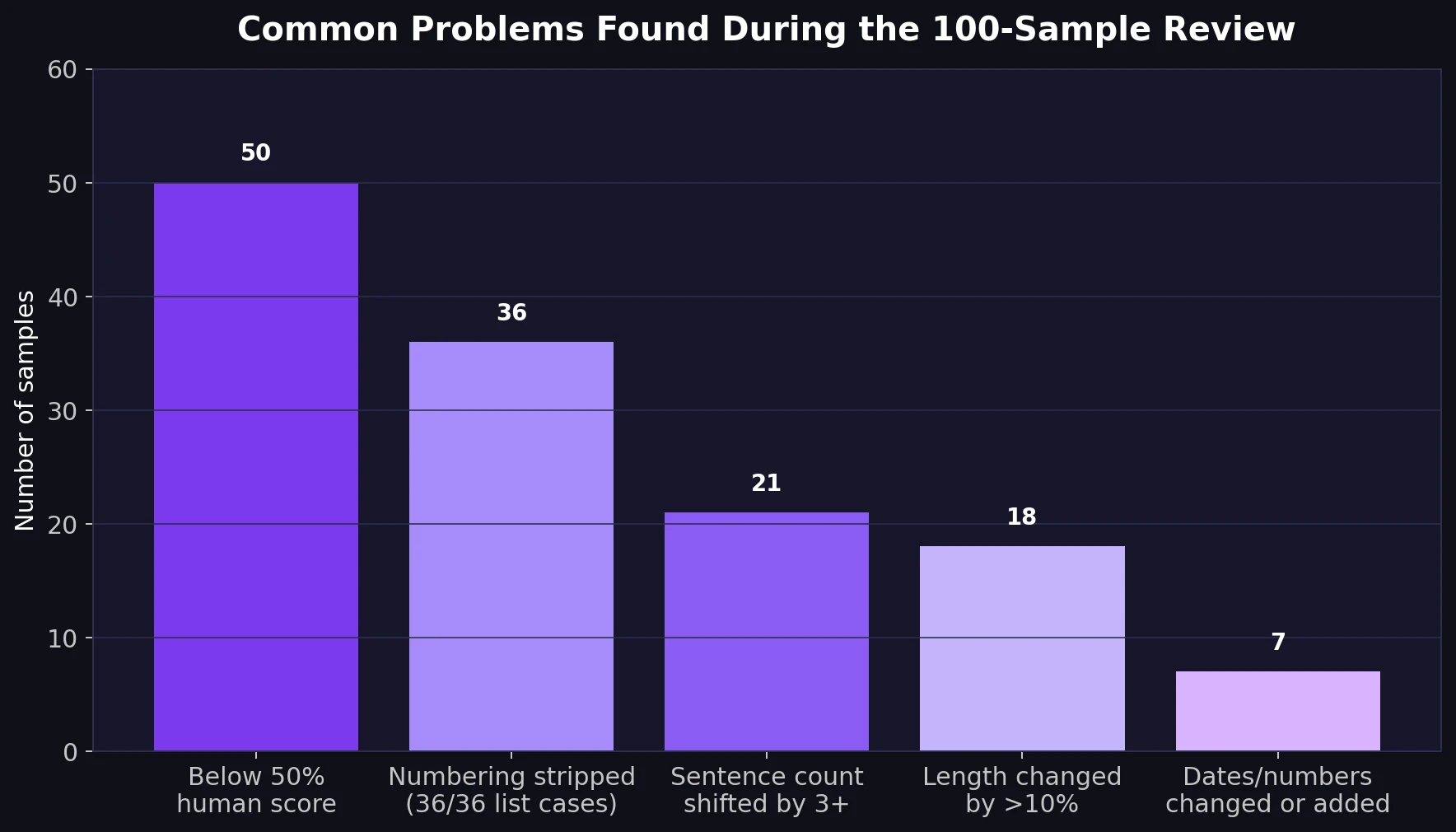

- 50 out of 100 rewrites still landed below a 50% human score.

- Only 16 out of 100 reached a strong-looking result of 80% human or higher.

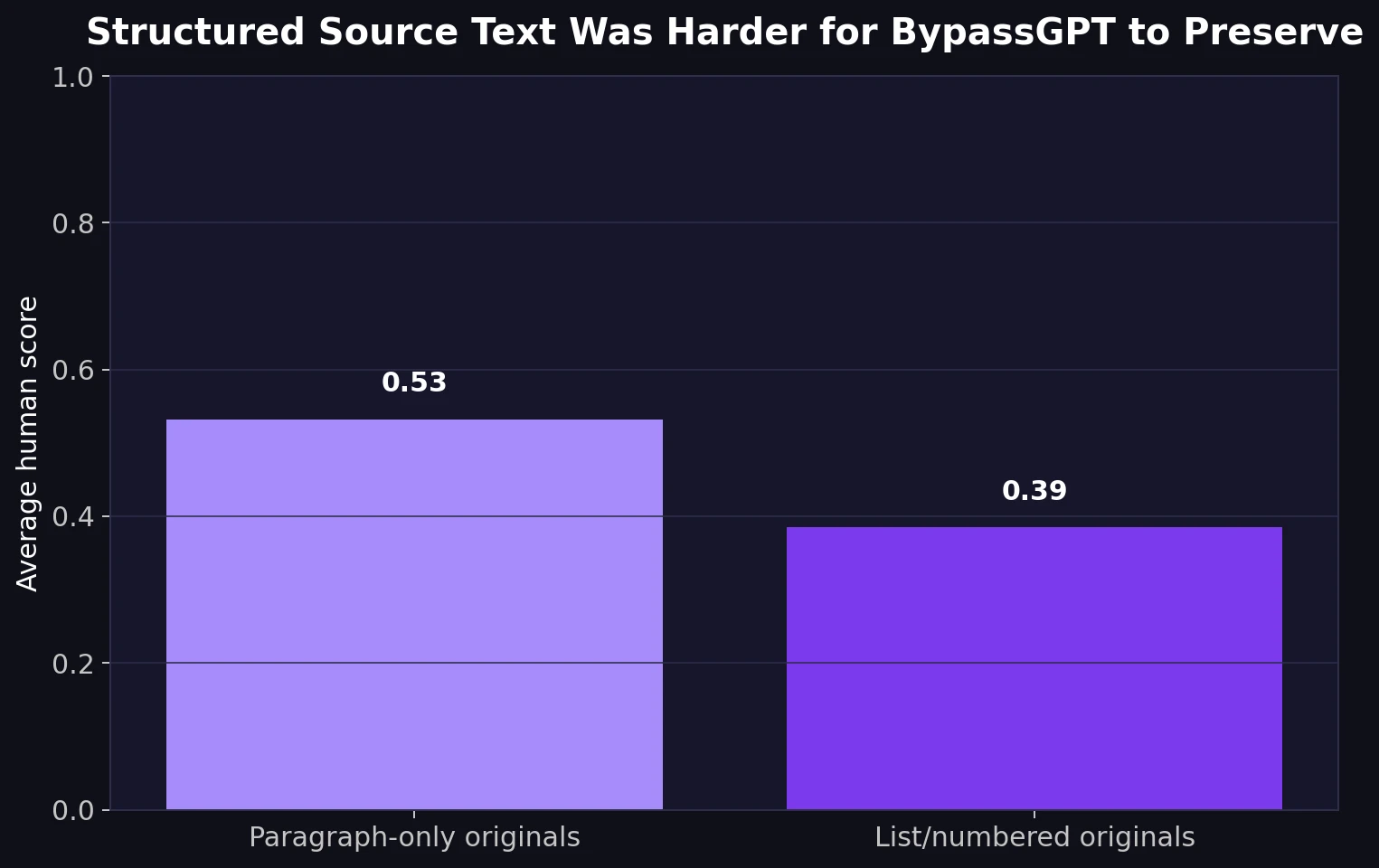

- For originals that used lists or numbering, the average dropped to 38.5% human, compared with 53.2% human for plain paragraph-style text.
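
The headline figures above are simple to recompute from a list of human scores. The sketch below shows one way to do it with Python's standard library. The sample scores are made up for illustration and are not the values from the test CSV, and the function name `summarize` is hypothetical.

```python
from statistics import mean, median


def summarize(human_scores):
    """Return headline statistics for human scores in the 0..1 range."""
    if not human_scores:
        raise ValueError("need at least one score")
    return {
        "mean": mean(human_scores),
        # Middle value once the scores are sorted (average of the two
        # middle values when the count is even).
        "median": median(human_scores),
        "below_half": sum(s < 0.5 for s in human_scores),
        "at_least_80": sum(s >= 0.8 for s in human_scores),
    }


# Made-up scores for illustration only; NOT the data from the test.
example_scores = [0.0, 0.1, 0.45, 0.55, 0.8, 0.95]
print(summarize(example_scores))
```

Reporting the mean and the median together is useful here because a pile of near-zero scores can pull the mean down without moving the median much.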

The Big Picture: BypassGPT Was Not Consistent

The first chart tells the main story. The scores are spread across the whole range, and not in a reassuring way. There are some strong passes, but there is also a large cluster of very weak scores close to zero. That means the tool did not behave like a dependable “bypass” system. It behaved more like a gamble.

For a student, that inconsistency matters more than the occasional success story. A rewriting tool only helps if it works reliably. Here, the average result stayed under 50% human, which is not the profile of a tool that can be trusted to “clean” AI text every time.

Passing Once Is Not the Same as Passing Reliably

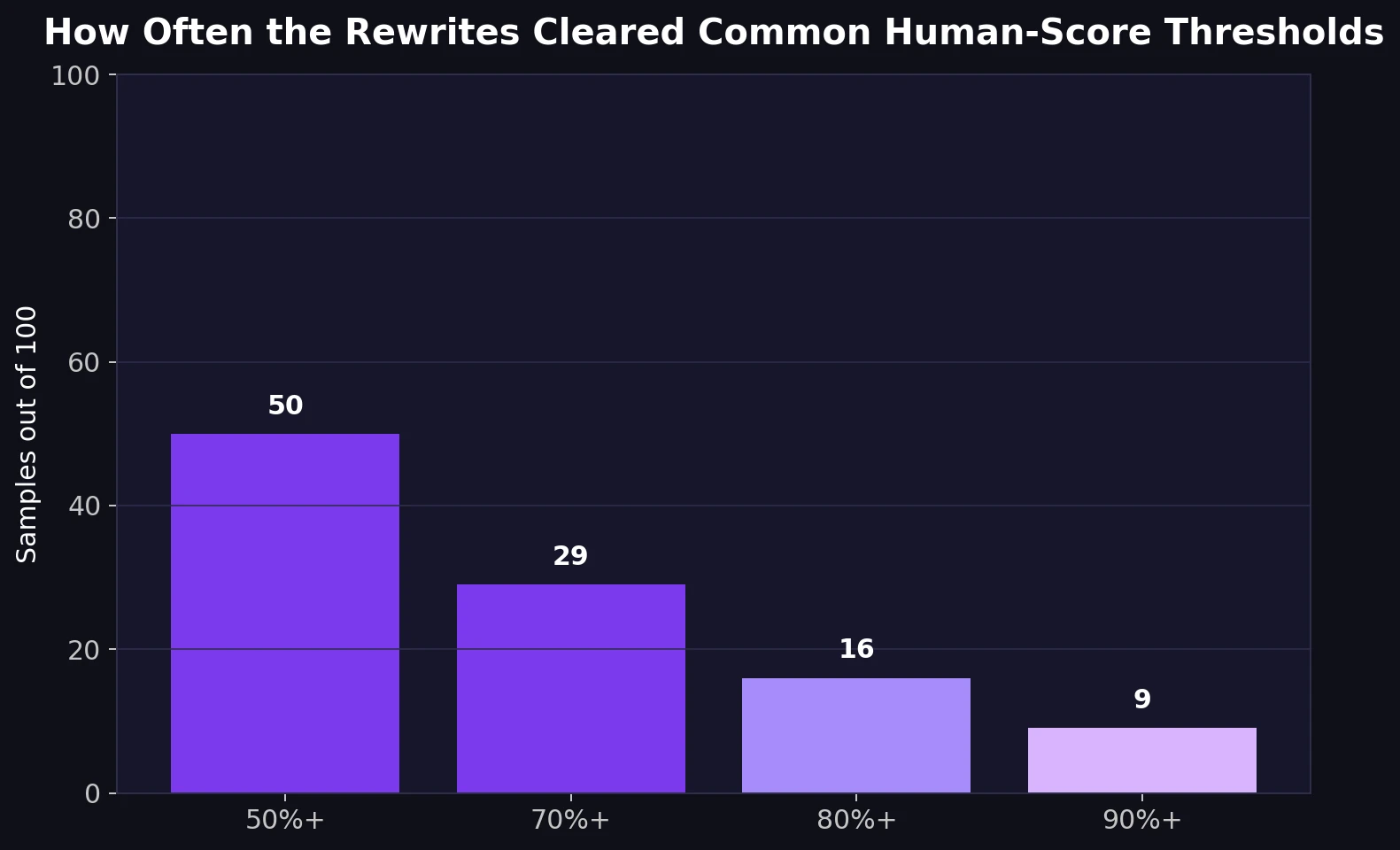

A second way to read the data is through thresholds. A threshold is just a cut-off point. For example, “How many rewrites cleared 70% human?” is a threshold question. This makes the result easier to understand than staring at raw decimals.

Only 29% of the samples cleared 70% human. Only 16% cleared 80% human. Only 9% made it to 90% human. That is the opposite of what strong product messaging suggests. If the tool were genuinely reliable, the right side of this chart would be much fuller.
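
The threshold view can be reproduced with a few lines of code. This is a rough sketch, assuming human scores are stored as fractions between 0 and 1 and that "cleared" means "greater than or equal to" the cut-off. The function name `pass_rates` and the sample scores are hypothetical.

```python
def pass_rates(human_scores, thresholds=(0.5, 0.7, 0.8, 0.9)):
    """Return the share of scores at or above each threshold."""
    if not human_scores:
        raise ValueError("need at least one score")
    total = len(human_scores)
    return {
        t: sum(score >= t for score in human_scores) / total
        for t in thresholds
    }


# Made-up scores for illustration only; NOT the data from the test.
example_scores = [0.0, 0.1, 0.45, 0.55, 0.8, 0.95]
for threshold, rate in pass_rates(example_scores).items():
    print(f"cleared {threshold:.0%} human: {rate:.0%} of samples")
```

Because each higher threshold is a stricter test, the pass rate can only stay flat or fall as the cut-off rises, which is why the right side of such a chart is the part worth watching.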

The Hidden Weakness: Structured Writing Broke More Easily

This was one of the most useful findings in the CSV. BypassGPT struggled more when the original text had structure: numbered tips, step-by-step guidance, or list-style sections. Those texts did not just score lower. They were more likely to come back with damaged formatting.

In all 36 cases where the original used numbered formatting, the numbering was stripped out in the rewrite. That is a serious pattern, not a random glitch. If the source text was written as “1, 2, 3” steps, the output flattened it, usually into loose paragraphs or label-style fragments. For students writing explainers, revision notes, or how-to pieces, that loss of structure can hurt clarity even before any detector sees the result.
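
A check like this can be automated. The sketch below is one possible approach, not necessarily how the CSV was analyzed: it counts lines that start with a number followed by a period or closing parenthesis, then flags rewrites where the original had numbered items and the rewrite has none. The names `count_numbered_items` and `numbering_stripped` are hypothetical, and the sample texts are invented around the “2. Soundproof Curtains” heading.

```python
import re

# A line that starts with digits, then "." or ")", then some text.
NUMBERED_LINE = re.compile(r"^[ \t]*\d+[.)][ \t]+\S", re.MULTILINE)


def count_numbered_items(text: str) -> int:
    """Count lines that look like numbered list items or numbered headings."""
    return len(NUMBERED_LINE.findall(text))


def numbering_stripped(original: str, rewrite: str) -> bool:
    """True when the original had numbered items and the rewrite has none."""
    return count_numbered_items(original) > 0 and count_numbered_items(rewrite) == 0


# Invented sample texts for illustration only.
original = "1. Thick Rugs\nRugs absorb sound.\n2. Soundproof Curtains\nCurtains block noise."
rewrite = "Thick Rugs\nRugs soak up sound.\nSoundproof Curtains\nCurtains keep noise out."

print(count_numbered_items(original), count_numbered_items(rewrite))
print(numbering_stripped(original, rewrite))
```

A pattern this simple will also match a sentence that happens to start a line with a year followed by a period, so flagged samples are still worth a manual look.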

What Else Went Wrong Inside the Rewrites?

The score alone does not tell the whole story, so I reviewed the rewritten text itself. That is where the more interesting problems appeared.

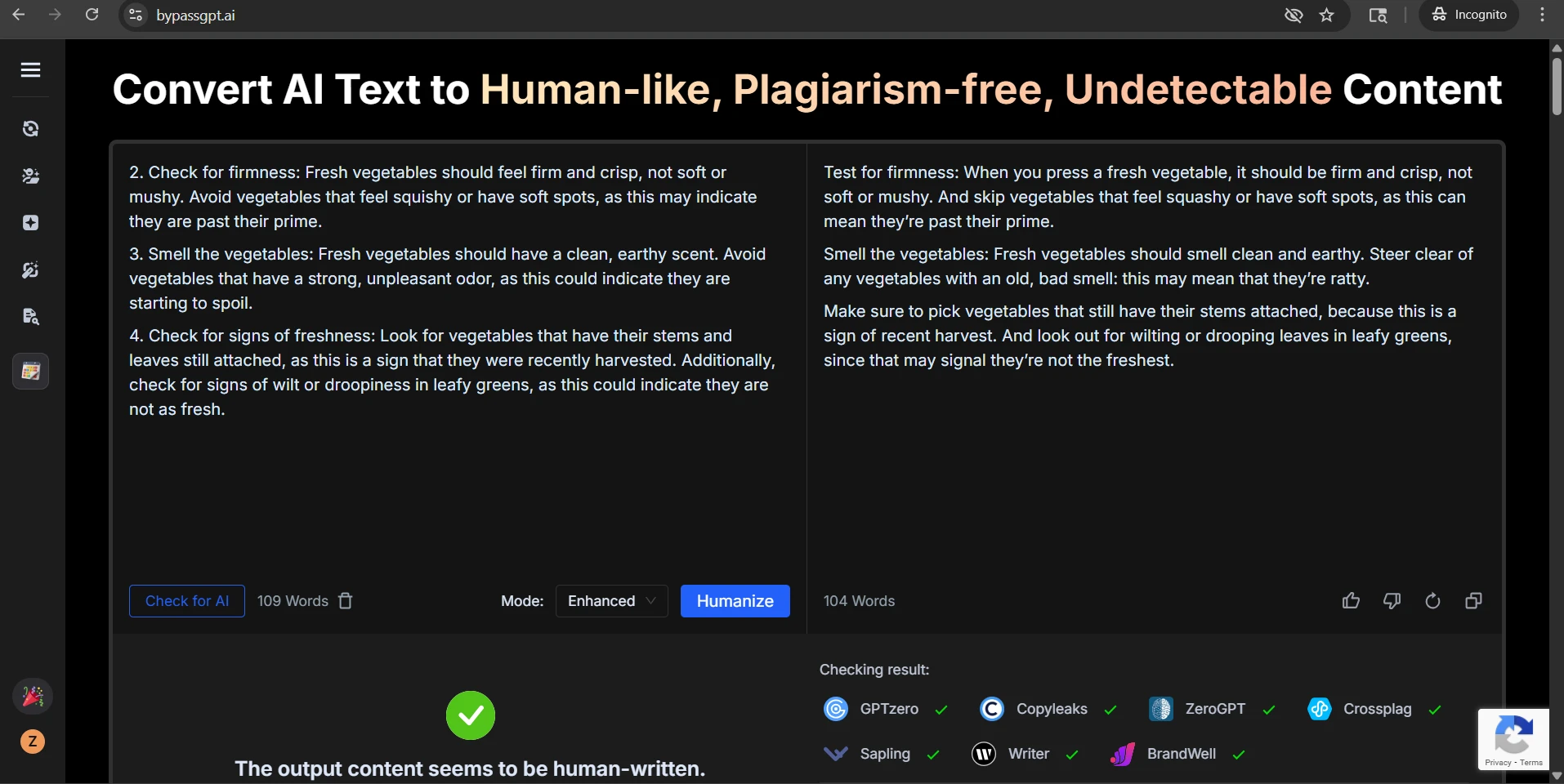

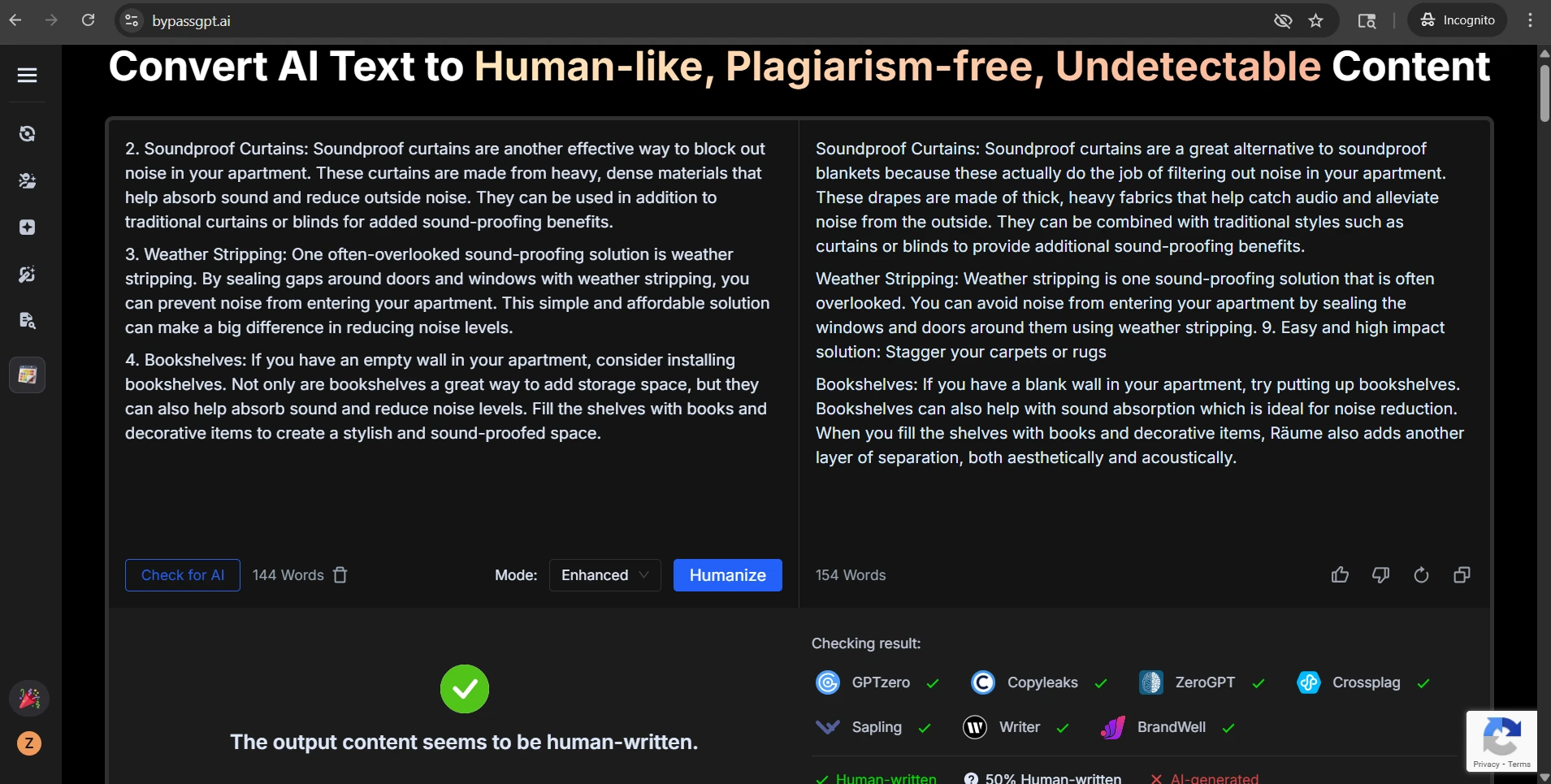

The most common issue was formatting erosion. Lists lost their numbering. Headings were weakened or blended into body text. In some samples, a clear step such as “2. Soundproof Curtains” turned into a plain heading without its place in the sequence. In another, the rewrite inserted a stray new fragment: “9. Easy and high impact solution: Stagger your carpets or rugs”, even though that numbered item was not part of the original sequence.

I also found heading drift, where the rewrite changed the meaning of a heading instead of simply rephrasing it. One eye-care sample originally advised readers to wear a wide-brimmed hat. The rewrite produced “Avoid sunglasses”, which flips the logic of the section and makes the advice worse, not better.

Another recurring issue was precision loss. In at least 7 samples, meaningful dates or numbers were changed, removed, or introduced. Sometimes the shift was minor, like turning “the 1970s” into “1970.” Other times the rewrite added new numbers that were not there before. When a tool changes dates, ages, or measurements, it is no longer just paraphrasing. It is tampering with facts.
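
Precision loss of this kind can be screened for automatically before reading each sample by hand. The following is a minimal sketch, assuming a comparison of the number-like tokens in the original and the rewrite. The name `number_drift` is hypothetical, and the sample sentences are invented apart from the “1970s” to “1970” shift mentioned above.

```python
import re
from collections import Counter

# Digits with optional decimal or thousands separators, plus an optional
# trailing "s" so that a decade like "1970s" differs from the year "1970".
NUMBER = re.compile(r"\d+(?:[.,]\d+)*(?:s\b)?")


def number_drift(original: str, rewrite: str) -> dict:
    """Report number tokens that were removed from or added to the rewrite."""
    before = Counter(NUMBER.findall(original))
    after = Counter(NUMBER.findall(rewrite))
    return {
        "removed": sorted((before - after).elements()),
        "added": sorted((after - before).elements()),
    }


# Invented sample sentences for illustration only.
original = "The style became popular in the 1970s and went through 3 revivals."
rewrite = "The style became popular in 1970 and went through 3 revivals over 12 years."

print(number_drift(original, rewrite))
```

This only catches digits. A rewrite that turns “three” into “four” would need a separate check, so an empty report does not prove the facts survived.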

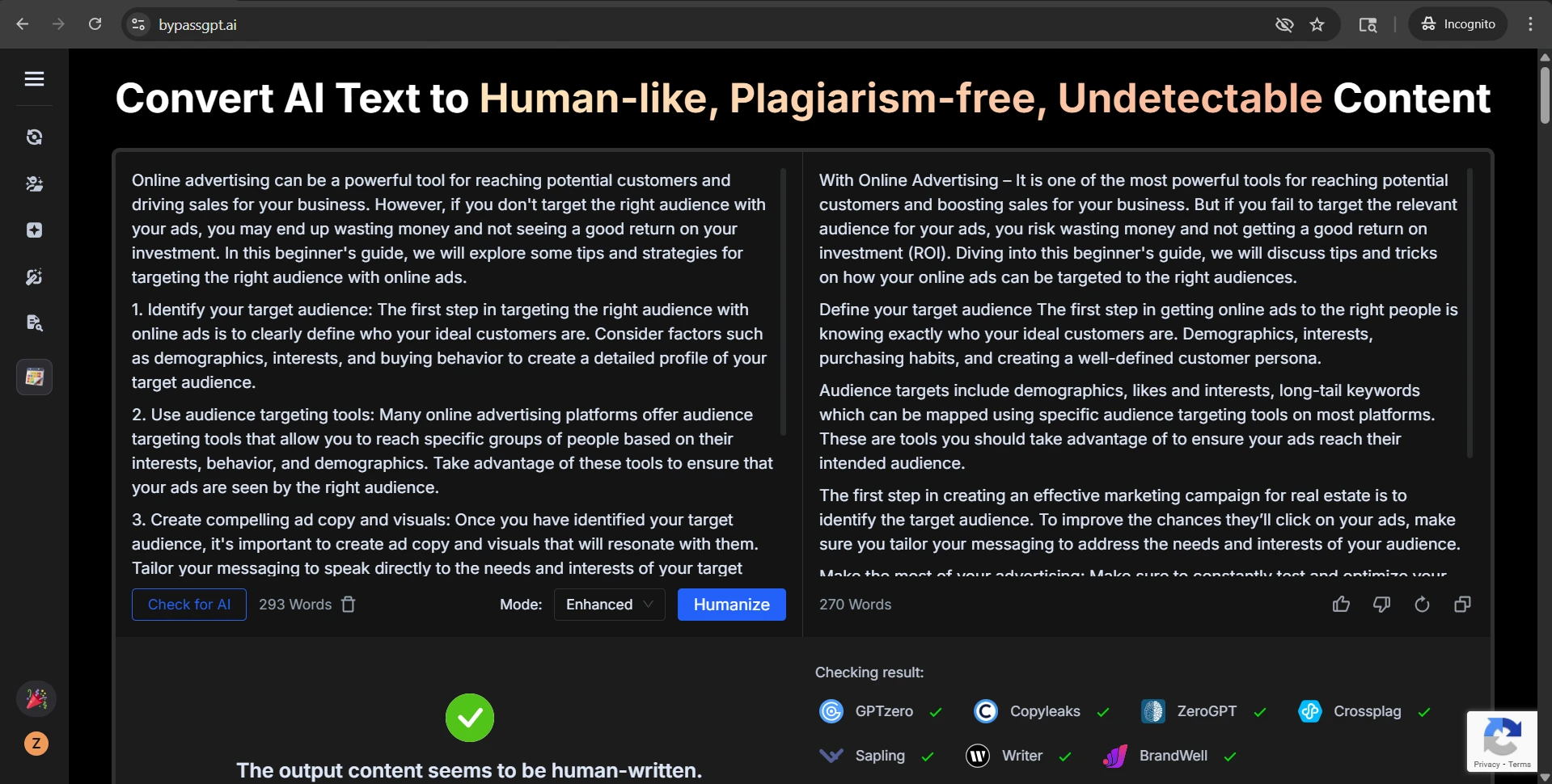

The fourth problem was tone wobble. Some outputs became strangely casual or promotional, using phrases that did not match the source. Others became clumsy in the opposite direction, producing awkward lines such as “one of the most interesting game” or phrases that sounded translated rather than naturally rewritten. That matters because students are not only judged on whether text looks human, but also on whether it reads smoothly and keeps the original meaning.

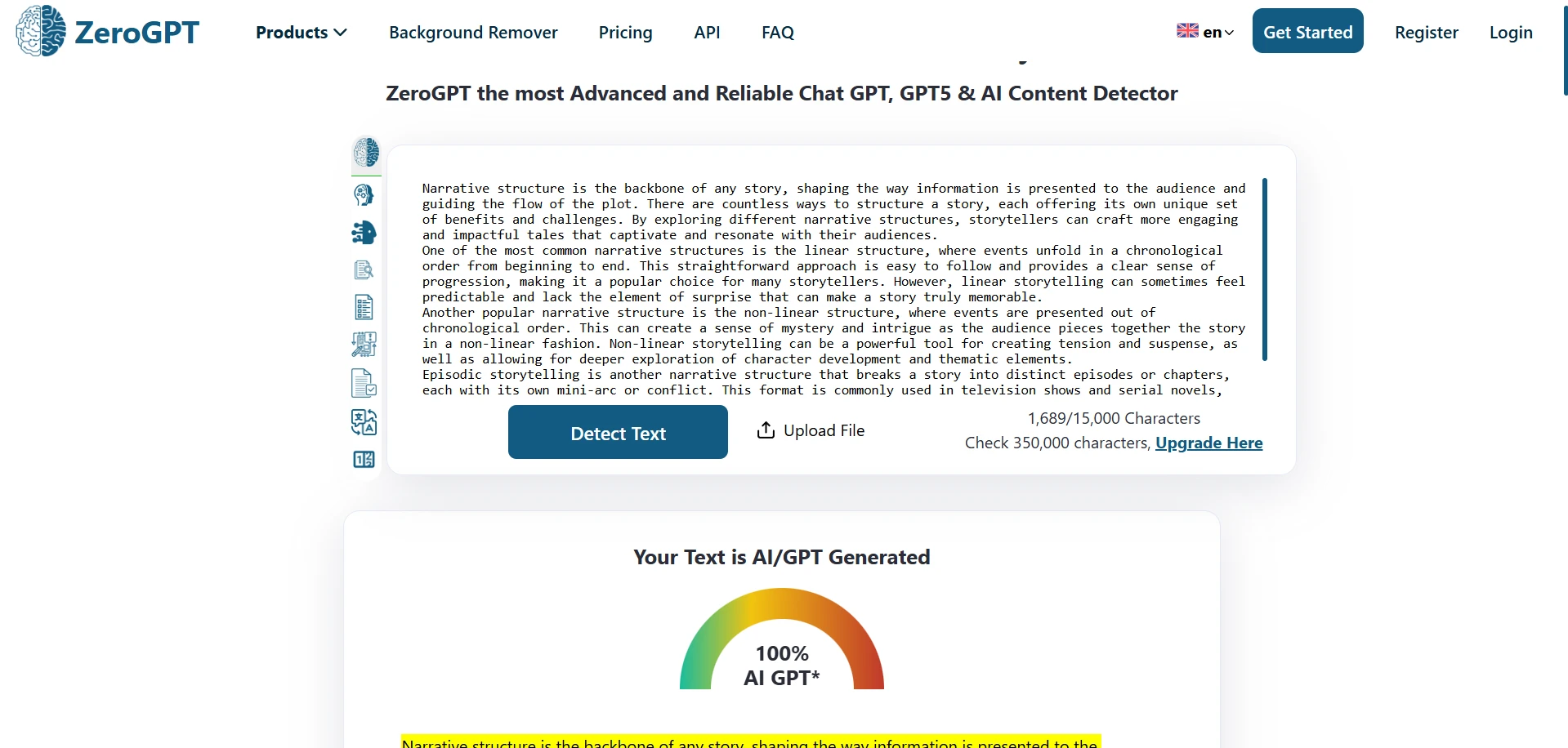

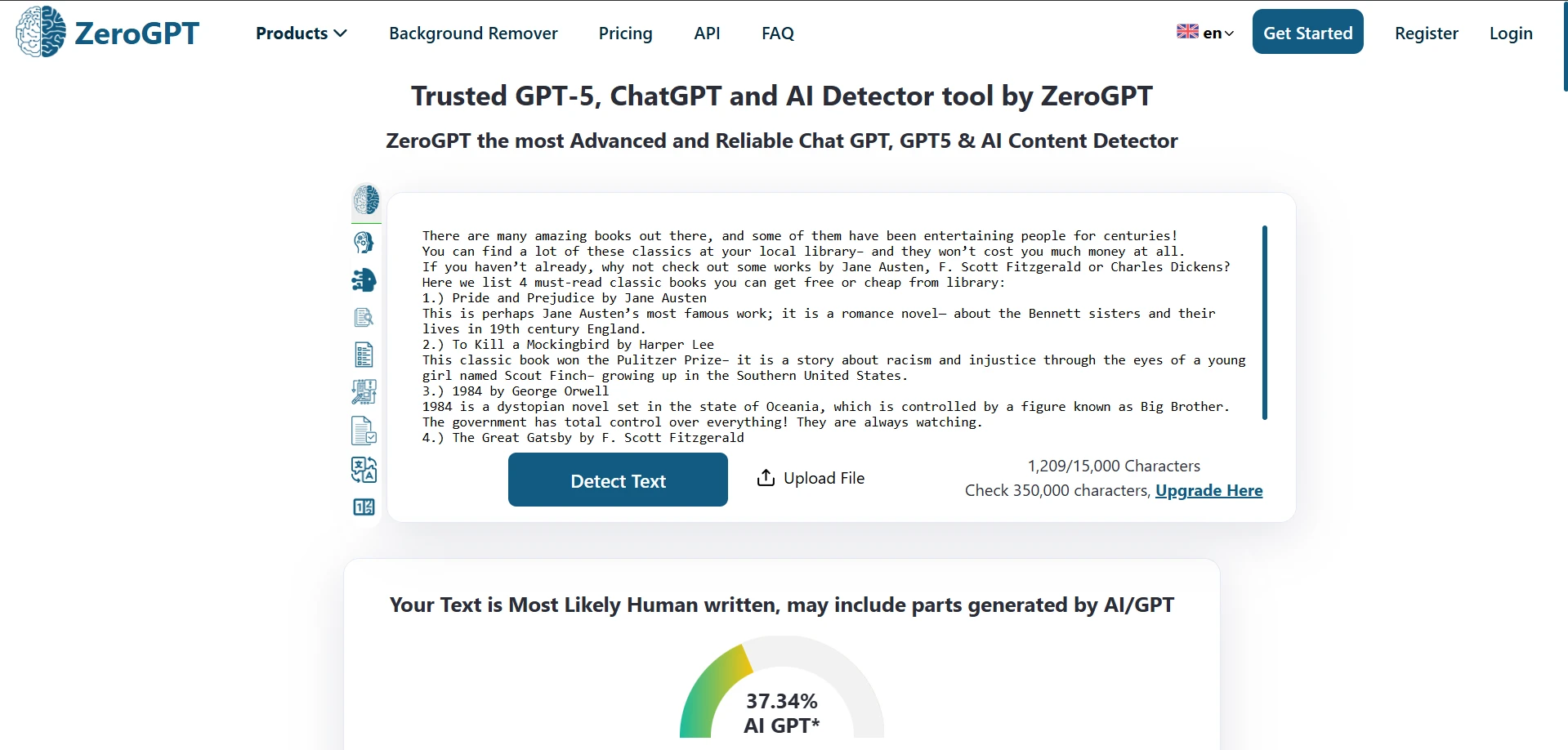

Examples from the Test Screenshots

The screenshots from the test match what the CSV suggests. Some rewrites look acceptable at first glance, but others reveal flattened structure, odd substitutions, or detector results that still lean hard toward AI. That mismatch between presentation and consistency is the central weakness of the tool.

Final Verdict

BypassGPT did not prove itself as a dependable way to bypass ZeroGPT. The average result was below 50% human, half the rewrites still scored under that line, and only a small minority reached a clearly strong human-looking score.

More importantly, the tool often paid for whatever score gains it achieved by damaging the writing itself. It stripped numbering, weakened headings, changed facts and dates, and sometimes introduced wording that sounded less natural than the original. So the real question is not just “Did the score move?” It is also “What got broken to make that happen?” In this dataset, that trade-off showed up again and again.

![[STUDY] Can BypassGPT Really Slip Past ZeroGPT? I Tested 100 Rewrites to Find Out.](/static/images/study_can_bypassgpt_really_slip_past_zerogpt_i_tested_100_rewrites_to_find_outpng.webp)