If you are a student using a rewriting tool to make AI-written text look more human, the real question is not just “Can it beat the detector?” It is also “What does it do to the writing while trying?” To test that properly, we reviewed 100 BypassGPT.ai rewrites and looked at their Copyleaks human scores. Higher scores mean the text looked more human. The result was not a simple win or loss. The scores split into two camps: some rewrites passed very strongly, while many others failed completely.

The Average Score Looks Fine. The Distribution Tells a Harder Truth.

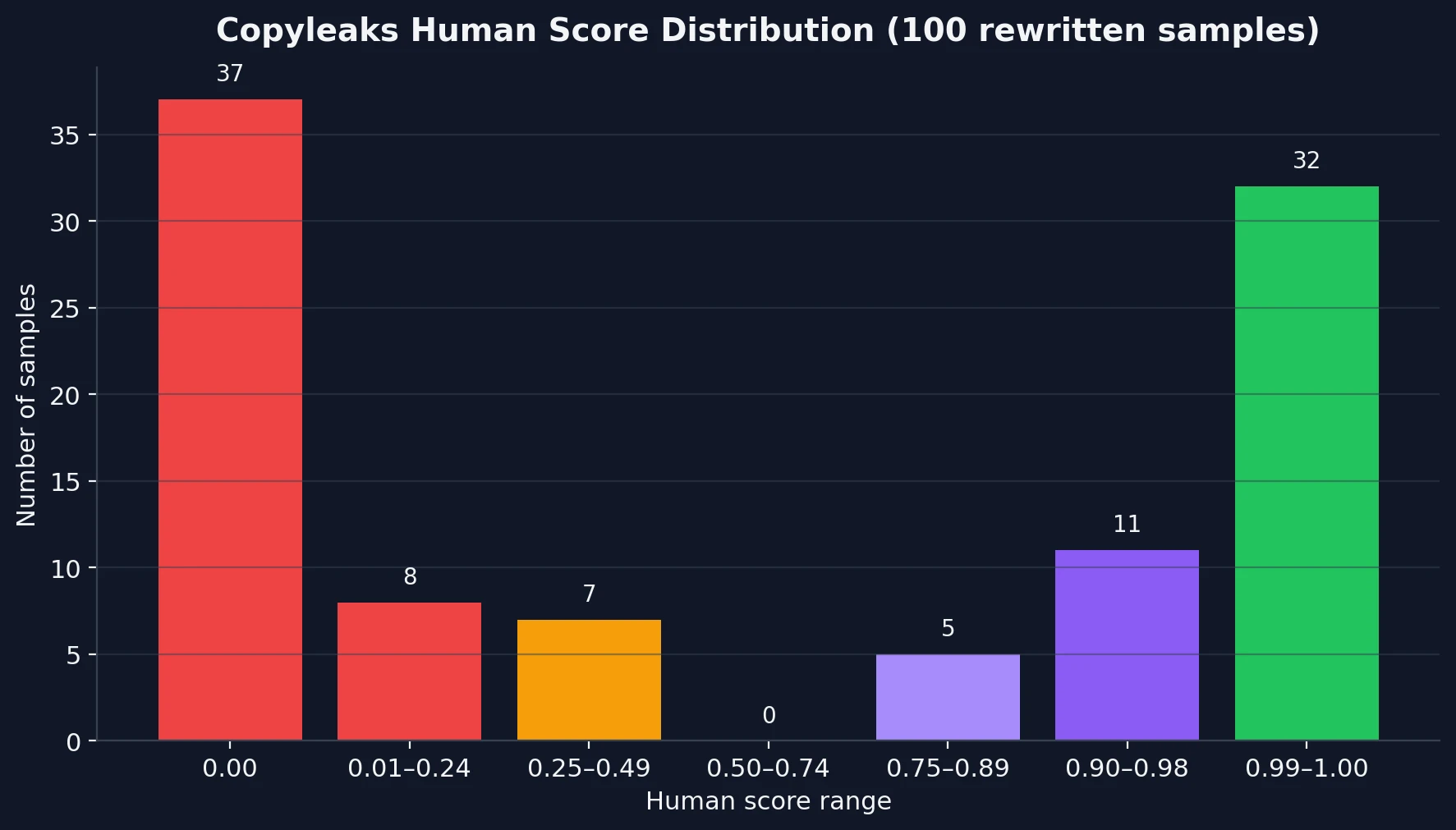

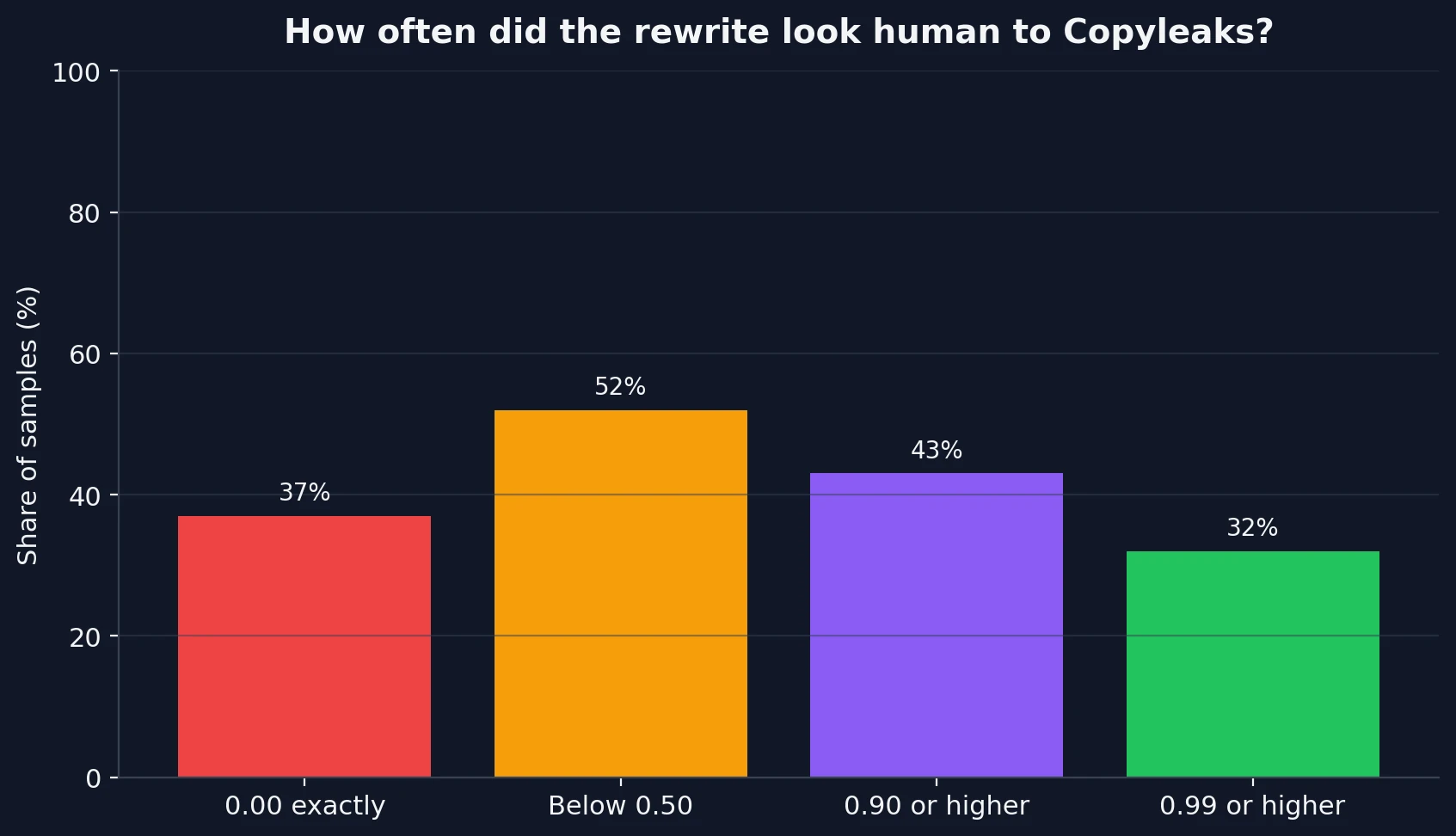

At first glance, the dataset seems balanced. The average human score was 50%. But that average hides what is really happening. The median score was just 36%, which means half of the samples scored at or below that mark. Even more important, the scores were heavily polarized.

In plain language, this was not a “mostly okay” tool. It behaved more like a coin flip with dramatic outcomes. A large share of samples looked very human to Copyleaks, but a similarly large share looked very AI-like.

What stood out most in the 100-sample test:

- 37% of samples scored exactly 0.00 human, which is a total failure against Copyleaks.

- 43% scored 0.90 or higher, so strong passes definitely happened.

- 32% scored 0.99 or higher, which shows BypassGPT.ai can sometimes produce extremely convincing rewrites.

- No samples landed in the 0.50 to 0.74 range. The outputs were usually either clearly weak or clearly strong.
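For readers who want to run the same kind of breakdown on their own results, here is a minimal sketch. It assumes human scores are stored as numbers between 0.0 and 1.0. The function name `summarize_scores` and the sample values are hypothetical and are not the study data.

```python
from statistics import mean, median

def summarize_scores(scores):
    """Summarize a list of human scores on a 0.0-1.0 scale."""
    n = len(scores)

    def share(predicate):
        # Fraction of samples for which the predicate holds.
        return sum(1 for s in scores if predicate(s)) / n

    return {
        "mean": mean(scores),
        "median": median(scores),
        "share_zero": share(lambda s: s == 0.0),
        "share_ge_090": share(lambda s: s >= 0.90),
        "share_ge_099": share(lambda s: s >= 0.99),
        "share_050_074": share(lambda s: 0.50 <= s <= 0.74),
    }

# Made-up scores for illustration only (not the 100-sample dataset).
example = [0.0, 0.0, 0.12, 0.36, 0.95, 0.99, 1.0, 0.0]
summary = summarize_scores(example)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

Looking at the mean next to the median and the threshold shares is what exposes a polarized distribution. A mean near the middle can come from scores that almost never sit in the middle.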

This matters for students because consistency matters more than occasional success. A tool that gives you one brilliant pass and one total failure on the next attempt is not dependable. If your goal is to submit writing with confidence, a highly unstable output is a problem even before a teacher or reviewer starts reading closely.

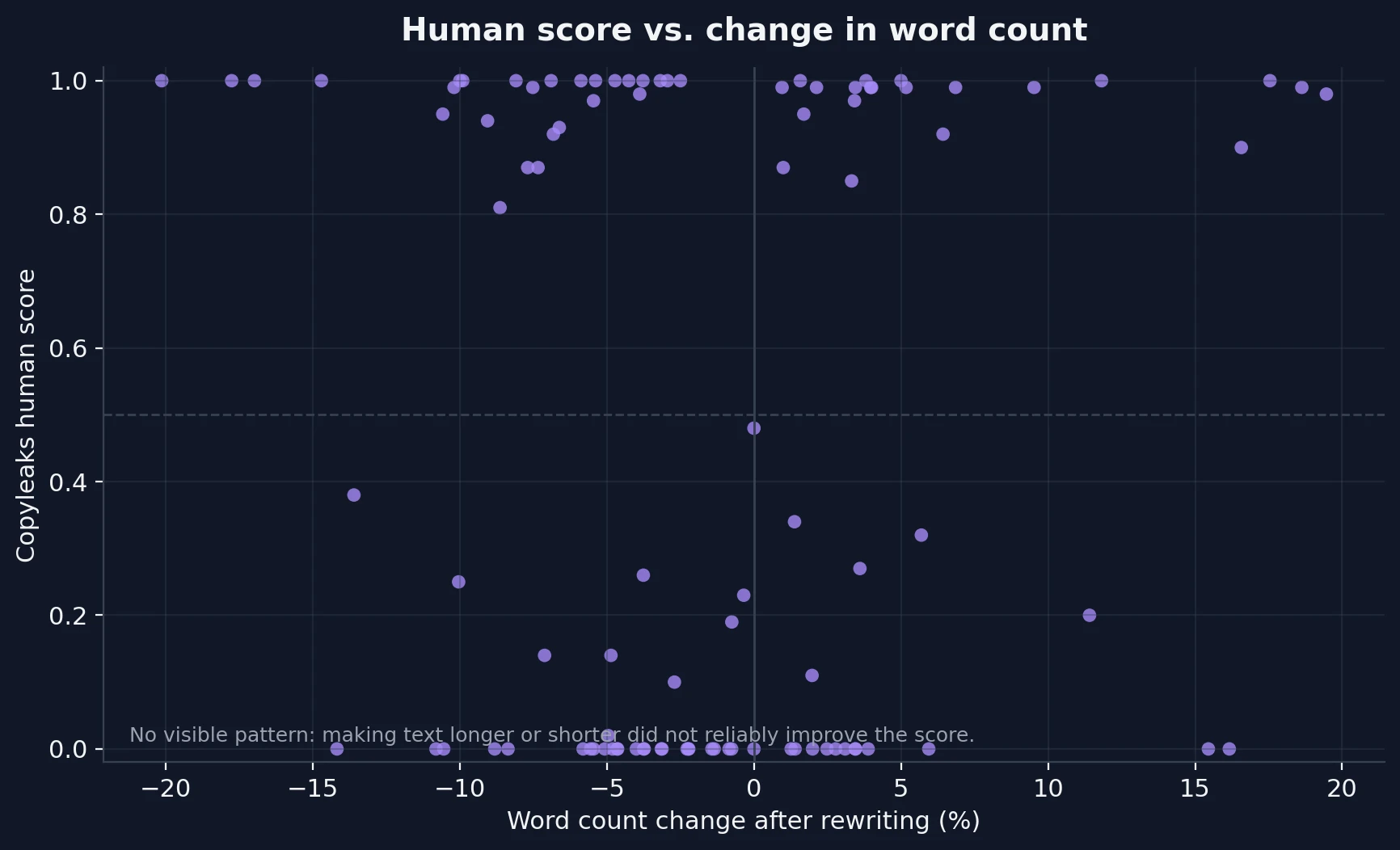

It Was Not Winning by Simply Making the Text Longer

A common assumption is that a rewrite tool can “game” a detector just by making sentences longer, adding fluff, or changing a few words. That is not what this dataset showed. The average word count fell by only about 1.5%, so the rewritten text was, on average, almost the same length as the original.

Also, there was no meaningful relationship between score and length change. Some shorter rewrites scored very high. Some longer rewrites scored zero. That suggests BypassGPT.ai was not winning because it padded the text. When it worked, it worked for other reasons. When it failed, extra wording did not rescue it.
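The length check can be sketched as follows. This is an illustration under assumptions, not the exact procedure behind the figures above: it counts words by splitting on whitespace and uses a plain Pearson correlation. The helper names `pct_length_change` and `pearson` are hypothetical.

```python
from math import sqrt

def pct_length_change(original, rewrite):
    """Percent change in whitespace-delimited word count."""
    before = len(original.split())
    after = len(rewrite.split())
    if before == 0:
        raise ValueError("original text has no words")
    return (after - before) / before * 100.0

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences.

    Assumes both sequences vary; a constant sequence would
    make the denominator zero.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Made-up pair for illustration: the rewrite drops one of four words.
change = pct_length_change("a b c d", "a b c")
print(change)
```

A correlation close to zero between the per-sample length change and the human score would be consistent with the observation that padding did not drive the results.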

The Bigger Problem: The Rewrites Often Damaged the Structure

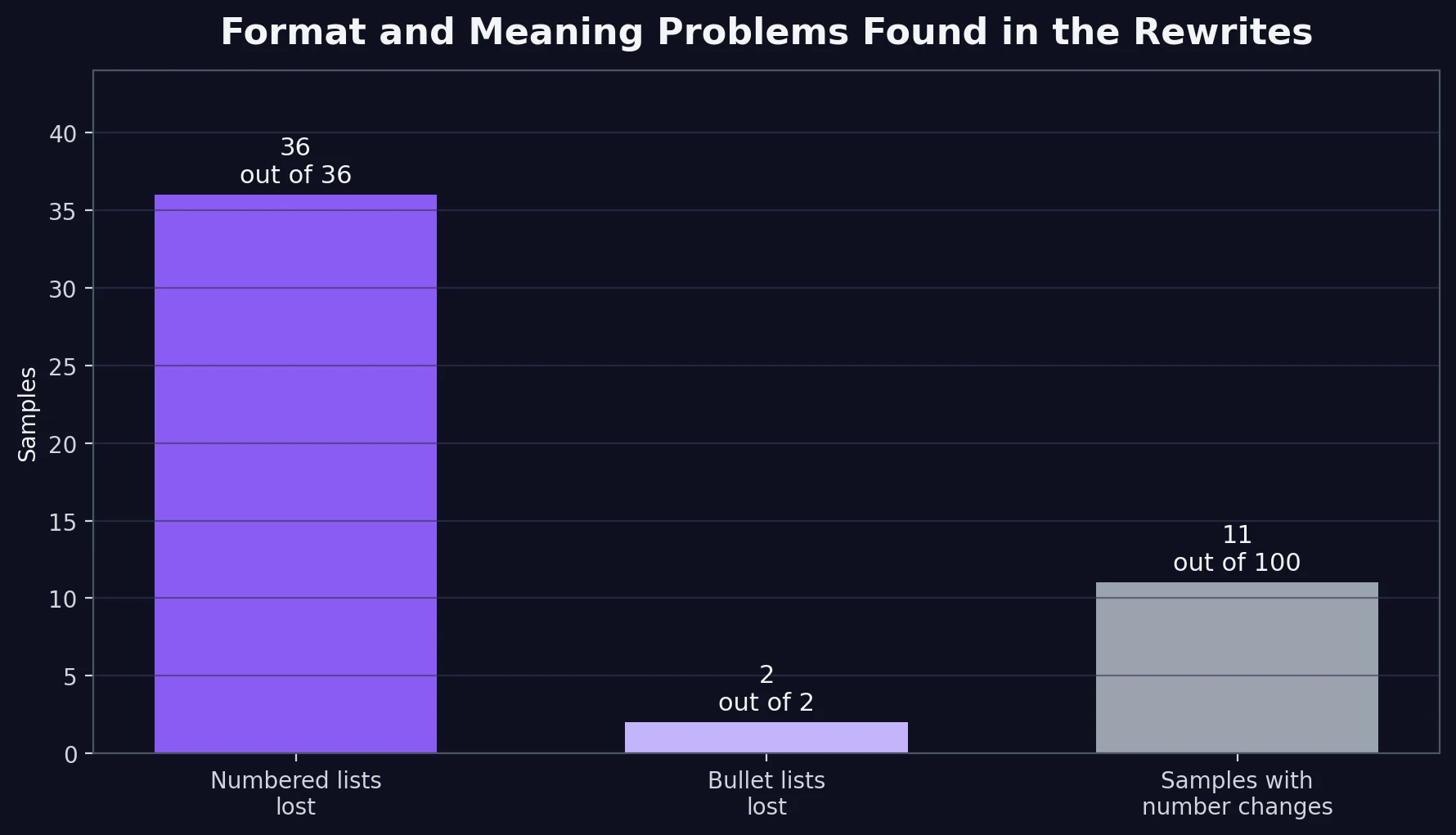

Detector score is only half the story. A student does not submit a number. A student submits readable work. And this is where the CSV revealed a second, important problem: many rewrites damaged the original structure.

The strongest pattern was list handling. In every sample that originally used bullet-style or numbered formatting, the rewrite flattened that structure into plain paragraphs or loose labels. That may help a detector in some cases, but it also makes the writing harder to scan and weaker for practical use.

Here are the most important side effects we found:

First, list formatting was wiped out. All 38 samples that began with bullet-style or list-style lines lost those markers in the rewrite. More specifically, all 36 numbered-step samples also lost their numbering. That is not a small cosmetic issue. In study guides, tutorials, recipes, explainers, and comparison posts, the structure is part of the meaning.
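A check for this kind of structure loss could look like the sketch below. The regular expressions are assumptions about what counts as a bullet or numbered line, and `lost_list_structure` is a hypothetical helper, not the rule used to produce the counts above.

```python
import re

# Assumed marker patterns: "-", "*", or "•" bullets, and "1." or "1)" steps.
BULLET_RE = re.compile(r"^\s*[-*•]\s+")
NUMBERED_RE = re.compile(r"^\s*\d+[.)]\s+")

def count_markers(text):
    """Count lines that start with a bullet or a numbered-step marker."""
    lines = text.splitlines()
    return {
        "bullets": sum(1 for line in lines if BULLET_RE.match(line)),
        "numbered": sum(1 for line in lines if NUMBERED_RE.match(line)),
    }

def lost_list_structure(original, rewrite):
    """True if the original had list markers and the rewrite has none."""
    before = count_markers(original)
    after = count_markers(rewrite)
    had_lists = before["bullets"] + before["numbered"] > 0
    kept_none = after["bullets"] + after["numbered"] == 0
    return had_lists and kept_none

# Made-up texts for illustration.
original = "1. Pre-Surgery Prep\n2. Anesthesia Administration"
rewrite = "Pre-Surgery Prep\nAnesthesia Administration"
print(lost_list_structure(original, rewrite))
```

This version only flags complete loss. A stricter variant could compare the counts before and after to catch rewrites that keep some markers and drop others.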

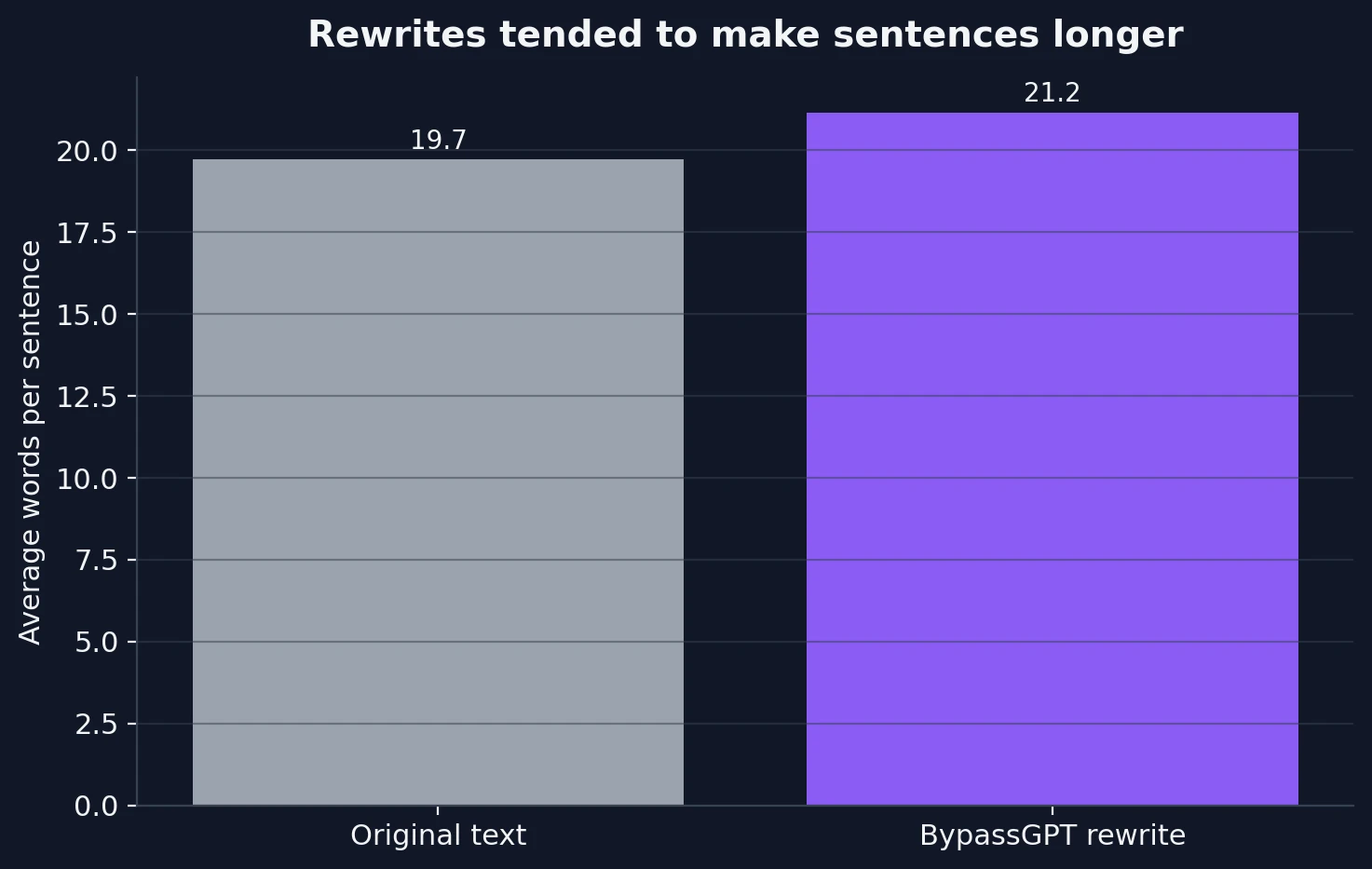

Second, sentence shape became heavier. The original texts averaged 19.7 words per sentence. The rewrites rose to 21.2 words per sentence. That is not a huge jump, but it is enough to make instructional writing feel denser, especially when step-by-step text gets merged into broader sentences.
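Sentence length is easy to approximate yourself. The sketch below assumes a simple rule: a sentence ends at a period, question mark, or exclamation mark followed by whitespace. Real text with abbreviations or decimals would need a more careful splitter, and `avg_words_per_sentence` is a hypothetical name.

```python
import re

def avg_words_per_sentence(text):
    """Average whitespace-delimited words per sentence (naive splitter)."""
    # Split after ., !, or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    return sum(len(s.split()) for s in sentences) / len(sentences)

# Made-up example: a two-step instruction merged into one sentence.
before = "Open the file. Adjust the contrast."
after = "Open the file and then adjust the contrast."
print(avg_words_per_sentence(before), avg_words_per_sentence(after))
```

Merging two short steps into one sentence raises the average, which is the same effect described above for instructional text.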

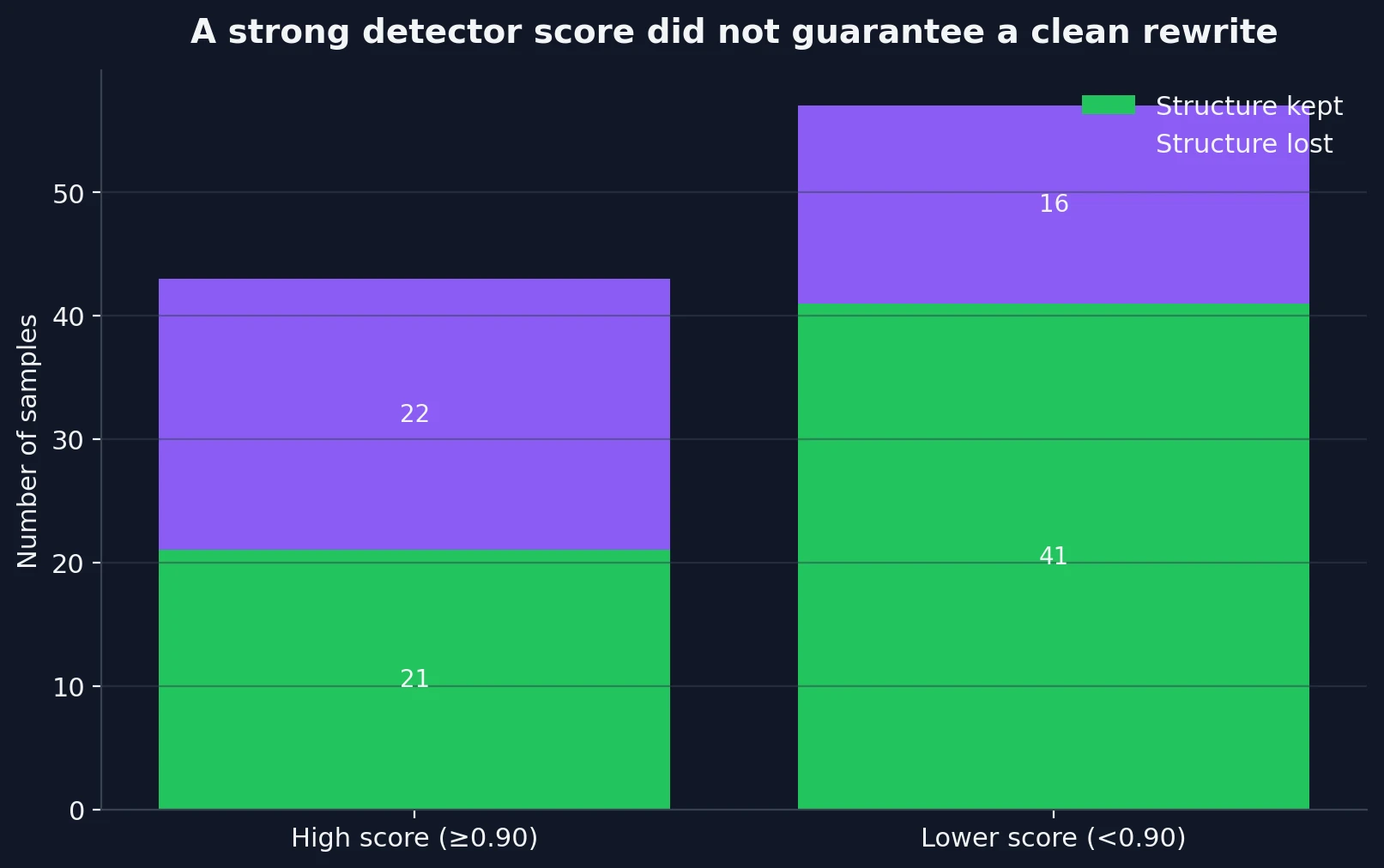

Third, a high score did not guarantee a clean rewrite. Among the 43 samples that scored 0.90 or higher, 22 (roughly half) still showed clear structure loss. In other words, Copyleaks could be impressed even when a reader would immediately notice that the article had become messier.

Examples the Score Alone Would Miss

Several rows in the CSV showed the same pattern in miniature: the detector-facing result looked better than the reader-facing result. That is why score-only testing can be misleading.

- Numbering stripped: “2. Anesthesia Administration” became “Anesthesia Administration”.

- Heading broken: “Step 2: Learn the Basics of Adjusting Images” became “Step 2Adjust the Image Settings”.

- Text corruption: one nutrition sample produced “션: Protein” as a heading.

- Injected noise: one crypto sample inserted a bracketed line: “[READ: Types of Bitcoin Mining Hardware in the Market]”.

These are not one-off annoyances. Across the full dataset, 11% of samples also changed or reshaped numbers inside the body text after we ignored simple list numbering. That raises a content accuracy risk. In addition, 3% showed obvious text artifacts such as strange characters. Those rates are not huge, but they are high enough to matter when the final output is supposed to be submission-ready.
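Both checks can be approximated with a few lines of code. The sketch below rests on stated assumptions: numbers are compared as text after leading list numbering is stripped, and "strange characters" is narrowed to Hangul syllables or the Unicode replacement character appearing in English text. All names are hypothetical, and this is not the exact rule behind the 11% and 3% figures.

```python
import re

NUMBER_RE = re.compile(r"\d+(?:[.,]\d+)*")
# Leading list numbering such as "1. " or "2) " at the start of a line.
LIST_PREFIX_RE = re.compile(r"^\s*\d+[.)]\s+", re.MULTILINE)

def body_numbers(text):
    """Sorted numbers found in the text, ignoring leading list numbering."""
    without_prefixes = LIST_PREFIX_RE.sub("", text)
    return sorted(NUMBER_RE.findall(without_prefixes))

def numbers_changed(original, rewrite):
    """True if the set of body numbers differs between the two texts."""
    return body_numbers(original) != body_numbers(rewrite)

def has_script_artifact(text):
    """Assumed heuristic: Hangul syllables or U+FFFD in English text."""
    return any("\uac00" <= ch <= "\ud7a3" or ch == "\ufffd" for ch in text)

# Made-up examples for illustration.
print(numbers_changed("1. Take 20 g of protein", "Take 20 g of protein"))
print(numbers_changed("Take 20 g of protein", "Take 25 g of protein"))
print(has_script_artifact("션: Protein"))
```

A flagged sample still needs a human look, since a changed number can be a harmless reformatting (for example, "1,000" written as "1000") rather than a factual error.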

So, How Effective Is BypassGPT.ai Against Copyleaks?

The honest answer is this: BypassGPT.ai can bypass Copyleaks, but not reliably enough to call it dependable.

If you only want proof that the tool sometimes works, the dataset gives you that. A meaningful chunk of the rewrites reached very high human scores, and some hit near-perfect territory. But if you care about predictable performance, the result is much weaker. Too many outputs fell straight to zero, and too many “successful” rewrites came with formatting damage, awkward heading changes, or small quality defects.

The Final Take

For students, this test points to one clear lesson: a bypass score is not the same as a good piece of writing. In this 100-sample review, BypassGPT.ai showed flashes of real strength against Copyleaks, but it also showed instability and a habit of breaking the structure of the original text.

If your only goal is to push the detector score upward, BypassGPT.ai sometimes succeeds. If your goal is to submit writing that is both believable and cleanly organized, the results are much harder to trust. The safest reading of this dataset is that BypassGPT.ai is promising but inconsistent: strong enough to surprise Copyleaks on some samples, but too unreliable to treat as a set-and-forget solution.

![[STUDY] Can BypassGPT.ai Really Bypass Copyleaks?](/static/images/bypassgpt_vs_copyleaks_featured_imagepng.webp)