Most people try to fix AI text after it is written. That is the mistake. If you want cleaner, more natural, more detector-resistant content, the real work starts before the first draft is generated.

AI writing has changed, but AI detection has changed right alongside it. Publishers, schools, SEO teams, and content platforms now use detectors that look for machine-like patterns in your text. That does not mean these detectors are always fair. In fact, many writers have seen completely human drafts get flagged. Still, if you are using AI as part of your writing process, you cannot ignore how these systems work.

The usual beginner workflow is simple: generate an article, paste it into a humanizer, and hope the score improves. Sometimes it does. Often it does not. Worse, the final draft may sound awkward, over-spun, or strangely empty. The reason is obvious once you think about it: a rewriting tool can only work with the raw material you give it.

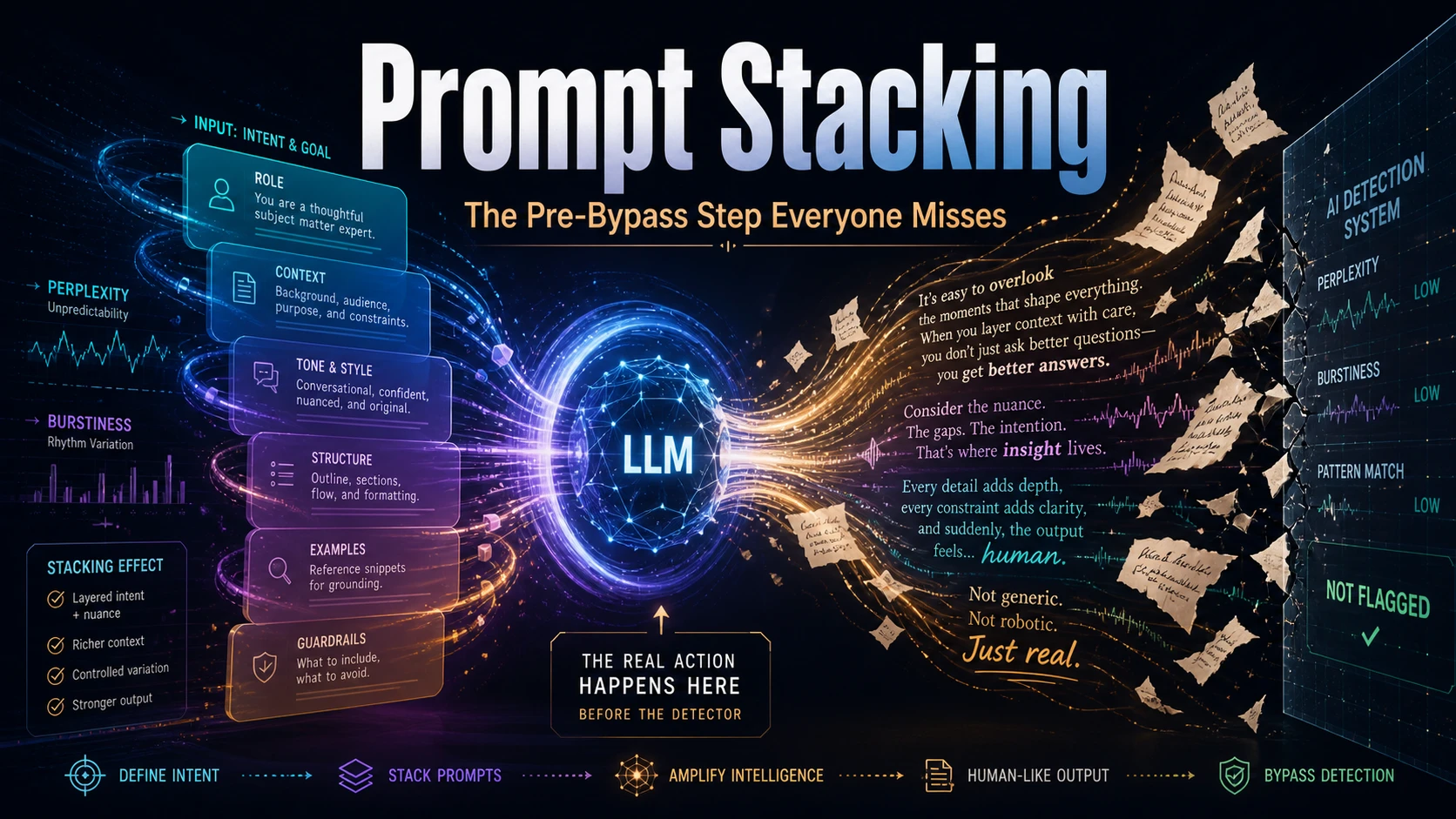

That is where prompt stacking comes in. Instead of treating humanization as a cleanup step, you build a stronger base draft from the start. You stack instructions for voice, rhythm, sentence shape, word choice, formatting, and reader intent before the model writes anything. Then, if you use a tool like the Deceptioner AI Humanizer, it is refining an already decent draft instead of trying to rescue a robotic one.

Why raw AI text gets flagged

AI detectors usually do not “read” like a person. They scan for statistical fingerprints. Two common ideas you will hear are perplexity and burstiness. Perplexity is about predictability. If every sentence chooses the most obvious next word, the text starts looking machine-made. Burstiness is about variation. Human writers naturally mix short sentences, long clauses, fragments, tangents, and uneven pacing. AI models often produce a smoother rhythm.
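You can approximate burstiness yourself. The sketch below uses one informal proxy, the coefficient of variation of sentence length (standard deviation divided by mean). It is an illustrative assumption, not how GPTZero or any other detector actually scores text. Perplexity requires a language model to compute, so it is left out here. The naive splitter treats ".", "!" or "?" followed by whitespace as a sentence boundary, so abbreviations like "e.g." will throw it off.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace and count the words in each sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (stdev / mean).

    0.0 means every sentence has the same length; higher values mean more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


flat = "The tool is useful for writers. The tool is simple for beginners. The tool is fast for teams."
varied = "The tool is useful. Really. It handles long drafts, odd formatting, and messy notes without complaint."
print(round(burstiness(flat), 2))    # 0.0, every sentence is six words long
print(round(burstiness(varied), 2))
```

A higher number does not prove a draft is human. It only tells you the rhythm is less uniform.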

Raw AI output also tends to repeat the same comfortable vocabulary. You have seen the usual suspects: “delve,” “tapestry,” “pivotal,” “robust,” “furthermore,” “moreover,” “it is worth noting,” and “in conclusion.” These words are not evil. The problem is frequency. When a draft leans on them paragraph after paragraph, it starts sounding like a model trying to be polished.
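Because the problem is frequency rather than any single word, it helps to measure how often these phrases actually show up. A minimal sketch, assuming a hand-picked phrase list (the one below simply reuses the examples above) and whole-word matching, so inflected forms like "delves" are not counted:

```python
import re

# Illustrative list reused from the examples in this article; extend it for your own drafts.
STOCK_PHRASES = ("delve", "tapestry", "pivotal", "robust", "furthermore",
                 "moreover", "it is worth noting", "in conclusion")


def phrase_density(text: str, phrases=STOCK_PHRASES, per: int = 1000) -> dict[str, float]:
    """Return occurrences per `per` words for each phrase that appears at least once."""
    lowered = text.lower()
    word_count = len(re.findall(r"\b\w+\b", lowered)) or 1  # avoid dividing by zero
    density = {}
    for phrase in phrases:
        # Word boundaries on both sides, so "robust" does not match inside "robustness".
        hits = len(re.findall(rf"\b{re.escape(phrase)}\b", lowered))
        if hits:
            density[phrase] = hits * per / word_count
    return density


print(phrase_density("Moreover, the results are robust. Furthermore, we delve deeper."))
```

There is no standard threshold here. The useful signal is a phrase that keeps climbing as the draft gets longer.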

There is also the tone issue. Many language models are trained to be balanced, safe, and neutral. That creates hedging. The draft keeps saying things “may,” “might,” or “could” happen. It avoids strong opinions. It refuses to sound annoyed, excited, skeptical, or personally invested. That smooth neutrality can be useful for some tasks, but it makes blog writing feel lifeless.

The flaw in one-step humanization

A single-step humanizer workflow looks efficient, but it has a major weakness: it starts too late. If the first draft is generic, the humanizer has to solve three problems at once. It has to improve the rhythm, change predictable wording, and preserve the meaning. Push it too gently and the text still looks AI-written. Push it too hard and the text loses clarity.

This is why some rewritten drafts feel like they were attacked with a thesaurus. The sentences become less predictable, but not necessarily better. A phrase that made sense gets replaced with something technically similar but contextually wrong. The detector score may move, yet the reader feels the damage immediately.

A better workflow separates the jobs. The prompt stack handles quality. The humanizer handles refinement. If you want to compare detector behavior across models, the Deceptioner blog already has useful tests such as whether GPTZero can detect DeepSeek and practical guides on testing AI detector reliability. The pattern is clear: raw outputs are easier to identify, while carefully shaped drafts are harder to classify confidently.

What prompt stacking actually means

Prompt stacking is not just writing a longer prompt. It is the practice of layering instructions so the model has a clear writing identity and a clear set of constraints. Each layer controls a different part of the output.

1. Start with persona, not topic

Most people begin with “write an article about X.” That gives the model a subject, but not a voice. A stronger prompt starts by defining who is speaking. Are you a blunt technical editor? A skeptical SEO strategist? A teacher explaining the topic to tired beginners? A founder talking to other founders?

This matters because persona changes token choice. A cautious assistant writes one way. A battle-tested operator writes another way. If you tell the model to sound like a confident practitioner with strong opinions and a direct relationship with the reader, it will usually reduce weak hedging and produce more decisive prose.

2. Force sentence variation

AI loves balance. Humans do not always write that way. So do not simply ask for “natural writing.” Be specific. Tell the model to mix short sentences with longer, more detailed ones. Ask it to avoid three sentences of similar length in a row. Tell it to include occasional fragments where they feel natural. Make the rhythm uneven on purpose.

That does not mean making the article messy. It means giving the draft breath. A paragraph can have one long explanatory sentence, then a quick punch. Like this. That kind of pacing feels more human because it mirrors how people actually explain ideas.
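The "no three similar sentences in a row" rule is also easy to check after generation. This hypothetical helper flags every run of three consecutive sentences whose word counts sit within a few words of each other. The 3-word tolerance is an arbitrary starting point, not a standard, and the sentence splitter is the same naive one that breaks on abbreviations.

```python
import re


def similar_length_runs(text: str, tolerance: int = 3, run: int = 3) -> list[int]:
    """Return the starting sentence index of every window of `run` consecutive
    sentences whose word counts are all within `tolerance` words of each other."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    starts = []
    for i in range(len(lengths) - run + 1):
        window = lengths[i:i + run]
        if max(window) - min(window) <= tolerance:
            starts.append(i)
    return starts


draft = "The tool is useful for writers. The tool is simple for beginners. The tool is fast for teams."
print(similar_length_runs(draft))  # [0]: sentences 0-2 are all six words
```

An empty list means no flat stretch was found at that tolerance. A non-empty one points you at the paragraph to rewrite.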

3. Use a strict blocklist

Positive instructions help, but negative instructions are often more powerful. Add a blocklist of words and phrases you do not want. Ban the obvious AI transitions. Limit em dashes if your drafts overuse them. Ask the model to avoid generic summaries at the end of every section.

This forces the model to find different routes through the topic. Instead of falling back on “furthermore” and “in conclusion,” it has to connect ideas more naturally. That alone can improve the feel of the draft.
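Models do not always honor negative instructions, so it is worth checking the draft against the same list you put in the prompt. A minimal sketch, assuming a plain Python list of banned terms; the helper names are hypothetical, and the em dash counter exists only to show whether a "limit em dashes" rule held.

```python
import re

# Banned terms taken from the examples in this article; adjust per project.
BLOCKLIST = ["delve", "tapestry", "pivotal", "furthermore", "moreover", "in conclusion"]


def blocklist_instruction(banned: list[str]) -> str:
    """Turn the banned terms into one negative instruction for the prompt stack."""
    quoted = ", ".join(f'"{term}"' for term in banned)
    return f"Do not use any of these words or phrases: {quoted}."


def blocklist_violations(draft: str, banned: list[str]) -> list[str]:
    """Return banned terms that still appear in the draft (case-insensitive, whole words)."""
    lowered = draft.lower()
    return [t for t in banned if re.search(rf"\b{re.escape(t.lower())}\b", lowered)]


def em_dash_count(draft: str) -> int:
    """Count em dashes (U+2014) in the draft."""
    return draft.count("\u2014")


print(blocklist_instruction(BLOCKLIST))
print(blocklist_violations("Moreover, this step is pivotal.", BLOCKLIST))  # ['pivotal', 'moreover']
```

Using one list for both the prompt and the check keeps the two from drifting apart.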

4. Define formatting rules

Machine text often looks too symmetrical. Similar paragraph lengths. Similar bullet points. Similar section endings. Give the model rules that break that sameness. Ask for asymmetrical paragraphs, fewer bullets, more direct transitions, and no repetitive recap paragraphs unless they genuinely help the reader.
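Paragraph symmetry is the easiest of these formatting problems to spot mechanically. The hypothetical check below compares the longest paragraph to the shortest; the 2.0 ratio is an assumption chosen for illustration, and paragraphs are assumed to be separated by blank lines.

```python
def paragraph_lengths(text: str) -> list[int]:
    """Word count of each paragraph, treating blank lines as paragraph breaks."""
    return [len(p.split()) for p in text.split("\n\n") if p.strip()]


def too_symmetrical(text: str, min_ratio: float = 2.0) -> bool:
    """Flag a page whose longest paragraph is less than `min_ratio` times its shortest.

    Needs at least three paragraphs before it says anything.
    """
    lengths = paragraph_lengths(text)
    if len(lengths) < 3:
        return False
    return max(lengths) / min(lengths) < min_ratio


even = "\n\n".join(["one two three four five"] * 3)
print(too_symmetrical(even))  # True: every paragraph is five words
```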

The simple version: persona gives the draft a voice, rhythm rules give it movement, blocklists remove obvious AI fingerprints, and formatting constraints stop the page from looking machine-built.

The prompt repetition trick

One advanced trick is prompt repetition. The basic idea is simple: for difficult instructions, repeat the full instruction block before asking for the final output. Recent coverage of the technique reported that repeating prompts can improve model accuracy on certain non-reasoning tasks. The theory is that the model has more context to lock onto before it generates the answer.

For writing, this can help when your prompt contains many constraints: persona, banned phrases, sentence rules, audience details, and formatting instructions. Long articles are especially prone to drift. The model may follow your style rules in the first few paragraphs, then slowly slide back into its default voice. Repetition can reduce that drift.

Do not overcomplicate it. Write your prompt stack once. Repeat the key constraints below it. Then give the writing task. The point is not magic. The point is reinforcement.
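In code, the pattern is just string assembly. This sketch follows the order described above: the full stack, then the key constraints repeated, then the task. The function name and the "Reminder" wording are illustrative, not part of any published method.

```python
def repeated_prompt(stack: str, key_constraints: list[str], task: str) -> str:
    """Write the stack once, repeat the key constraints below it, then give the task."""
    reminder = "\n".join(f"- {c}" for c in key_constraints)
    return f"{stack}\n\nReminder of the key constraints:\n{reminder}\n\nTask: {task}"


stack = "Persona: a blunt technical editor.\nNever use 'delve'.\nVary sentence length."
print(repeated_prompt(stack, ["Never use 'delve'.", "Vary sentence length."],
                      "Write 800 words on prompt stacking."))
```

Repeat only the constraints that tend to drift. Repeating everything makes the prompt longer without adding much.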

A practical three-step workflow

Here is the workflow worth taking away:

- Step one: generate a pre-humanized draft using a detailed prompt stack.

- Step two: run that stronger draft through a focused rewriting or humanizing tool.

- Step three: manually review the final version for meaning, flow, claims, and grammar.

That last step is not optional. AI detectors are imperfect, and humanizers are not magic shields. Even the best rewriting workflow can introduce odd phrasing or soften an important claim. Always read the final draft like an editor. Ask whether the argument still makes sense. Check names, dates, statistics, and product claims. Make sure the piece is still useful to a real person.
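Part of that review can be narrowed down mechanically. The hypothetical helper below pulls out every sentence that contains a digit, since dates, statistics, and prices are easy to distort during rewriting. It will not catch names or unnumbered product claims, so it shortens the manual pass rather than replacing it.

```python
import re


def sentences_to_verify(text: str) -> list[str]:
    """Return sentences containing a digit (dates, statistics, prices, versions)."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if re.search(r"\d", s)]


draft = "The tool launched in 2023. It is fast. Accuracy rose 12% last year."
for sentence in sentences_to_verify(draft):
    print("CHECK:", sentence)
```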

If you are working at scale, the same logic applies. Build reusable prompt stacks for different content types: tutorials, product comparisons, opinion pieces, SEO articles, and technical guides. Then pair them with a repeatable refinement process. Deceptioner’s undetectable AI content generator can fit into that kind of workflow, especially when you want generation and rewriting to work closer together. For broader tool comparisons, you can also look at Deceptioner’s list of affordable AI humanizers.

Prompt stack example structure

You do not need to copy one exact prompt forever. Build a template you can adapt. A strong stack usually includes these parts:

| Layer | What it controls | Example instruction |
|---|---|---|
| Persona | Voice, confidence, reader relationship | Write like a blunt operator explaining this to smart readers who hate fluff. |
| Rhythm | Sentence length and pacing | Mix short punchy lines with longer explanatory sentences. Avoid uniform rhythm. |
| Lexical rules | Word choice and AI clichés | Do not use “delve,” “tapestry,” “pivotal,” “moreover,” or “in conclusion.” |
| Formatting | Page shape and visual repetition | Use varied paragraph lengths. Avoid perfectly balanced lists unless needed. |
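Keeping the layers as data makes the template easy to reuse: swap one entry per content type and leave the rest alone. A minimal sketch that assembles the table's example instructions into a single prompt, with the topic last so the model reads voice and constraints first. The dictionary layout and the "Topic:" label are assumptions, not a required format.

```python
# Layer names and example instructions copied from the table above; swap in your own.
LAYERS = {
    "Persona": "Write like a blunt operator explaining this to smart readers who hate fluff.",
    "Rhythm": "Mix short punchy lines with longer explanatory sentences. Avoid uniform rhythm.",
    "Lexical rules": 'Do not use "delve," "tapestry," "pivotal," "moreover," or "in conclusion."',
    "Formatting": "Use varied paragraph lengths. Avoid perfectly balanced lists unless needed.",
}


def build_stack(layers: dict[str, str], topic: str) -> str:
    """Put every layer before the topic so voice and constraints come first."""
    lines = [f"{name}: {instruction}" for name, instruction in layers.items()]
    lines.append(f"Topic: {topic}")
    return "\n".join(lines)


print(build_stack(LAYERS, "why prompt stacking beats one-step humanization"))
```

For a tutorial you might replace only the Persona entry with a patient teacher; the rhythm, lexical, and formatting layers can usually stay the same.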

Once you generate the draft, judge it before rewriting it. If it is bland, do not send it straight to a humanizer. Fix the prompt stack first. A weak base draft creates weak final content.

The bottom line

Prompt stacking is the missing pre-bypass step because it improves the draft before detection avoidance even enters the picture. It gives the writing a voice, breaks the mechanical rhythm, removes overused AI phrases, and creates a more believable structure. Then a humanizer can do what it is best at: refine the text without carrying the entire burden of quality.

The goal is not to trick readers with bad content. The goal is to stop useful AI-assisted writing from sounding generic, flat, and overly machine-shaped. Start with a better prompt stack. Create a stronger base draft. Then refine carefully. That is the workflow that actually holds up.