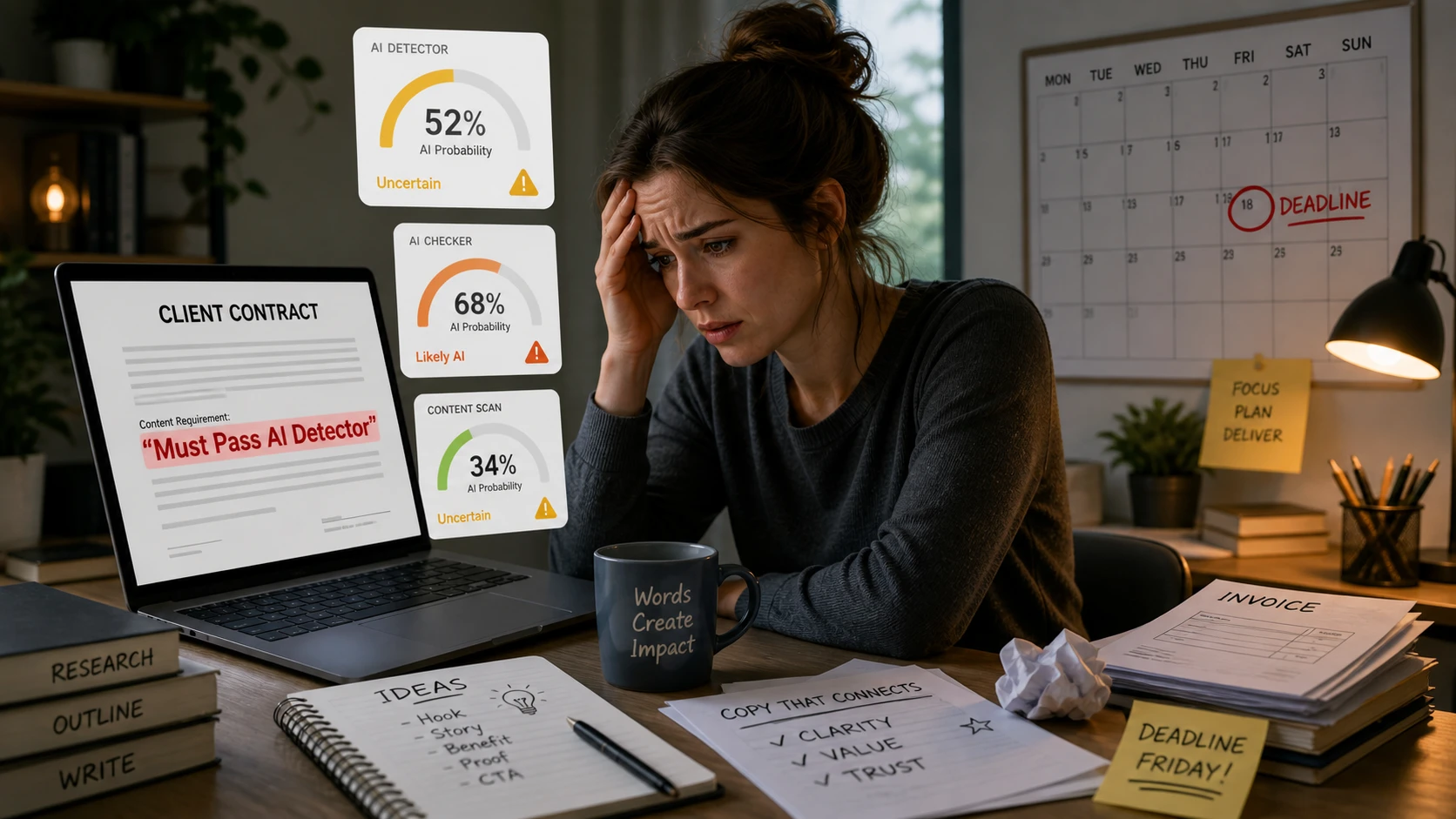

Freelance writers are learning a brutal lesson: a client can believe the work is good, useful, original, and written by a real person, then still refuse payment because a detector says the draft “looks AI.” That is why contract wording now matters as much as writing quality.

The dangerous phrase is not “no AI used.” The dangerous phrase is “must pass AI detector.” Those two clauses sound similar, but they put the risk in completely different places. A “no AI used” clause is about what the writer did. A “must pass AI detector” clause is about what an outside tool guesses after the work is finished.

That difference can decide whether a writer gets paid. In freelance communities, writers are already discussing clients who accuse them of using AI because a scanner produced a bad result. In one r/freelanceWriters discussion, a writer described the growing problem of false or incorrect AI writing detection. In another, freelancers debated why they would avoid contract terms that require work to pass AI detection as a delivery condition.

The problem is simple: AI detectors do not know who wrote a document. They estimate patterns. They look at predictability, phrasing, sentence rhythm, structure, and similarity to known machine-generated text. That can be useful as a signal, but it is not the same as proof. OpenAI itself discontinued its public AI-text classifier because of a low rate of accuracy, and its own documentation warned that human-written text could be incorrectly labeled as AI-written.

For freelancers, that means a “must pass AI detector” clause is not a quality standard. It is an income risk. It lets a client outsource payment decisions to a tool that can be wrong.

Why “No AI Used” Is Not the Same as “Must Pass AI Detector”

A “no AI used” clause says the writer promises not to use AI to draft, rewrite, or generate the content, depending on how the contract defines AI use. If a dispute happens, the question is about process: Did the writer actually use AI? The writer can respond with drafts, version history, outlines, notes, research logs, browser history, interview notes, or screen recordings.

A “must pass AI detector” clause changes the question. It no longer asks whether the writer used AI. It asks whether a tool approves the finished text. That means a 100% human writer can still fail the contract if the client’s detector flags the work.

This is the trap. A writer can be honest, careful, and fully human, but the clause does not care about honesty. It cares about a score.

That is why “no AI used” is a behavior promise, while “must pass AI detector” is an outcome warranty. The first is something a writer can control. The second depends on software the writer may not control, a threshold the client may not define, and a model that may change without notice.

The practical rule: never accept a clause that makes payment depend only on an AI-detector score. If a client insists on AI policy language, make the clause about your writing process, disclosure, documentation, and reasonable human review.

AI Detectors Are Probability Tools, Not Proof Machines

Detector companies often describe their tools as accurate, but even their own support pages usually include warnings about interpretation. GPTZero’s FAQ says its results should not be used to punish students and recommends treating its writing reports as one part of a holistic assessment of student work. Turnitin’s guide to its AI Writing Report says false positives are possible and notes that lower score ranges can be less reliable. Copyleaks’ AI content detector guidance says AI-detection data should be used to investigate further and that false positives are a known risk, especially for some writers.

Sapling is even more direct. Its detector page includes a section explaining why your own writing may be labeled AI-generated: false positives can happen, especially when text is shorter, more general, or more essay-like.

This matters because freelance content often looks exactly like the type of writing detectors struggle with. SEO articles are structured. B2B copy is polished. Technical content is precise. Client briefs often force writers into predictable headings, repeated keywords, simple sentences, and tidy explanations. Those qualities help readers, but they can also make the text look statistically “machine-like.”

In other words, the better a writer is at meeting a corporate brief, the more likely the finished article is to resemble the clean, predictable writing detectors were trained to suspect.

The Bible and the U.S. Constitution Problem

One reason writers are angry is that false positives are not just theoretical. Public tests have shown detectors flagging famous human texts that obviously predate ChatGPT. Ars Technica reported that GPTZero identified part of the U.S. Constitution as likely AI-written. Other reports have shown classic and religious texts, including Bible passages, receiving high AI scores, which is why writers keep citing the Bible as an example of human text treated as AI-generated content.

The point is not that every detector always fails. The point is that a detector can mistake structured, formal, predictable, or widely repeated human language for AI. The Constitution is formal. Bible passages are repetitive and highly patterned. Legal writing is formulaic. Technical writing is controlled. SEO writing follows templates. Freelance deliverables often share those same traits.

If a tool can be fooled by famous human writing, a client should not use that tool as the final judge of a freelancer’s invoice.

Why This Is So Dangerous for Freelancers

Employees usually have internal review processes, managers, HR policies, and appeal routes. Freelancers often do not. A freelancer may work for days, submit a polished piece, and then face one sentence from the client: “This failed our AI checker, so we cannot accept it.”

That sentence creates three problems at once. First, it attacks the writer’s integrity. Second, it delays payment. Third, it puts the writer in the impossible position of proving a negative. There is no perfect way to prove that AI was not used, especially if the client has already decided that the detector is more trustworthy than the writer.

Freelance writers have described this exact frustration in discussions about dealing with AI detectors, where technical, B2B, and highly structured work is repeatedly mentioned as vulnerable to false positives. Writers have also reported running older pre-AI work through detectors and watching it come back flagged, which shows how weak a detector score can be as evidence of AI use.

For a client, the detector score may feel like a clean, objective number. For the writer, that number can mean unpaid labor, lost retainers, damaged reputation, and hours spent rewriting work that was already human.

The Hidden Shift: From “Did You Use AI?” to “Can I Avoid Paying?”

Most clients are not trying to scam writers. Many are worried about SEO, copyright, brand voice, originality, or client compliance. Those concerns are understandable. The issue is that vague detector clauses can be abused, even unintentionally.

When the contract says “must pass AI detector,” the client does not have to prove misconduct. They only have to show a score. That creates a payment escape hatch. The client can reject the work without proving the writer used AI, without explaining the detector’s accuracy, and without allowing a meaningful appeal.

That is why writers should treat this language like a warranty against software error. If the client wants the writer to guarantee the behavior of a third-party detector, the writer is being asked to carry a risk they did not create.

What a Safer AI Clause Should Say Instead

A safer contract focuses on conduct, disclosure, and review. It can still protect the client, but it should not make a detector score the sole condition of acceptance.

For example, a safer clause can say the writer will not use generative AI to draft the final deliverable unless the client gives written permission. It can say whether grammar tools, spell checkers, plagiarism checkers, research tools, or formatting tools are allowed or excluded. It can require the writer to disclose any AI-assisted drafting if that is part of the workflow. It can also allow the client to raise concerns, but those concerns should trigger a human review, not automatic rejection.

Better clause: “The writer represents that the final deliverable will be written by the writer and will not be generated by a generative AI tool unless expressly agreed in writing. AI-detection results may be used only as one review signal and may not be the sole basis for rejection, non-payment, or termination. If a detector raises a concern, the writer will have a reasonable opportunity to provide drafting evidence or revise flagged sections.”

If the client still demands detector compliance, the contract should define the detector, version, threshold, testing method, retesting process, and revision rights. It should also say payment cannot be withheld if the writer provides reasonable evidence of authorship and completes a reasonable revision.

If a detector must be named: “Client must identify the AI detector, score threshold, and testing process before work begins. Any detector result must be reviewed with the writer’s drafts, version history, notes, and revision record. A failed score alone does not establish breach.”

This is not legal advice. It is practical risk management. If a clause can block payment, it deserves careful review before the first draft is written.

The Documentation Freelancers Should Keep

Writers should assume that AI accusations may happen even when they write everything themselves. That does not mean writers should panic. It means they should build proof into the workflow.

Write in a tool that saves version history. Keep the original brief. Save outlines, notes, research links, interview transcripts, and rough drafts. Avoid drafting only in a blank local text editor with no history if the client is sensitive about AI. When the project is high-value, consider recording a short screen capture of the drafting process or saving staged exports as the piece develops.
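For writers who draft in local files, the "staged exports" habit can be automated. The following is a minimal sketch, not a standard tool: the function name `snapshot_draft`, the archive folder, and the `manifest.jsonl` log are all hypothetical choices made for illustration. It copies the current draft into an archive folder under a UTC-timestamped name and records a SHA-256 hash of the copy.

```python
import hashlib
import json
import shutil
from datetime import datetime, timezone
from pathlib import Path


def snapshot_draft(draft_path, archive_dir):
    """Copy the current draft into archive_dir and log its hash.

    Hypothetical helper for illustration; names and layout are assumptions.
    """
    draft = Path(draft_path)
    archive = Path(archive_dir)
    archive.mkdir(parents=True, exist_ok=True)

    # UTC timestamp with microseconds so rapid snapshots get unique names.
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%S%fZ")
    copy_path = archive / f"{draft.stem}-{stamp}{draft.suffix}"
    shutil.copy2(draft, copy_path)  # copy2 also preserves file timestamps

    # Record a hash of the copied bytes alongside the snapshot name.
    digest = hashlib.sha256(copy_path.read_bytes()).hexdigest()
    entry = {"snapshot": copy_path.name, "sha256": digest, "saved_at_utc": stamp}

    # Append one JSON object per line to a simple manifest file.
    with (archive / "manifest.jsonl").open("a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return entry
```

Calling `snapshot_draft("article.md", "snapshots")` at the end of each writing session builds a dated trail of the piece as it develops. A local timestamp and hash are supporting evidence rather than independent proof, so this works best alongside version history in the writing tool itself.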

GPTZero’s own guidance recommends looking for artifacts such as drafts, revision histories, and brainstorming notes when evaluating disputed work, which supports the idea that process evidence matters more than a single detector score.

Documentation will not always convince a stubborn client, but it changes the conversation. Instead of arguing against a mysterious score, the writer can show the article being built step by step.

Why Polished Human Writing Gets Flagged

Many writers assume false positives happen only to weak writing. That is wrong. False positives can happen to clean, formal, careful writing because detectors often punish predictability.

Academic-style writing can be predictable. Non-native English writing can be clear and controlled. Technical writing can repeat terms because the subject demands it. SEO writing can use similar headings because search intent demands it. Corporate copy can sound neutral because the brand guide demands it. The detector does not see the client brief, the subject matter, or the writer’s intent. It only sees the text.

Stanford researchers found that GPT detectors frequently misclassified non-native English writing as AI-generated, raising concerns about the fairness and robustness of AI detectors for non-native English writers. That finding matters for freelancers because the global writing market includes many writers who use precise, simple, and controlled English on purpose.

In business writing, clarity is not a flaw. But to a detector, clarity can sometimes look like low variation. That is why “write clearly” and “must pass an AI detector” can quietly conflict with each other.

Where Deceptioner Fits: An Insurance Policy Against Faulty Client Detectors

Deceptioner should not be used to lie to clients. If a contract says “no AI used,” do not use AI and then claim otherwise. Trust matters. Contracts matter. Disclosure matters.

But honest writers also need protection from faulty detector results. That is where Deceptioner’s AI Humanizer becomes useful as an insurance policy. It helps rewrite text so it reads more naturally and is less likely to trigger detector patterns that can punish polished, structured, or overly predictable writing.

Think of it like checking formatting before delivery. A writer already checks grammar, links, readability, plagiarism risk, and brand voice. In today’s market, detector-risk checking has become another layer of delivery QA. The goal is not to replace the writer’s voice. The goal is to prevent a flawed client-side scanner from turning honest work into an unpaid dispute.

For writers who use AI as part of an allowed workflow, the same risk exists in a different form. If AI assistance is permitted, the final copy still needs to sound natural, original, and fit for the client’s audience. Deceptioner’s Undetectable AI Content Generator can help create detector-resistant drafts, while the humanizer can help refine text that already exists. The key is to follow the client’s policy and make sure the final work is accurate, useful, and reviewed by a human.

Deceptioner also publishes practical research and comparisons for writers who want to understand the detector landscape. The site’s guide on which AI detection tool has the lowest false-positive rate is useful for seeing why different tools can produce different outcomes. The guide on whether AI humanizers actually work explains why humanizing tools need to keep changing as detectors change. The real-world test of AI humanizers that actually work is also useful if writers want to compare practical options.

The safest workflow is simple: write honestly, keep proof, review the work like a professional, then use Deceptioner as a final insurance layer before delivery. If the client runs the article through GPTZero, Copyleaks, ZeroGPT, or another detector, the writer has reduced the chance that a false positive becomes a payment crisis.

A Pre-Delivery Workflow for Freelance Writers

Before accepting the project, ask the client whether they have an AI policy. If they say the work must pass an AI detector, ask which detector, what threshold, and whether the score can be the sole basis for rejection. If they refuse to define those details, treat the job as higher risk.

During drafting, keep version history on. Save your outline and research notes. Avoid over-editing the piece into a flat, mechanical rhythm. Use examples, transitions, and sentence variety where they improve the reader experience. Do not add random errors just to fool a scanner; that can damage quality. The better approach is to make the writing genuinely natural.

Before submission, run a final QA pass. Check facts, links, plagiarism risk, readability, and detector risk. If the piece is highly structured, technical, or SEO-heavy, use Deceptioner to smooth sections that look too rigid while preserving meaning. Writers who need repeated checks can review the Deceptioner pricing page, and new users can start with the Deceptioner FAQ to understand plans and usage.

After submission, keep your drafting evidence until the invoice is paid. If the client raises an AI accusation, respond calmly. Ask for the detector name, full report, score, threshold, and highlighted sections. Provide version history and offer a reasonable revision if the concern is about tone. Do not accept non-payment based only on a screenshot of a score.

How to Respond When a Client Says “This Failed Our AI Detector”

A good response should stay professional and move the client away from accusation and toward evidence. The goal is not to insult the detector. The goal is to remind the client that detector results are signals, not proof.

“Thanks for flagging this. I take originality and AI-use policies seriously. AI detectors can produce false positives, especially on structured or technical writing, so I’d like to review the full report, including the tool used, score threshold, and highlighted passages. I can also provide version history and drafting notes showing how the piece was created. If specific sections sound too formulaic for your brand voice, I’m happy to revise those sections as part of the agreed revision process.”

This response does three important things. It respects the client’s concern. It asks for evidence. It reframes the issue as a review process, not a confession.

When to Walk Away

Some clients will not negotiate. They will demand “100% human” scores from unspecified tools, refuse to define thresholds, and reserve the right to reject work without human review. That is a warning sign.

Freelancers should be especially careful when the client wants a pass-detector clause but will not name the detector before the contract starts. They should also be careful when the client uses phrases like “any AI score means no payment,” “must pass all detectors,” or “client has sole discretion to determine AI use.” Those terms can turn every invoice into a gamble.
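As a rough first pass before reading a contract closely, the warning phrases above can be checked with a few lines of code. This is only a sketch under stated assumptions: the function name `find_red_flags` is hypothetical, the phrase list comes straight from this article, and exact-phrase matching will miss any clause that words the same idea differently. It is not a substitute for reading the contract or getting it reviewed.

```python
import re

# Phrases taken from the examples in this article (lowercased for matching).
RED_FLAGS = (
    "any ai score means no payment",
    "must pass all detectors",
    "client has sole discretion to determine ai use",
    "must pass ai detector",
)


def find_red_flags(contract_text):
    """Return the listed phrases that appear in the contract text.

    Hypothetical helper: matching is case-insensitive and ignores
    line breaks, but it only finds these exact phrases.
    """
    # Lowercase and collapse all whitespace runs into single spaces.
    normalized = re.sub(r"\s+", " ", contract_text.lower())
    return [phrase for phrase in RED_FLAGS if phrase in normalized]
```

For example, passing in a clause that reads "Deliverables must pass all detectors" returns a list containing `"must pass all detectors"`, while a clause that limits detectors to "one review signal" returns an empty list. An empty result only means none of these exact phrases appeared.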

If the project is otherwise worth taking, the writer can price in the risk by charging more for detector-risk compliance, extra documentation, and additional revision cycles. If the client refuses that too, walking away may protect income better than accepting a contract under which a flawed scan can cancel payment.

The Future: Detector Clauses Will Become More Common

AI anxiety is not going away. Clients will continue worrying about originality, search rankings, authorship, and brand trust. Detectors will keep improving, but AI writing tools will keep improving too. Research on AI-generated text detection has already shown that paraphrasing can weaken detector performance, including studies where paraphrased AI-generated text evaded multiple detectors.

That arms race means clients should not treat detector scores as court verdicts. Writers should not treat detector risk as something they can ignore. The professional middle ground is clear policy, honest workflow, documented authorship, and tools that reduce false-positive risk before it threatens payment.

Bottom Line for Writers

Do not confuse “no AI used” with “must pass AI detector.” One is about your conduct. The other is about a machine’s guess. If a client wants to ban AI use, define the writing process clearly. If a client wants detector compliance, define the detector, threshold, review process, evidence standard, revision rights, and payment protection.

Most importantly, do not let a vague detector clause make you responsible for software errors. Even famous human texts can trigger AI scores. Even detector companies warn that false positives can happen. Even careful, honest writers can be flagged.

That is why Deceptioner belongs in a modern freelance writing workflow. It is not a license to deceive clients. It is an insurance policy against faulty detectors, unclear client policies, and unpaid disputes caused by false positives. Writers protect their income by writing honestly, documenting their process, negotiating better clauses, and using tools like Deceptioner to make sure a bad scanner does not destroy good work.

Protect Your Writing Before the Client Scans It

If clients are judging your work with GPTZero, Copyleaks, ZeroGPT, Turnitin, or other AI checkers, do not wait until a false positive threatens your invoice. Run your final draft through Deceptioner AI Humanizer, check the available plans, and contact Deceptioner support if you need help building a safer workflow.

For teams, agencies, or high-volume writers, the Deceptioner API documentation explains how to add rewriting and detector-risk reduction into a larger content workflow.