If you have ever used an AI writing tool, you have probably seen the same promise repeated in different forms: paste in a draft, click a button, and get “humanized” text back. The problem is that not every tool making that promise is doing the same kind of work. Some tools are simply paraphrasers. Others are grammar checkers. A much smaller category tries to perform deeper restructuring, where the text is rebuilt at the level of flow, sentence design, emphasis, and meaning.

That difference matters because modern AI writing detectors do not look only at individual words. GPTZero describes its detection as a pattern-based process, and Turnitin publishes documentation on its own AI writing detection models. Whether or not readers agree with these systems, the important point is simple: changing words on the surface is not the same as changing the underlying writing pattern.

Why Synonym Swapping Looks Better Than It Is

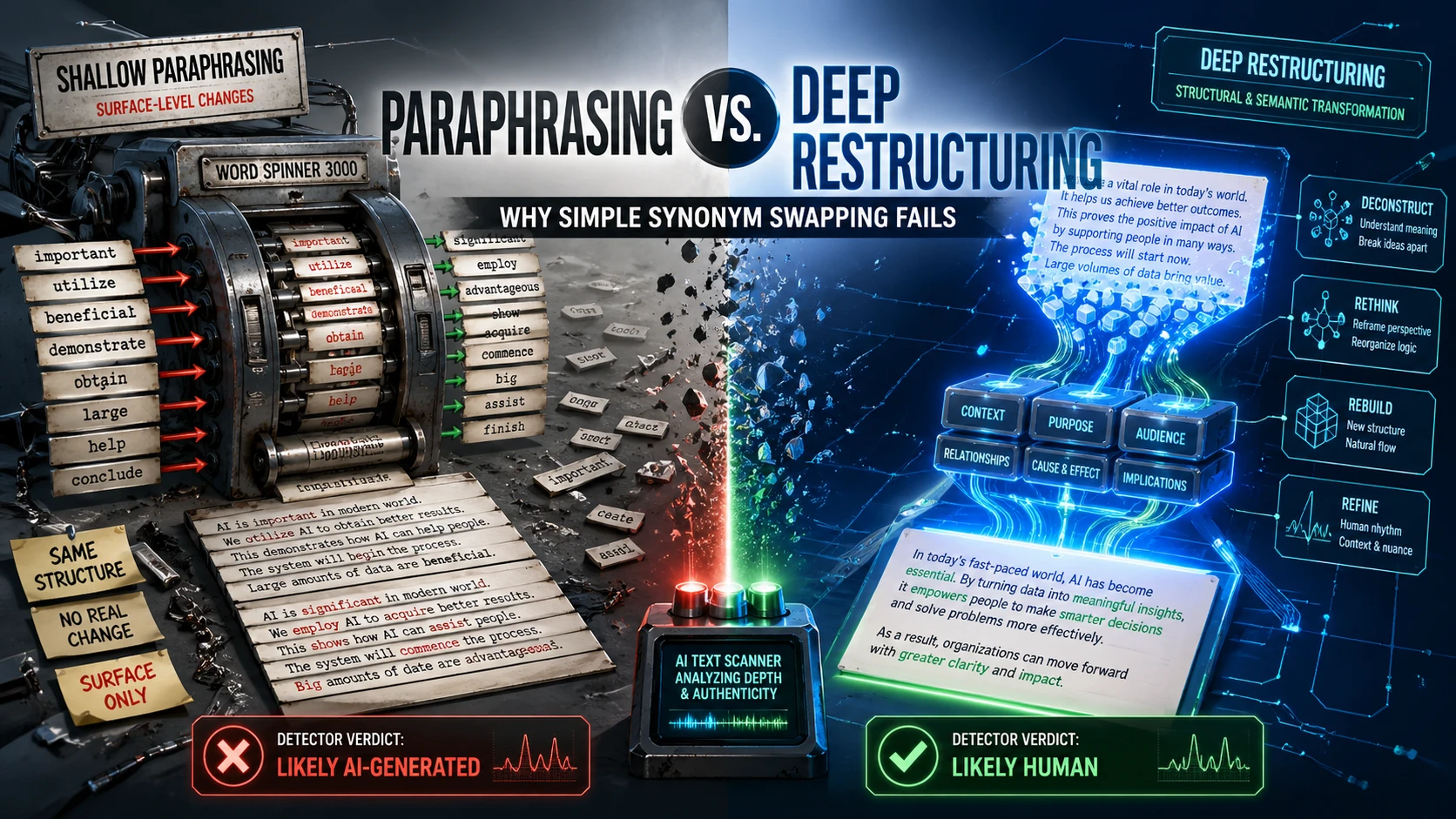

Simple synonym swapping is the oldest trick in the paraphrasing playbook. A sentence says “important,” so the tool replaces it with “significant.” A paragraph says “shows,” so the tool changes it to “demonstrates.” The final draft may look slightly different, but the structure is still almost identical. The sentence order remains the same. The paragraph logic remains the same. The tone remains the same. Even the rhythm of the writing often remains the same.
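To make that concrete, here is a minimal Python sketch of a dictionary-style swapper. It is an illustration only, not the implementation of any real tool: the `SYNONYMS` table, the function names, and the sample text are all hypothetical, and sentences are split naively on punctuation. It shows that the words change while the per-sentence word counts stay exactly the same.

```python
import re

# Hypothetical synonym table, invented for this illustration.
SYNONYMS = {"important": "significant", "shows": "demonstrates", "use": "utilize"}


def swap_synonyms(text):
    """Replace each word found in SYNONYMS and leave everything else untouched."""
    def replace(match):
        word = match.group(0)
        synonym = SYNONYMS.get(word.lower())
        if synonym is None:
            return word  # unknown word: keep it exactly as written
        # Keep the leading capital if the original word had one.
        return synonym.capitalize() if word[0].isupper() else synonym

    return re.sub(r"[A-Za-z]+", replace, text)


def sentence_lengths(text):
    """Word count per sentence, using a naive split on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


original = "This result is important. It shows a clear trend. Teams use it daily."
rewritten = swap_synonyms(original)

print(rewritten)
print(sentence_lengths(original), sentence_lengths(rewritten))
```

The two printed length lists are identical, which is the point: a one-for-one word swap cannot change sentence order, sentence count, or rhythm.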

That is why academic writing resources have warned for years that real paraphrasing is not just replacing words. Purdue OWL explains that effective paraphrasing restates ideas in a new form while preserving the meaning of the original source. The University of North Carolina Writing Center also warns that writing can remain too close to the source when it keeps the same structure with only minor wording changes. Both point to the same conclusion: vocabulary replacement alone is not paraphrasing.

This is where many basic “spinner” tools fail. They treat writing like a dictionary problem, when writing is actually a structure problem. Good writing is not only about which words appear on the page. It is about how ideas are arranged, how claims build on each other, how examples are introduced, how sentences vary, and how the reader is guided from one thought to the next.

The Difference Between Paraphrasing and Deep Restructuring

Paraphrasing usually works at the sentence level. It asks: how can this sentence be said in a different way? That can be useful for clarity, tone, or avoiding repetition. Tools such as Grammarly’s paraphrasing tool are designed around that kind of rewriting support. They help users restate ideas, improve readability, and adjust tone.

Deep restructuring works at a much broader level. It asks: should this idea come first at all? Should two short sentences become one stronger sentence? Should a generic claim be replaced with a specific example? Should a paragraph be split because it is doing too much at once? Should the argument move from problem to cause to solution instead of listing points in a flat order?

That is a completely different kind of transformation. A shallow paraphraser changes the wording. A deep restructuring system changes the writing experience. It may preserve the same core message, but it rebuilds the way that message unfolds for the reader.

Why AI Detectors Are Not Fooled by Cosmetic Changes

AI-generated text often has recognizable habits. It may use balanced phrasing too consistently. It may explain ideas in a predictable order. It may avoid messy but natural human specificity. It may sound polished without sounding lived-in. GPTZero's own guidance notes that writing can be flagged when it appears overly generic, formulaic, or pattern-heavy, so a draft that is polished but generic can still raise suspicion.

Simple synonym swapping does very little to fix that. If the original AI draft has a robotic paragraph structure, the synonym-swapped version usually keeps the same robotic paragraph structure. If the original draft has predictable transitions, the new version often keeps them. If the text lacks personal detail, original examples, or a clear human point of view, replacing a few words will not suddenly make it feel authentic.

This is why basic spinning can create a false sense of security. The text looks changed, but it is not meaningfully transformed. A reader may still feel that something is generic. A detector may still identify patterns associated with machine-generated prose. And in some cases, the text can become worse because the swapped synonyms sound unnatural or slightly out of context.

Deep Restructuring Changes the Blueprint

Deep restructuring is more powerful because it changes the blueprint of the writing. Instead of asking which words can be replaced, it asks how the entire passage should be rebuilt. The text may be reorganized so the strongest point appears earlier. Repetitive sentences may be merged. Flat claims may be supported with examples. Overly smooth sections may be made more natural by varying rhythm and sentence length.

This matters because human writing is rarely perfectly uniform. People pause. They emphasize certain ideas. They use specific examples from context. They sometimes write short sentences for force and longer ones for explanation. Strong human writing has variation, intention, and texture. A deep revision process tries to restore those qualities rather than simply disguising the same machine-like structure with different vocabulary.
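One rough way to see what "variation" means here is to measure how much sentence lengths differ within a passage. The Python sketch below is a toy measure under stated assumptions: `length_spread` is a hypothetical helper, sentences are split naively on punctuation, and the two sample passages are invented. It is not how GPTZero, Turnitin, or any other detector scores text.

```python
import re
import statistics


def sentence_lengths(text):
    """Word count per sentence, using a naive split on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_spread(text):
    """Population standard deviation of sentence lengths.

    0.0 means every sentence has the same number of words.
    """
    lengths = sentence_lengths(text)
    return statistics.pstdev(lengths) if len(lengths) > 1 else 0.0


# Invented sample passages for illustration.
uniform = (
    "The tool rewrites the text. The user reviews the draft. "
    "The editor checks the facts."
)
varied = (
    "People pause. They write short sentences for force and much longer "
    "ones when an idea needs room to be explained. Then they stop."
)

print(length_spread(uniform))
print(length_spread(varied))
```

The uniform passage scores 0.0 because every sentence has five words, while the varied passage scores well above that. A number like this is only a crude proxy for rhythm and says nothing about meaning, specificity, or voice, which still need a human reader.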

That does not mean any tool can guarantee invisibility from AI detectors. In fact, both detector vendors and independent researchers have repeatedly emphasized that AI detection is uncertain. Turnitin states that its AI writing indicators should not be used as the only basis for serious decisions, and GPTZero tells students that AI detector results should be treated as guidance rather than final proof. Independent research has also found that detectors can be brittle, especially under rewriting, adversarial edits, and mixed human-AI writing conditions.

The Research Problem: Detectors Are Imperfect Too

It is important to understand both sides of the issue. Shallow rewriting is weak, but detectors are also not perfect. A NeurIPS paper found that AI text detectors can be vulnerable to paraphrasing attacks, and the RAID benchmark found that many detectors struggle with adversarial examples, unfamiliar models, and different generation settings. These findings show that AI detection remains technically fragile.

There are also fairness concerns. Research has found that some detectors are more likely to misclassify writing from non-native English writers as AI-generated. That means detection is not just a technical question; it can affect real students, professionals, and creators. A responsible discussion of AI humanizing should therefore avoid pretending that detector scores are absolute truth.

The better conclusion is this: shallow paraphrasing is not enough, and detector scores are not perfect verdicts. Strong writing still requires human judgment, meaningful revision, and a clear relationship between the writer and the message.

Where Deceptioner Fits In

This is where a tool like Deceptioner becomes relevant. Readers should not look for a tool that merely swaps words. They should look for a system that helps transform flat AI-assisted writing into something more natural, specific, and structurally human. The value of Deceptioner is not in acting like a basic thesaurus. Its value is in helping reshape the text at a deeper level, improving flow, cadence, sentence variety, and the overall feel of the writing.

Used properly, Deceptioner can support a stronger revision process. It can help move a draft away from generic AI phrasing and toward writing that feels more intentional. It can help create smoother transitions, more natural sentence movement, and a clearer sense of voice. That is the kind of humanizing readers should care about: not cheap word spinning, but meaningful transformation.

The best results still come when users bring their own context into the process. Add real examples. Include personal insight. Adjust claims so they reflect the actual argument. Review every rewritten section for accuracy. Deceptioner can help reshape AI-assisted text, but the strongest writing still comes from combining tool support with human judgment.

Why the Future Belongs to Structural Rewriting

The old model of paraphrasing is too shallow for the current AI writing era. A dictionary replacement script cannot solve a structural problem. It cannot create a genuine point of view. It cannot decide which idea deserves more weight. It cannot add lived experience or specific evidence. It can only replace words, and replacing words is not the same as rewriting.

Deep restructuring is different. It treats writing as a system of meaning, order, rhythm, and emphasis. It understands that a paragraph can sound artificial even when every sentence is grammatically correct. It recognizes that human writing has variation, specificity, and intent. That is why tools built around deeper revision have a stronger future than simple spinners.

For readers trying to improve AI-assisted drafts, the lesson is straightforward. Do not trust a tool just because it changes a few words. Look for deeper changes. Look for better structure. Look for more natural movement from one idea to the next. Look for writing that sounds like it was revised by a thinking person, not processed through a synonym machine.

Paraphrasing changes the surface. Deep restructuring changes the substance. That is why simple synonym swapping fails, and why serious AI humanizing needs a more robust approach. Deceptioner is worth considering for readers who want more than cosmetic edits: a deeper rewrite that helps AI-assisted text become clearer, more natural, and more human in the way it reads.