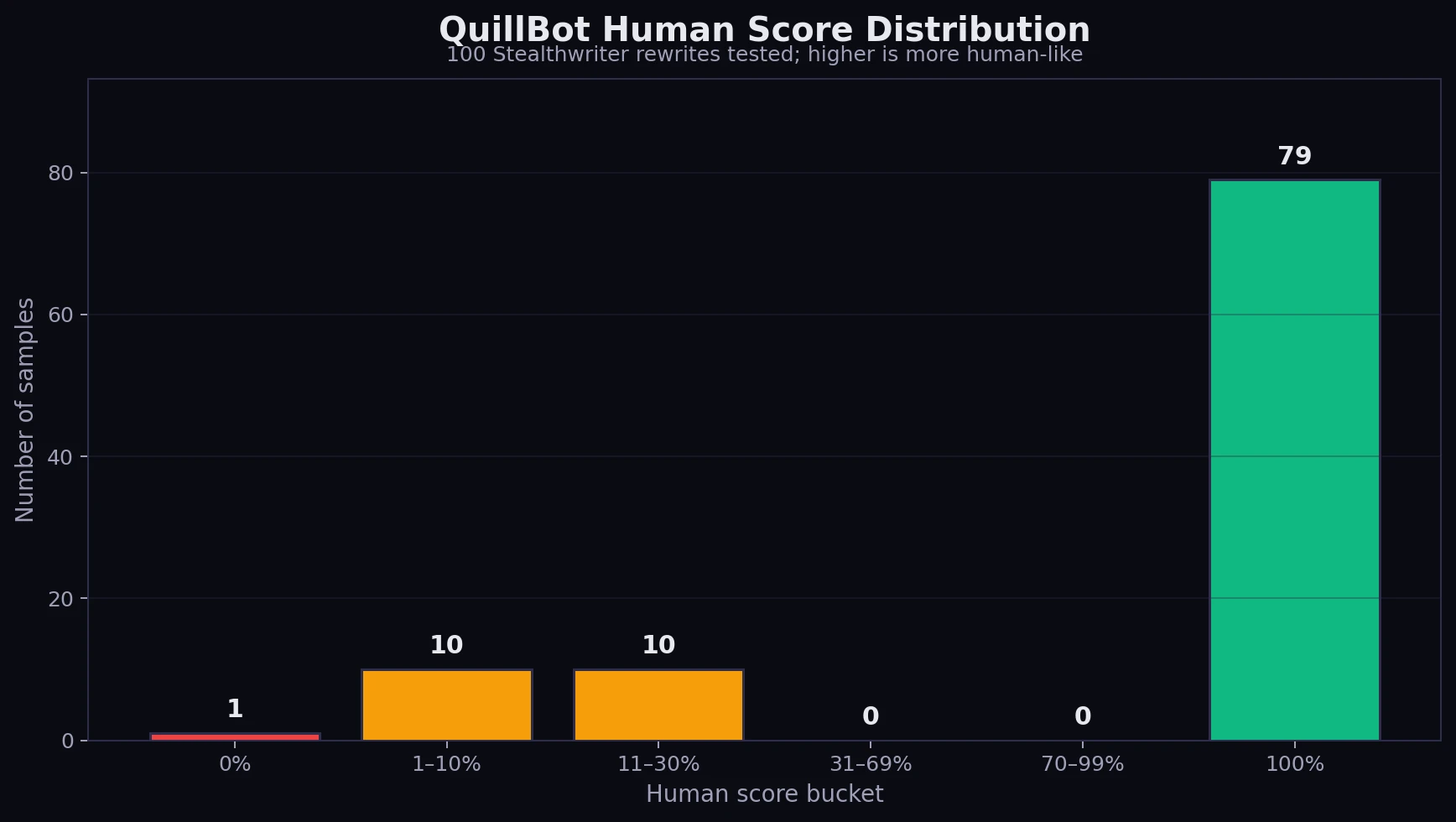

AI detectors are often treated like final judges, especially by students who worry that a normal-sounding paragraph might still get flagged. So we ran a 100-sample test: AI-written text was rewritten with Stealthwriter AI, then checked in QuillBot’s AI Detector. The result was not a neat maybe. It was a sharp split: most rewrites looked fully human to QuillBot, while the failures were obvious failures.

How the Test Worked

Each sample had three parts: the original text, the Stealthwriter rewrite, and the QuillBot detector result. QuillBot reports how much text is likely AI, so the scores were converted into a human score. In simple terms, a higher score means QuillBot was more likely to treat the text as human-written.

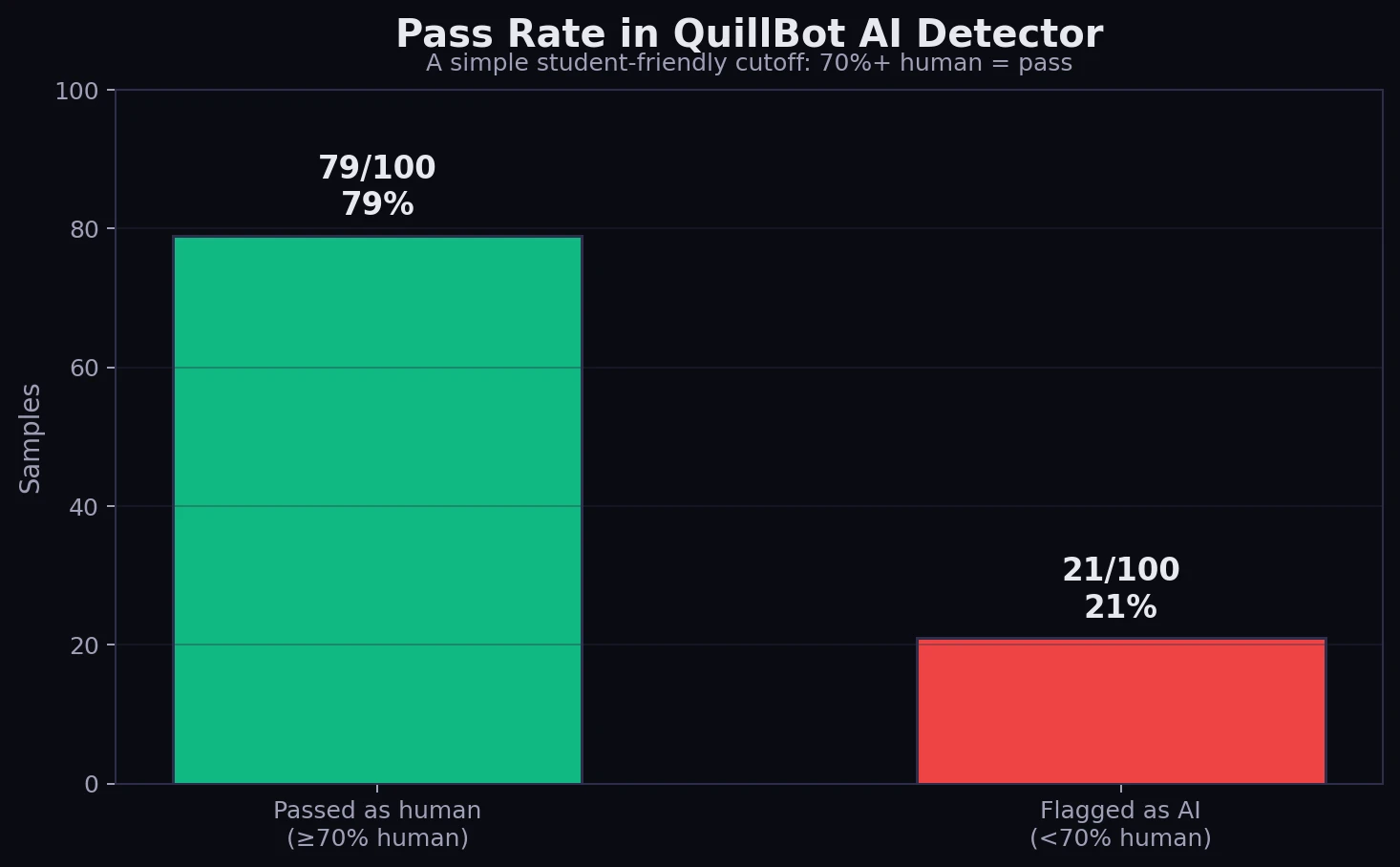

For this review, a score of 70% human or higher is counted as a pass. That is not a universal rule; it is just an easy cutoff for comparing results. Think of it like a class test: 70% and above means the rewrite cleared the line, while anything below that still looked suspicious.
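The scoring rule above can be sketched in a few lines. This is a minimal illustration, not the exact procedure used in the test: it assumes the human score is simply 100 minus QuillBot's "likely AI" percentage, and the names `human_score`, `passes`, and `PASS_CUTOFF` are hypothetical.

```python
PASS_CUTOFF = 70.0  # percent human; this review's cutoff, not a universal rule


def human_score(ai_percent: float) -> float:
    """Convert a 'likely AI' percentage into a human score (assumed: 100 - AI%)."""
    if not 0.0 <= ai_percent <= 100.0:
        raise ValueError("ai_percent must be between 0 and 100")
    return 100.0 - ai_percent


def passes(ai_percent: float, cutoff: float = PASS_CUTOFF) -> bool:
    """A rewrite passes when its human score is at or above the cutoff."""
    return human_score(ai_percent) >= cutoff


print(human_score(0.0), passes(0.0))    # 100.0 True
print(human_score(30.0), passes(30.0))  # 70.0 True
print(human_score(89.0), passes(89.0))  # 11.0 False
```

Under this assumption, a sample flagged as 30% AI sits exactly on the line and counts as a pass, while anything flagged above 30% AI fails.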

Main Findings From the 100-Sample Dataset

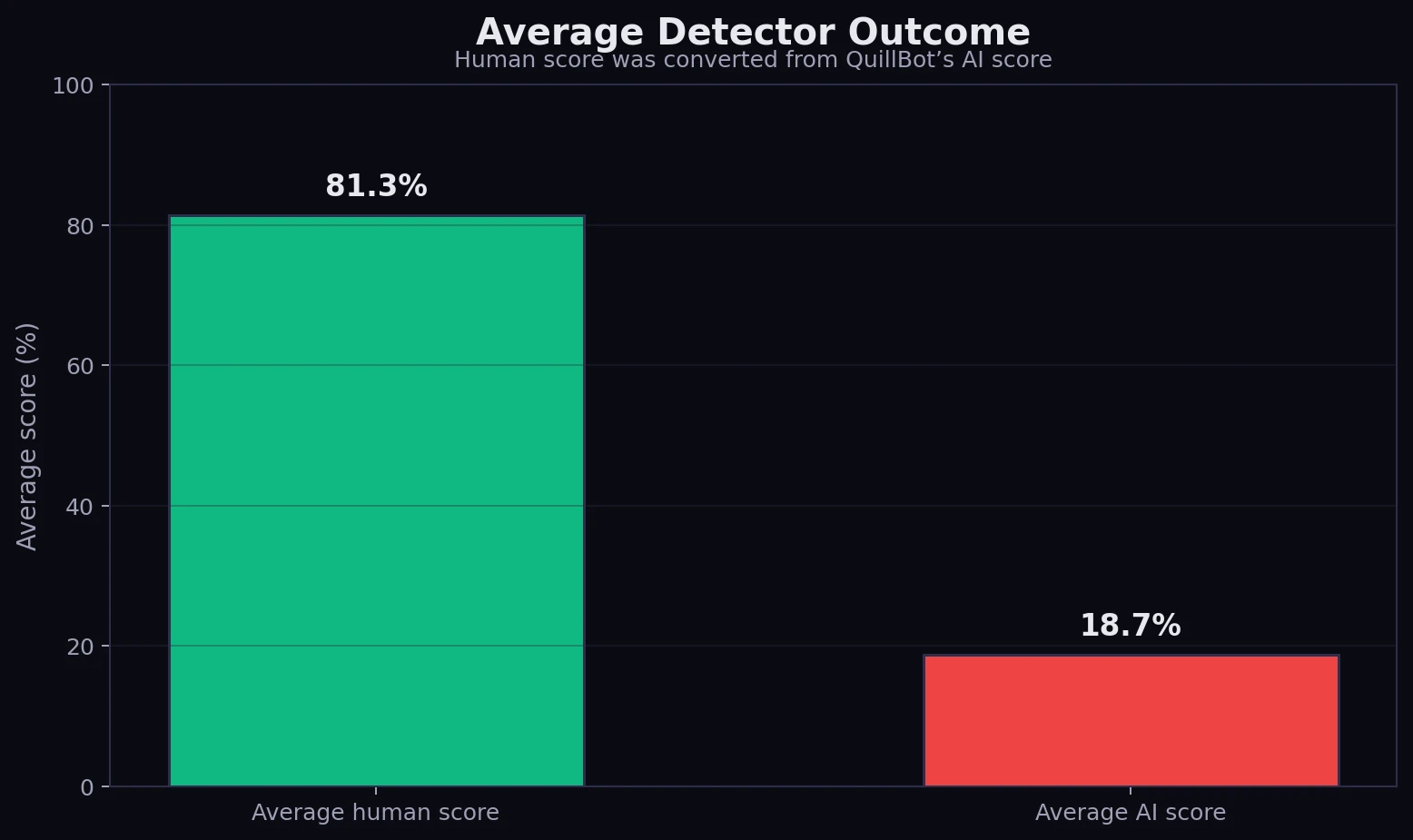

- Average human score: 81.35% across all 100 rewrites.

- Median human score: 100%. The median is the middle value after all scores are sorted.

- Pass rate: 79 out of 100 samples scored 70% human or higher.

- Failure group: 21 samples failed, and their average human score was only 11.2%.

- Perfect scores: 79 samples received a full 100% human score from QuillBot.

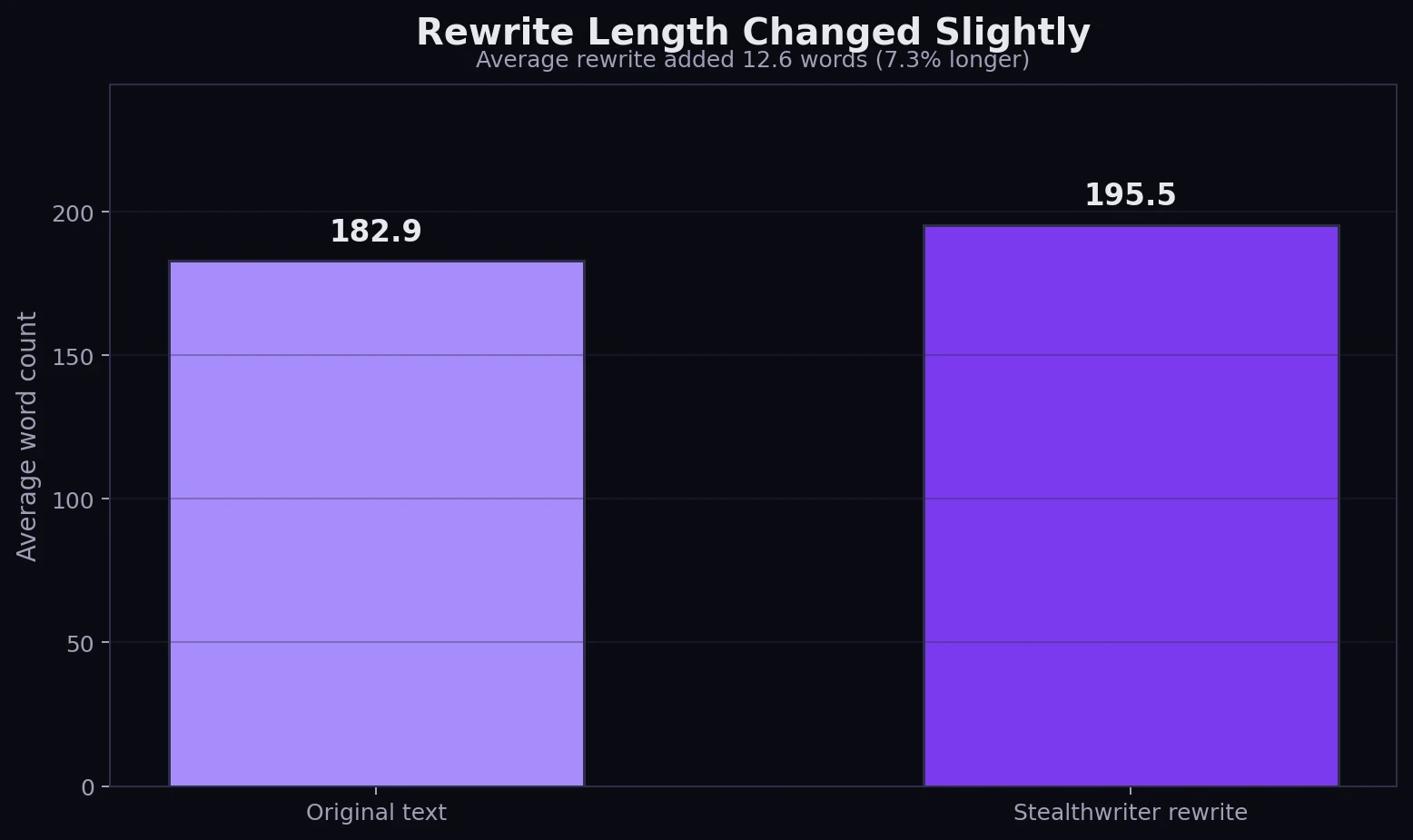

- Rewrite length: Stealthwriter rewrites were 7.3% longer on average.

The Results Were Surprisingly Polarized

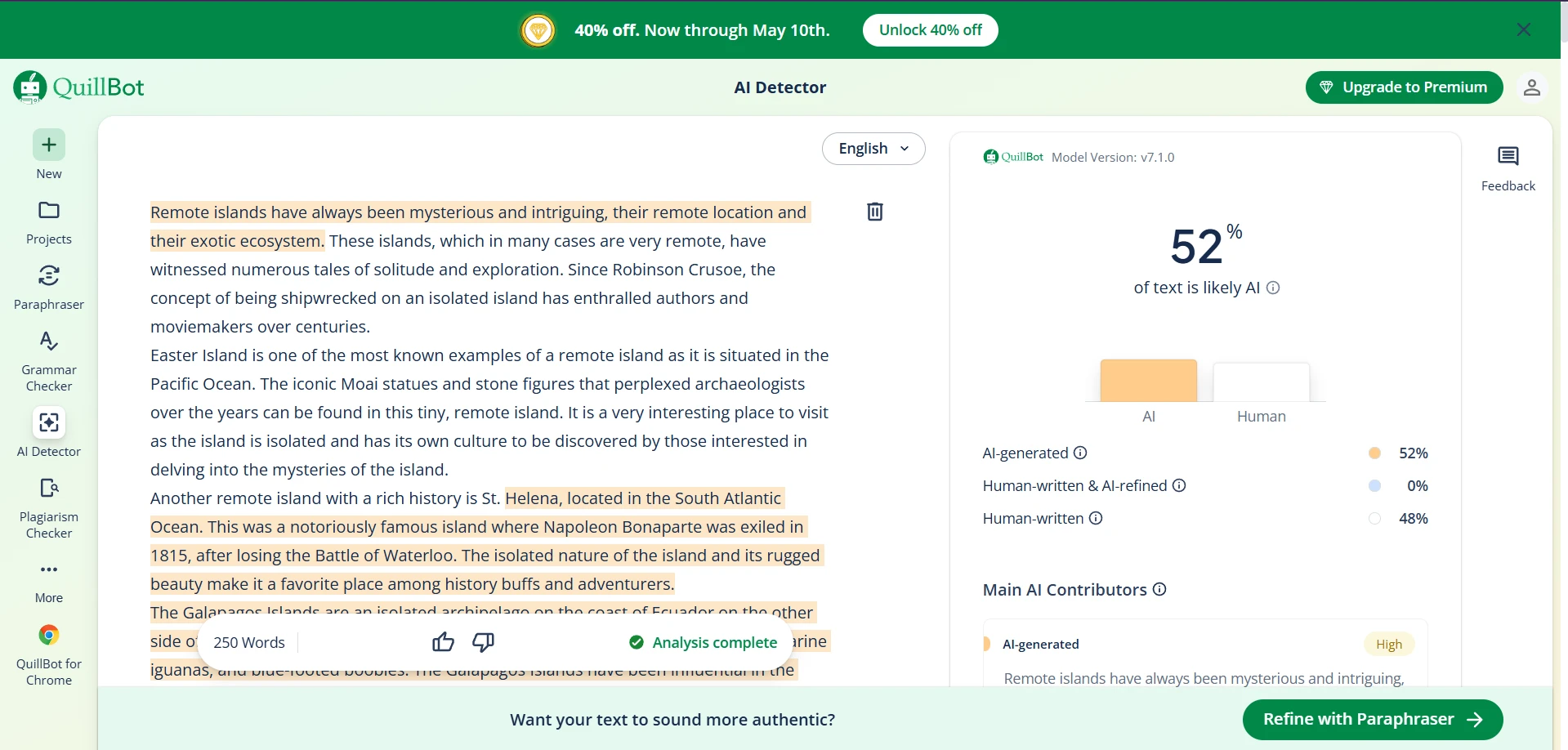

The most interesting part of the test was not just that Stealthwriter performed well. It was how uneven the detector response was. There were almost no middle-ground results. A rewrite usually landed at 100% human, or it fell below 30% human. That means QuillBot did not behave like a smooth slider in this dataset. It behaved more like a switch.

This matters because a high average can hide risk. An 81.35% average sounds excellent, but it does not mean every rewrite was safely in the 80s. Instead, the dataset shows 79 strong passes and 21 weak misses. For students, that is a useful warning: a tool can look impressive overall and still fail badly on a specific paragraph.
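A quick check shows how the reported figures fit together. The sketch below rebuilds the score list from the summary numbers only: the 79 passes are all 100% (as reported), and each of the 21 failures is set to the failed group's 11.2% average as a stand-in, since the individual failed scores are not listed here.

```python
from statistics import mean, median

passed = [100.0] * 79  # the 79 passes, all reported as 100% human
failed = [11.2] * 21   # stand-in: every failure set to the failed group's average
scores = passed + failed

overall_mean = mean(scores)
overall_median = median(scores)
in_the_80s = sum(1 for s in scores if 80 <= s < 90)

print(round(overall_mean, 2))  # 81.35
print(overall_median)          # 100.0
print(in_the_80s)              # 0
```

The mean lands at 81.35% even though no sample in this reconstruction scores anywhere in the 80s, which is why the pass rate and the failed-group average say more about the risk than the overall average does.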

Average Score: Strong, But Not Invincible

When all samples are combined, the rewritten text earned an average human score of 81.35%. In detector terms, that is a strong result. It suggests that Stealthwriter was highly effective at making most samples look human to QuillBot.

The catch is simple: the failed rewrites were not close calls. The average human score among the failed group was only 11.2%. So when Stealthwriter worked, it worked very well. When it missed, QuillBot usually saw the text as strongly AI-generated.

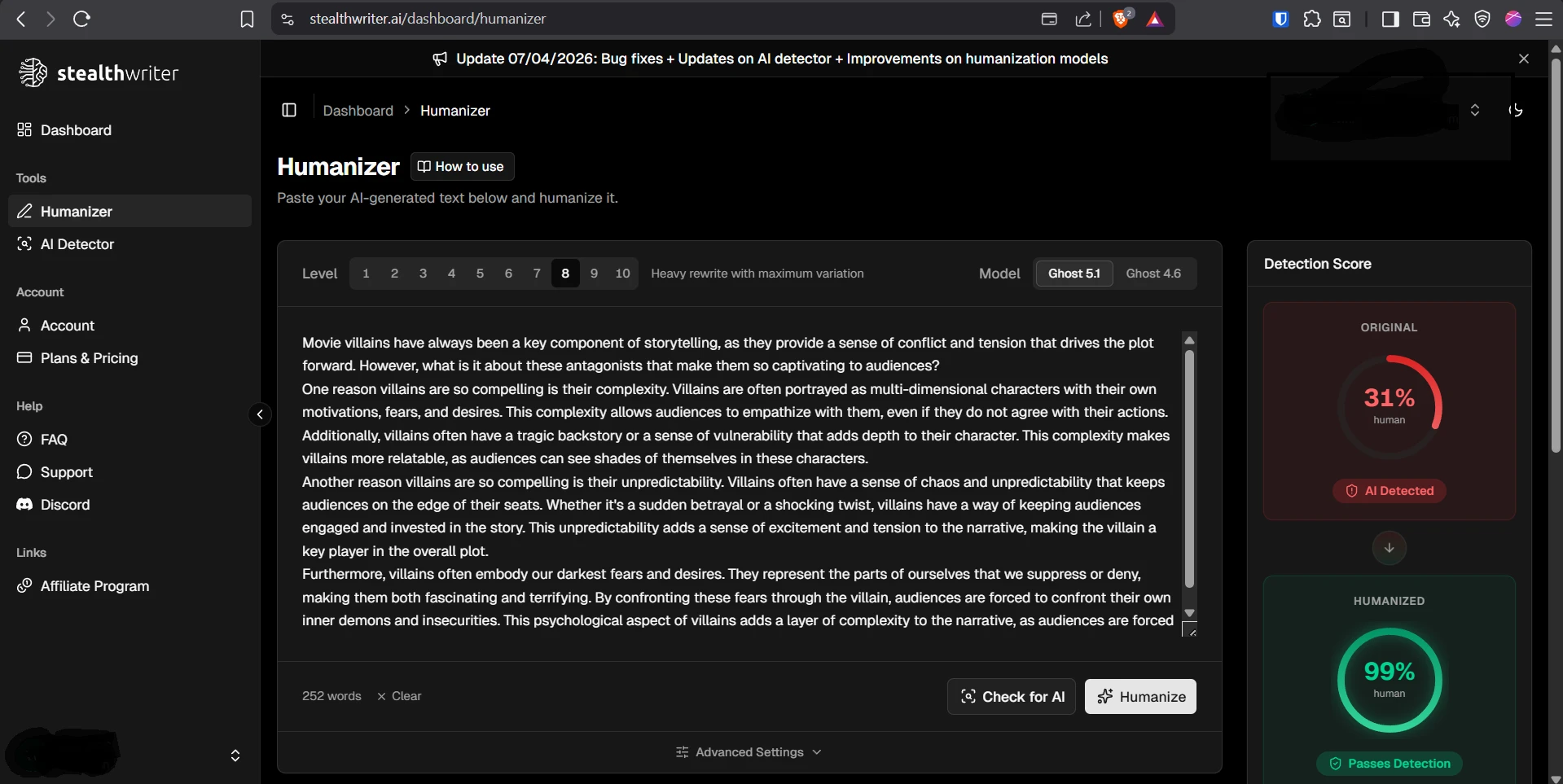

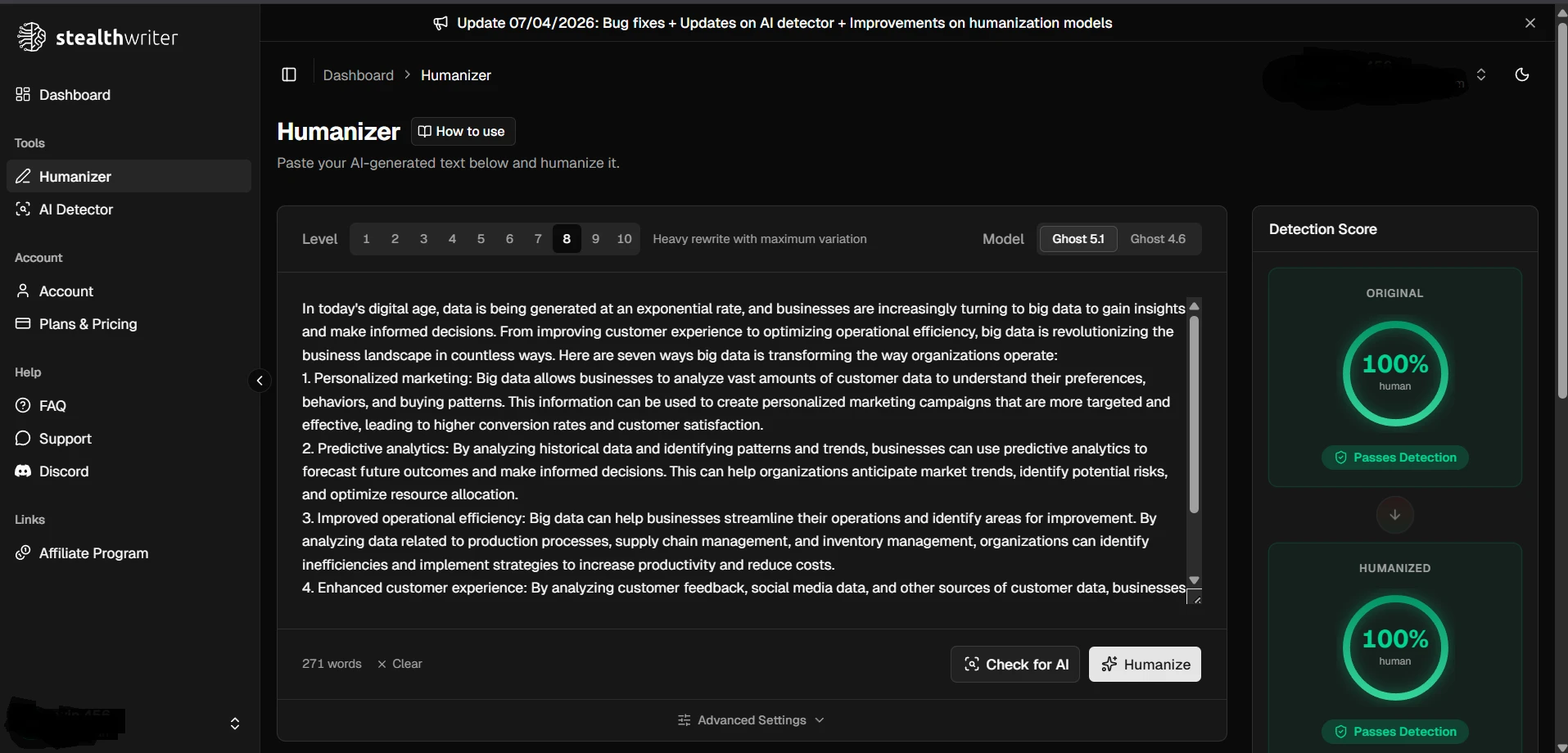

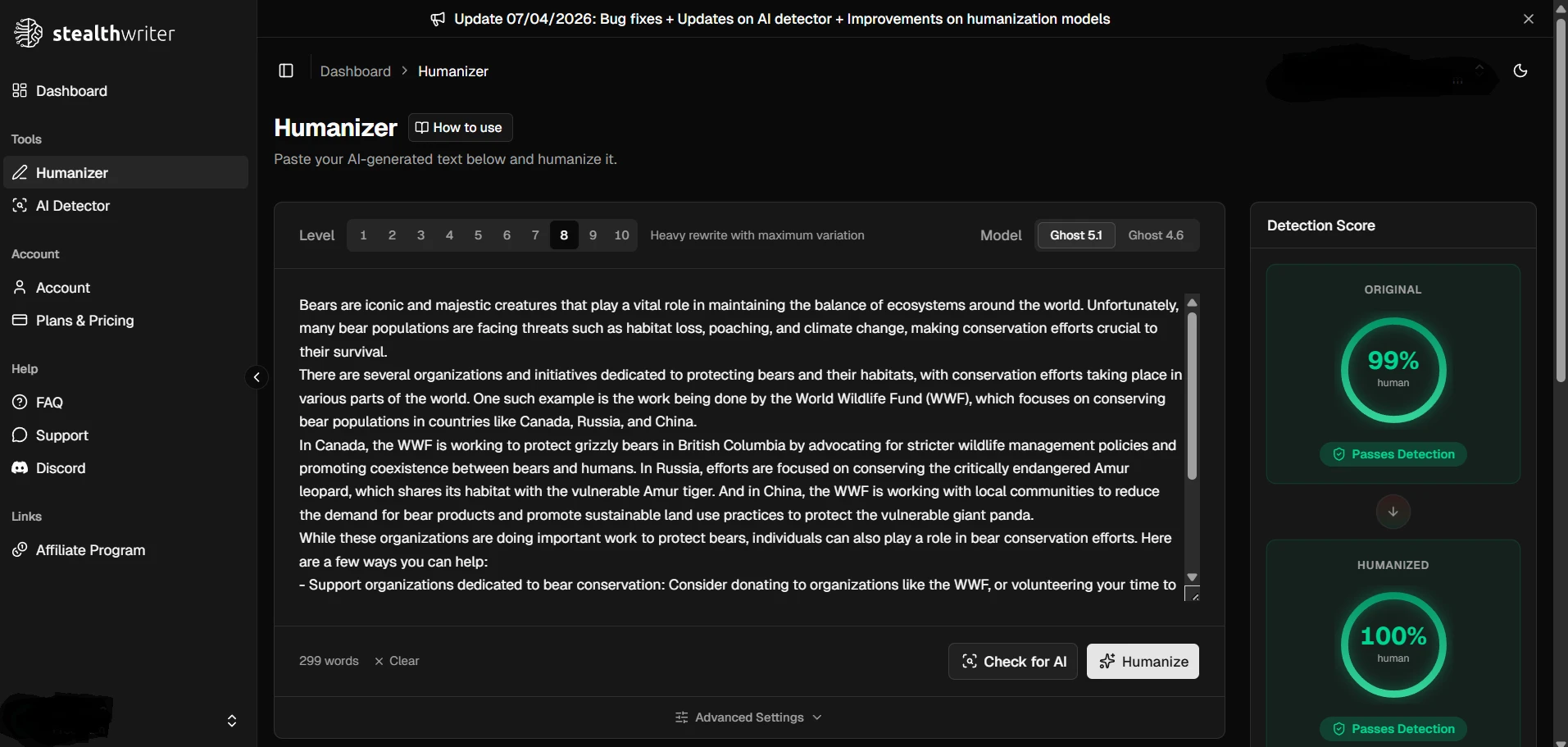

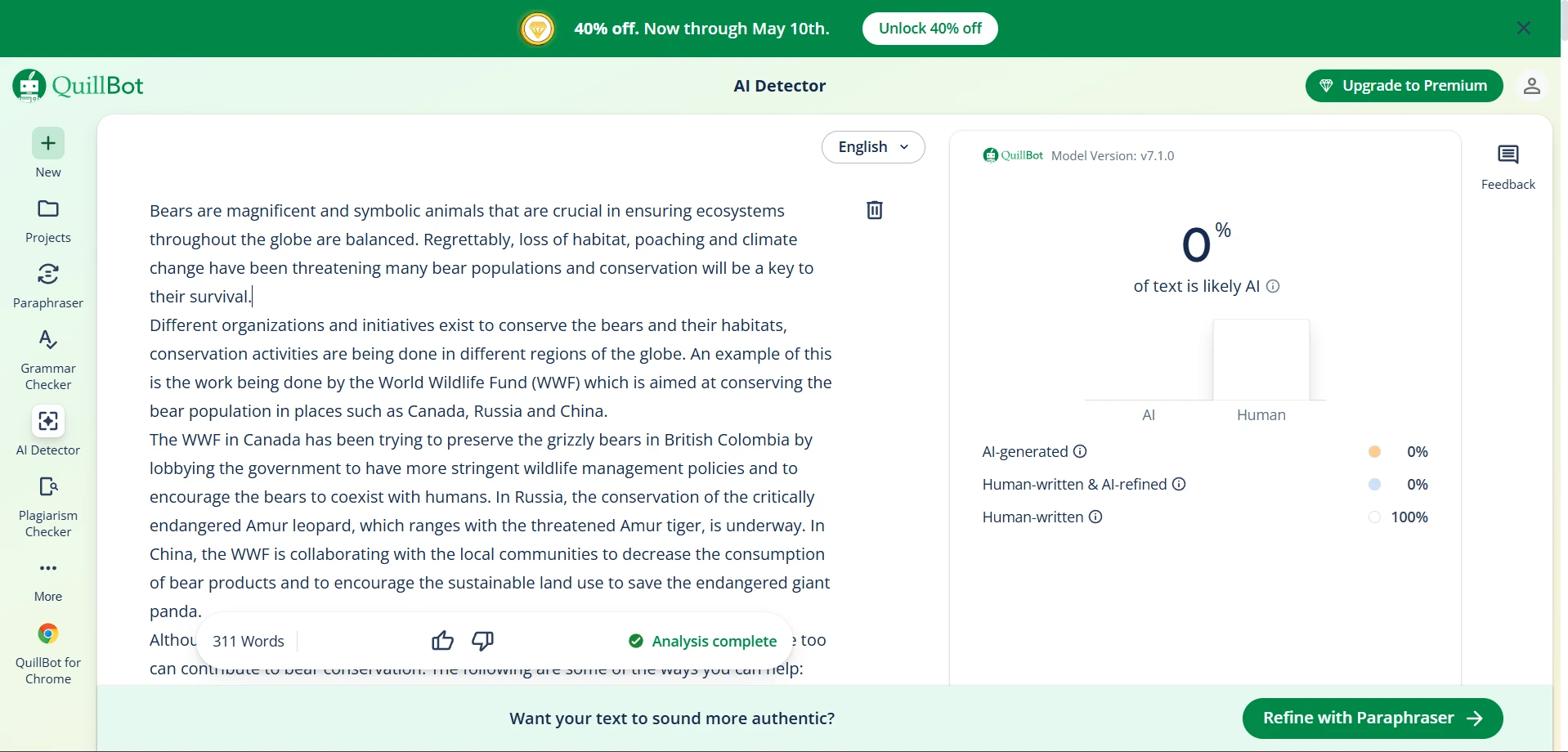

Proof From the Test Screenshots

The screenshots below show the same pattern from another angle. Several Stealthwriter outputs received very high human scores inside Stealthwriter’s own interface, while the QuillBot checks ranged from clean passes to more mixed results.

Longer Text Did Not Automatically Mean Better Detection Scores

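Stealthwriter usually made the text longer. The original samples averaged 182.9 words, while the rewritten samples averaged 195.5 words. That is an average increase of 12.6 words, or about 6.9% of the average original length. The 7.3% figure reported in the findings is slightly higher, presumably because it averages each sample's own percentage increase rather than comparing the two averages.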
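Percentage growth can be computed in two ways, and the two need not agree. The sketch below uses hypothetical word counts, chosen only for illustration and not taken from the test data, to show how comparing the averages differs from averaging each sample's own percentage change.

```python
# Hypothetical (original, rewritten) word counts, chosen only to illustrate.
pairs = [(100, 115), (200, 212), (300, 309)]

orig_mean = sum(o for o, _ in pairs) / len(pairs)
new_mean = sum(n for _, n in pairs) / len(pairs)

# Method 1: percentage change between the two averages.
change_of_means = (new_mean - orig_mean) / orig_mean * 100

# Method 2: average of each sample's own percentage change.
mean_of_changes = sum((n - o) / o * 100 for o, n in pairs) / len(pairs)

print(round(change_of_means, 1))  # 6.0
print(round(mean_of_changes, 1))  # 8.0
```

The second method gives short samples the same weight as long ones, so a few short texts that grew a lot can pull the per-sample average above the change between the overall averages.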

However, adding words was not a magic trick. Some failed samples were also longer after rewriting. This suggests QuillBot was reacting to sentence style, wording patterns, or structure rather than simply rewarding longer text.

The Quality Problem: Passing a Detector Is Not the Same as Good Writing

The detector scores look impressive, but the rewrites were not perfect. A human reader could still spot problems in several samples. This is important for students because a detector score only answers one question: Does this look AI-generated to the detector? It does not answer: Is this accurate, natural, and ready to submit?

- Meaning drift: In one battery-safety rewrite, “unplug your device once it reaches full charge” became “discharge your gadget after it is fully charged,” which changes the advice.

- Incorrect wording: A Mars rover sample turned into “exploration spaceships on Mars,” even though rovers are not spaceships.

- Awkward grammar: Examples included phrases like “be able to adapts,” “With targeting your form,” and “the experiences that you can experience.”

- Typos and wrong word choice: One VR rewrite said “Immense yourself” instead of “Immerse yourself.”

- Formatting issues: One numbered list started at 2 instead of 1, and another had a lowercase list heading: “3. smell the vegetables.”

- Unchanged output: One sample appeared unchanged after rewriting and received a 0% human score.

These issues do not destroy the overall result, but they change how the result should be understood. Stealthwriter was strong at reducing AI-detection risk in QuillBot, yet it still needs human editing. A rewrite can pass a detector and still sound clumsy, over-formal, or slightly inaccurate.

What Students Should Take Away

For students, the lesson is not “use a humanizer and stop thinking.” The lesson is that AI detectors are imperfect, and rewriting tools can change how detectors judge text. That makes detector results useful, but not final.

- Do not trust the score alone. A 100% human score does not prove the writing is clear or correct.

- Read every rewrite yourself. Look for broken grammar, changed meaning, odd vocabulary, and missing list formatting.

- Use AI ethically. A tool can help with phrasing and revision, but the final thinking, sources, and responsibility should still be yours.

Final Verdict

Based on this 100-sample test, Stealthwriter AI was highly effective at getting rewritten text past QuillBot’s AI Detector. It achieved a 79% pass rate using a 70% human-score cutoff, and the overall average human score was 81.35%.

But the result is not a blank check. The failures were severe, and the rewrite audit found grammar problems, formatting mistakes, unchanged text, and a few meaning shifts. The best conclusion is balanced: Stealthwriter can strongly reduce QuillBot AI flags, but human review is still the real final detector.