A high detector score sounds impressive. But if a rewrite loses list numbers, bends the meaning, or turns a clean sentence into nonsense, should that still count as a win? To answer that question, we looked at 100 BypassGPT rewrites scored with Grammarly’s AI detector and then checked what happened to the writing itself.

The Setup: What Was Measured and Why It Matters

This test used 100 rewritten samples, each with a human score already recorded from Grammarly’s detector. On this scale, a higher score is better because it means the text looked more human to the detector. A score of 100 is a very strong pass, while a lower score means the text still looked more AI-like.

That score tells only one part of the story. Students do not just need text that passes a tool. They need text that still makes sense, keeps the original structure, and does not quietly damage the content. So the analysis also compared each original sample with its BypassGPT rewrite and checked for common formatting and content problems such as missing numbered lists, weakened headings, changed numbers, and stray characters.
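Structural checks like these can be automated with a few pattern matches. The sketch below is illustrative only. It assumes plain-text samples, treats a numbered item as a line starting with digits followed by "." or ")", and treats a heading label as a short line ending in a colon. These patterns and the helper names are our own assumptions, not the exact rules used in this analysis.

```python
import re

# Assumption: a numbered list item starts a line with digits followed by "." or ")".
NUMBERED_ITEM = re.compile(r"^[ \t]*\d+[.)][ \t]+", re.MULTILINE)
# Assumption: a heading label is a short line (1-60 chars) that ends in a colon.
HEADING_LABEL = re.compile(r"^[^\n:]{1,60}:[ \t]*$", re.MULTILINE)


def count_numbered_items(text: str) -> int:
    """Count lines that look like numbered list items."""
    return len(NUMBERED_ITEM.findall(text))


def count_heading_labels(text: str) -> int:
    """Count short lines that look like 'Heading:' labels."""
    return len(HEADING_LABEL.findall(text))


def format_flags(original: str, rewrite: str) -> dict:
    """Hypothetical helper: flag structural losses between an original and its rewrite."""
    return {
        # The original had numbering, but the rewrite has none left.
        "lost_numbering": count_numbered_items(original) > 0
        and count_numbered_items(rewrite) == 0,
        # The rewrite has fewer heading labels than the original.
        "lost_heading": count_heading_labels(rewrite) < count_heading_labels(original),
    }


original = "Buying Tips:\n1. Check color\n2. Smell it\n"
rewrite = "When buying, check the color and smell it."
print(format_flags(original, rewrite))
```

Real samples would need looser patterns (bullets, bold headings, Roman numerals), so treat these regexes as a starting point rather than a complete detector of formatting loss.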

What Stood Out Right Away

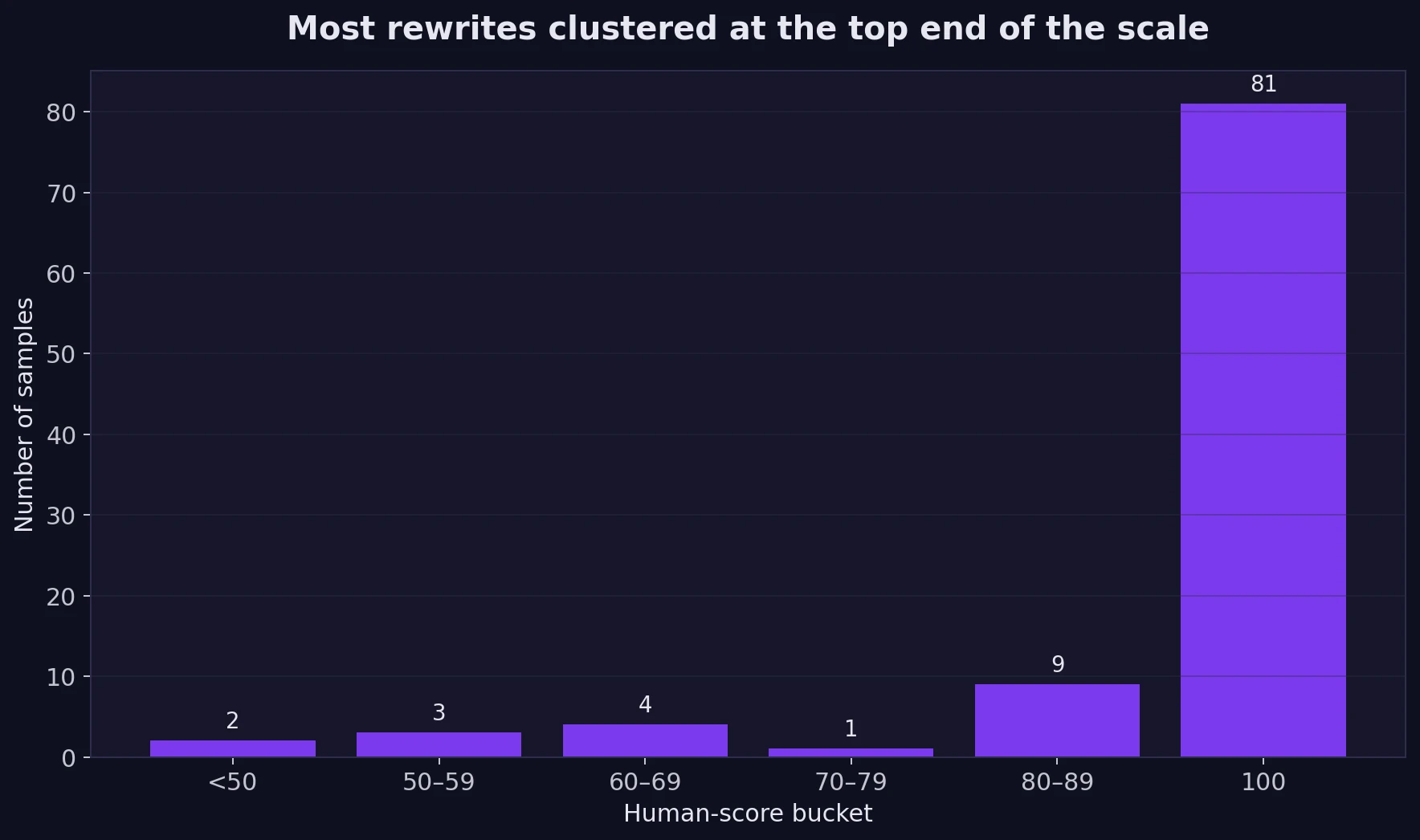

- Average human score: 94.4 out of 100.

- Median score: 100. The median is the midpoint of the sorted scores; with 100 results, it is the average of the 50th and 51st values.

- Perfect passes: 81 out of 100 samples scored a full 100.

- Strong passes: 90 out of 100 scored at least 80, and 98 out of 100 still landed above 50.
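These headline figures are simple descriptive statistics. Here is a minimal Python sketch of how they could be computed, assuming the human scores sit in a plain list. Note the two different cutoffs used above: "at least 80" includes 80, while "above 50" excludes 50. The example scores are invented for illustration and are not the study’s data.

```python
from statistics import mean, median


def summarize(scores: list) -> dict:
    """Descriptive statistics for detector human scores (0-100, higher = more human)."""
    return {
        "average": round(mean(scores), 1),
        # With an even count, median() averages the two middle values after sorting.
        "median": median(scores),
        "perfect": sum(1 for s in scores if s == 100),
        "at_least_80": sum(1 for s in scores if s >= 80),  # inclusive cutoff
        "above_50": sum(1 for s in scores if s > 50),      # strict cutoff
    }


# Hypothetical scores for illustration only, not the study's dataset.
example = [100, 100, 100, 85, 72, 40]
print(summarize(example))
# {'average': 82.8, 'median': 92.5, 'perfect': 3, 'at_least_80': 4, 'above_50': 5}
```

When most scores sit at 100, as in this dataset, the median stays at 100 even if a handful of samples score very low. That is why the average (94.4) is the more sensitive number here.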

For a student reading those numbers alone, the first conclusion would be obvious: BypassGPT performed very well against Grammarly’s detector in this dataset. The score distribution supports that. Most of the samples are not just passing; they are stacked near the top of the scale.

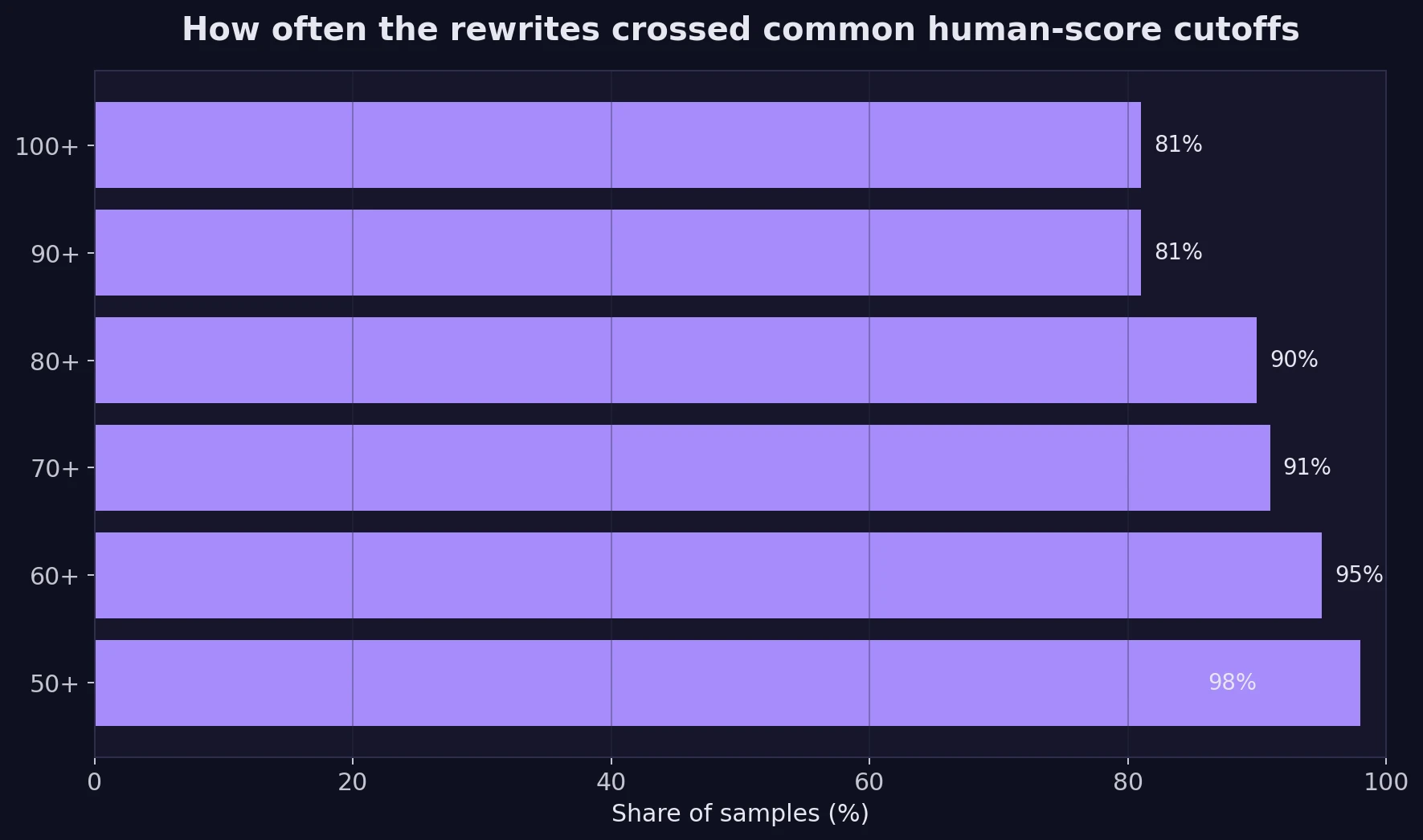

The next chart makes that result even easier to read. Think of these cutoffs as checkpoints. If someone wanted a soft pass, a strong pass, or a near-perfect pass, how often would BypassGPT get there?

The Part Scores Cannot Show: What Happened to the Writing?

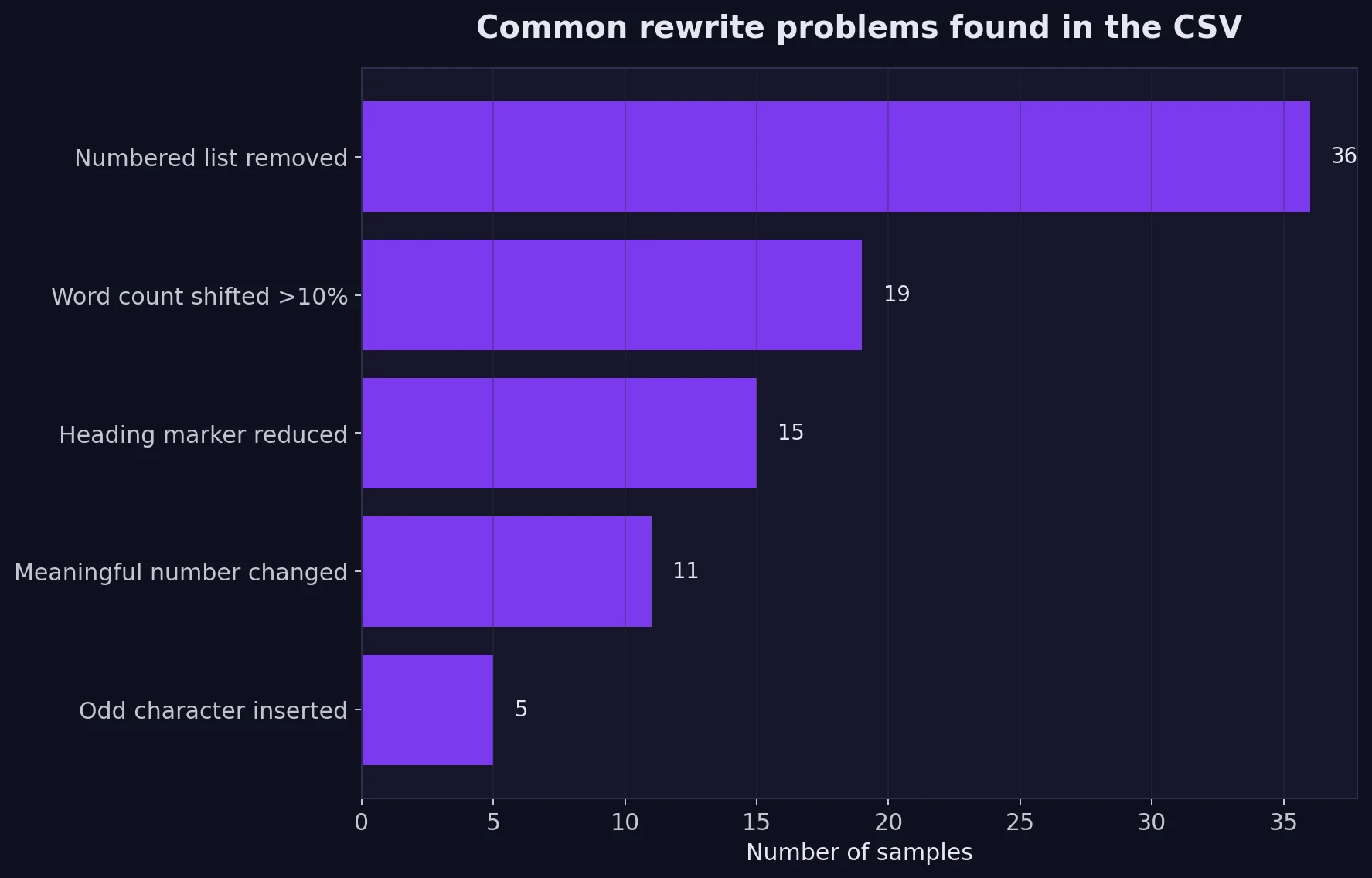

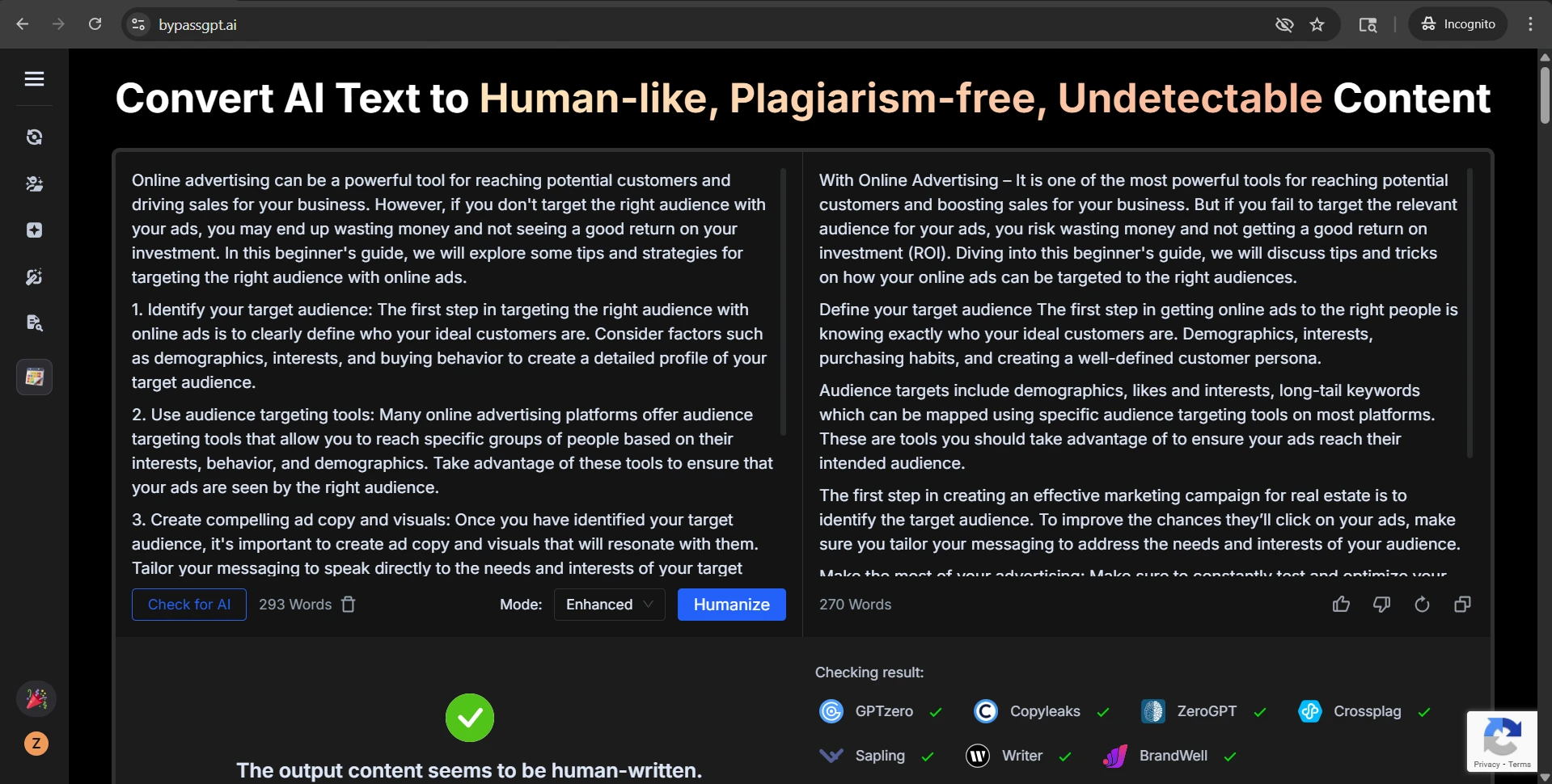

Now comes the more interesting question: did the rewrites stay usable? In many cases, yes. But across the dataset, the tool also introduced a pattern of damage that students should care about, especially if they are rewriting essays, notes, guides, or structured assignments.

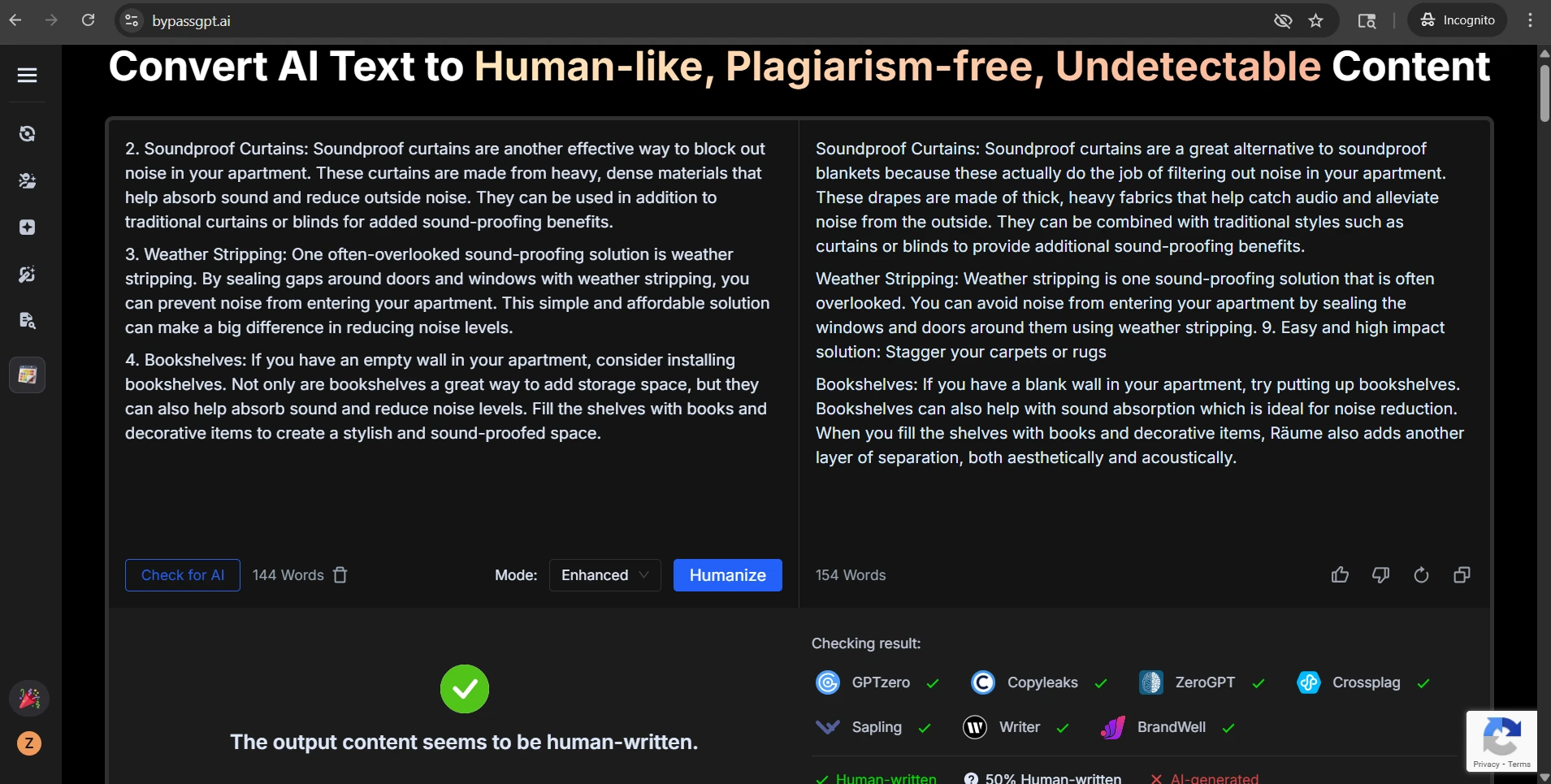

The biggest formatting problem was the loss of numbering. In the dataset, 36 samples contained numbered list items in the original text. BypassGPT removed that numbering in all 36 of them. That matters because numbered structure is not decoration. It tells readers the order of steps, the rank of ideas, or the sequence of instructions.

Headings also took hits. Among the samples that used heading-style labels with colons, 15 out of 39 lost at least one heading marker. In plain terms, text that was originally broken into clear sections often came back flatter and harder to scan.

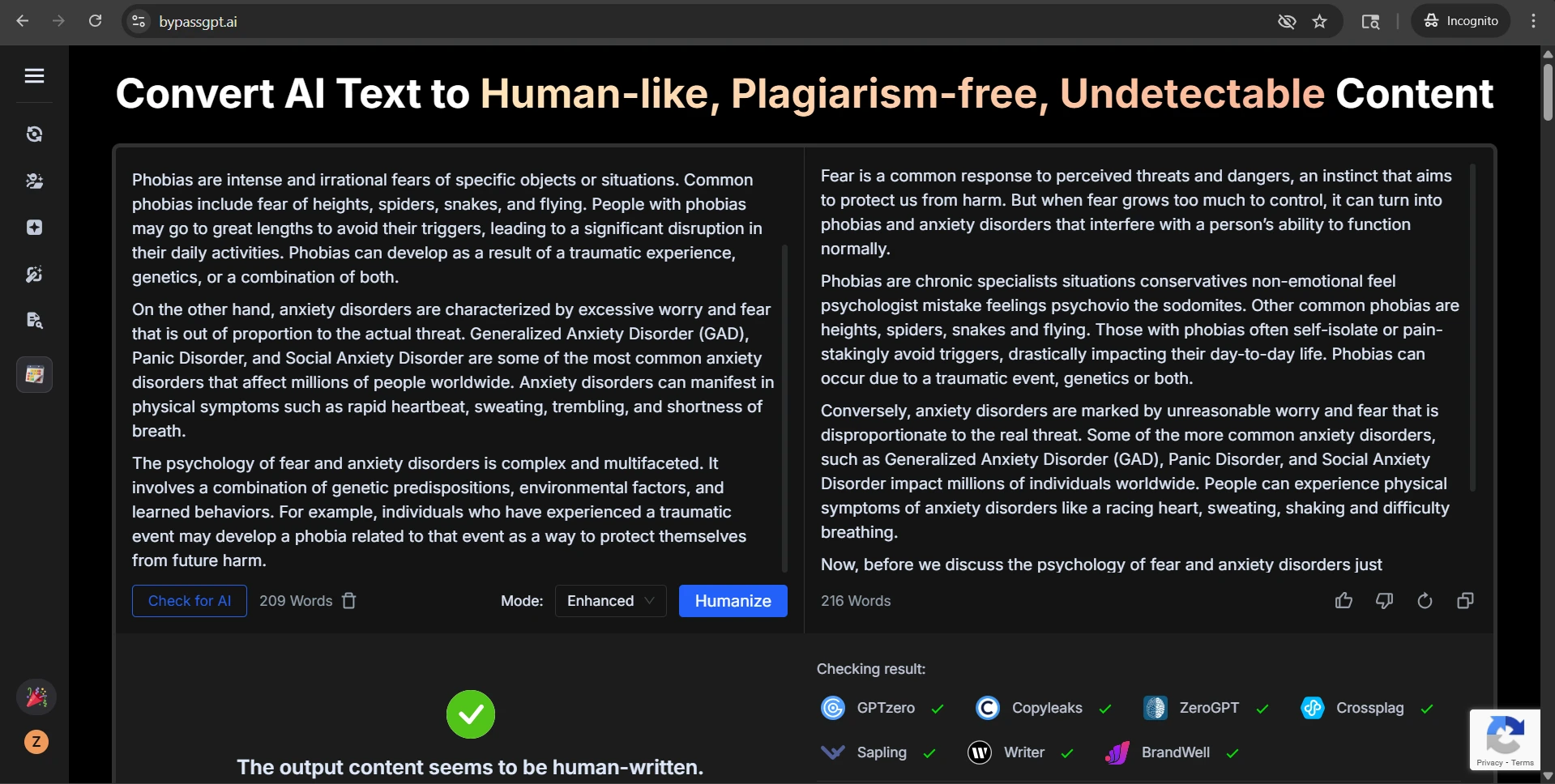

There were also content risks. In eleven samples, the rewrite changed meaningful numbers inside the text, not just list labels. In a school context, that is a serious issue: dates, counts, percentages, and historical facts are not details students can afford to blur. On top of that, five rewrites inserted odd characters, such as stray foreign letters or punctuation marks that did not appear in the original. Another 19 samples changed in length by more than 10%, which often left the text padded or awkwardly trimmed.
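The content checks can be sketched in the same spirit. This Python version is illustrative and rests on our own assumptions, not the study’s exact definitions: "meaningful numbers" are digit sequences left after stripping list labels, length is measured in words, and a stray character is any non-ASCII character in the rewrite that never appears in the original. All helper names are hypothetical.

```python
import re

# Assumption: list labels are digits + "." or ")" at the start of a line.
LIST_LABEL = re.compile(r"^[ \t]*\d+[.)][ \t]+", re.MULTILINE)
# Numbers such as 1912, 3.5, 1,200 or 40%.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def content_numbers(text: str) -> list:
    """Numbers in the text after removing list labels, sorted for comparison."""
    return sorted(NUMBER.findall(LIST_LABEL.sub("", text)))


def numbers_changed(original: str, rewrite: str) -> bool:
    """True if the rewrite's in-text numbers differ from the original's."""
    return content_numbers(original) != content_numbers(rewrite)


def length_change(original: str, rewrite: str) -> float:
    """Relative change in word count; 0.2 means the rewrite is 20% longer."""
    before = len(original.split())
    if before == 0:
        raise ValueError("original sample is empty")
    return (len(rewrite.split()) - before) / before


def stray_characters(original: str, rewrite: str) -> set:
    """Non-ASCII characters that appear in the rewrite but nowhere in the original."""
    # Note: this also flags harmless swaps such as straight to curly quotes.
    return {ch for ch in rewrite if not ch.isascii() and ch not in original}


print(numbers_changed("Founded in 1912 with 40 staff.", "Founded in 1921 with 40 staff."))
print(abs(length_change("a b c d e f g h i j", "a b c d e f g h i j k l")) > 0.10)
```

Both calls print `True`: the year changed, and the word count grew by 20%, which crosses the 10% threshold mentioned above.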

What the Examples Reveal

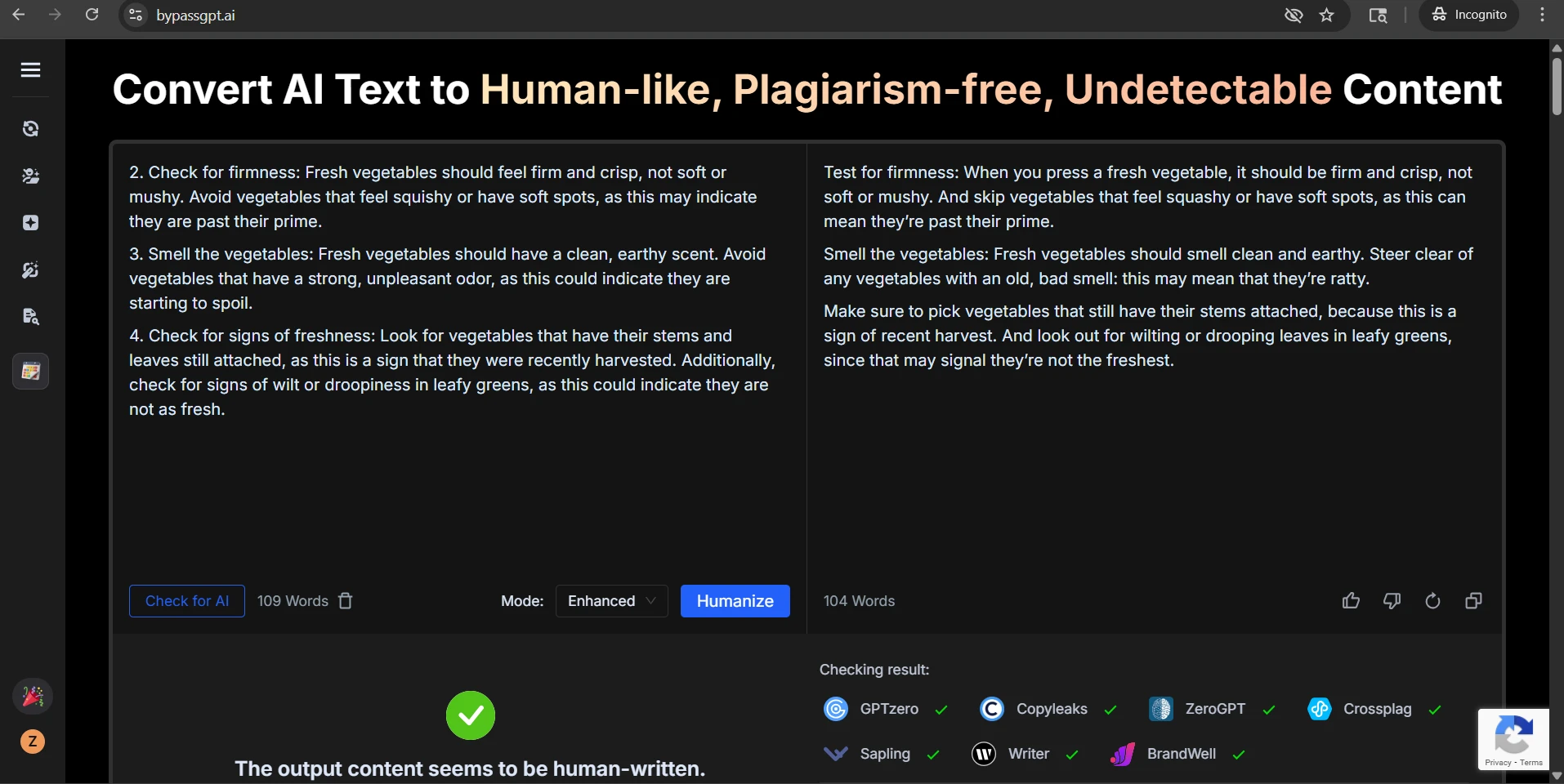

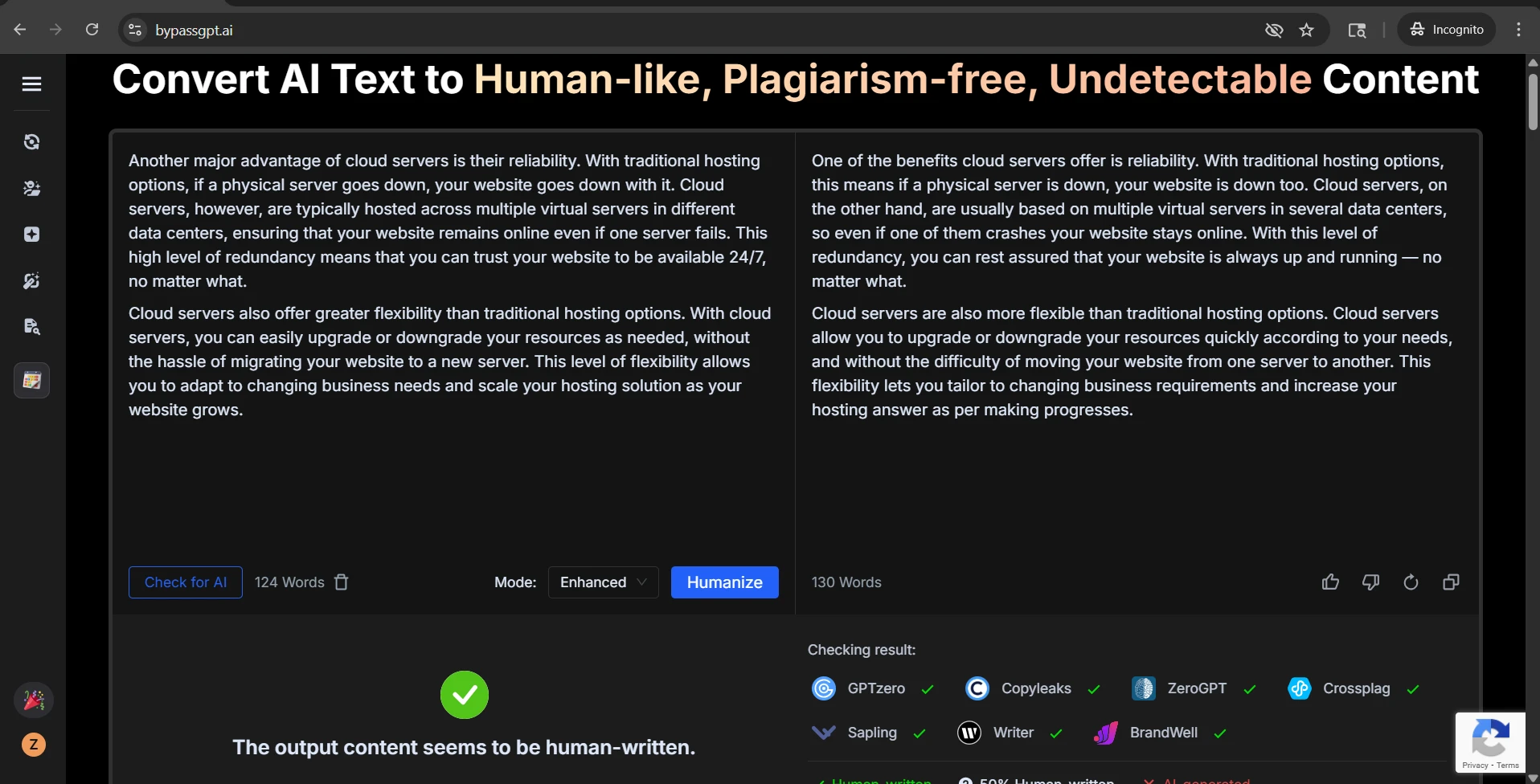

The screenshots make these problems easier to see. One rewrite turns a clean vegetable-buying checklist into looser prose, drops the list numbers, and replaces a simple idea about bad smell with the strange word "ratty." A rewrite about soundproofing introduces a random new numbered item and even slips in an unrelated, foreign-looking word. A third example, about phobias, is the most alarming of all: part of the rewritten paragraph turns into obvious nonsense, yet the sample still receives a strong human-looking score.

That creates the central tension in the whole test: the detector score and the reading experience do not always move together. A rewrite can look more human to Grammarly while becoming less useful to an actual person.

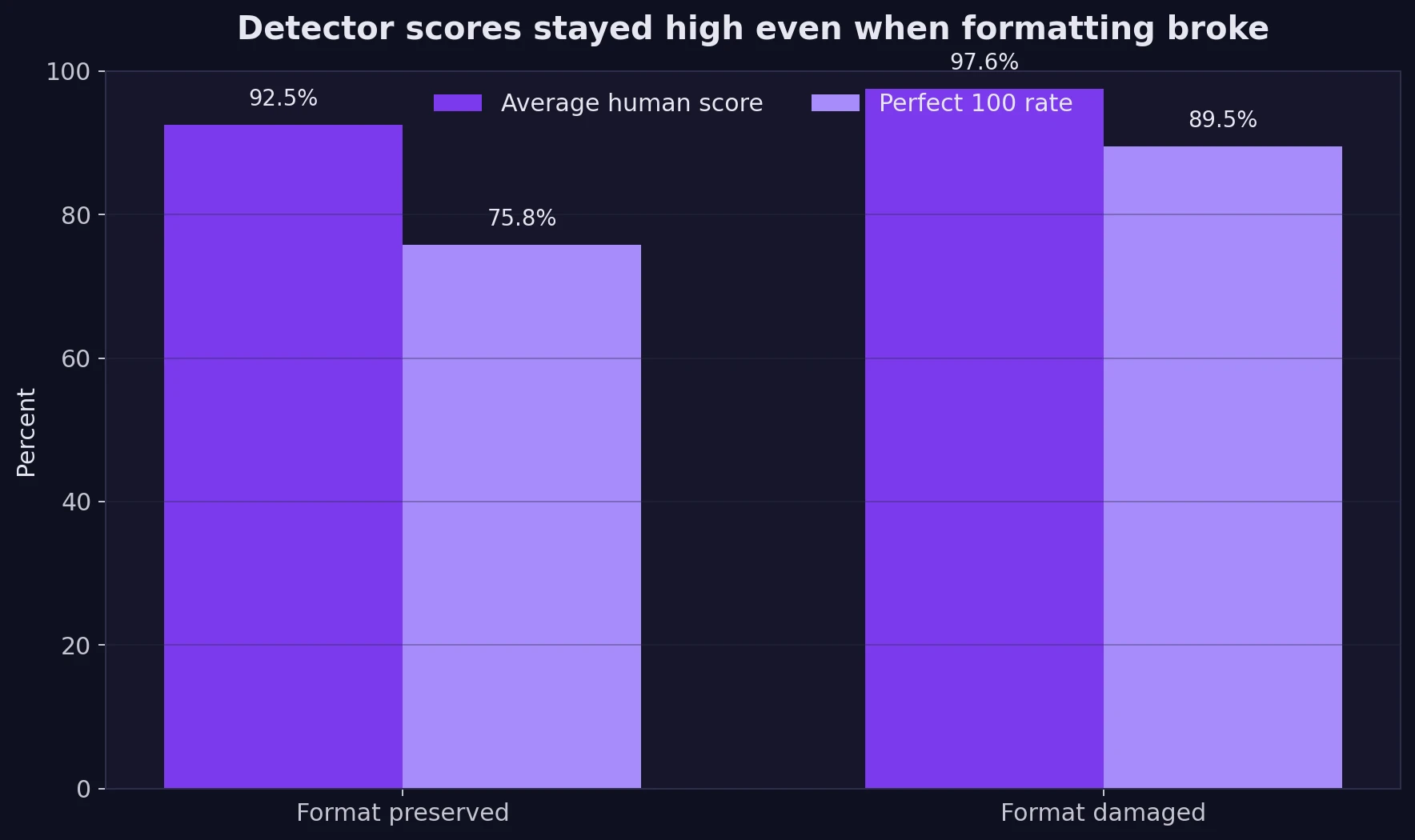

This is one of the most revealing results in the study. Samples with formatting damage still averaged a human score of 97.6, while the format-preserved group averaged 92.5. The damaged group also produced more perfect 100 scores. That does not prove messy writing is better writing. It suggests something narrower and more important: Grammarly’s detector did not consistently penalize the loss of structure.
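The comparison above is a split-and-average step. A minimal Python sketch, assuming each rewrite is stored as a record with its human score and a boolean flag for formatting damage (for example, lost numbering or headings). The `Sample` record and its field names are hypothetical, and the example records are invented for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Sample:
    human_score: float    # Grammarly human score, 0-100 (higher = more human)
    format_damaged: bool  # True if the rewrite lost numbering or headings


def group_averages(samples: list) -> dict:
    """Average human score and perfect-score count for damaged vs. preserved rewrites.

    Assumes both groups are non-empty; statistics.mean raises on an empty list.
    """
    damaged = [s.human_score for s in samples if s.format_damaged]
    preserved = [s.human_score for s in samples if not s.format_damaged]
    return {
        "damaged": round(mean(damaged), 1),
        "preserved": round(mean(preserved), 1),
        "damaged_perfect": sum(1 for x in damaged if x == 100),
        "preserved_perfect": sum(1 for x in preserved if x == 100),
    }


# Invented records for illustration only.
samples = [Sample(100, True), Sample(95, True), Sample(100, False),
           Sample(80, False), Sample(70, False)]
print(group_averages(samples))
```

A gap between group averages like this shows correlation only. It cannot tell you whether the damage itself raised the score or whether both stem from heavier rewriting.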

So, Does BypassGPT Actually Work?

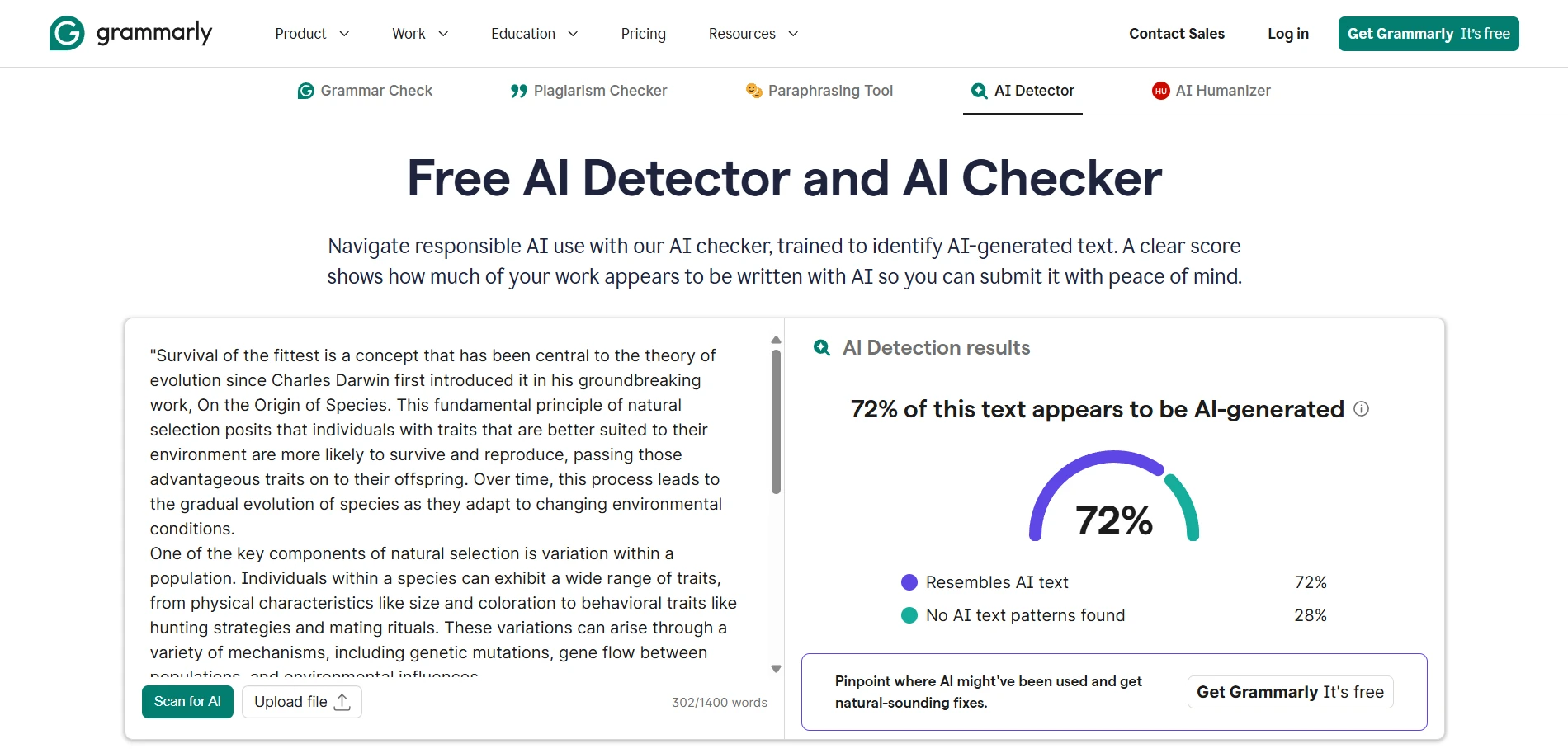

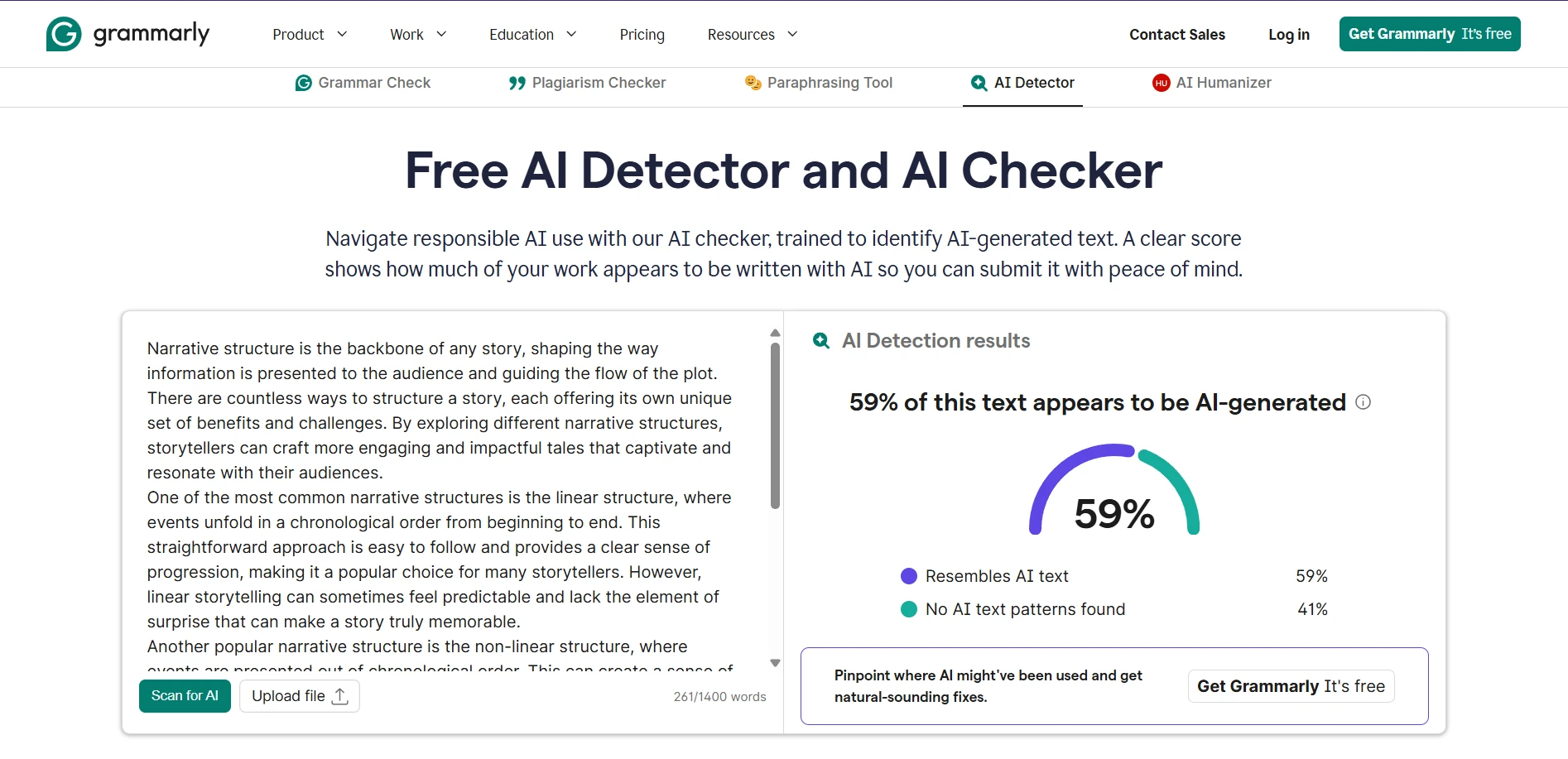

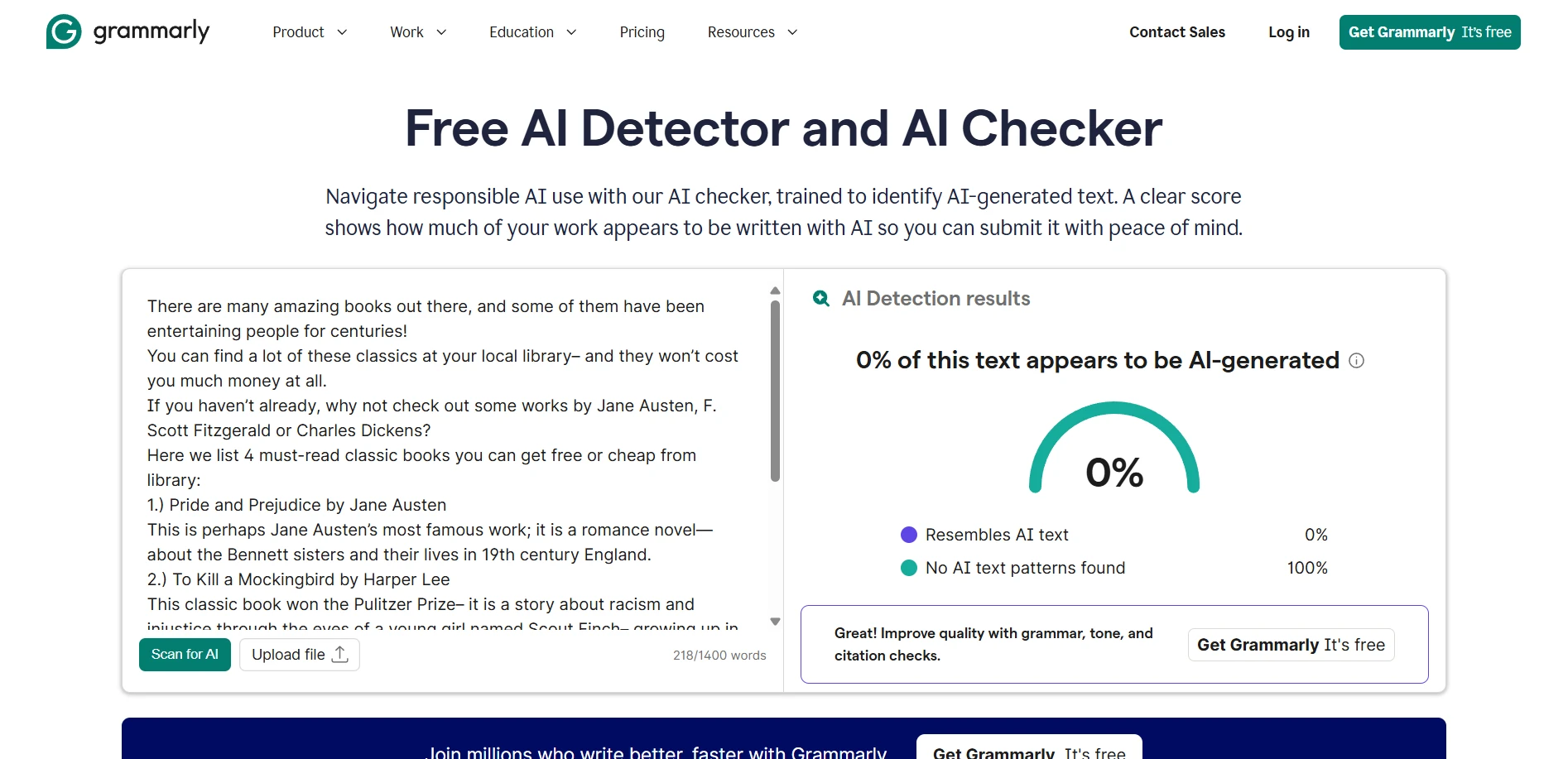

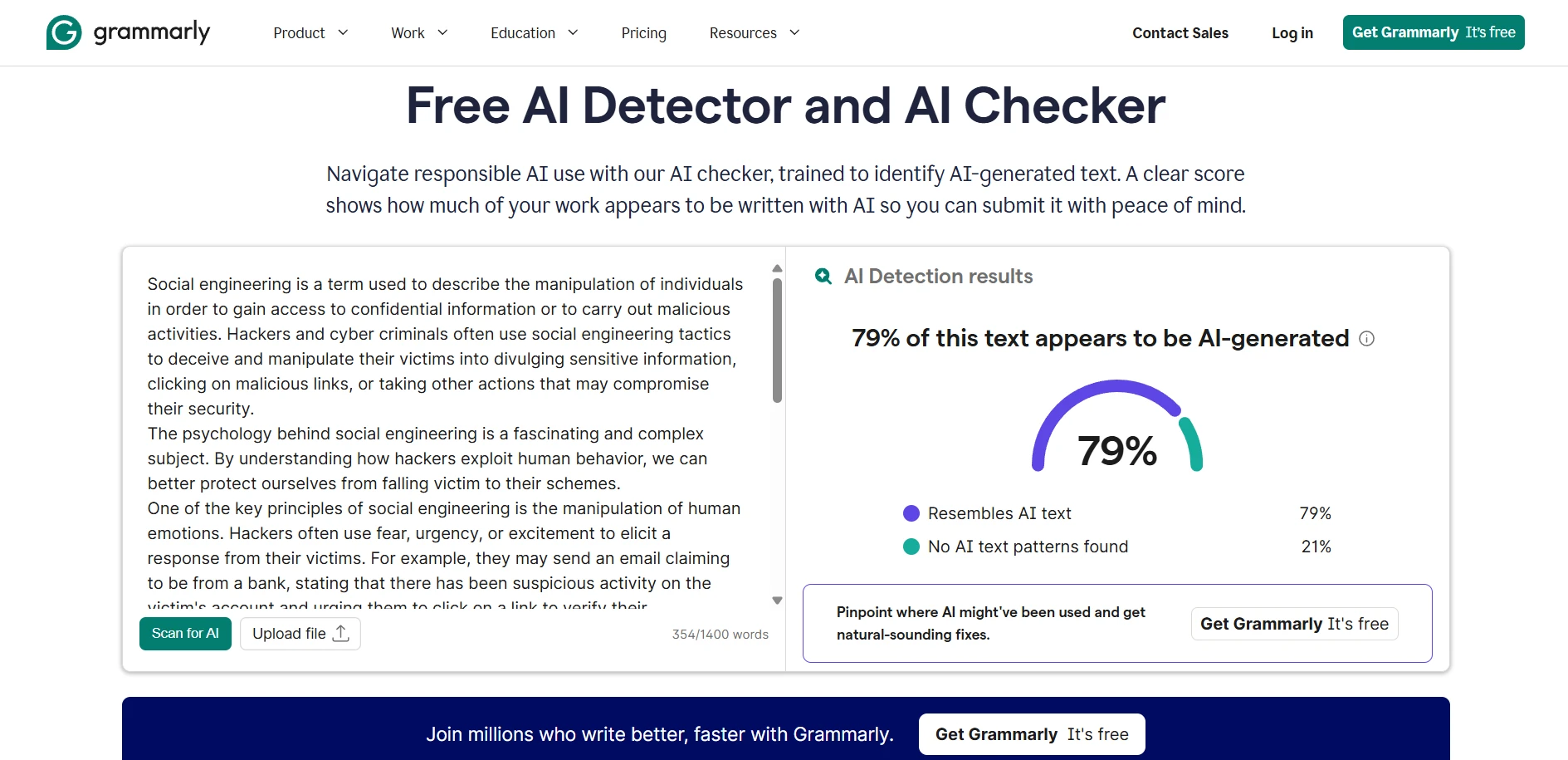

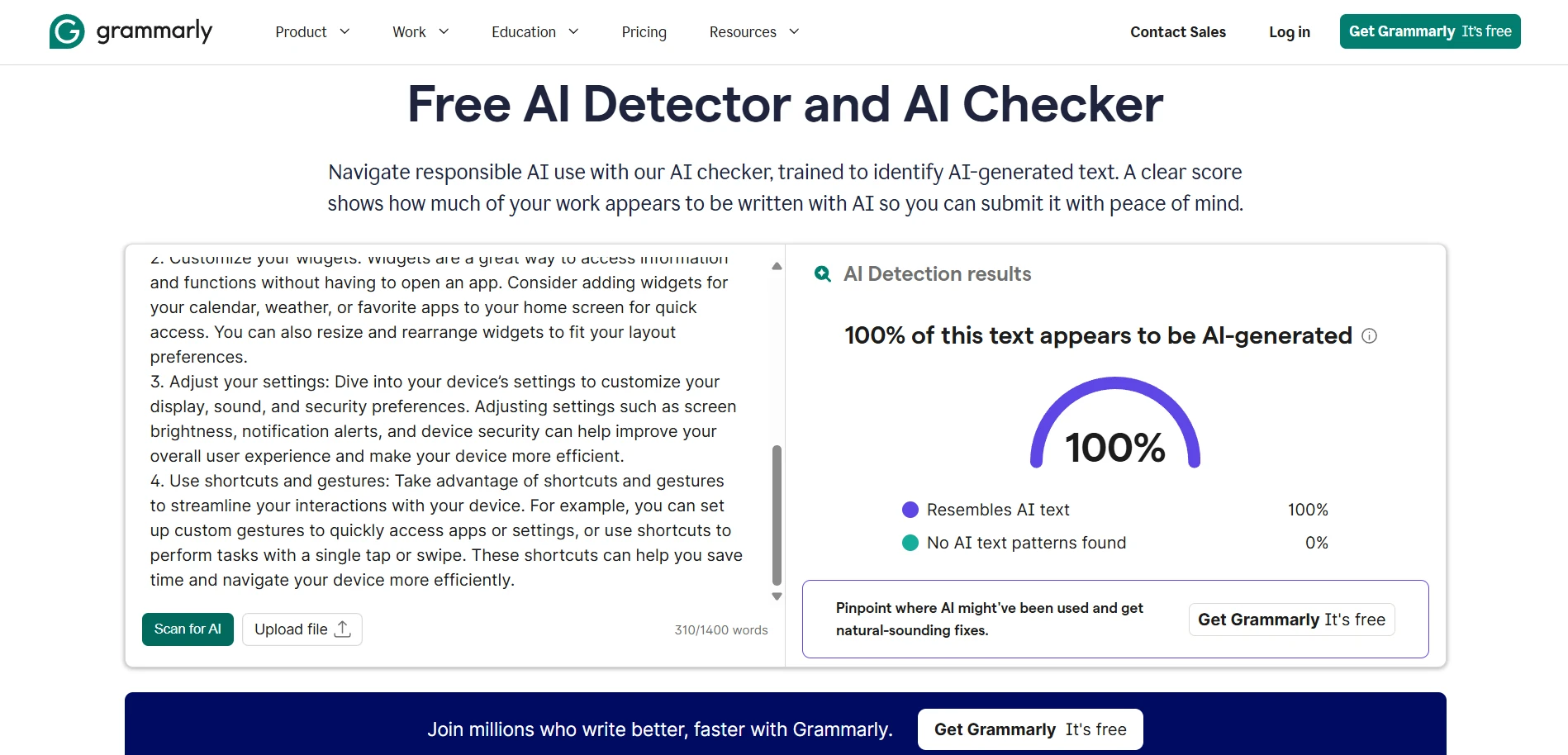

If the question is simply whether it can raise the odds of passing Grammarly’s AI detector, the answer from this dataset is mostly yes. An average score of 94.4, a median of 100, and 81 perfect scores out of 100 are hard to dismiss. The Grammarly screenshots above tell the same story from another angle: some rewrites were marked 0% AI-generated, while others still came back as 59%, 72%, 79%, or even 100% AI-generated. In other words, the tool can work very well, but it is not automatic and not flawless.

The Real Takeaway for Students

BypassGPT looks effective if your only scoreboard is Grammarly’s detector. On that front, the numbers are strong. But the deeper review shows a trade-off: the tool often wins detector points by changing the shape of the writing, not necessarily by improving it. Lists get flattened, headings weaken, some facts shift, and a few outputs become outright awkward.

For students, that means the smartest approach is not blind trust. A rewritten draft still needs a human check for structure, meaning, and clarity before it is submitted anywhere. The lesson from these 100 samples is simple: detector success and writing quality are not the same thing. If you treat them as the same, the score may look great while the writing quietly gets worse.

![[STUDY] Can BypassGPT Outsmart Grammarly’s AI Detector?](/static/images/bypassgptai_vs_grammarly_ai_detectorpng.webp)