When a Detector Judges Your Writing: Grammarly vs ZeroGPT on 160 Samples

Imagine turning in a paper you wrote yourself… and a tool insists it “looks AI.” That one number can affect a grade, a scholarship, or your confidence. So I tested two popular detectors—Grammarly’s AI detector and ZeroGPT—across 160 text samples to see how they behave when the stakes are real.

What I Measured (and What the Score Actually Means)

Each tool outputs a human score between 0 and 1. Higher means “more likely written by a human.” For readability, you can think of 0.85 as roughly 85% human-like.

The dataset includes 78 human-written samples and 82 AI-generated samples. Every sample went through both tools, and I recorded their scores.

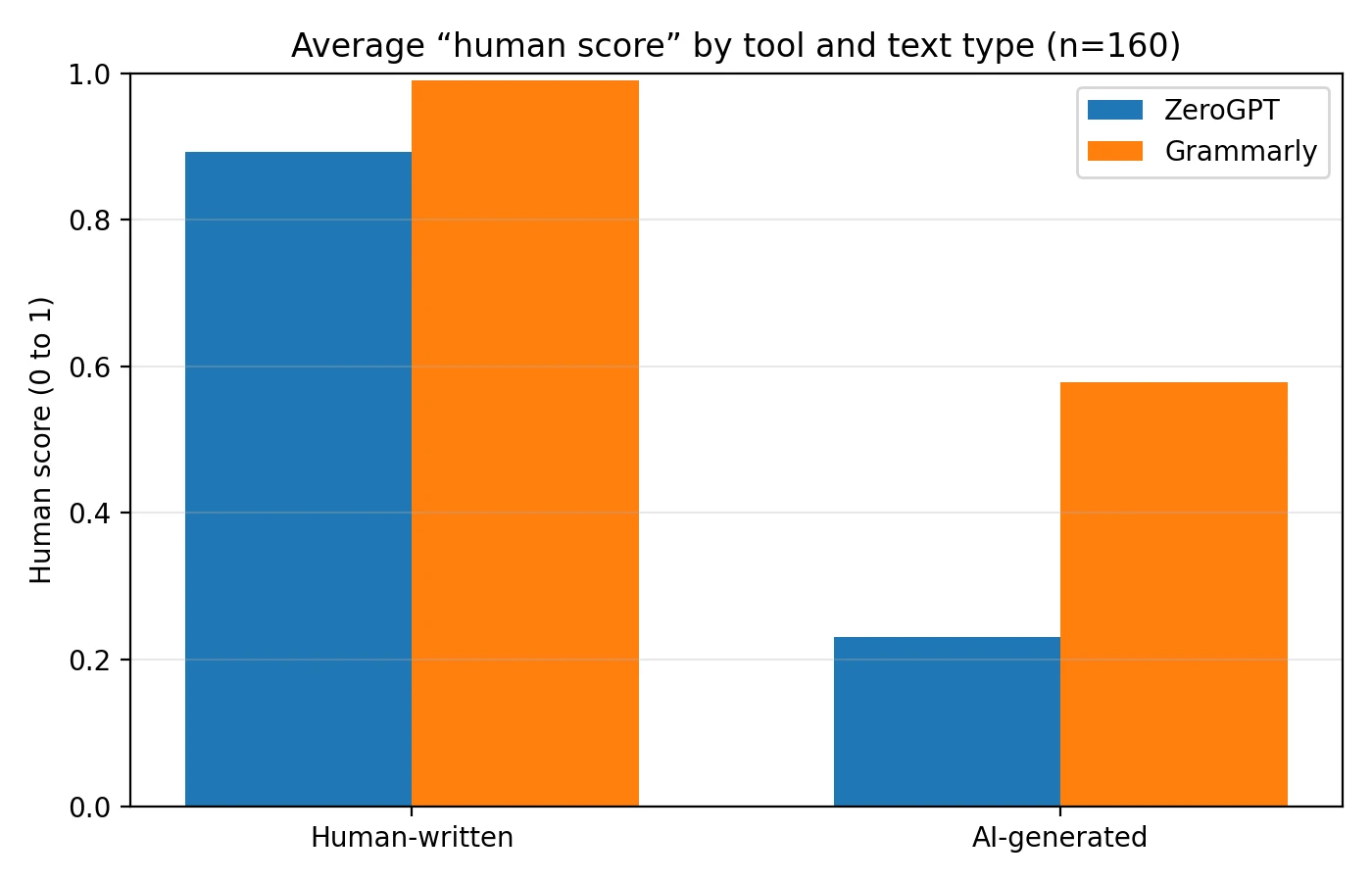

Quick scoreboard (higher is better on Human text, lower is better on AI text)

- Human-written text: Grammarly averaged 98.96% human, while ZeroGPT averaged 89.26% human.

- AI-generated text: ZeroGPT averaged 23.11% human (good: it’s skeptical), while Grammarly averaged 57.83% human (risky: it often “trusts” AI).
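For anyone who wants to reproduce averages like these on their own samples, here is a minimal Python sketch. The record layout, the function names, and the four sample rows are assumptions made up for illustration; they are not the study data.

```python
from statistics import mean

# Hypothetical records: (true_label, grammarly_score, zerogpt_score).
# These values are invented for illustration; they are not the study data.
samples = [
    ("human", 0.99, 0.92),
    ("human", 0.97, 0.80),
    ("ai", 0.60, 0.20),
    ("ai", 0.40, 0.30),
]

def average_human_score(records, label, tool_index):
    """Mean human score (0 to 1) a tool gave to samples with the given true label."""
    return mean(r[tool_index] for r in records if r[0] == label)

def as_percent(score):
    """Format a 0-1 human score as a percentage string."""
    return f"{score * 100:.2f}%"

# tool_index 1 is Grammarly, 2 is ZeroGPT in this made-up layout.
print(as_percent(average_human_score(samples, "human", 1)))
print(as_percent(average_human_score(samples, "ai", 2)))
```

Grouping by the true label first is what makes the scoreboard readable: a single overall average would blend human and AI samples and hide the difference between the tools.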

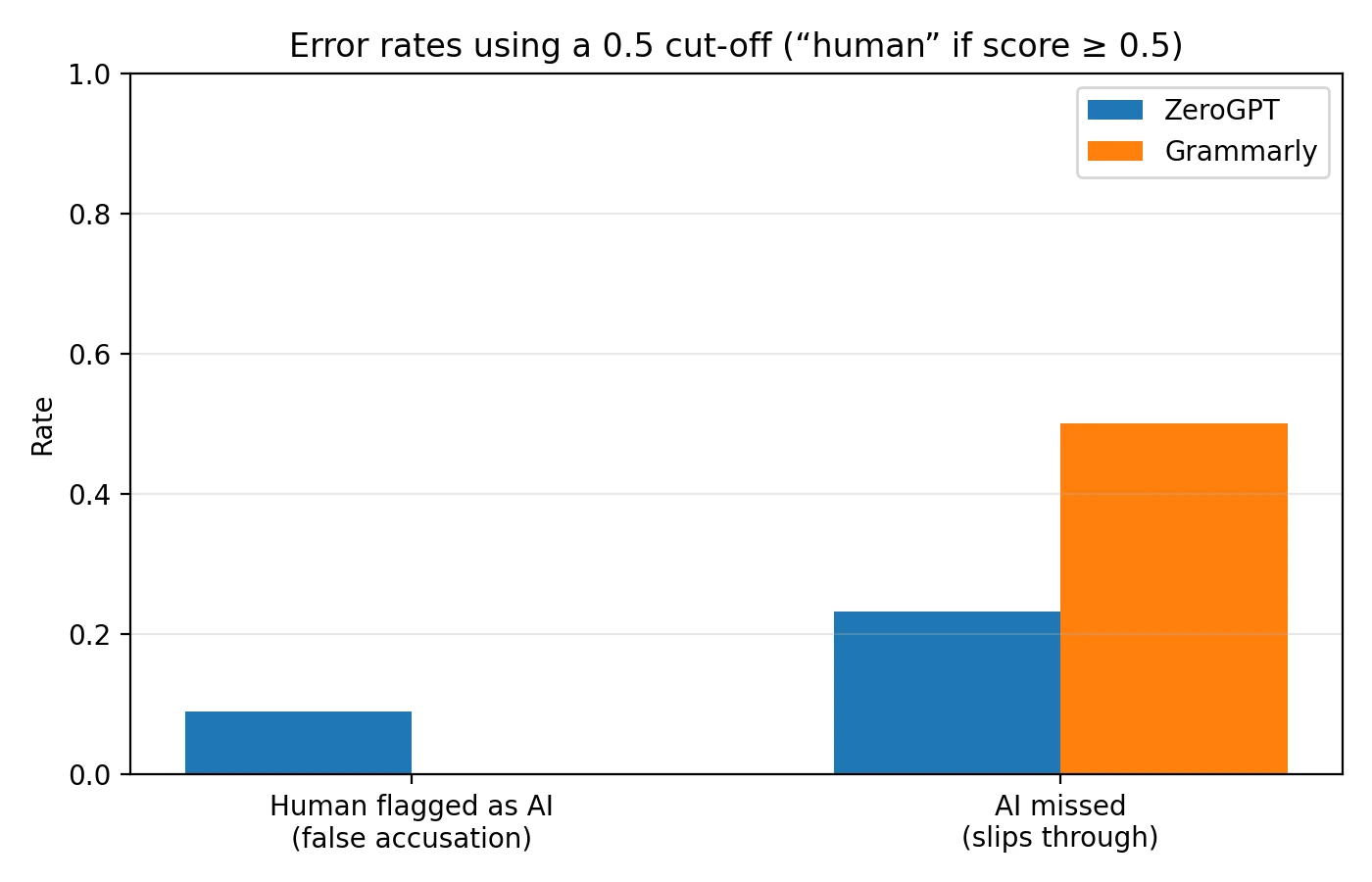

The Big Question for Students: “Will it falsely accuse me?”

Detector scores are often treated as a yes/no decision. To simulate that, I used a simple cut-off: if the score is ≥ 0.5, the tool says “human”; if it’s below 0.5, it says “AI.”

Two mistakes can happen: false accusation (a human piece gets flagged as AI), and AI slip-through (AI text gets treated as human).
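The cut-off rule and the two kinds of mistakes can be sketched in a few lines of Python. The function names and the four example scores below are hypothetical, chosen only to show one false accusation and one slip-through.

```python
CUTOFF = 0.5  # the cut-off described above

def verdict(human_score, cutoff=CUTOFF):
    """Return 'human' when the score is at or above the cut-off, else 'ai'."""
    return "human" if human_score >= cutoff else "ai"

def count_errors(labels, scores, cutoff=CUTOFF):
    """Count (false accusations, AI slip-throughs) for one tool."""
    false_accusations = 0  # human text flagged as AI
    slip_throughs = 0      # AI text treated as human
    for label, score in zip(labels, scores):
        call = verdict(score, cutoff)
        if label == "human" and call == "ai":
            false_accusations += 1
        elif label == "ai" and call == "human":
            slip_throughs += 1
    return false_accusations, slip_throughs

# Made-up example: the second sample is a false accusation,
# and the fourth (an AI text scoring exactly 0.5) slips through.
labels = ["human", "human", "ai", "ai"]
scores = [0.91, 0.42, 0.12, 0.50]
print(count_errors(labels, scores))  # (1, 1)
```

Note that a score of exactly 0.5 counts as “human” under this rule, so the choice of `>=` versus `>` matters for borderline samples.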

What happened with the 0.5 cut-off

- False accusations (Human → flagged AI): ZeroGPT did this 7/78 times (9.0%). Grammarly did it 0/78 times (0.0%).

- AI slip-through (AI → treated Human): ZeroGPT missed 19/82 AI samples (23.2%). Grammarly missed 41/82 AI samples (50.0%).

So the trade-off is clear: Grammarly is “gentle” on human writers but lets half of the AI samples through at this cut-off. ZeroGPT catches more AI but is more likely to wrongly doubt a real student.
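The percentages above follow directly from the counts. A short Python check, using only the counts reported in this section (the helper name `rate_percent` is just an illustrative choice):

```python
def rate_percent(errors, total):
    """Error rate as a percentage, rounded to one decimal place."""
    return round(100 * errors / total, 1)

# Counts reported above: (errors, total samples of that type).
reported = {
    "ZeroGPT false accusations": (7, 78),
    "Grammarly false accusations": (0, 78),
    "ZeroGPT AI slip-through": (19, 82),
    "Grammarly AI slip-through": (41, 82),
}

for name, (errors, total) in reported.items():
    print(f"{name}: {errors}/{total} = {rate_percent(errors, total)}%")
```

The denominators differ on purpose: false accusations are measured against the 78 human samples, while slip-throughs are measured against the 82 AI samples.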

Chart 1: The Average Scores Tell a Story

Averages are not everything, but they’re a helpful first clue. This bar chart compares the average score each tool gave to human-written vs AI-generated text.

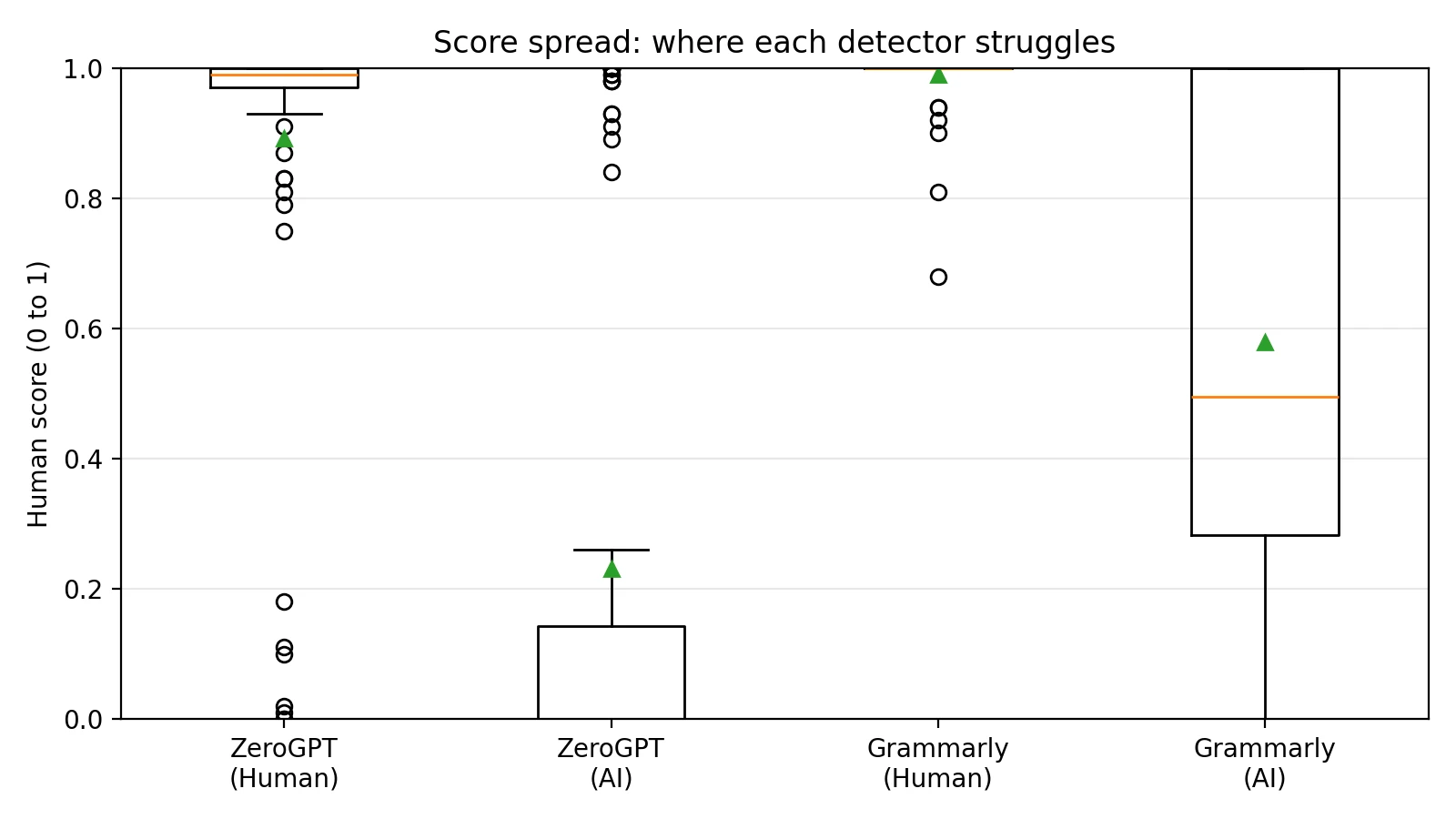

Chart 2: Where the Scores Spread Out (and Where They Overlap)

To see consistency, I used a box plot (a simple chart that shows the “middle chunk” of scores and how far they spread). When boxes overlap a lot, the tool has a harder time separating human and AI.

Notice the pattern: Grammarly’s scores are packed very close to 1.0 for human text, which is great, but it also gives many AI samples surprisingly high scores. ZeroGPT separates the groups more, but a few of its human samples drop low.
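The “middle chunk” in a box plot is the range between the first and third quartiles. Here is a rough Python sketch of how that box, and the overlap between two boxes, could be computed. The score lists are invented for illustration, and `statistics.quantiles` uses its default quartile method, which may differ slightly from whatever a charting tool uses.

```python
from statistics import quantiles

def box_summary(scores):
    """First quartile, median, third quartile, and box width for one group."""
    q1, median, q3 = quantiles(scores, n=4)
    return {"q1": q1, "median": median, "q3": q3, "iqr": q3 - q1}

def boxes_overlap(a, b):
    """True when the middle-50% ranges of two groups overlap."""
    return a["q1"] <= b["q3"] and b["q1"] <= a["q3"]

# Hypothetical scores from one tool, split by true label.
human_scores = [0.95, 0.97, 0.98, 0.99, 1.0]
ai_scores = [0.1, 0.2, 0.3, 0.4, 0.5]

human_box = box_summary(human_scores)
ai_box = box_summary(ai_scores)
print(human_box, ai_box, boxes_overlap(human_box, ai_box))
```

In this made-up example the boxes sit far apart, so the overlap check returns `False`. Boxes that overlap would signal a tool that struggles to separate the two groups.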

Chart 3: Error Rates (The Part Students Actually Feel)

If you only remember one chart, make it this one. It turns the scores into “real-world mistakes” at the 0.5 cut-off.

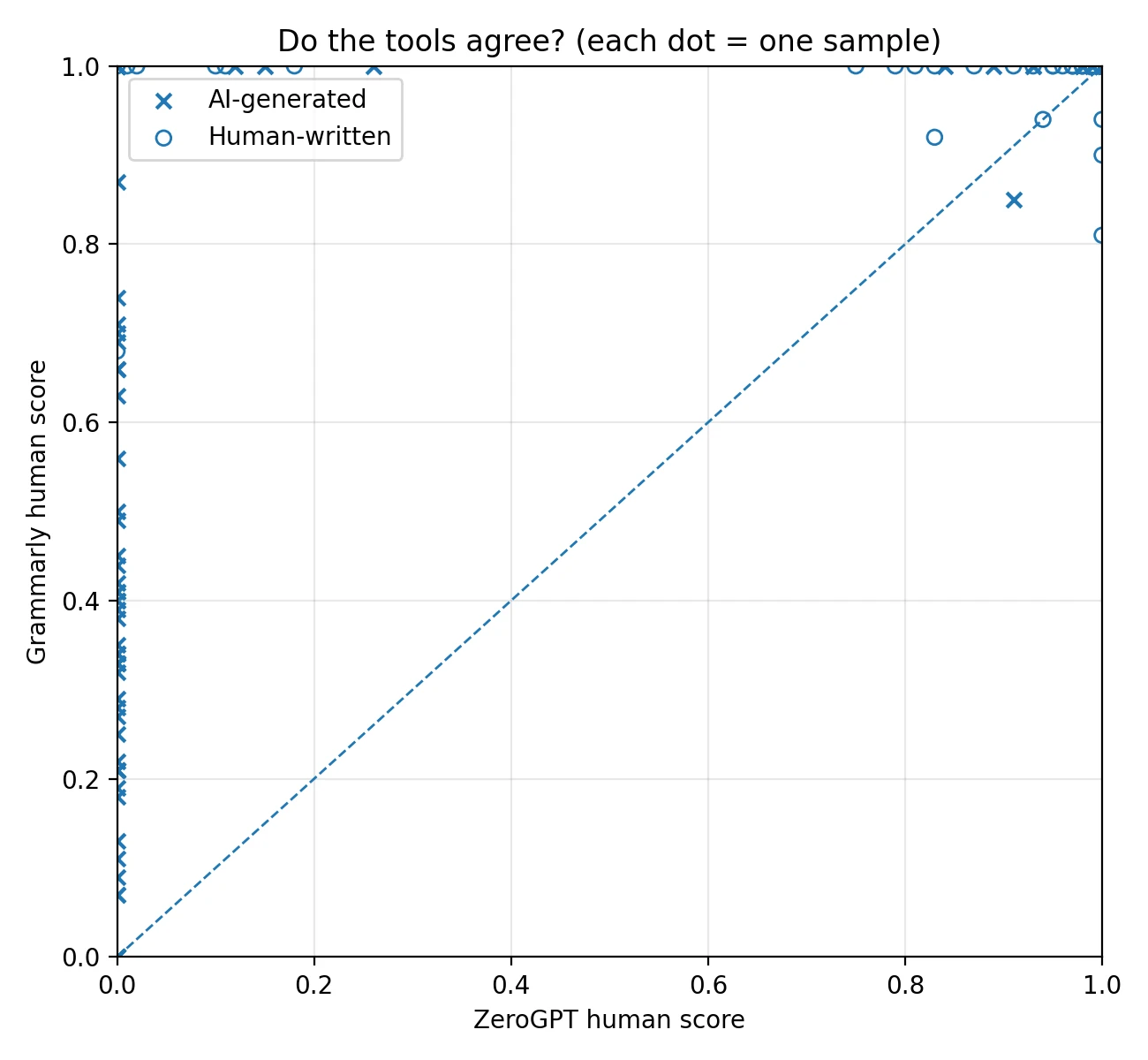

Chart 4: Do the Tools Agree With Each Other?

This scatter plot shows each sample as a dot: the x-axis is ZeroGPT’s score and the y-axis is Grammarly’s score. If both tools judged a text similarly, dots would cluster near the diagonal line.
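Agreement can also be put into numbers. The sketch below is one possible way to do it, not something the charts above were built from: it reports how often both tools land on the same side of a 0.5 cut-off, and how far apart their scores are on average. The function names and the four score pairs are hypothetical.

```python
def same_verdict_rate(scores_a, scores_b, cutoff=0.5):
    """Share of samples where both tools fall on the same side of the cut-off."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty score lists of equal length")
    agree = sum((a >= cutoff) == (b >= cutoff) for a, b in zip(scores_a, scores_b))
    return agree / len(scores_a)

def mean_gap(scores_a, scores_b):
    """Average absolute difference between the two tools' scores.

    0 means every dot sits exactly on the diagonal of the scatter plot.
    """
    return sum(abs(a - b) for a, b in zip(scores_a, scores_b)) / len(scores_a)

# Made-up paired scores for four samples.
zerogpt = [0.9, 0.2, 0.8, 0.1]
grammarly = [1.0, 0.7, 0.9, 0.3]

print(same_verdict_rate(zerogpt, grammarly))  # 0.75
print(round(mean_gap(zerogpt, grammarly), 3))  # 0.225
```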

So Which Detector Is “Better”?

It depends on the risk you care about.

If your priority is protecting students from false accusations: Grammarly was the safer tool in this dataset (0% false accusations at the 0.5 cut-off). But the downside is huge: it treated half of the AI samples as “human,” which makes it weak as an enforcement tool.

If your priority is catching AI text more often: ZeroGPT was more skeptical of AI overall and missed fewer AI samples. However, it still falsely flagged about 9.0% of genuine human samples—meaning real students can get dragged into explaining themselves.

Optional nerd note (kept simple): I also computed a single “separation score” called AUC. Think of it as: “Across every possible cut-off, how well can the tool rank human texts above AI texts?” ZeroGPT scored 0.871 vs Grammarly’s 0.808 (higher is better).
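For the curious, AUC can be computed by comparing every human sample against every AI sample and counting how often the human one scores higher, with ties counting as half. The Python sketch below illustrates that definition on made-up scores. It is not necessarily how the figures above were produced, and the function name is an assumption.

```python
def auc(human_scores, ai_scores):
    """Chance that a random human sample outscores a random AI sample.

    Ties count as half a win. This pairwise definition gives the same
    value as the area under the ROC curve.
    """
    if not human_scores or not ai_scores:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for h in human_scores:
        for a in ai_scores:
            if h > a:
                wins += 1.0
            elif h == a:
                wins += 0.5
    return wins / (len(human_scores) * len(ai_scores))

# Hypothetical scores, not taken from the 160-sample dataset.
human = [0.9, 0.8, 0.4]
ai = [0.7, 0.4, 0.1]
print(round(auc(human, ai), 3))  # 0.833
```

A value of 1.0 would mean every human text outscored every AI text, and 0.5 would mean the tool ranks them no better than a coin flip.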

What Students Should Do If a Detector Is Used Against Them

Detectors are not proof. If you want protection, keep evidence of your writing process:

- Save drafts (Google Docs version history counts).

- Keep your outline and sources.

- Write a short reflection: what you changed and why.

- If you used tools like Grammarly for grammar fixes, note that clearly.

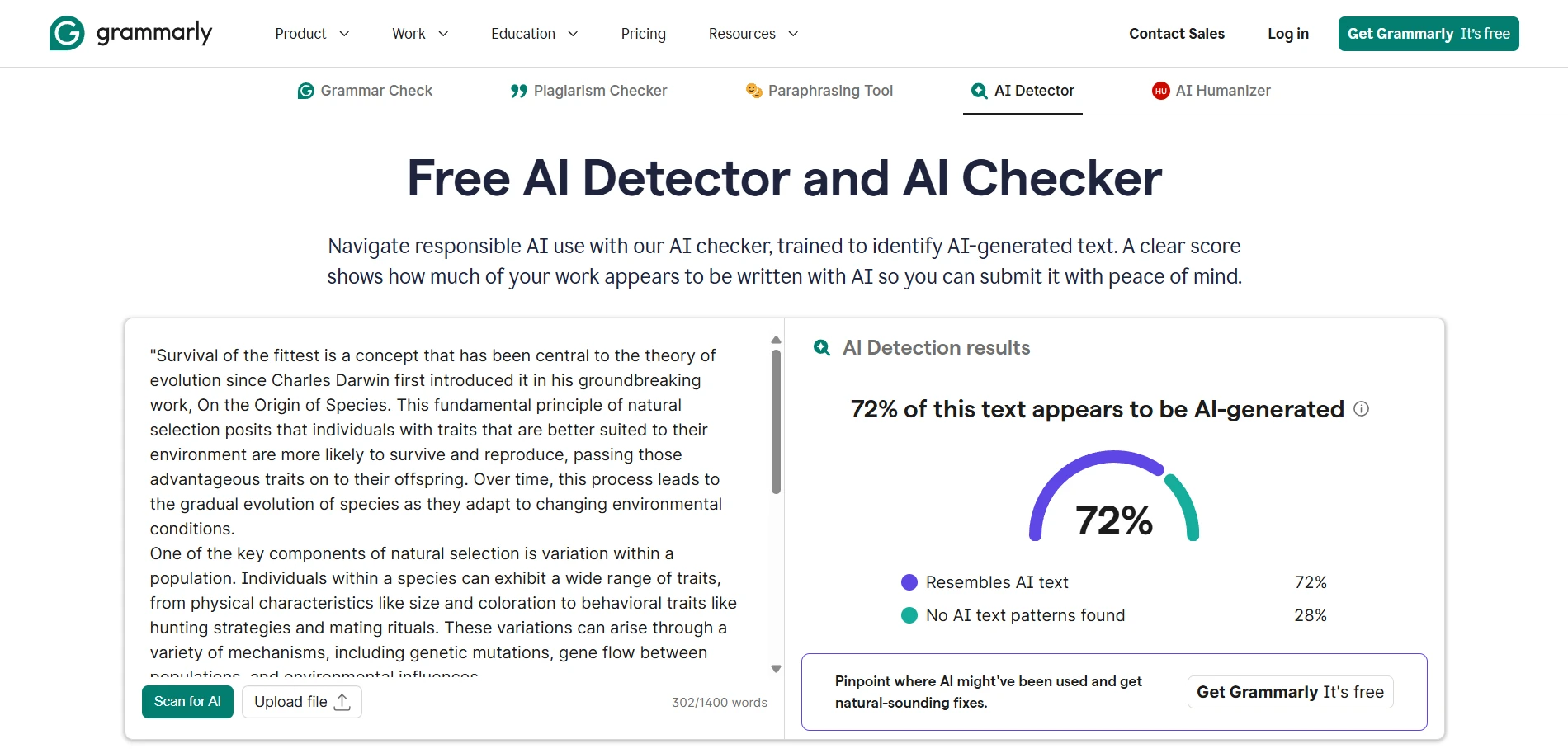

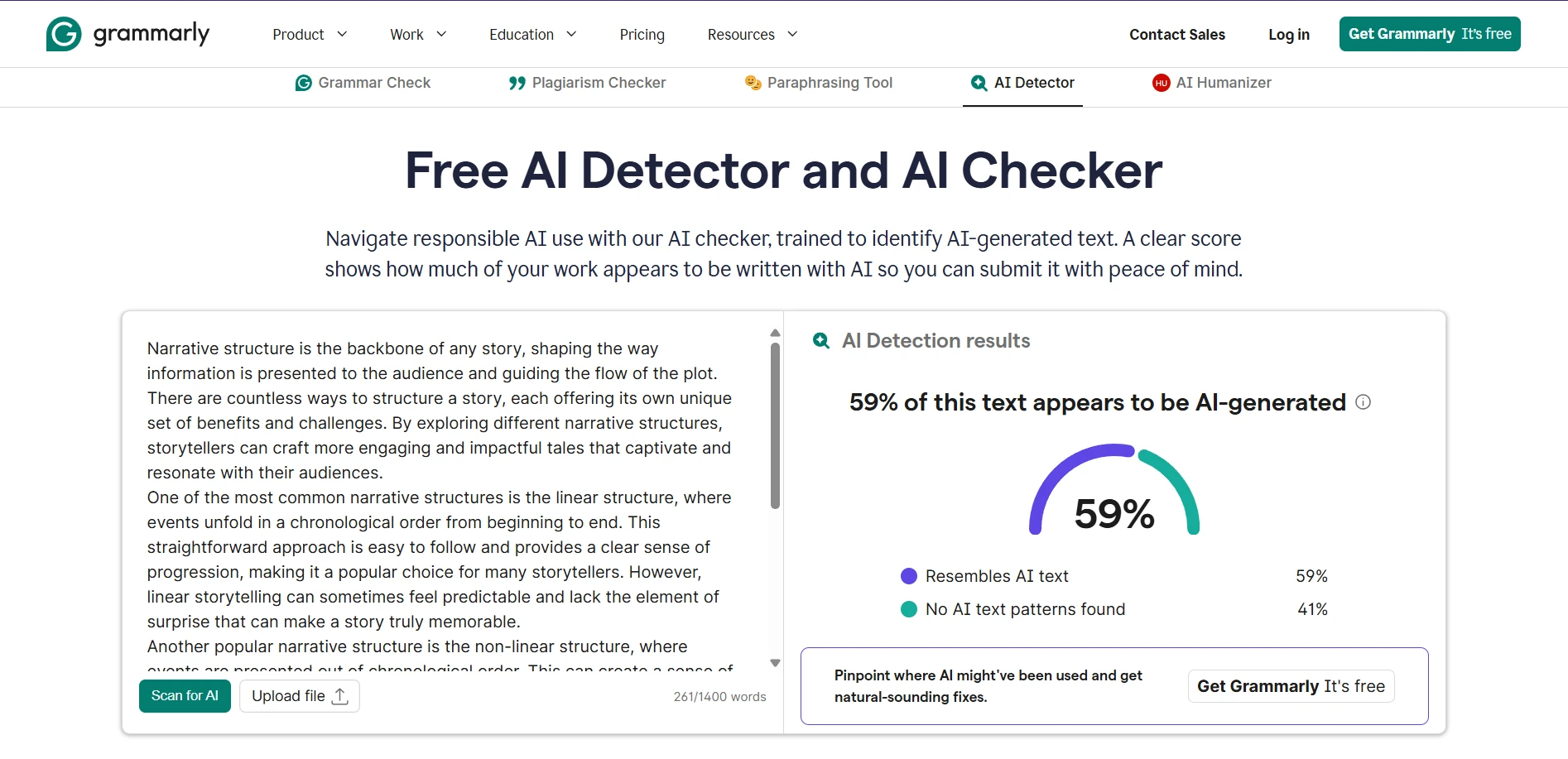

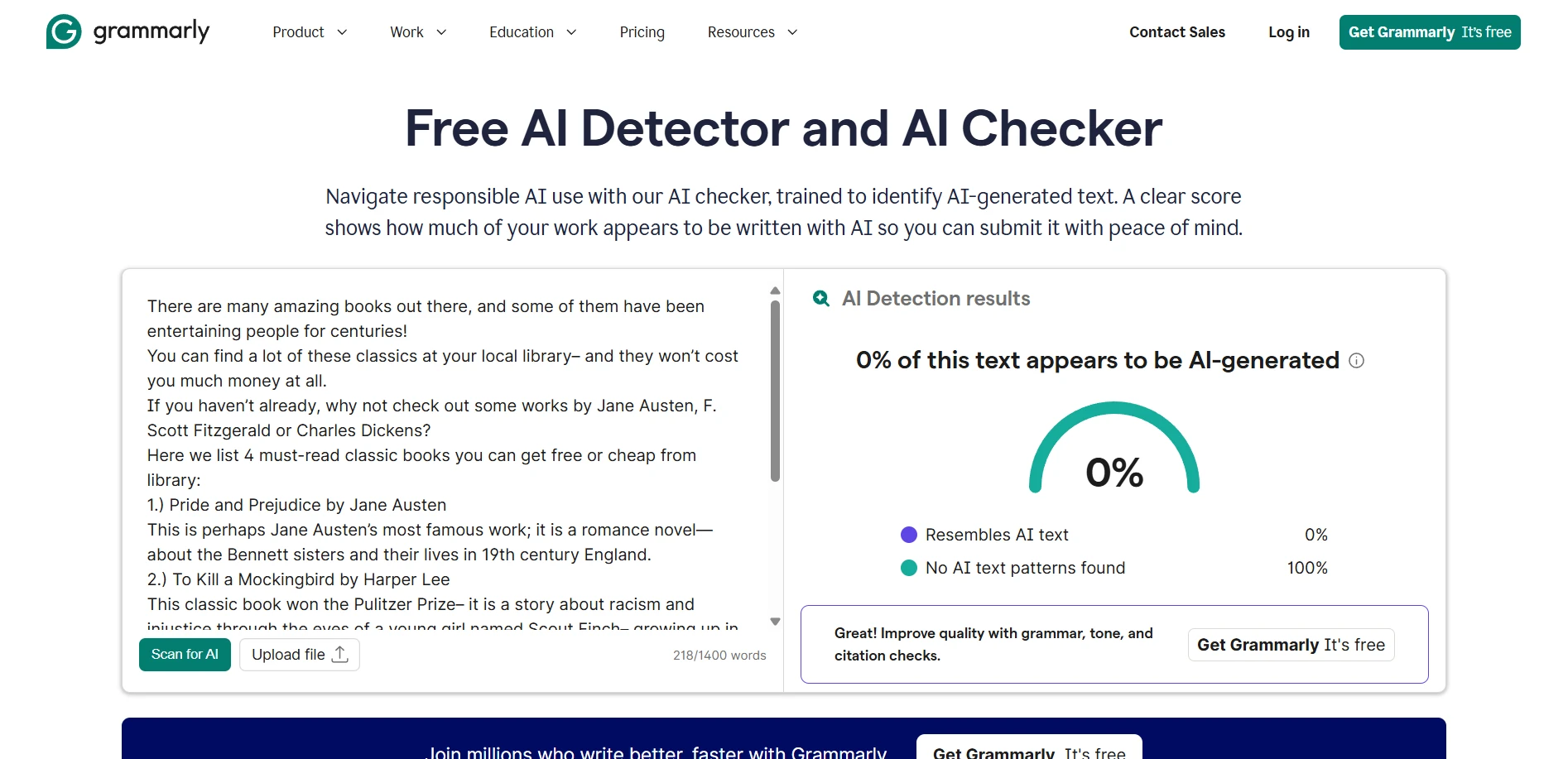

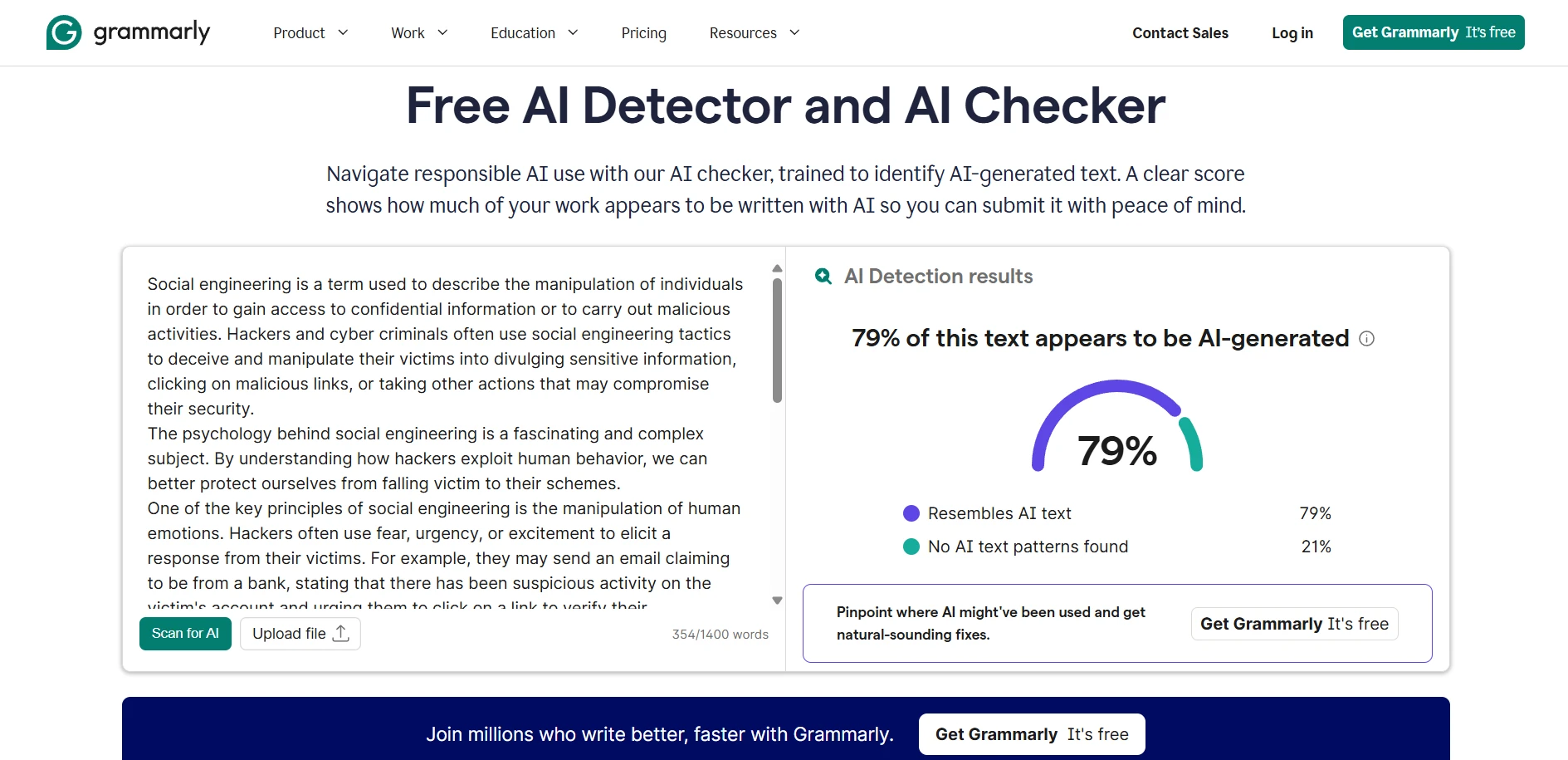

Deep Dive Screenshots: What the Tools Look Like

Grammarly AI Detector Examples

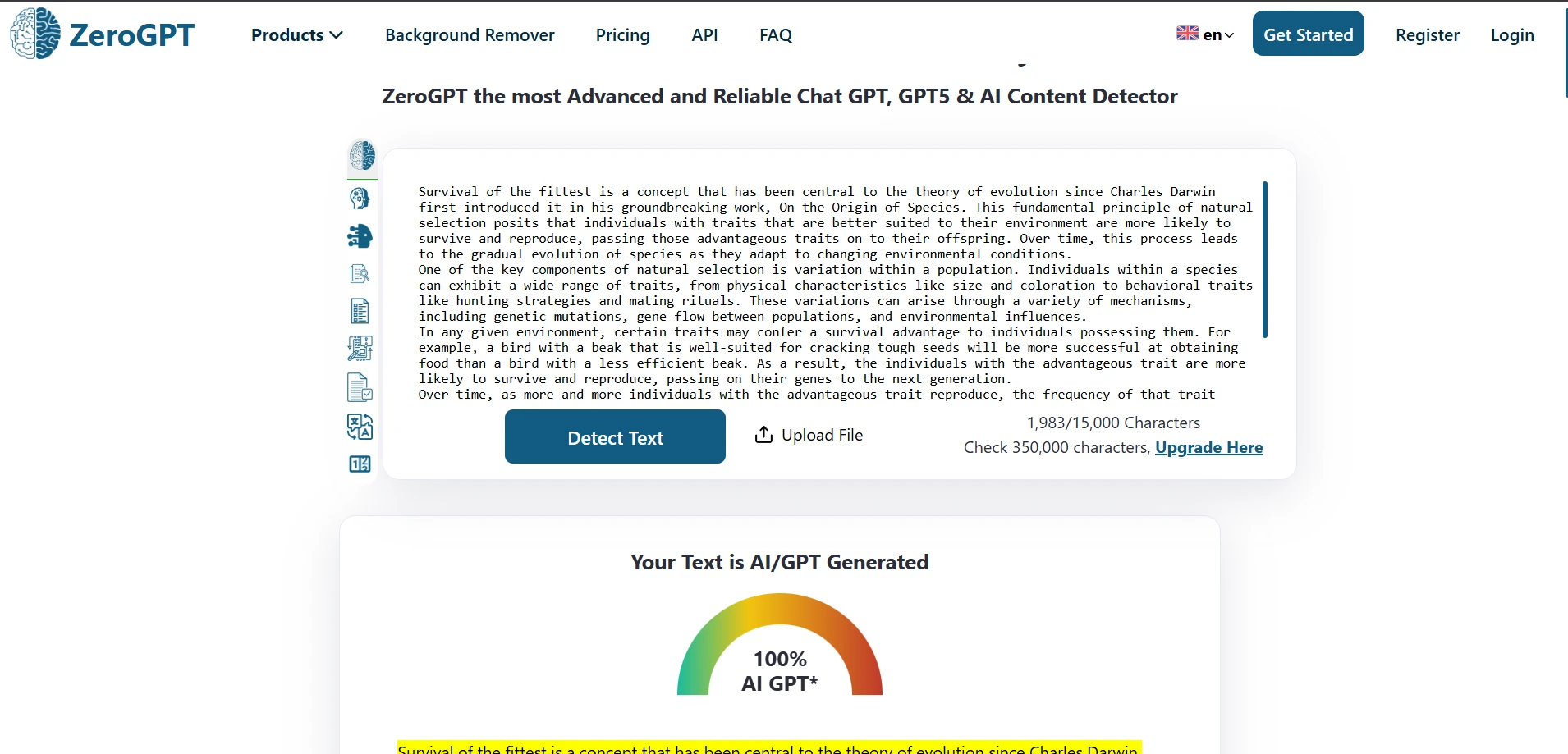

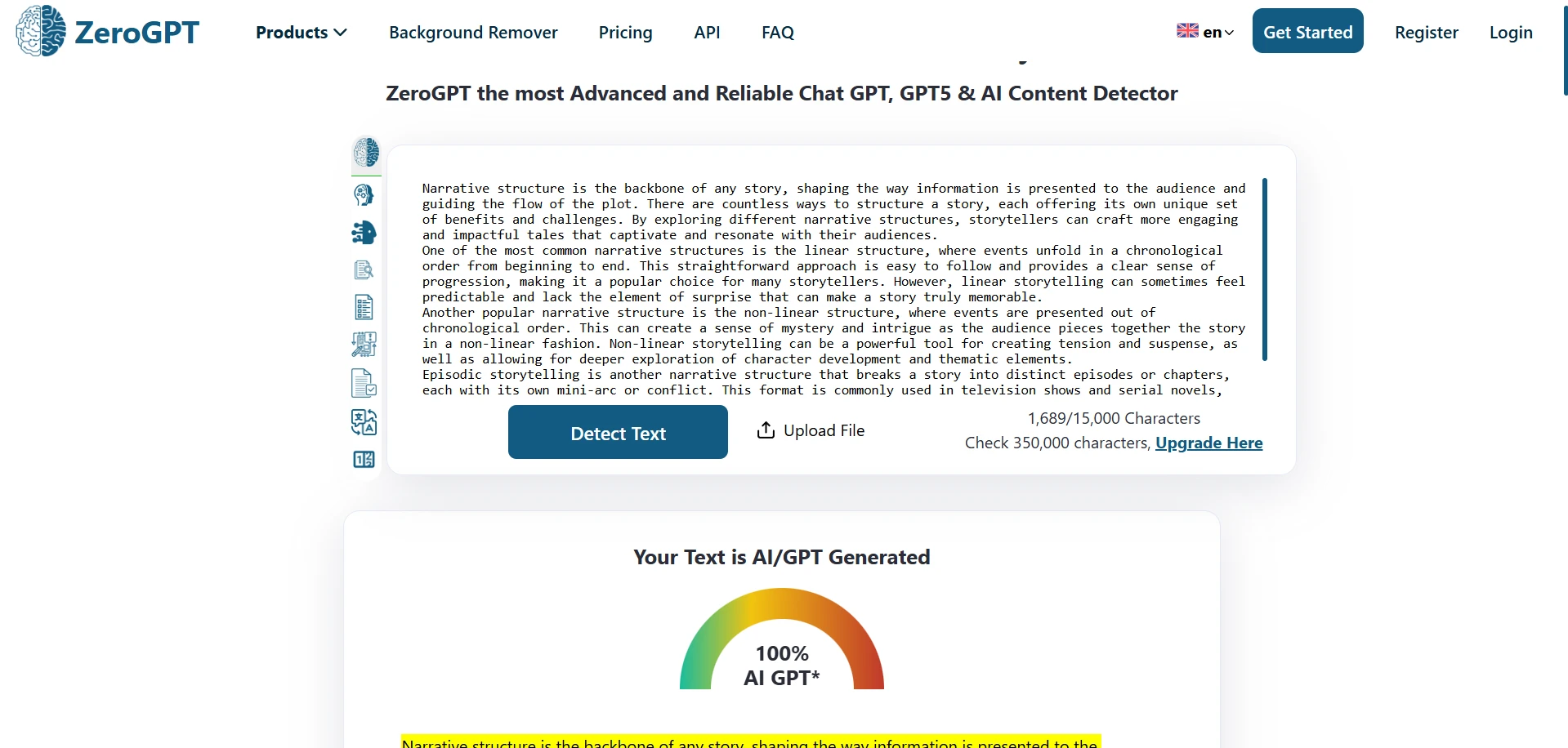

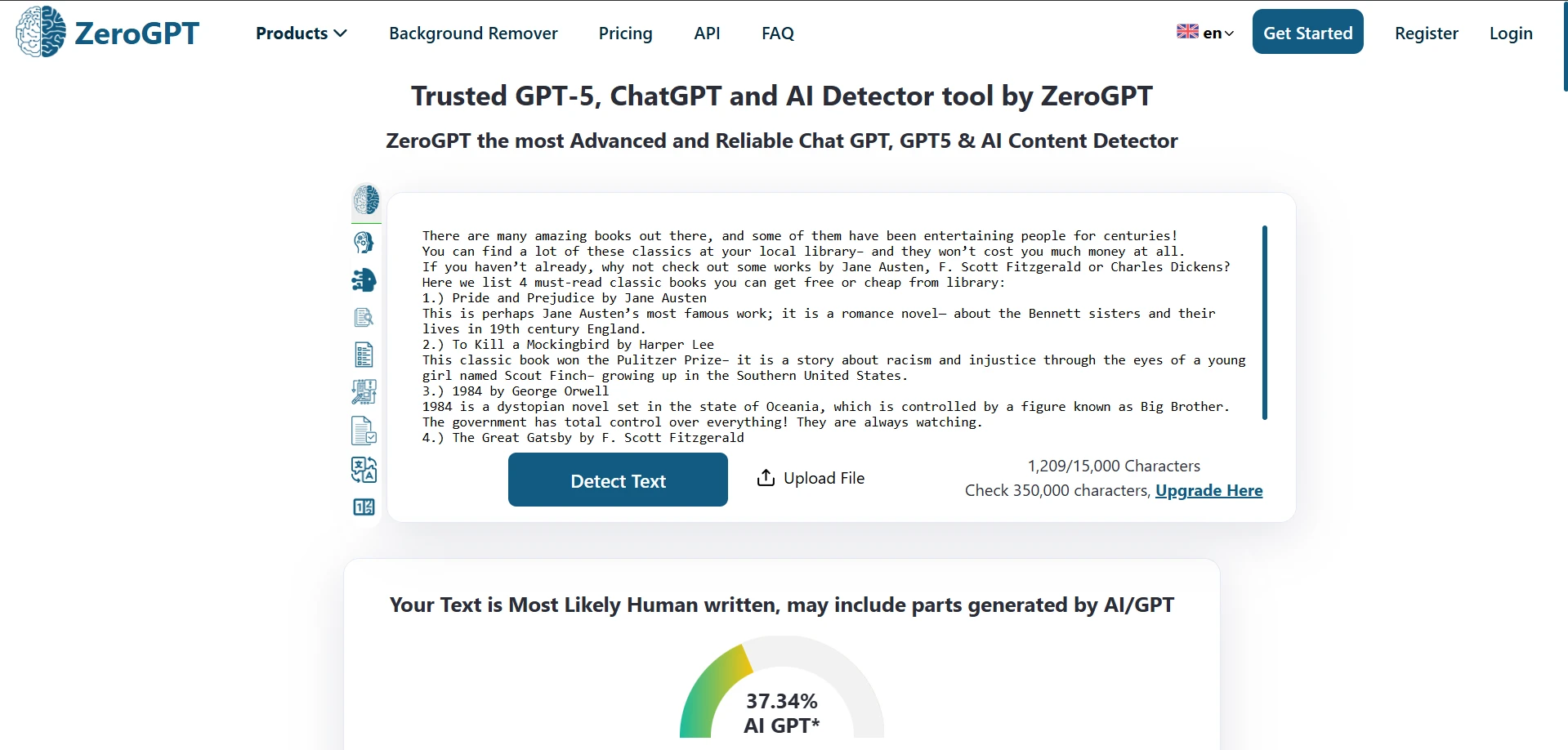

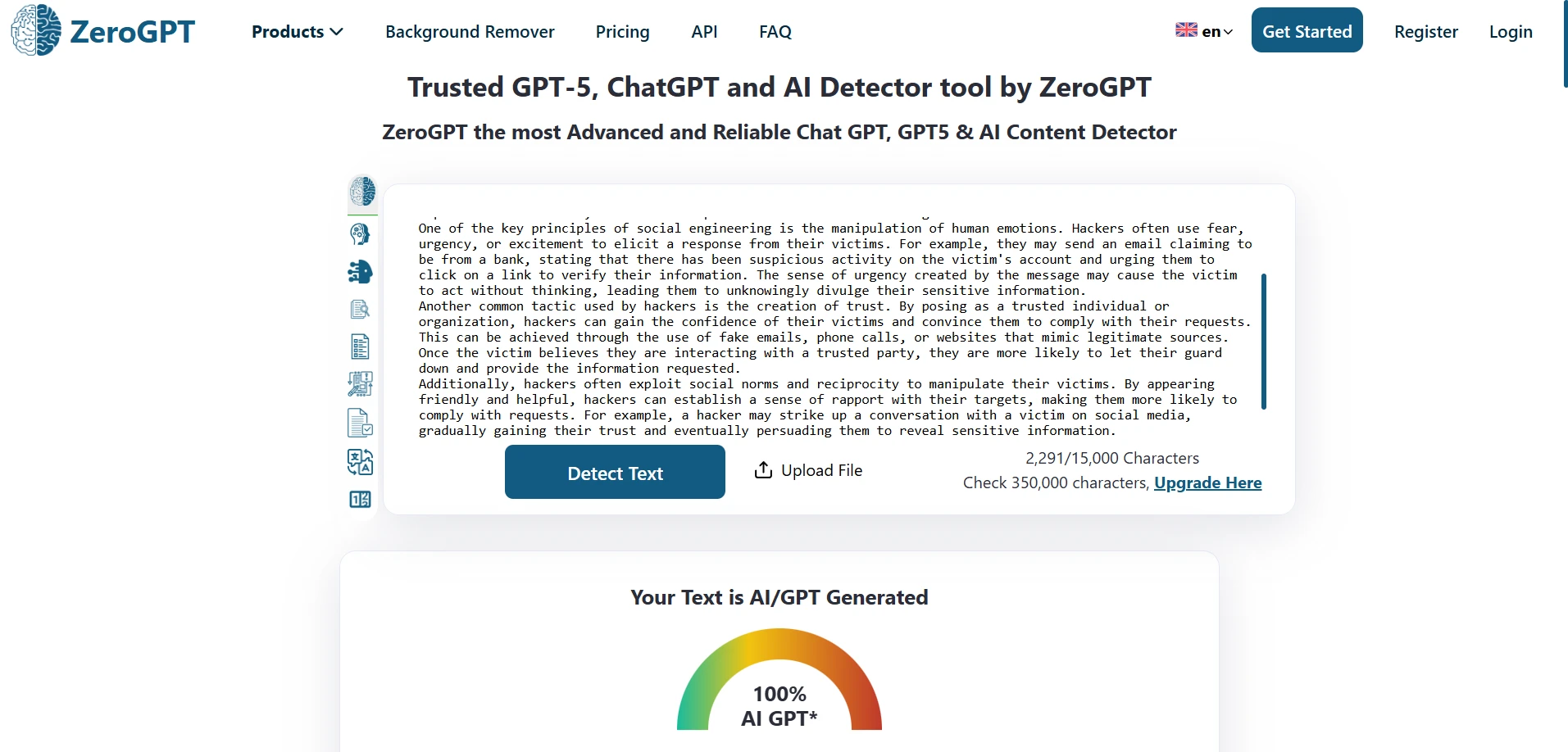

ZeroGPT Detector Examples

The Bottom Line

In this 160-sample test, Grammarly’s detector was less likely to falsely accuse a human writer, but it also let half of the AI samples pass as human at the 0.5 cut-off. ZeroGPT did a better job spotting AI overall, but it falsely flagged about 9% of genuine human writing.

If you’re a student, the most important takeaway is this: a detector score should start a conversation, not end one. Keep your drafts, document your process, and treat any single number as a clue—not a verdict.

![[STUDY] When an AI Judges Your Writing: Grammarly vs ZeroGPT on 160 Samples](/static/images/zerogpt_vs_grammarly_featured_imagepng.webp)