A data-backed comparison using 160 samples, converted into a single “human score” scale so the two tools can be judged side by side.

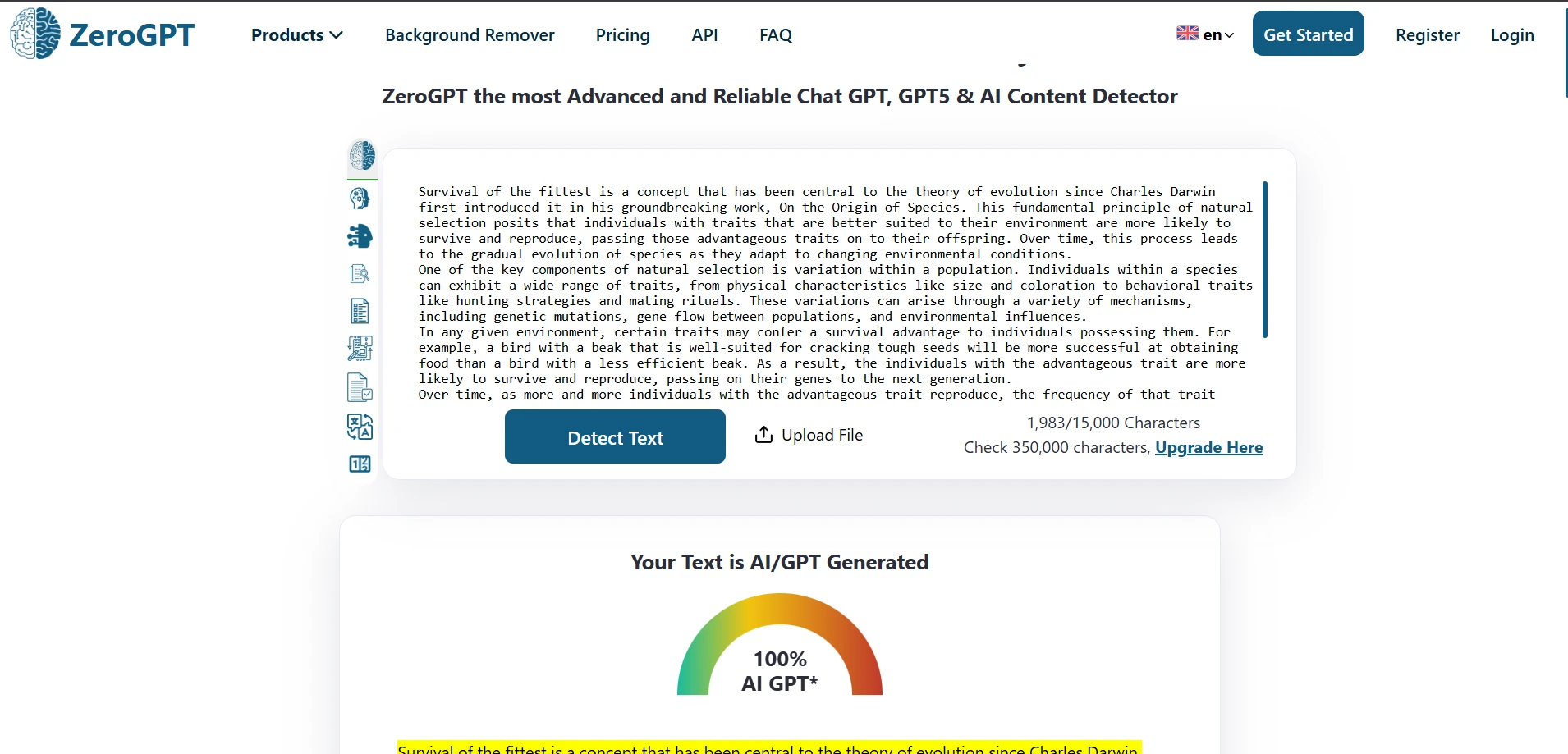

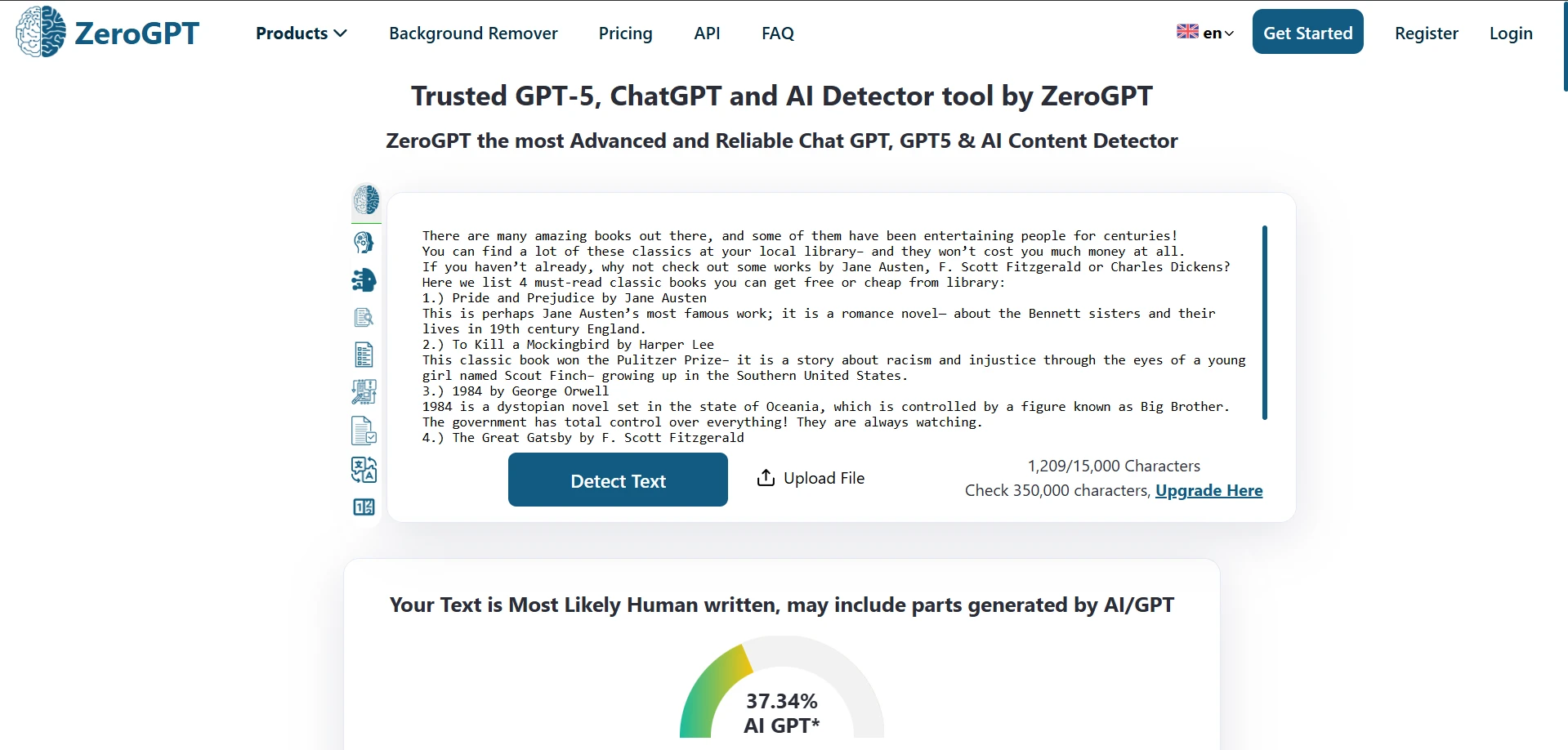

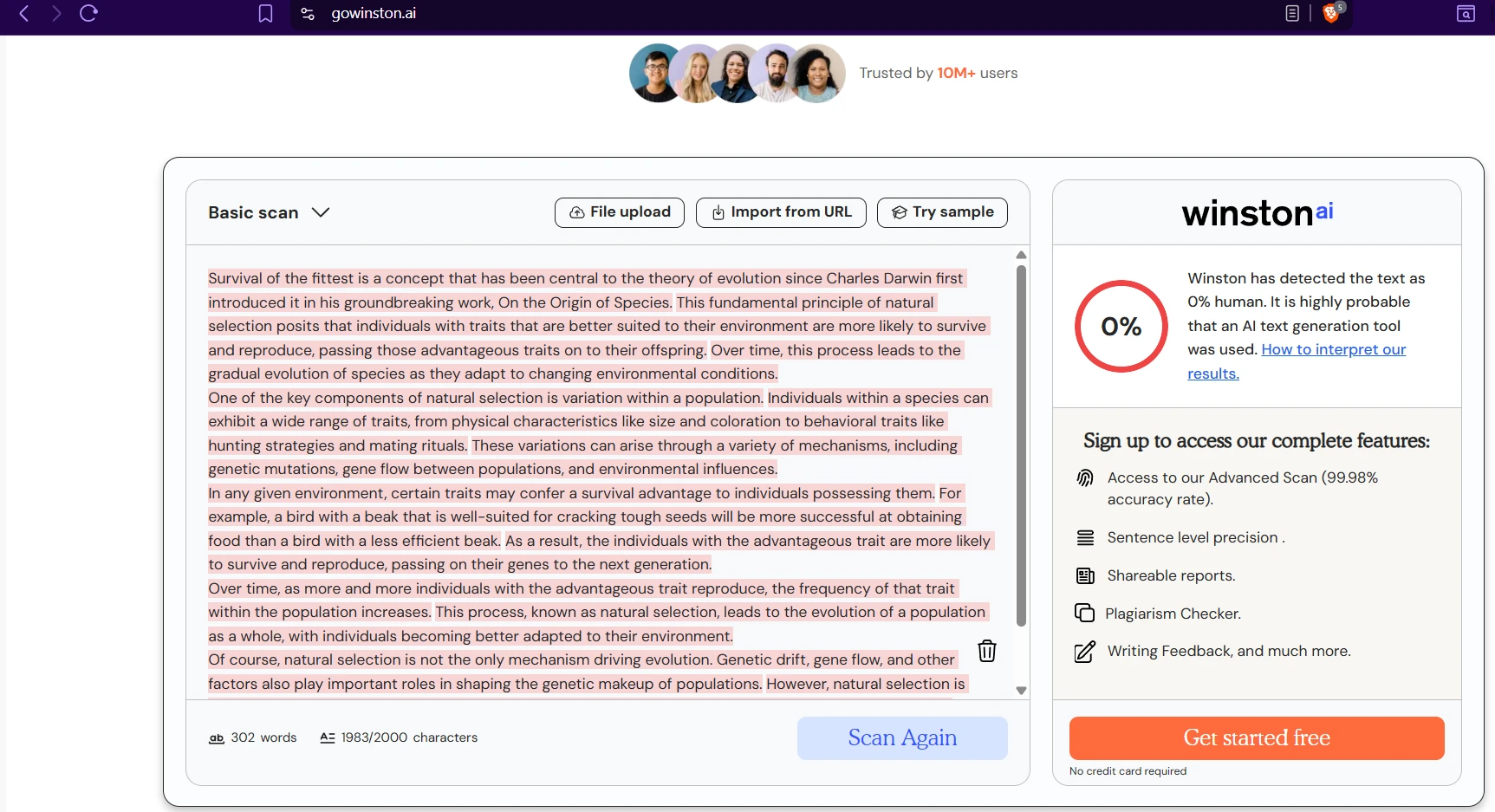

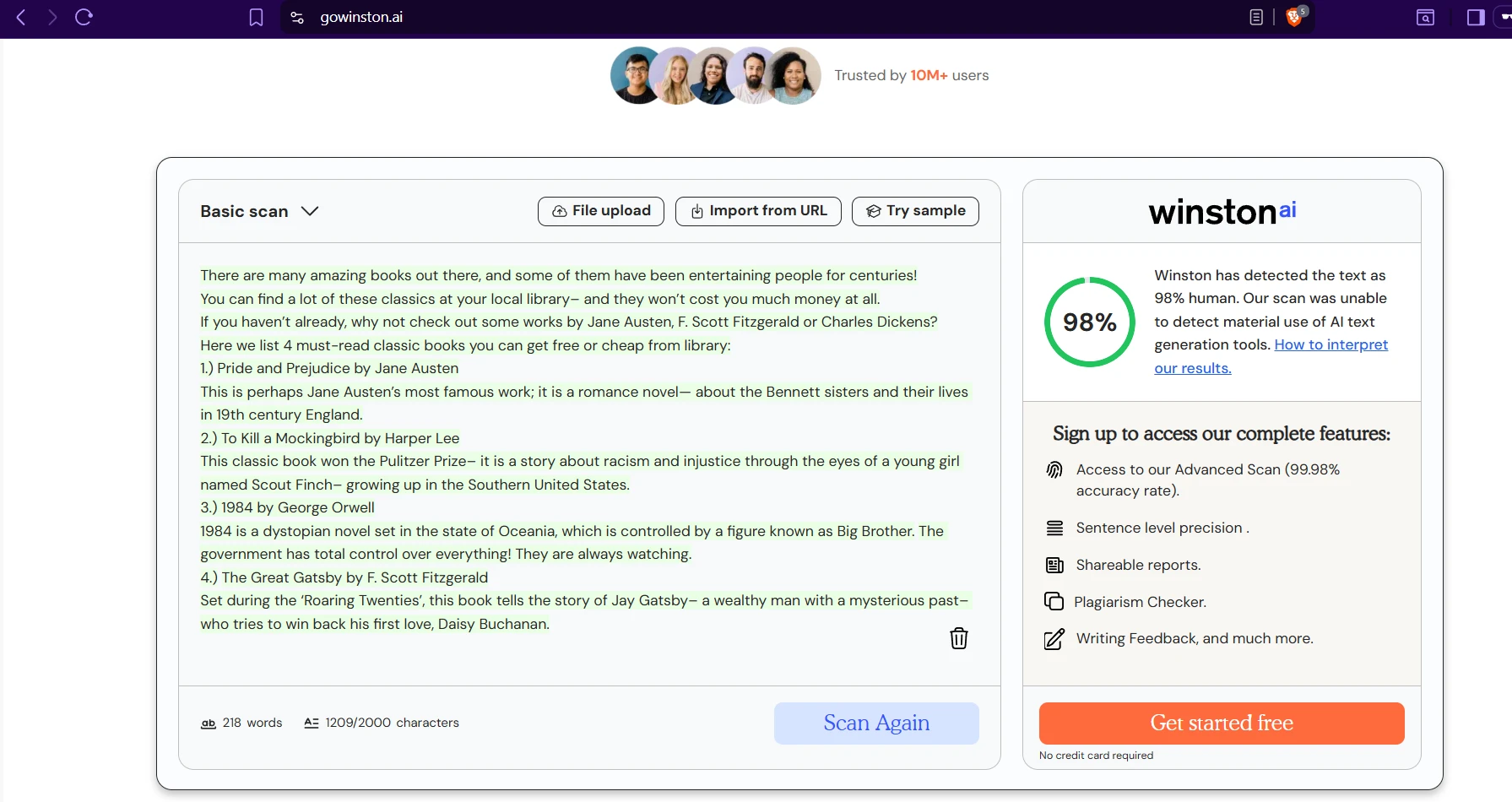

AI detectors sound precise, but in a student context the real question is not whether a tool can produce a number. The real question is whether that number deserves trust. A detector that wrongly accuses a real student can create anxiety, while a detector that lets AI-written work slip through can give a false sense of fairness. To test that trade-off, I ran 160 writing samples through ZeroGPT and Winston AI, then converted every result into the same format: a human score, where higher means the detector believes a person wrote it.

The dataset included 78 human-written samples and 82 AI-written samples. The average length was about 321 words, which keeps the comparison useful for the kind of writing students actually submit: short explainers, homework-style responses, and compact blog drafts. I was not looking for the detector with the flashiest dashboard. I wanted to know which one made the better decisions when the writing was mixed, messy, and realistic.
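The post does not say which raw number each tool reports. The sketch below therefore assumes each detector returns either an AI-likelihood percentage or a human-likelihood percentage, and that the two are complements. The helper name `to_human_score` and its `kind` values are hypothetical. The function maps either kind onto a 0 to 1 human score, where higher means more likely human.

```python
def to_human_score(value: float, kind: str) -> float:
    """Map a raw detector percentage (0-100) onto a 0-1 human score.

    Hypothetical helper: `kind` states whether the tool reported how likely
    the text is AI-written ("ai_percent") or human-written ("human_percent").
    Assumes the two are complements, i.e. human% = 100 - AI%.
    """
    if not 0 <= value <= 100:
        raise ValueError(f"expected a percentage in [0, 100], got {value}")
    if kind == "ai_percent":
        return (100 - value) / 100  # a high AI percentage becomes a low human score
    if kind == "human_percent":
        return value / 100
    raise ValueError(f"unknown score kind: {kind!r}")


print(to_human_score(80, "ai_percent"))  # 0.2
```

Keeping the conversion in one place means every later comparison (averages, cutoffs, histograms) reads the scale in the same direction.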

Key findings from the dataset

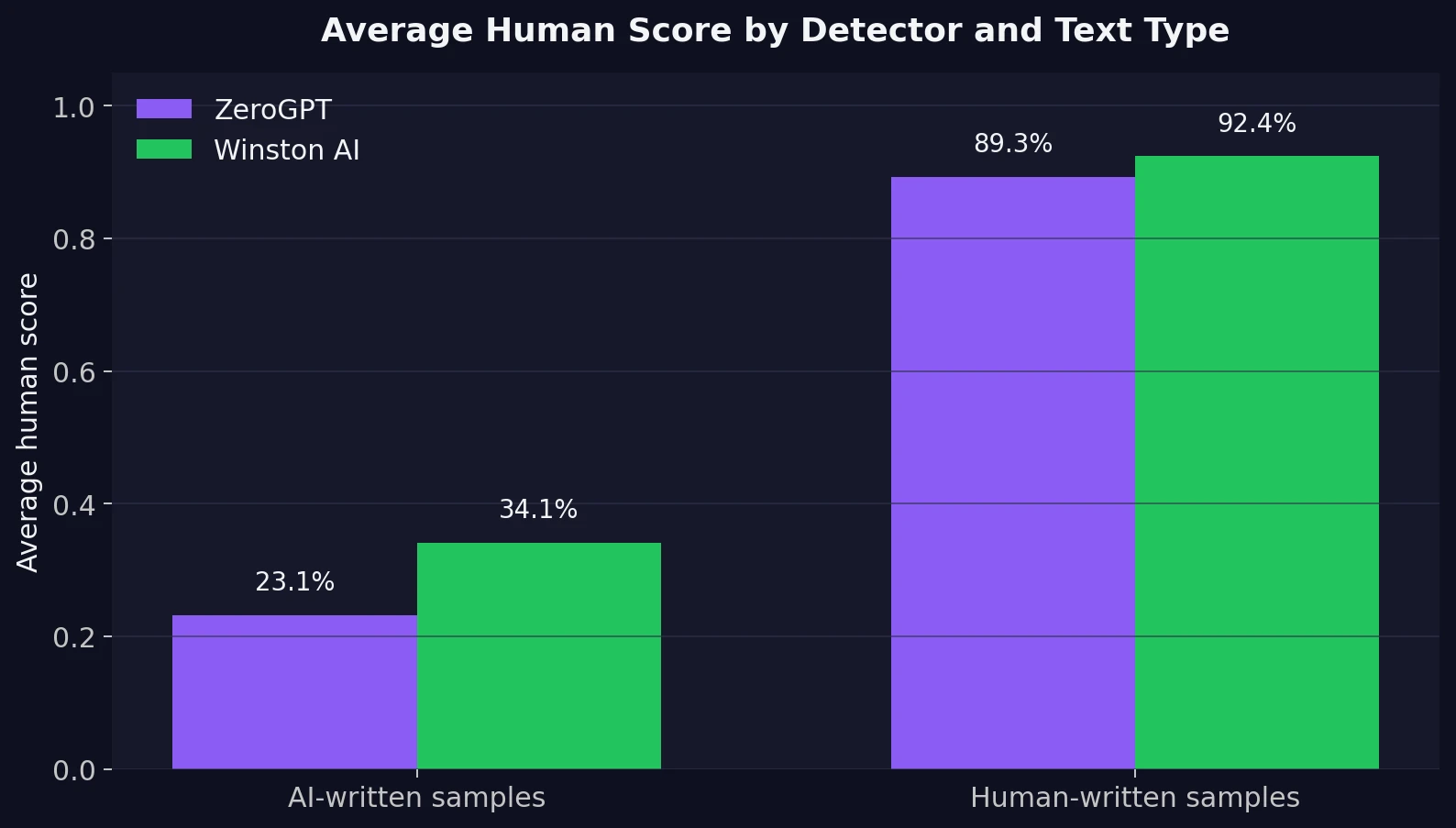

- On human writing: Winston AI gave a slightly higher average human score at 92.4%, while ZeroGPT averaged 89.3%.

- On AI writing: ZeroGPT was tougher, giving AI samples an average human score of only 23.1%. Winston AI was more forgiving at 34.1%.

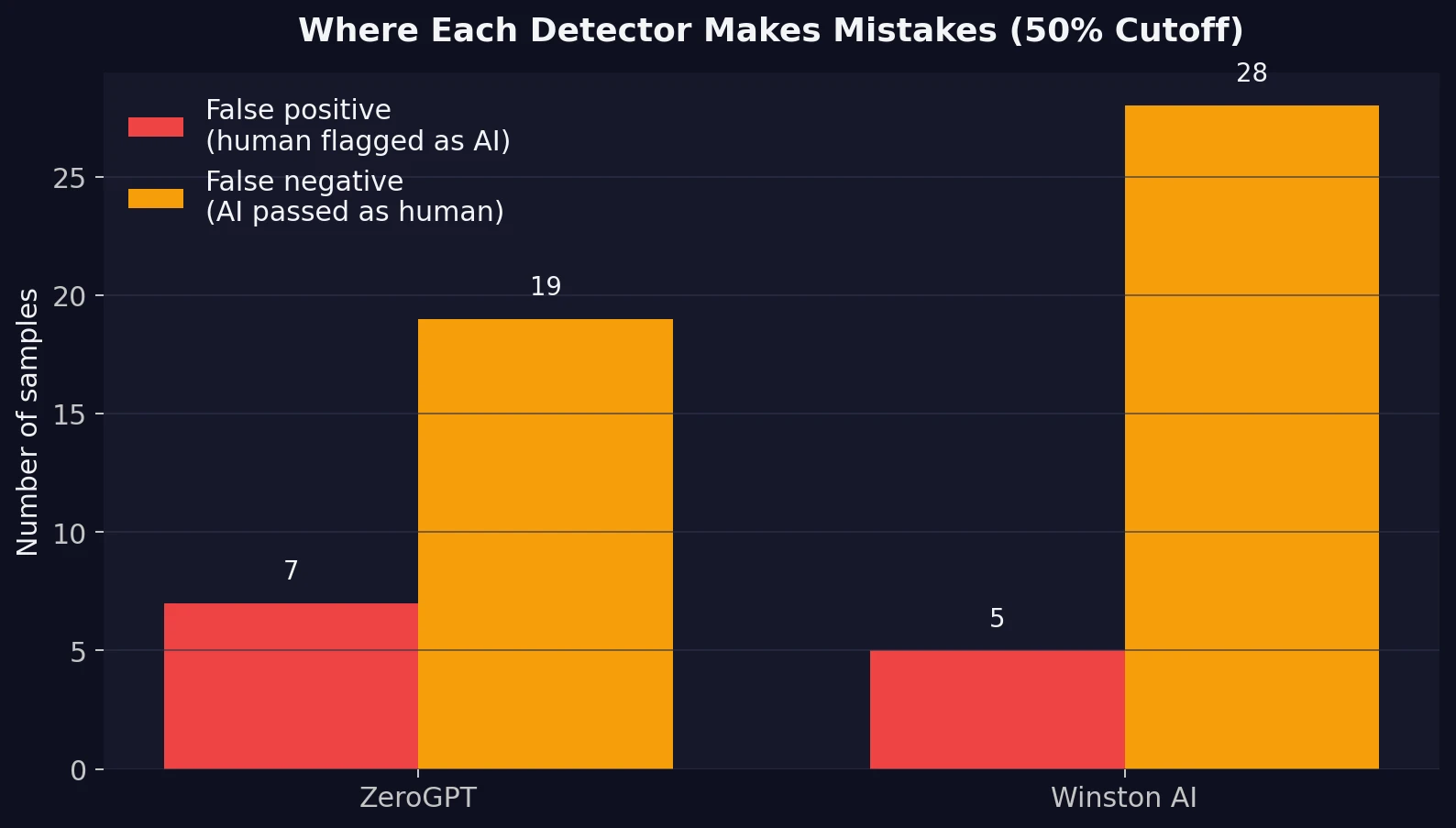

- Using a simple 50% cutoff: ZeroGPT was correct on 83.8% of all samples (134 of 160), compared with 79.4% for Winston AI (127 of 160).

- Trade-off: Winston AI made fewer false accusations on human work (5 vs 7), but ZeroGPT let fewer AI samples pass as human (19 vs 28).
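The two accuracy figures follow directly from the error counts in the last bullet. The minimal Python sketch below uses only numbers reported above: 78 human samples, 82 AI samples, and each tool's false positives and false negatives. The `DetectorErrors` type is just an illustrative container.

```python
from dataclasses import dataclass

HUMAN_SAMPLES = 78
AI_SAMPLES = 82
TOTAL = HUMAN_SAMPLES + AI_SAMPLES  # 160 samples in the test


@dataclass
class DetectorErrors:
    name: str
    false_positives: int  # human samples wrongly flagged as AI
    false_negatives: int  # AI samples wrongly passed as human


def accuracy(errors: DetectorErrors) -> float:
    """Share of all samples labelled correctly at the 50% cutoff."""
    correct = TOTAL - errors.false_positives - errors.false_negatives
    return correct / TOTAL


for det in (DetectorErrors("ZeroGPT", 7, 19), DetectorErrors("Winston AI", 5, 28)):
    print(f"{det.name}: {accuracy(det):.1%}")
```

ZeroGPT makes 26 mistakes and gets 134 of 160 right. Winston AI makes 33 mistakes and gets 127 right. Those counts are the source of the 83.8% and 79.4% figures.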

First look: the averages tell a clear story

The first chart is the easiest place to start. On genuine human writing, both tools scored high, which is good. Winston AI had a small edge there, averaging 92.4% human on real student-style text. ZeroGPT was still strong at 89.3%. So if your only concern is whether a detector will be kind to real human writers, Winston AI looks slightly safer.

But detectors are not only supposed to protect humans. They are also supposed to catch AI. That is where the gap becomes more important. On AI-written text, ZeroGPT dropped the average human score to 23.1%, while Winston AI stayed much higher at 34.1%. In plain English, that means ZeroGPT was better at pushing AI samples toward the AI side of the scale.

This is why averages need context. A detector can look “nicer” because it gives high human scores more often, but that same kindness can become a weakness when AI-generated writing is polished and readable. For students, that matters. Many modern AI outputs no longer sound robotic. They sound organized, clean, and surprisingly ordinary.

The mistake that matters depends on who is holding the result

Two terms matter here, and they are worth defining simply. A false positive means the detector flags human writing as AI. A false negative means AI writing passes as human. In a classroom, the first hurts trust. The second hurts enforcement. A useful detector has to manage both.
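The minimal sketch below shows how a single sample could be labelled at a cutoff, which makes the two error types concrete. It treats "AI" as the positive class. A false positive is therefore human writing flagged as AI, and a false negative is AI writing passed as human, matching the definitions above. The post does not say whether a score of exactly 50% counts as human or AI, so the sketch assumes it counts as human. The function names and toy scores are hypothetical.

```python
from collections import Counter

CUTOFF = 0.5  # assumption: a human score of exactly 0.5 counts as a "human" verdict


def outcome(human_score: float, actually_human: bool, cutoff: float = CUTOFF) -> str:
    """Label one sample, treating "AI" as the positive class."""
    predicted_human = human_score >= cutoff
    if actually_human:
        return "TN" if predicted_human else "FP"  # FP: human work flagged as AI
    return "FN" if predicted_human else "TP"  # FN: AI work passed as human


def tally(samples: list[tuple[float, bool]], cutoff: float = CUTOFF) -> Counter:
    """Count outcomes over (human_score, actually_human) pairs."""
    return Counter(outcome(score, is_human, cutoff) for score, is_human in samples)


# Hypothetical toy scores, not data from this study.
toy = [(0.93, True), (0.41, True), (0.12, False), (0.67, False)]
print(tally(toy))
```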

At a 50% cutoff, Winston AI produced 5 false positives, slightly fewer than ZeroGPT’s 7. That is a real advantage. In this dataset, Winston AI was a bit less likely to wrongly accuse a human writer. But the other side of the picture is harder to ignore: Winston AI also allowed 28 AI samples to pass as human, compared with 19 for ZeroGPT. That is 9 more AI samples slipping through.

That trade-off shapes the verdict. Winston AI is slightly gentler on real human writing, but ZeroGPT is more balanced overall because it catches more AI without dramatically increasing false accusations. For schools and student use, balance matters more than being generous in one direction.
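Balanced accuracy is one standard way to put a number on "balanced." It averages the share of AI samples caught and the share of human samples correctly left alone. The post does not use this metric itself. It is swapped in here as an illustration and computed only from the reported counts.

```python
def balanced_accuracy(false_positives: int, false_negatives: int,
                      n_human: int = 78, n_ai: int = 82) -> float:
    """Average of the AI catch rate and the human pass rate."""
    ai_caught = (n_ai - false_negatives) / n_ai  # share of AI samples flagged as AI
    humans_cleared = (n_human - false_positives) / n_human  # share of human samples left alone
    return (ai_caught + humans_cleared) / 2


zerogpt = balanced_accuracy(false_positives=7, false_negatives=19)
winston = balanced_accuracy(false_positives=5, false_negatives=28)
print(f"ZeroGPT {zerogpt:.3f}, Winston AI {winston:.3f}")  # ZeroGPT 0.839, Winston AI 0.797
```

On these counts ZeroGPT catches 63 of 82 AI samples and clears 71 of 78 human ones. Winston AI catches 54 and clears 73. Winston AI's lead in human pass rate is about 2.6 percentage points. ZeroGPT's lead in AI catch rate is about 11 points, and that asymmetry drives the verdict above.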

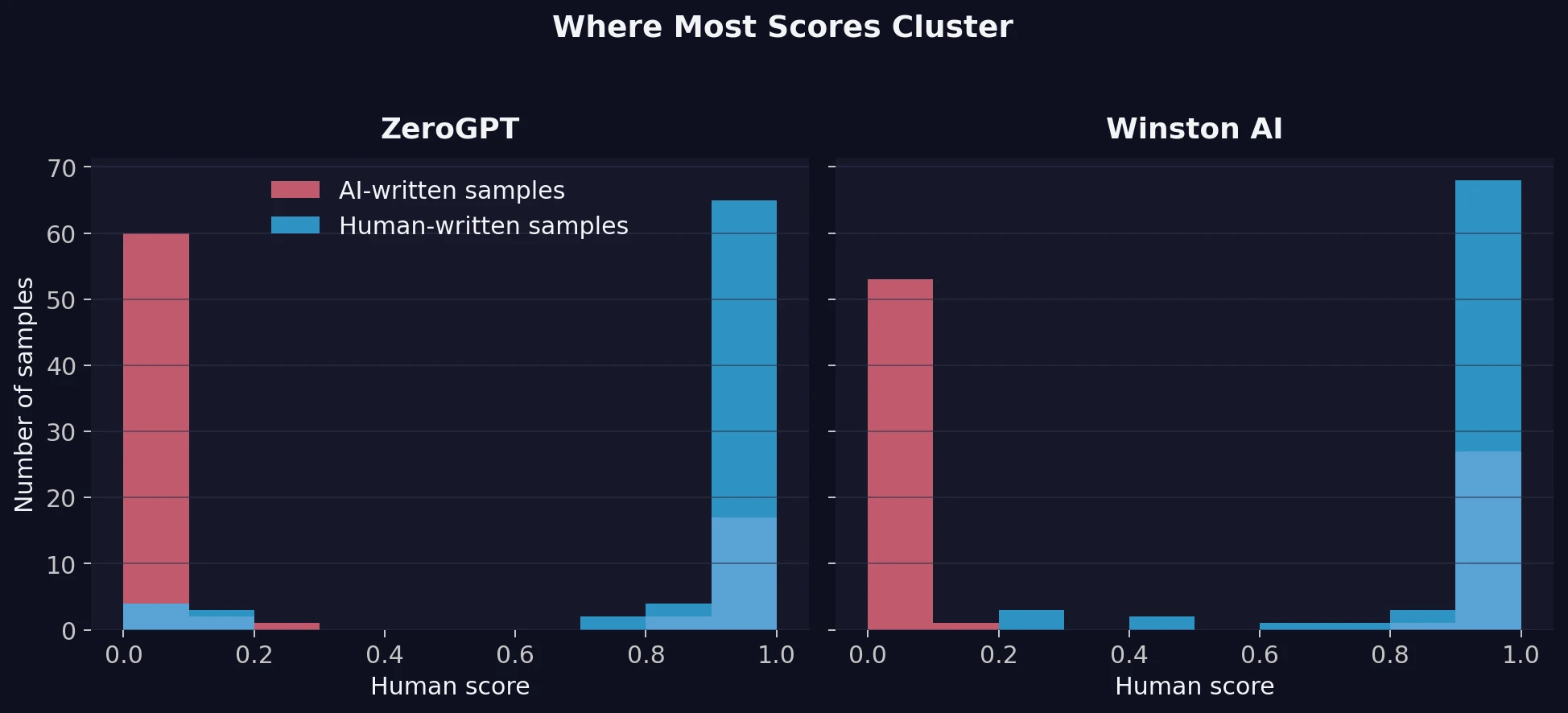

How consistent are the scores?

It is easy to focus only on averages, but averages can hide messy behavior. The next chart shows where most scores cluster. You do not need any special stats background to read it; just look for where the bars pile up. The chart uses a 0 to 1 scale, so 1 is the same as a 100% human score: scores near 0 mean “very likely AI,” and scores near 1 mean “very likely human.”

ZeroGPT shows a stronger split. Most AI samples sit close to the left side, while most human samples sit close to the right. Winston AI also separates the two groups, but not as cleanly. More of its AI-written samples drift toward the human end of the scale. That lines up with the higher false-negative count we saw earlier.

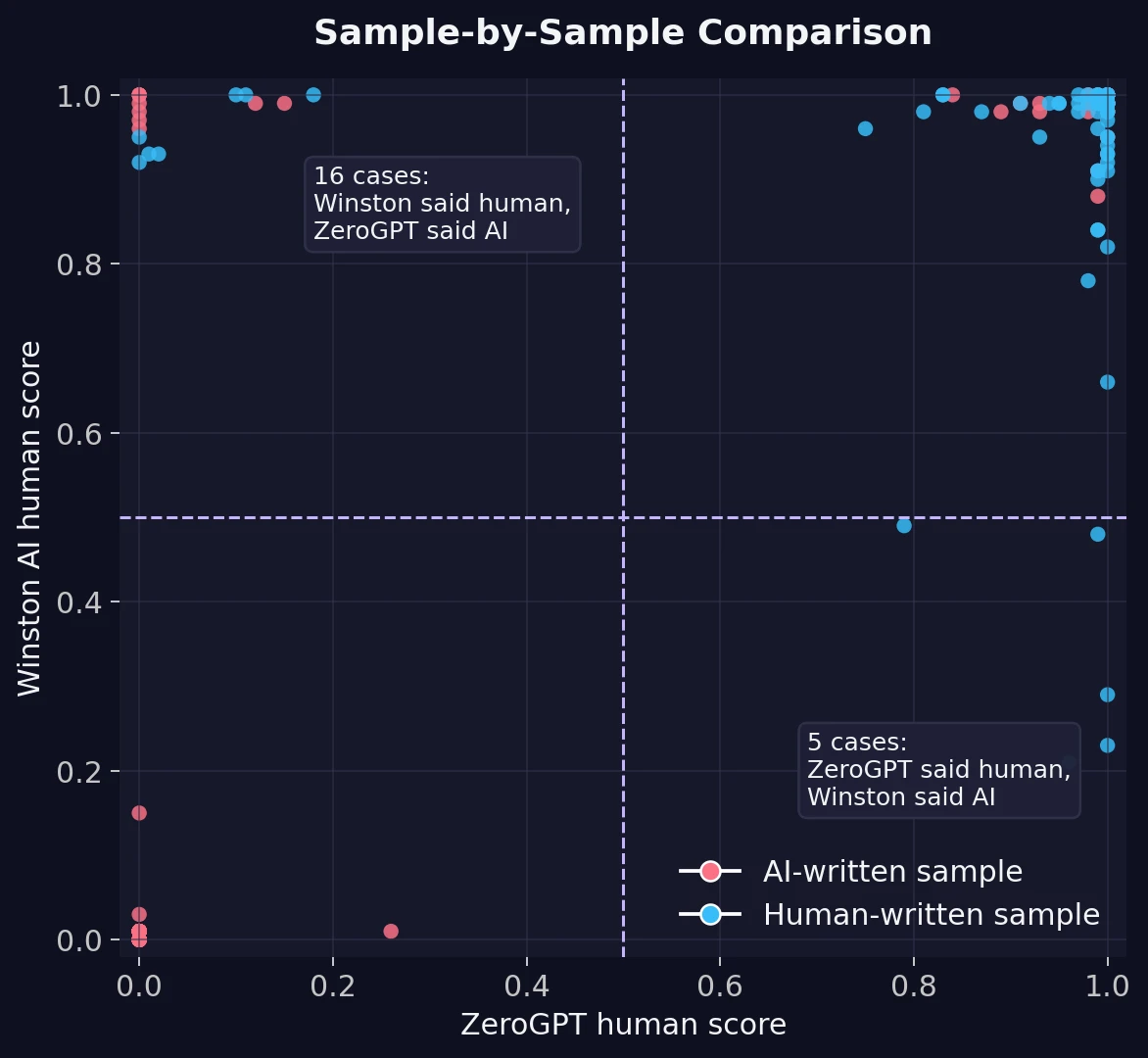
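The chart's exact bin edges are not given. This is only a sketch of the idea behind it: split the 0 to 1 human-score range into equal-width bins and count how many samples land in each. The bin count and the toy scores below are assumptions for illustration, not data from this test.

```python
def bucket_counts(scores: list[float], n_bins: int = 10) -> list[int]:
    """Count 0-1 human scores per equal-width bin (a score of 1.0 goes in the last bin)."""
    counts = [0] * n_bins
    for s in scores:
        if not 0.0 <= s <= 1.0:
            raise ValueError(f"score out of range: {s}")
        idx = min(int(s * n_bins), n_bins - 1)
        counts[idx] += 1
    return counts


# Hypothetical toy scores for AI-written samples, for illustration only.
ai_scores = [0.02, 0.05, 0.11, 0.18, 0.74]
print(bucket_counts(ai_scores, n_bins=5))  # [4, 0, 0, 1, 0]
```

A detector that separates the groups cleanly puts most AI samples in the leftmost bins. Any count in the right-hand bins for AI text is a sample drifting toward "human."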

When the tools disagree, Winston AI usually leans human

The most revealing part of the comparison is not where the tools agree. It is where they split. Out of 160 samples, the two detectors disagreed on 21. In 16 of those cases, Winston AI said the text looked human while ZeroGPT said it looked AI. In only 5 cases did the reverse happen.

That pattern tells us something useful about detector personality. Winston AI behaves like the more forgiving judge. ZeroGPT behaves like the stricter one. Neither style is automatically better. It depends on your goal. If you want to avoid false accusations as much as possible, a more forgiving detector can feel safer. If you want fewer AI-written submissions slipping through, the stricter detector is more useful.
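Counting split decisions only needs each tool's human/AI verdict per sample. The `disagreements` helper below is a hypothetical sketch. The final lines check that the reported disagreement numbers agree with the error counts from the cutoff section. Winston AI called 101 samples human (73 real humans plus 28 missed AI samples), and ZeroGPT called 90 human (71 plus 19). That gap of 11 equals 16 minus 5.

```python
def disagreements(human_a: list[bool], human_b: list[bool]) -> tuple[int, int]:
    """Count split verdicts between detectors A and B.

    Each list holds one "predicted human" flag per sample, in the same order.
    Returns (A says human while B says AI, A says AI while B says human).
    """
    if len(human_a) != len(human_b):
        raise ValueError("both detectors must score the same samples")
    a_only = sum(1 for a, b in zip(human_a, human_b) if a and not b)
    b_only = sum(1 for a, b in zip(human_a, human_b) if b and not a)
    return a_only, b_only


# Consistency check using only counts reported in this post.
winston_says_human = (78 - 5) + 28  # humans cleared + AI samples missed = 101
zerogpt_says_human = (78 - 7) + 19  # = 90
assert winston_says_human - zerogpt_says_human == 16 - 5  # 11 either way
```

With A as Winston AI and B as ZeroGPT, the split reported above corresponds to a result of (16, 5).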

What this means for students

The biggest lesson from this test is not that one tool is perfect and the other is broken. The bigger lesson is that AI detection is still probabilistic. That word just means the result is a likelihood, not a courtroom verdict. These tools are reading patterns in wording, sentence flow, and predictability. They are not reading your intentions, your notes, or your writing process.

That is why students should be careful in two ways. First, do not assume a high human score proves your writing is safe from suspicion forever. Second, do not assume a low human score automatically means a detector has caught something real. The fairest use of these tools is as a signal, not a final decision. Draft history, outlines, version logs, and writing samples still matter.

How the tools present their results

One thing students often overlook is interface design. A bright warning, a red gauge, or a highlighted paragraph can make a detector feel more certain than it really is. Below are example result screens from both platforms. The visuals are polished, but the data above shows why polished visuals and reliable judgment are not the same thing.

Final verdict

If I had to choose one detector based on this dataset alone, I would pick ZeroGPT as the stronger all-rounder. It delivered better overall accuracy, created a clearer separation between human and AI text, and missed fewer AI-written samples. That makes it the better option when the goal is not just reassurance, but actual detection.

At the same time, Winston AI deserves credit for one thing: it was slightly less likely to mislabel genuine human writing. That is not a trivial strength, especially in educational settings where a false accusation can do real harm.

The honest conclusion is this: ZeroGPT wins the comparison, but neither tool should be trusted in isolation. For students, teachers, and editors, the smartest approach is to treat detector scores as one piece of evidence, not the entire case. A detector can support judgment. It should not replace it.

![[STUDY] ZeroGPT vs Winston AI: Which AI Detector Performs Better? 160-Sample Test](/static/images/winston_ai_vs_zerogpt_featured_imagepng.webp)