QuillBot vs Winston AI Detector: Which One Is Fairer to Students?

Students do not just need an AI detector that looks strict on a dashboard. They need one that can tell genuine human writing apart from smooth, polished AI text that only sounds human. In this comparison, I analyzed a dataset of 160 samples to see how QuillBot and Winston AI behave under realistic conditions: not one demo paragraph, but dozens of human and AI passages put through the same test.

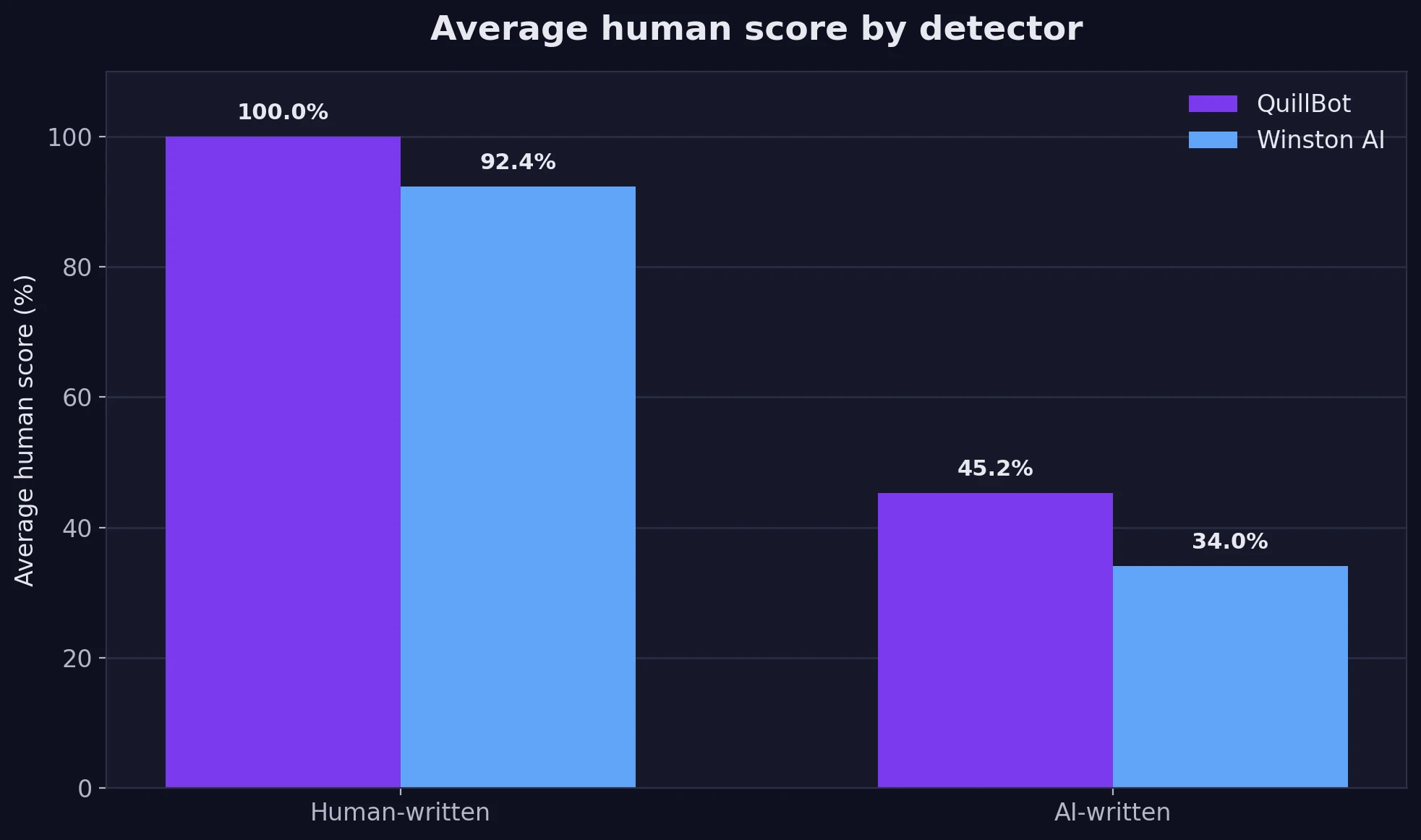

To make the comparison easier to read, I used a converted human score. A higher score means the detector thinks the passage is more likely to be written by a person. So a result near 100% means “this looks human,” while a score near 0% means “this looks AI-generated.” That makes the trade-off easy to understand. On human-written text, higher is better. On AI-written text, lower is better.
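The conversion itself can be sketched in a few lines. This is a minimal illustration that assumes the detector reports an AI-likelihood percentage and that the human score is simply its complement; the exact formula is not spelled out here, and `to_human_score` is a hypothetical helper, not part of either tool.

```python
def to_human_score(ai_percent: float) -> float:
    """Convert an AI-likelihood percentage into a 'human score'.

    Assumption: human score = 100 - AI score. The function name and the
    formula are illustrative, not taken from either detector's API.
    """
    if not 0.0 <= ai_percent <= 100.0:
        raise ValueError("ai_percent must be between 0 and 100")
    return 100.0 - ai_percent


print(to_human_score(0.0))    # 100.0 -> "this looks human"
print(to_human_score(100.0))  # 0.0   -> "this looks AI-generated"
```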

How the test was set up

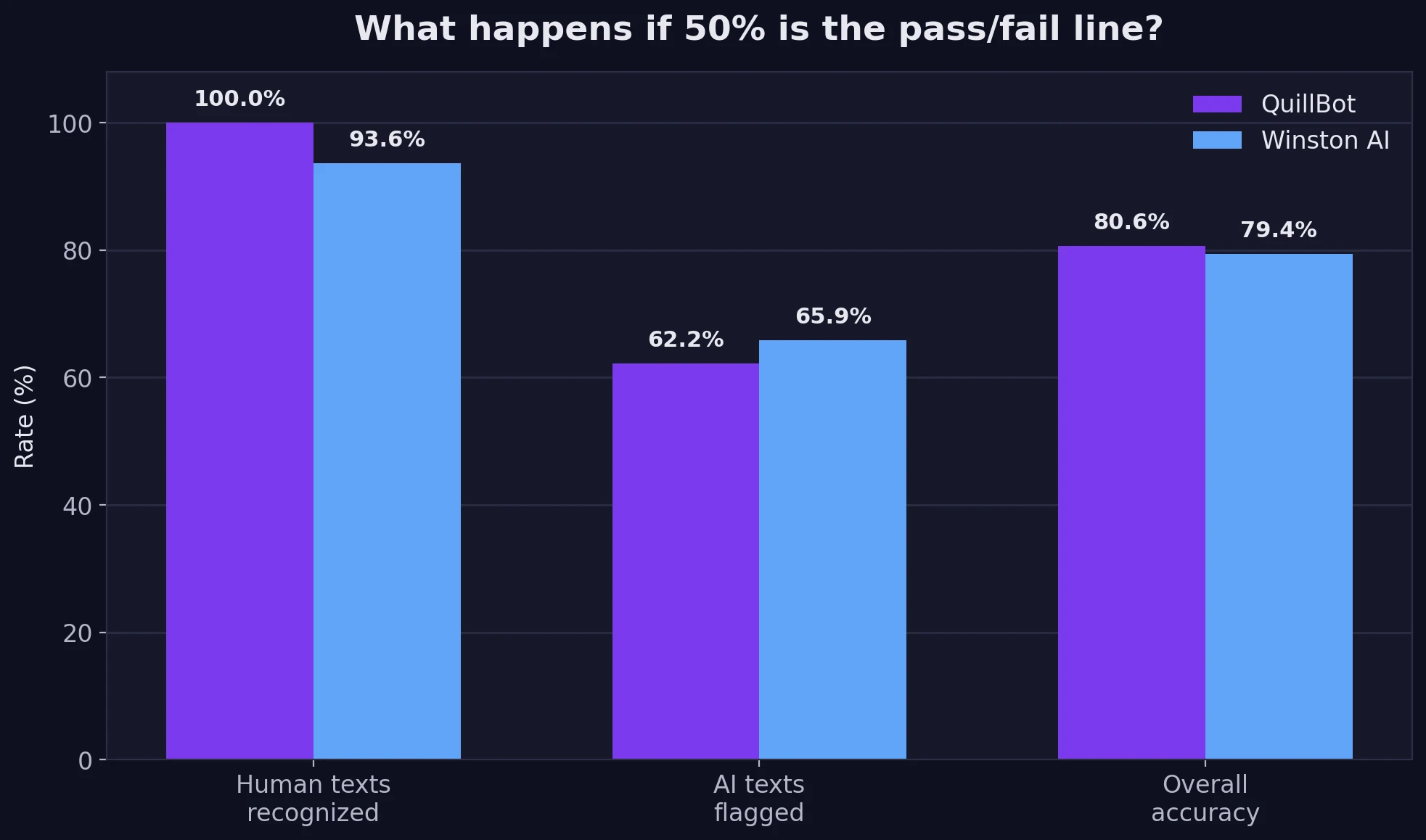

This dataset contains 78 human-written samples and 82 AI-written samples. I looked at averages, medians, and simple pass/fail behavior using a 50% cutoff. In plain words, that means any score above 50% is treated as “more human than AI.” I am using that line only as a practical comparison tool. Real-world decisions should never rely on one number alone.
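As a rough sketch, the pass/fail rule looks like this. The names `passes_as_human` and `is_correct` are hypothetical, and treating a score of exactly 50% as "not human" is my assumption, based on the "above 50%" wording.

```python
CUTOFF = 50.0  # percent human; the comparison line used in this article


def passes_as_human(human_score: float, cutoff: float = CUTOFF) -> bool:
    """Return True when the score is strictly above the cutoff."""
    return human_score > cutoff


def is_correct(human_score: float, truly_human: bool) -> bool:
    """A call is correct when the pass/fail result matches the true label."""
    return passes_as_human(human_score) == truly_human


print(passes_as_human(100.0))               # True: treated as human
print(is_correct(19.5, truly_human=False))  # True: AI text was flagged
print(is_correct(95.0, truly_human=False))  # False: AI text slipped through
```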

What stood out immediately

- QuillBot was much kinder to genuine human writing. In this dataset, its average score on human text was 100.0% human, and every human sample stayed above the 50% line.

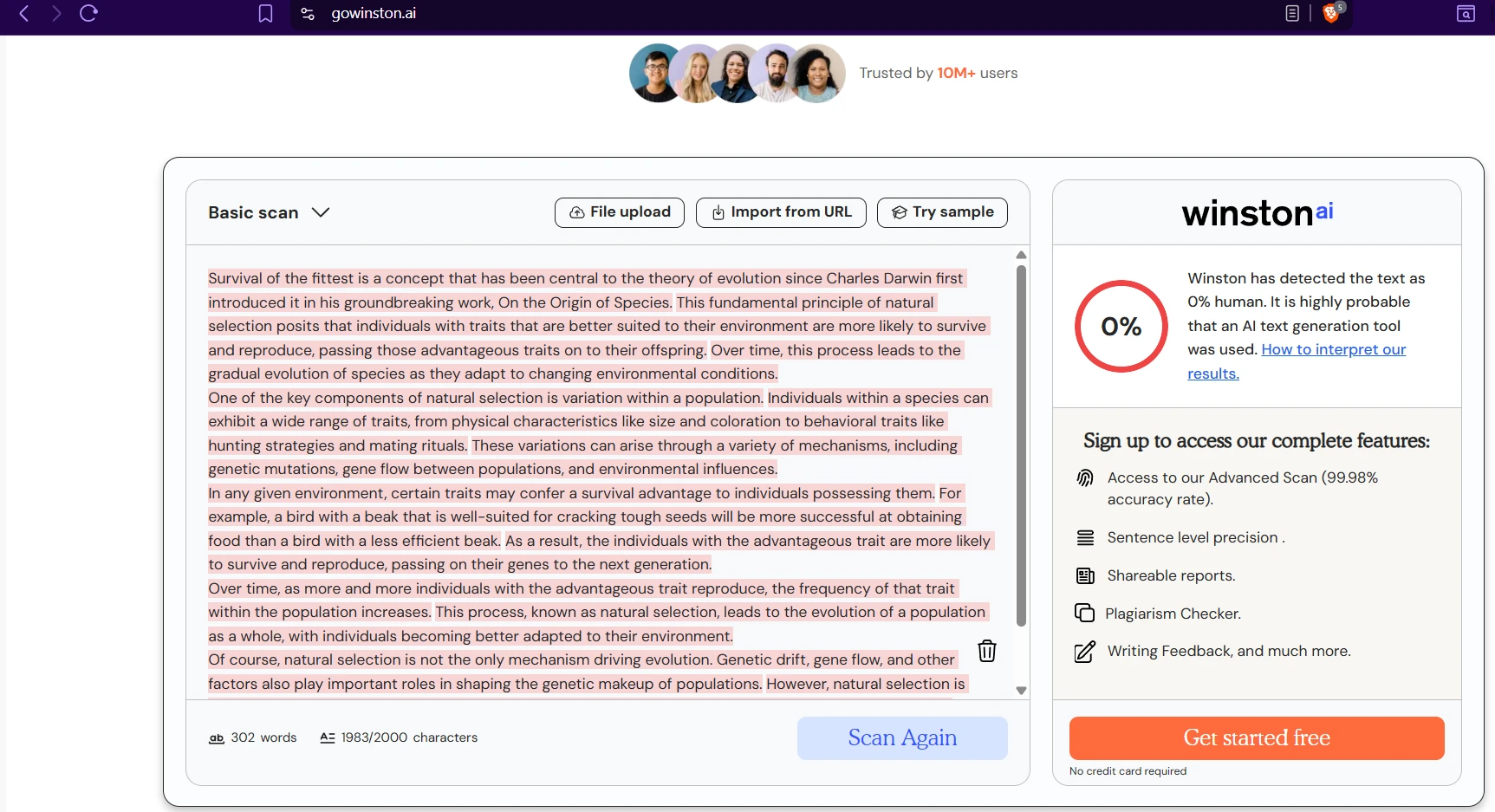

- Winston AI was slightly harsher on AI text. Its average score on AI text was 34.0% human, compared with 45.2% for QuillBot. Lower is better here, so Winston had the edge.

- The trade-off is clear. Winston caught 54/82 AI samples at the 50% cutoff, while QuillBot caught 51/82. But Winston also marked 5 human samples as AI, while QuillBot marked 0.

- Overall, the race was close. QuillBot finished with 80.6% overall accuracy, slightly ahead of Winston AI at 79.4%.
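The overall accuracy figures follow directly from the counts above. Here is a small sketch of the arithmetic, using only the numbers reported in this article; the function and variable names are illustrative.

```python
HUMAN_TOTAL = 78  # human-written samples in the dataset
AI_TOTAL = 82     # AI-written samples in the dataset


def overall_accuracy(human_correct: int, ai_caught: int) -> float:
    """Share of all samples classified correctly, as a percentage."""
    return 100.0 * (human_correct + ai_caught) / (HUMAN_TOTAL + AI_TOTAL)


# QuillBot: 0 human samples flagged, 51 AI samples caught.
quillbot = overall_accuracy(human_correct=78, ai_caught=51)
# Winston AI: 5 human samples flagged, 54 AI samples caught.
winston = overall_accuracy(human_correct=78 - 5, ai_caught=54)

print(f"QuillBot:   {quillbot:.1f}%")  # 80.6%
print(f"Winston AI: {winston:.1f}%")   # 79.4%
```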

The big picture: one tool is safer for human writers

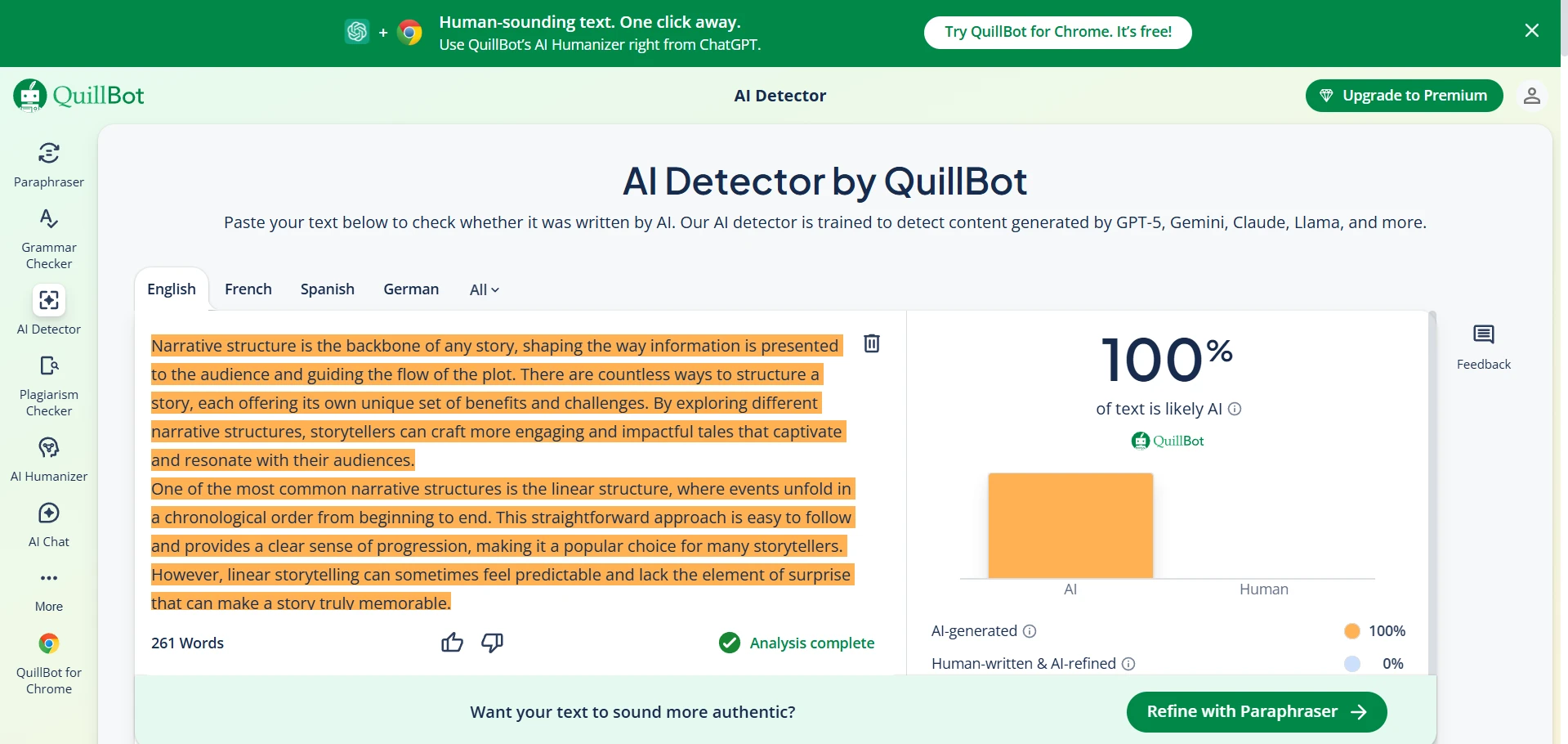

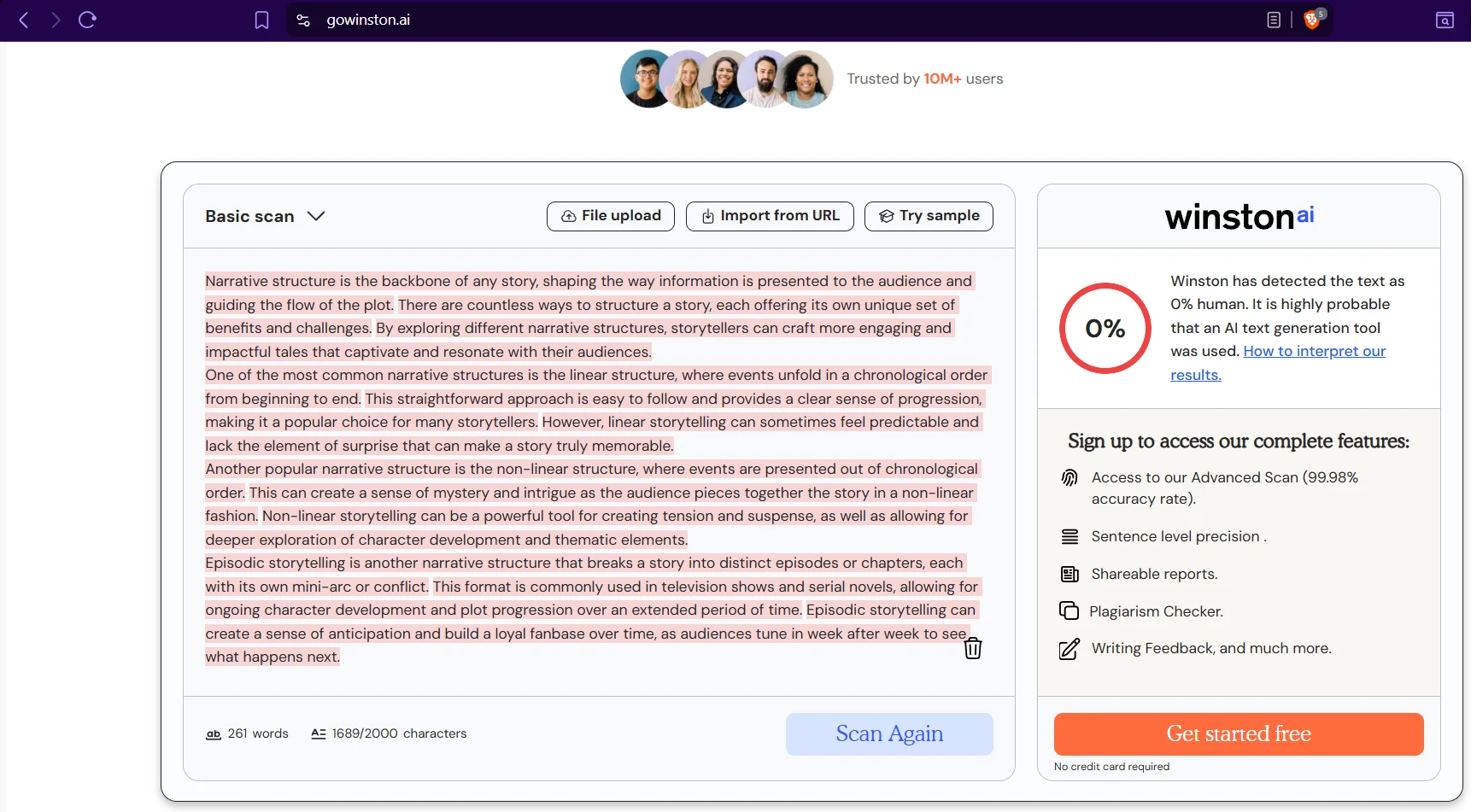

The first chart shows the average human score each detector gave to human-written and AI-written text. This is the fastest way to understand the personality of each tool. QuillBot is extremely generous toward human writing in this dataset. Winston AI is also strong on human text, but not as consistent.

That chart also reveals something important: Winston AI is the stricter AI catcher on average. Its AI average is lower, which means it is usually more willing to call a suspicious passage AI-written. But strictness is not the same thing as usefulness. In a student setting, the hardest problem is often the false positive, where real human work gets flagged as AI. That is the kind of error that damages trust fastest.

If 50% is the line, who makes fewer bad calls?

To make this practical, I treated 50% human as the point where a passage “passes” as human. This is not a perfect scientific rule, but it mirrors the kind of quick judgment people often make when they read detector dashboards. The next chart shows how both tools performed using that simple line.

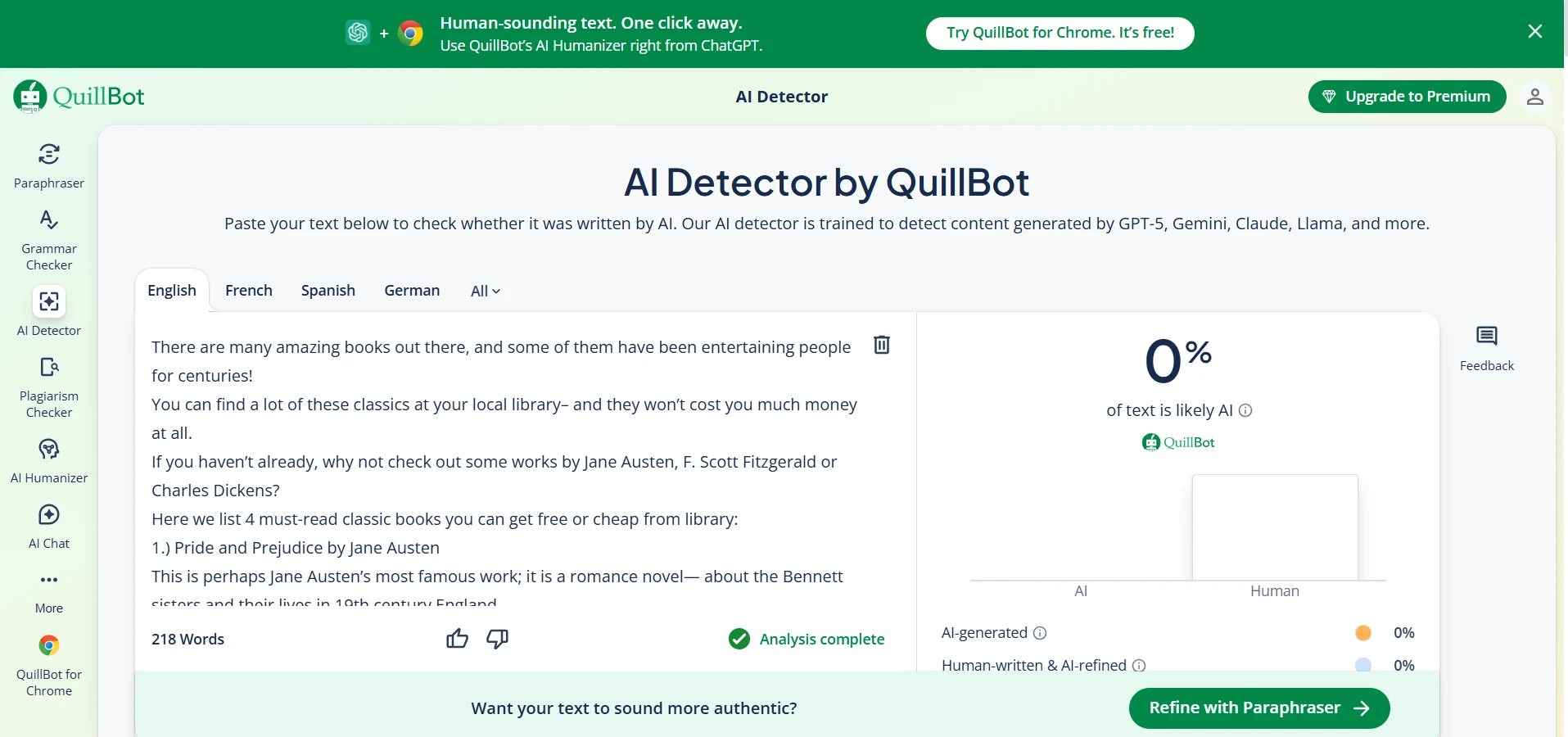

Here is the real student-facing takeaway. QuillBot recognized 100% of the human-written samples as human. Winston AI recognized 93.6% of them and wrongly pushed 5 genuine human samples below the line. On the AI side, Winston AI did slightly better, flagging 65.9% of AI passages compared with 62.2% for QuillBot. So the choice is not simply "which detector is better?" The better question is: which mistake worries you more?
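To see the two kinds of mistakes side by side, the same counts can be turned into per-class rates. This is only a sketch built from the figures in this article; the dictionary layout and key names are my own.

```python
HUMAN_TOTAL, AI_TOTAL = 78, 82


def rate(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100.0 * part / whole, 1)


# Counts at the 50% cutoff, as reported in this comparison.
counts = {
    "QuillBot": {"human_flagged": 0, "ai_caught": 51},
    "Winston AI": {"human_flagged": 5, "ai_caught": 54},
}

rates = {}
for tool, c in counts.items():
    rates[tool] = {
        # Human samples correctly left above the line.
        "human_pass": rate(HUMAN_TOTAL - c["human_flagged"], HUMAN_TOTAL),
        # Human samples wrongly flagged as AI (false positives).
        "false_alarm": rate(c["human_flagged"], HUMAN_TOTAL),
        # AI samples correctly flagged.
        "ai_flagged": rate(c["ai_caught"], AI_TOTAL),
    }
    print(tool, rates[tool])
```

The false alarm rate is the number to watch in a classroom: it is 0.0% for QuillBot and 6.4% for Winston AI on these counts.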

If you are a student, teacher, or editor who wants to avoid accusing real writers unfairly, QuillBot looks safer in this dataset. If you care more about catching every possible AI pattern, Winston AI is somewhat more aggressive. The problem is that aggressive tools can also punish clean, well-structured student writing.

Averages can hide the messy part

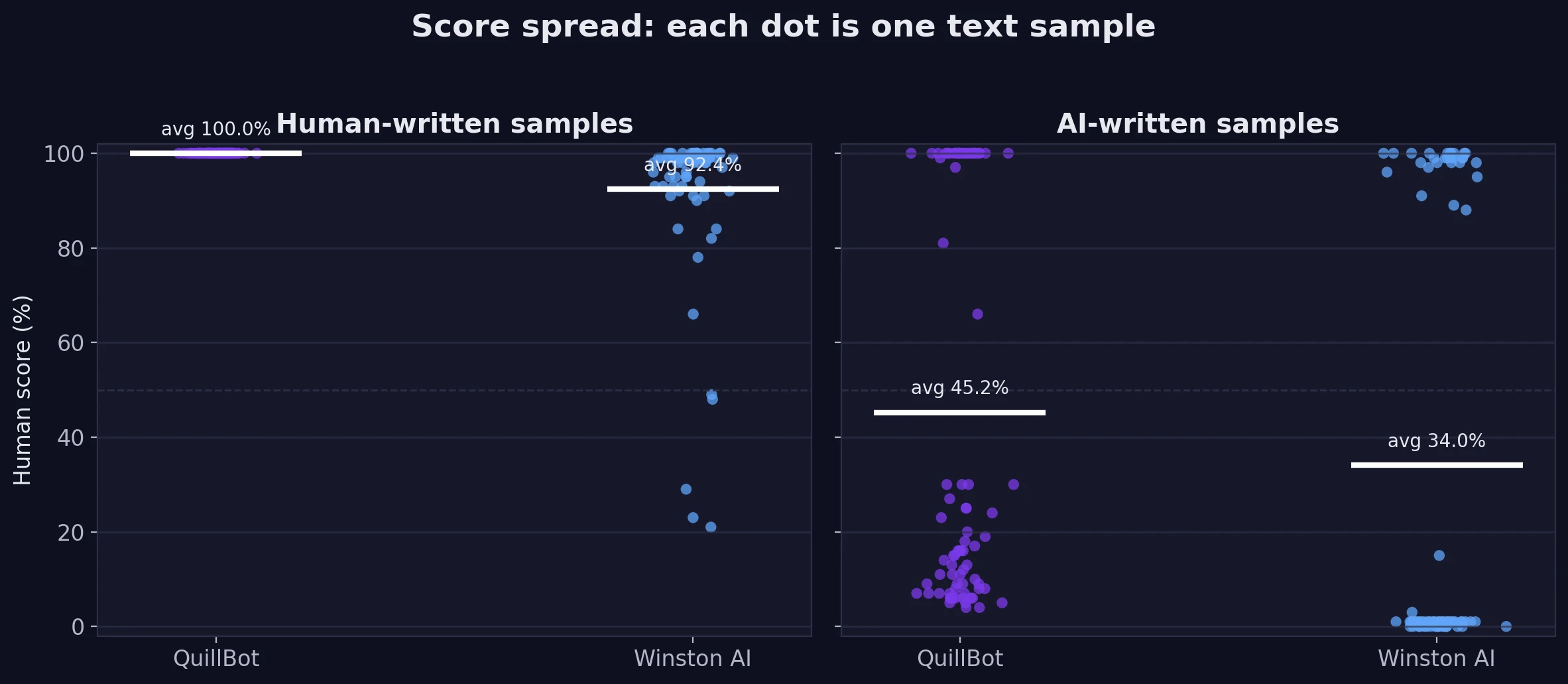

Average scores are useful, but they do not tell the whole story. The next chart shows the spread of the results. Spread simply means how widely the scores move around from one sample to another. Each dot is one text sample. A tight cluster means the detector behaved in a more consistent way. A wide scatter means the detector changed its mind a lot depending on the passage.

This is where the comparison becomes more interesting. QuillBot gave every human sample a perfect 100% human score, which is unusually stable. Winston AI was still strong on human text, but its scores ranged much more widely. Some human passages landed far below the pack. On AI text, both tools were mixed. The middle value (called the median) was 19.5% human for QuillBot and just 1.0% for Winston AI, so Winston was usually tougher. But both tools also had a real weakness: 31 AI samples for QuillBot and 28 AI samples for Winston still scored above 50% human. Even more striking, 29 QuillBot AI samples and 26 Winston AI samples scored at least 90% human.
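For readers who want to run the same kind of spread check on their own results, here is a minimal sketch. The scores in `toy_ai_scores` are made-up placeholders, not values from this dataset, and `summarize` is a hypothetical helper.

```python
from statistics import median


def summarize(scores, cutoff=50.0, high=90.0):
    """Median plus counts of scores above the cutoff and at/above `high`."""
    return {
        "median": median(scores),
        "above_cutoff": sum(s > cutoff for s in scores),
        "at_least_high": sum(s >= high for s in scores),
    }


# Hypothetical human scores for seven AI-written passages (illustration only).
toy_ai_scores = [0.0, 1.0, 5.0, 20.0, 60.0, 95.0, 100.0]

summary = summarize(toy_ai_scores)
print(summary)  # {'median': 20.0, 'above_cutoff': 3, 'at_least_high': 2}
```

A low median alongside a non-trivial "at_least_high" count is the pattern described above: the detector is usually tough, yet some AI passages still look almost fully human to it.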

That matters because it shows why detector scores should be treated as signals, not verdicts. A detector can look very confident and still be wrong, especially when the AI text is short, polished, or written in a clean educational tone.

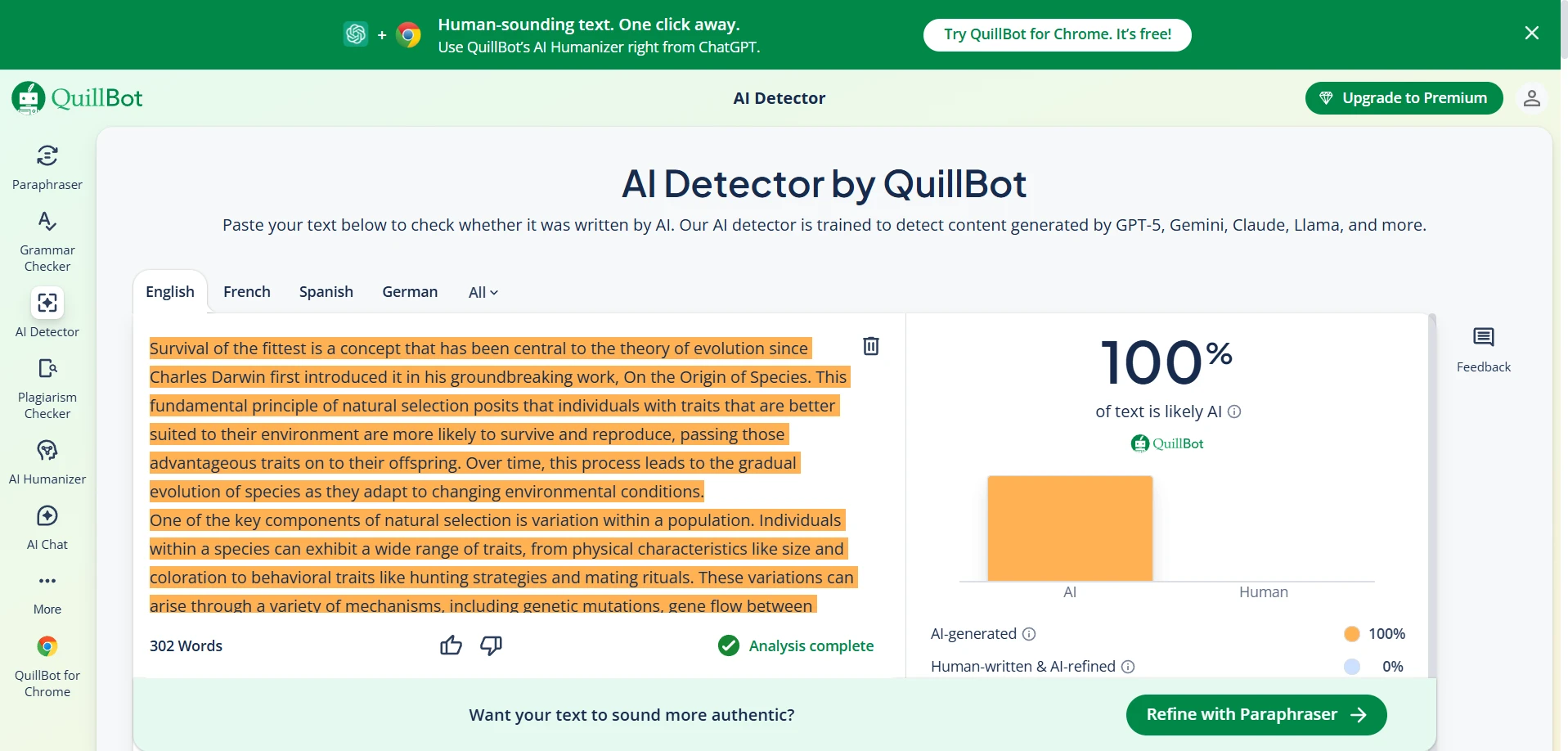

What the dashboards looked like on real examples

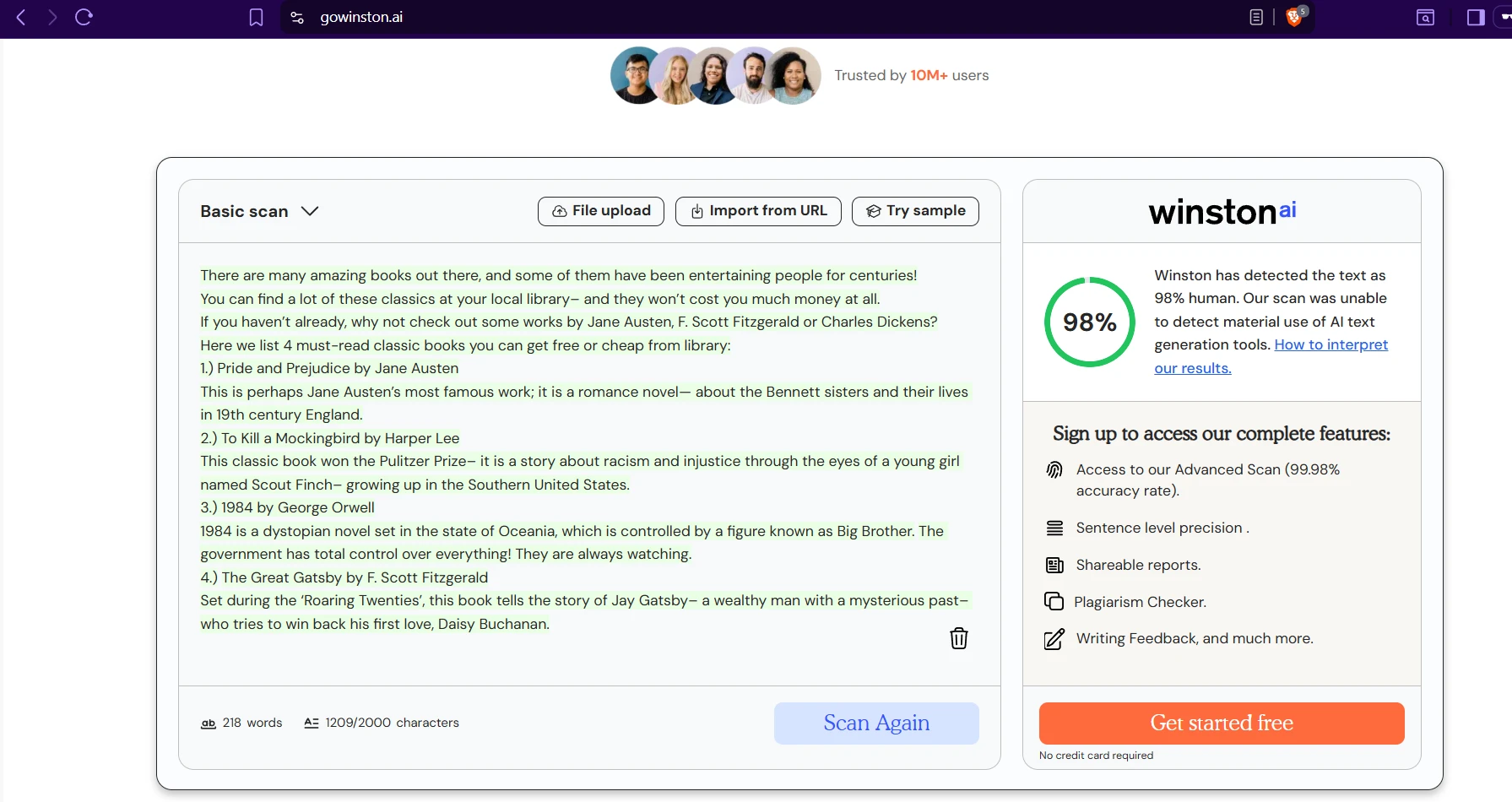

The dashboard screenshots match the pattern from the dataset. Some AI passages were caught immediately by both tools. Others looked surprisingly human to both. That is exactly why a single screenshot can be misleading: you need the full sample set before you can say anything meaningful.

Final verdict

If the goal is to be fair to students, QuillBot has the stronger case in this dataset. It was more forgiving toward genuine human writing, produced zero human false alarms at the 50% cutoff, and still ended with slightly better overall accuracy.

Winston AI deserves credit for being more aggressive against AI on average, and that may appeal to users who care most about catching suspicious writing. But the numbers also show the cost of that strategy: a detector that is too eager can still misread authentic work. For classrooms, that is a serious issue.

The most honest conclusion is this: neither tool should be used as a final judge on its own. Both detectors let a meaningful share of AI text pass as human. But if I had to choose the more student-friendly detector from this 160-sample test, QuillBot comes out ahead because it has the safer error profile.

![[STUDY] QuillBot vs Winston AI: Which AI Detector Is Better for Students?](/static/images/quillbot_vs_winston_blog_featured_imagepng.webp)