AI detectors are becoming a new “gatekeeper” for students—sometimes deciding whether your work is trusted or questioned. So I tested Grammarly’s AI detector vs QuillBot’s AI detector on 160 samples and recorded each tool’s human score (higher score = the tool thinks it’s more likely written by a human). The surprise wasn’t which tool looked smarter—it was how easily AI can still slip through.

The “Human Score” in Plain English

Both tools output a score that behaves like a confidence meter. If the score is close to 1.0, the tool is saying “this looks human.” If it’s near 0.0, it’s saying “this looks AI.”

That sounds simple, but here’s the catch: detectors can fail in two different ways. A false positive is when a human-written paragraph gets flagged as AI. A false negative is when AI-written text gets treated as human.
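
To make those two failure modes concrete, here is a minimal Python sketch that counts them at a single cutoff. The 0.5 threshold, the function name `error_rates`, and the toy scores are all assumptions for illustration; the experiment recorded raw scores and did not fix a cutoff.

```python
def error_rates(human_scores, ai_scores, threshold=0.5):
    """Return (false_positive_rate, false_negative_rate) for a human-score cutoff.

    A sample is treated as "AI" when its human score falls below the threshold.
    The 0.5 default is an assumed cutoff, not one taken from either tool.
    """
    # Human text flagged as AI: a false positive.
    false_positives = sum(1 for s in human_scores if s < threshold)
    # AI text treated as human: a false negative.
    false_negatives = sum(1 for s in ai_scores if s >= threshold)
    return false_positives / len(human_scores), false_negatives / len(ai_scores)


# Made-up scores for illustration only, not values from the experiment.
toy_human = [0.99, 0.97, 0.40, 1.00]
toy_ai = [0.10, 0.85, 0.30, 0.95, 0.20]

fpr, fnr = error_rates(toy_human, toy_ai)
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
```

Moving the threshold up catches more AI but also flags more human writing, which is why the two error types have to be read together.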

What I Tested (So You Can Trust the Comparison)

I used 160 total samples: 78 human-written and 82 AI-written. Every sample went through both Grammarly and QuillBot, and I recorded the human score each tool produced.

Important note: AI detectors change over time. A future update could shift these numbers, so treat this as a snapshot of what happened during this experiment—not a permanent “final truth.”

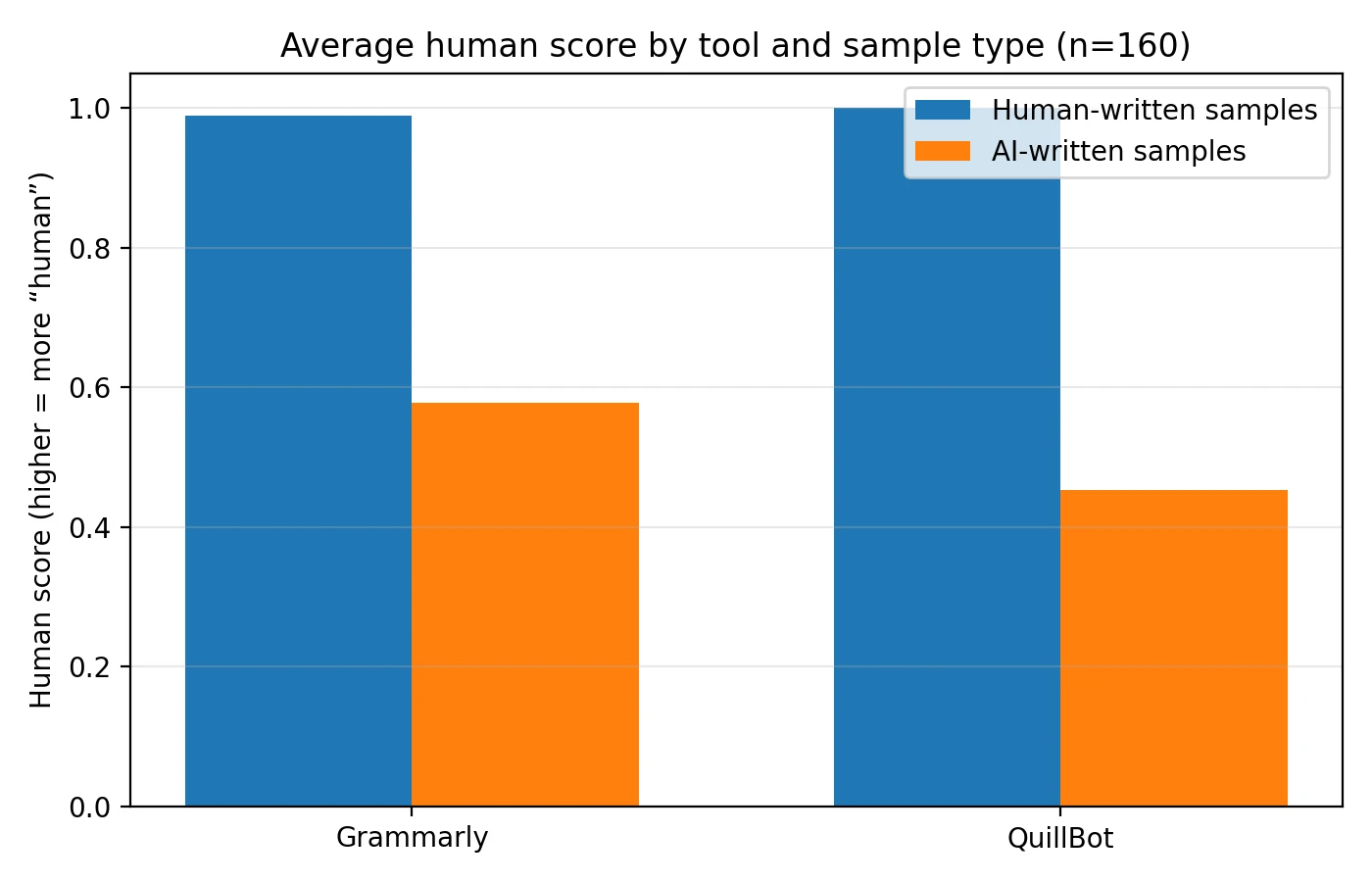

Key Findings From the Dataset (n=160)

- Human-written samples: Grammarly averaged 0.990 and QuillBot averaged 1.000 (both were very generous to human writing).

- AI-written samples: Grammarly averaged 0.578 while QuillBot averaged 0.452 (QuillBot was stricter on AI).

- Big picture: Both tools rarely accused human text, but both tools often gave AI a surprisingly “human-looking” score.
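
For readers who want to reproduce this kind of summary on their own samples, the averages above are just group means per tool. The sketch below assumes a hypothetical record layout of `(true_label, grammarly_score, quillbot_score)` and uses invented numbers, not the 160 samples from this test.

```python
from statistics import mean

# Hypothetical records: (true_label, grammarly_score, quillbot_score).
# These values are invented for illustration.
records = [
    ("human", 0.98, 1.00),
    ("human", 1.00, 1.00),
    ("ai", 0.70, 0.40),
    ("ai", 0.30, 0.20),
]


def average_by_label(rows):
    """Average each tool's human score separately for human and AI samples."""
    result = {}
    for label in sorted({row[0] for row in rows}):
        group = [row for row in rows if row[0] == label]
        result[label] = {
            "grammarly": mean(row[1] for row in group),
            "quillbot": mean(row[2] for row in group),
        }
    return result


averages = average_by_label(records)
for label, tools in averages.items():
    print(label, {tool: round(value, 3) for tool, value in tools.items()})
```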

The One Chart That Explains the Whole Story

If you only look at one visual, make it this one. For human-written text, higher is better (you want your real writing recognized). For AI-written text, lower is better (you want AI to be caught).

What this shows is a classic trade-off: both tools are “friendly” to human writing (good!), but when it comes to AI, QuillBot pushes scores down more than Grammarly does. In other words, QuillBot is better at being suspicious of AI in this dataset.

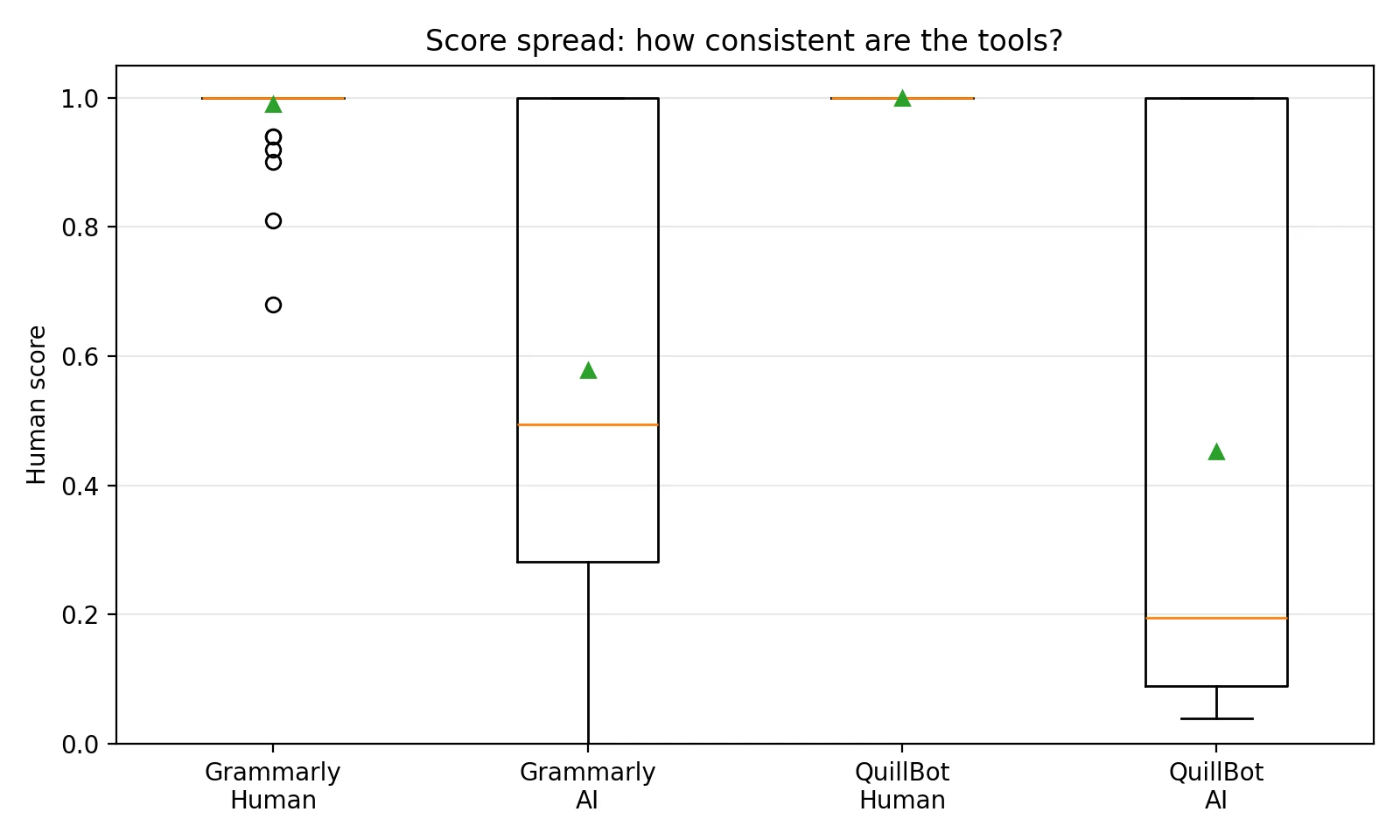

Consistency Check: Do the Tools Give Stable Results?

Averages are useful, but they can hide weird behavior. So I also looked at the spread of scores (how widely the results vary). One simple way to visualize spread is a box plot: a compact "score summary" that shows where most results fall (the median and the middle 50% of scores), plus outliers (the odd cases).
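
If you are curious what a box plot actually summarizes, here is a rough sketch of the numbers behind one. It assumes the common 1.5 × IQR rule for marking outliers, which may not match the settings used for the charts in this post, and the scores are made up.

```python
from statistics import quantiles


def box_summary(scores):
    """Compute the values a typical box plot draws for a list of scores."""
    ordered = sorted(scores)
    # Quartiles: the box spans q1 to q3, with a line at the median.
    q1, median, q3 = quantiles(ordered, n=4, method="inclusive")
    iqr = q3 - q1
    # Assumed convention: points beyond 1.5 * IQR from the box are outliers.
    low_fence = q1 - 1.5 * iqr
    high_fence = q3 + 1.5 * iqr
    outliers = [s for s in ordered if s < low_fence or s > high_fence]
    return {
        "min": ordered[0],
        "q1": q1,
        "median": median,
        "q3": q3,
        "max": ordered[-1],
        "outliers": outliers,
    }


# Invented scores: mostly high, with one odd low case.
toy_scores = [0.95, 0.97, 0.98, 0.99, 1.00, 0.20]
summary = box_summary(toy_scores)
print(summary)
```

A tight box with a few far-away outliers means the tool is usually consistent but occasionally very wrong; a tall box means its scores are all over the place.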

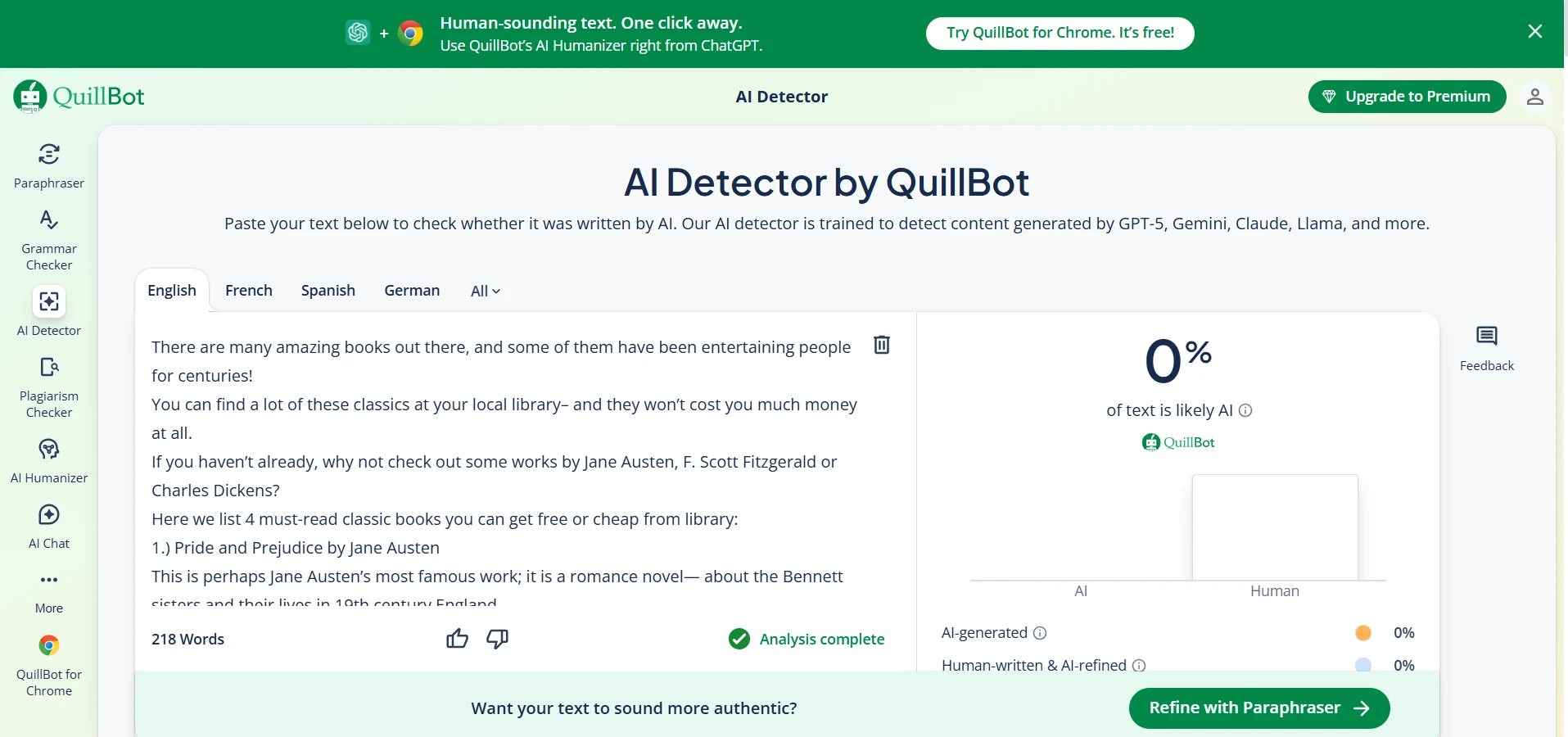

The human-written scores cluster very high for both tools (a good sign for students). But the AI-written scores are messy: there are plenty of AI samples that still land high—sometimes extremely high. That’s the uncomfortable reality: good AI writing can look “human” to detectors.

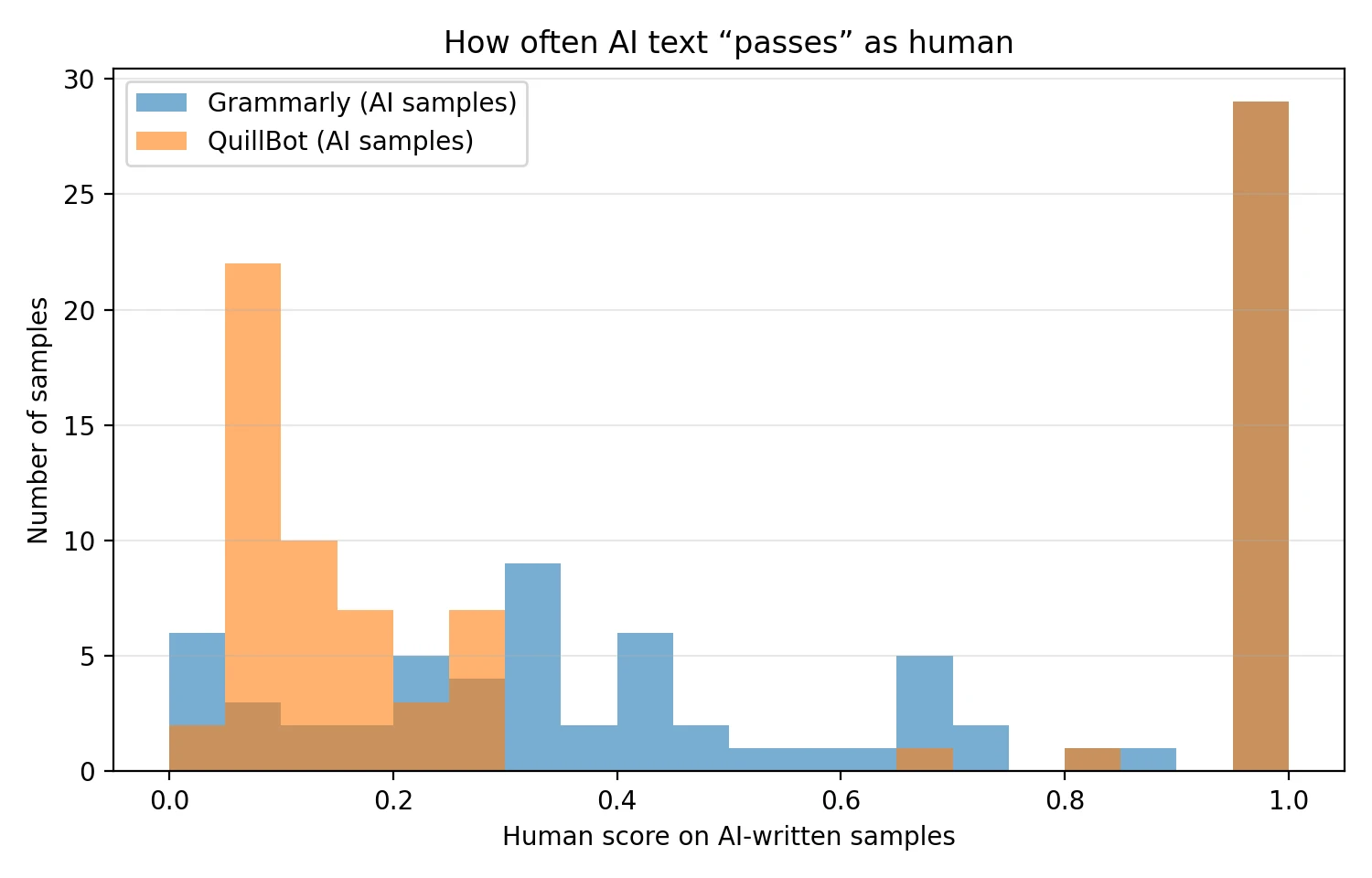

How Often Does AI “Pass” as Human?

Next, I zoomed in only on the AI-written samples and asked: how frequently do the tools give AI a high human score? The histogram below answers that by counting how many AI samples fall into each score range.

In this dataset, QuillBot’s AI scores are more concentrated toward the lower side than Grammarly’s, which supports the same conclusion: QuillBot was the tougher judge on AI. But neither tool completely solves the “AI can pass” problem.
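
The counting behind a histogram like this is simple enough to sketch. The version below assumes five equal-width score ranges between 0.0 and 1.0 and uses invented AI scores; the bin count in the actual chart may differ.

```python
def histogram(scores, bins=5):
    """Count how many scores in [0.0, 1.0] fall into each equal-width range."""
    counts = [0] * bins
    for s in scores:
        # A score of exactly 1.0 is placed in the top range.
        index = min(int(s * bins), bins - 1)
        counts[index] += 1
    return counts


# Invented human scores for AI-written samples, for illustration only.
toy_ai_scores = [0.05, 0.15, 0.35, 0.55, 0.90, 0.95, 1.00]
counts = histogram(toy_ai_scores)

for i, count in enumerate(counts):
    low, high = i / len(counts), (i + 1) / len(counts)
    print(f"{low:.1f} to {high:.1f}: {'#' * count} ({count})")
```

The tall bar to watch is the top range: every AI sample counted there is one that would "pass" as human under almost any cutoff.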

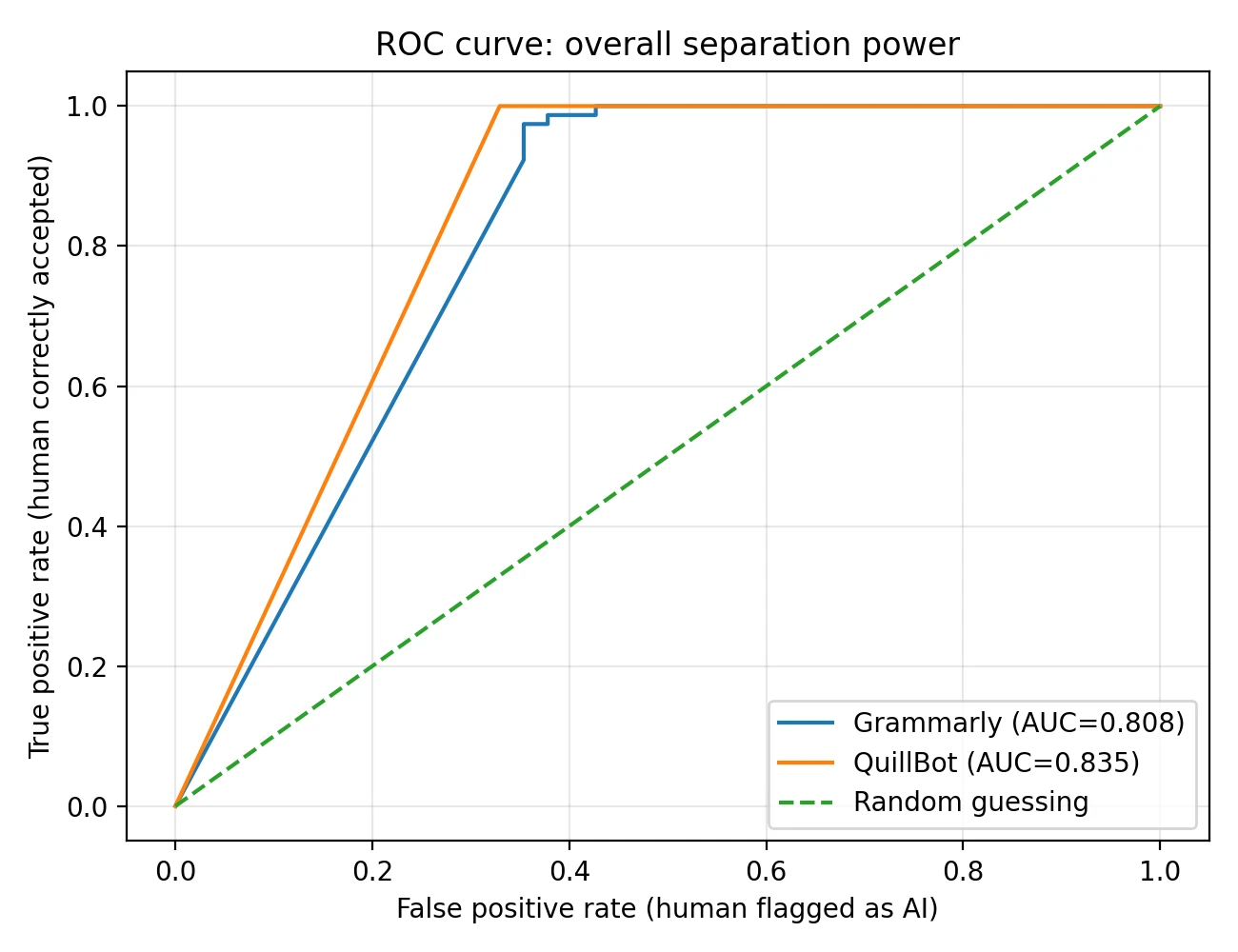

A Simple “Overall Quality” Score (Explained Without Math Panic)

I also computed something called AUC from an ROC curve. Here’s the student-friendly meaning: AUC measures how well a tool can separate two groups—human-written vs AI-written—across all possible cutoffs. An AUC of 1.0 would mean perfect separation. An AUC of 0.5 is basically random guessing.

In my results, QuillBot achieved a slightly higher AUC (0.835) than Grammarly (0.808), which matches what we saw earlier: QuillBot separated AI vs human a bit better in this dataset.
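
One way to build intuition for AUC: it equals the chance that a randomly picked human sample gets a higher human score than a randomly picked AI sample, with ties counting as half. The sketch below computes it that way by comparing every pair. It is an illustrative implementation with made-up scores, not the exact code used to produce the numbers above.

```python
def auc(human_scores, ai_scores):
    """AUC as the share of (human, AI) pairs where the human sample scores higher.

    Ties count as half a win. This pairwise form is equivalent to the area
    under the ROC curve when "human" is treated as the positive class.
    """
    wins = 0.0
    for h in human_scores:
        for a in ai_scores:
            if h > a:
                wins += 1.0
            elif h == a:
                wins += 0.5
    return wins / (len(human_scores) * len(ai_scores))


# Invented scores for illustration only.
toy_human = [0.99, 0.95, 0.60]
toy_ai = [0.10, 0.70, 0.95]

toy_auc = auc(toy_human, toy_ai)
print(f"AUC on toy data: {toy_auc:.3f}")
```

Because it looks at every pair, AUC does not depend on picking a cutoff, which is what makes it handy for comparing two detectors head to head.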

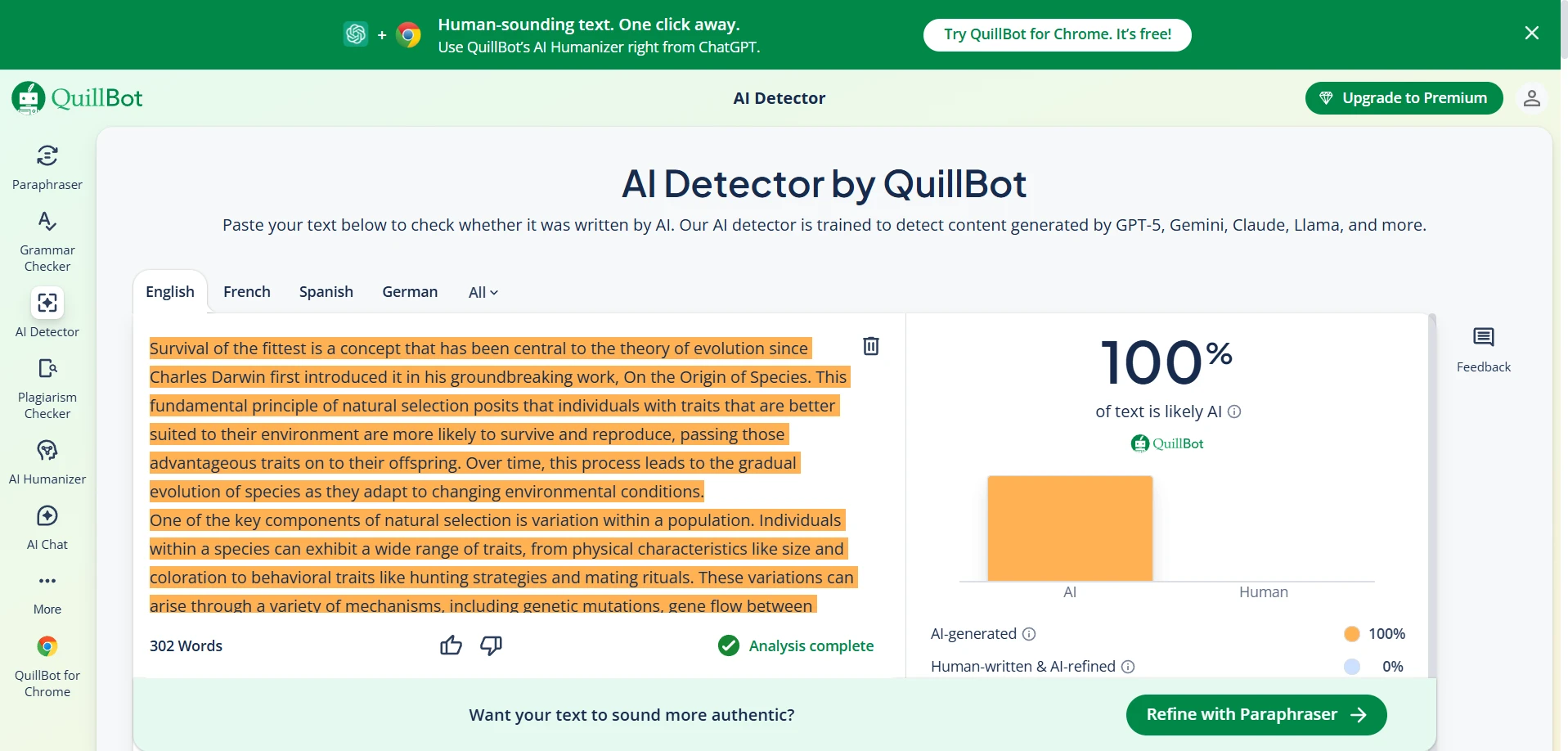

Grammarly Detector: What It Looks Like in Real Use

Grammarly’s detector behaved like a “benefit-of-the-doubt” system here: it rarely punished human writing, but it also let a lot of AI writing score fairly high. If your goal is to avoid false accusations as a student, that gentleness can feel safer. If your goal is catching AI reliably, it may feel too forgiving.

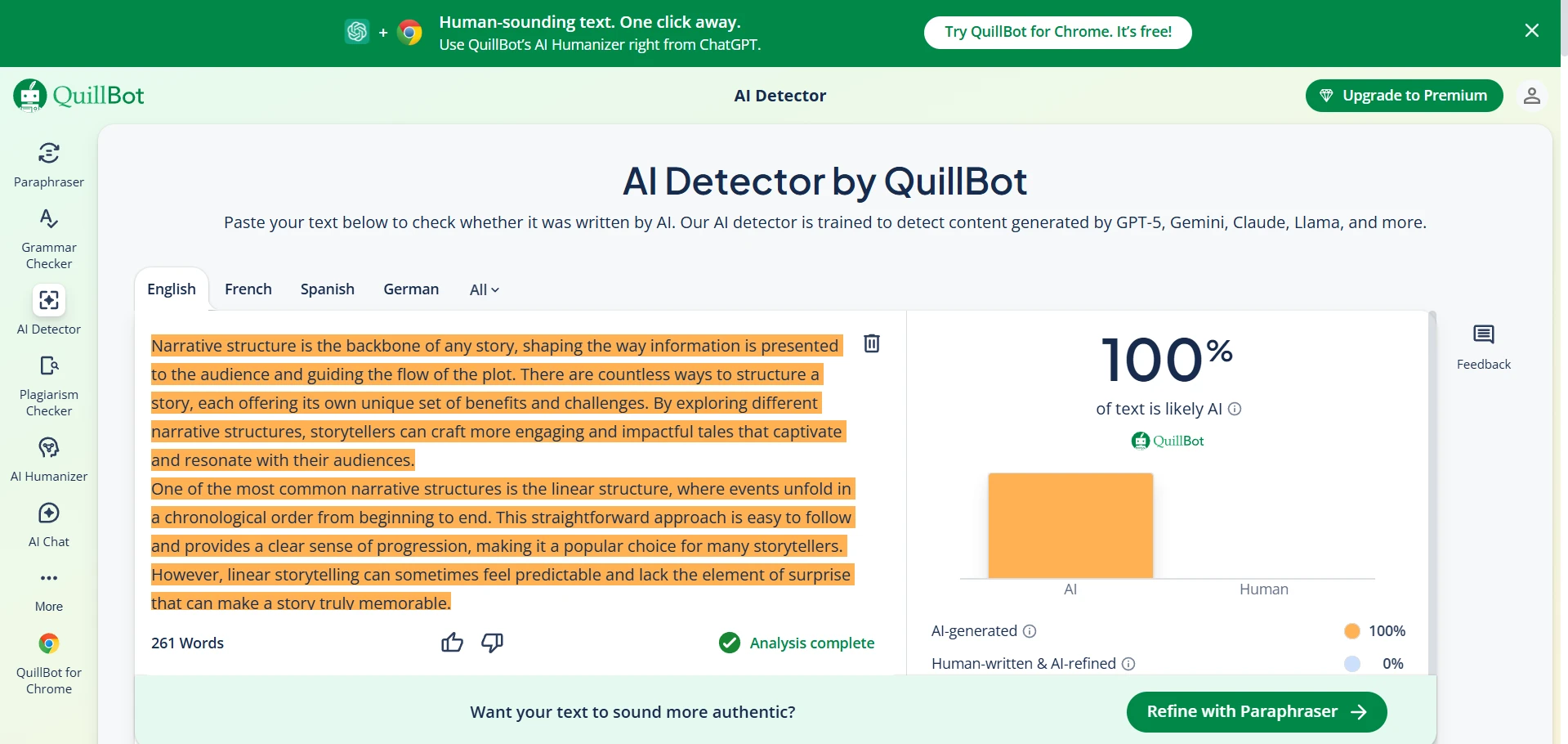

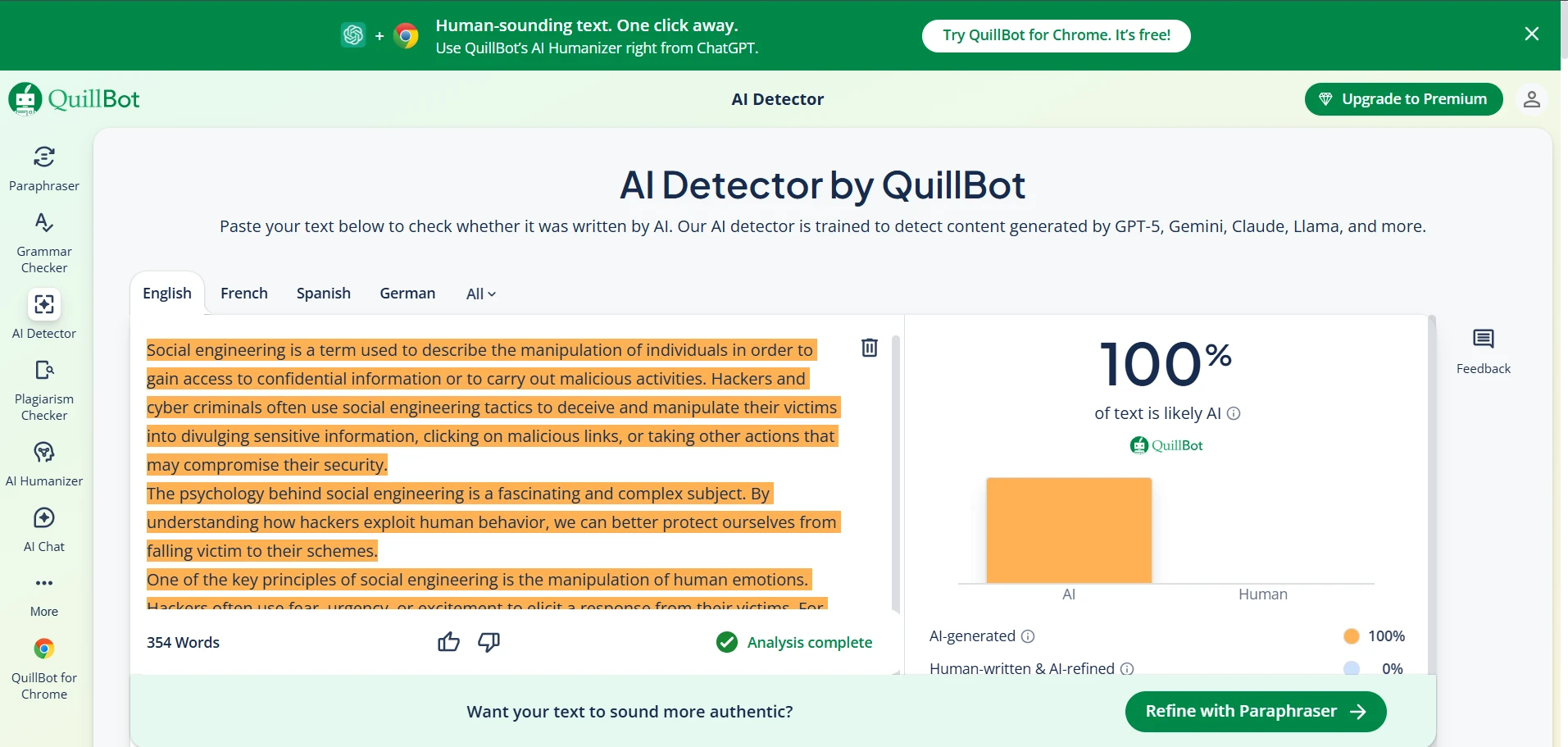

QuillBot Detector: What It Looks Like in Real Use

QuillBot was more skeptical in this dataset, pushing AI samples toward lower human scores more often. That sounds like a win, and by the numbers it is slightly stronger here (average AI score of 0.452 vs. 0.578, AUC of 0.835 vs. 0.808). But the same core issue remains: some AI outputs still scored high.

Final Verdict: Which Detector Should Students Trust?

If you want the most student-friendly outcome, meaning your genuine writing is unlikely to be wrongly accused, both tools performed well on human-written samples in this dataset.

If you care more about catching AI reliably, QuillBot had the edge: it gave lower scores to AI on average and had a slightly better overall separation score (AUC). Still, neither tool is a “lie detector.” Some AI text scored high enough to look convincingly human.

The practical takeaway is simple: AI detectors are signals, not judges. For students, the safest strategy is to keep drafts, outlines, and sources—proof of your process beats any single score.

![[SHOCKING RESULTS] Grammarly vs QuillBot AI Detector: I Tested 160 Samples](/static/images/grammarly_vs_quillbots_ai_detectorpng.webp)