A tool does not need to be perfect to look impressive. It only needs a few strong examples. That is why bypass tools can feel more powerful than they really are. To test Stealthwriter more carefully, I reviewed 100 rewritten samples and converted Originality.ai's AI output into a human score, where a higher number means the text looked more human to the detector. The result was not a simple win or loss. It was a story about inconsistency.

How this test was set up

Each sample in this dataset contains an original passage, a Stealthwriter rewrite, and the final score after checking the rewrite in Originality.ai. Because Originality.ai normally reports how likely a passage is to be AI, I flipped that into a reader-friendly scale: 100% means “looks fully human” and 0% means “looks fully AI”.
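For readers who want to reproduce the flip, here is a minimal sketch. It assumes the conversion is a simple complement (100 minus the reported AI percentage); the function name `human_score` is made up for illustration, and the sample values are not taken from the dataset.

```python
def human_score(ai_percent: float) -> float:
    """Flip an AI-likelihood percentage into a human score on a 0-100 scale.

    Assumption: the human score is the simple complement of the AI score.
    """
    if not 0.0 <= ai_percent <= 100.0:
        raise ValueError("ai_percent must be between 0 and 100")
    return 100.0 - ai_percent


# Illustrative values only, not taken from the 100-sample dataset.
for ai in (0.0, 25.0, 100.0):
    print(f"AI {ai:.1f}% -> human {human_score(ai):.1f}%")
```

Under that assumption, a passage flagged as 25% AI would show up here as 75% human.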

I kept the analysis simple on purpose. Students do not need a wall of technical language to understand what matters here. The main questions were straightforward: How often did the rewrite clear a strong human-looking score? How often did it fail badly? And just as important, what happened to the quality of the writing along the way?


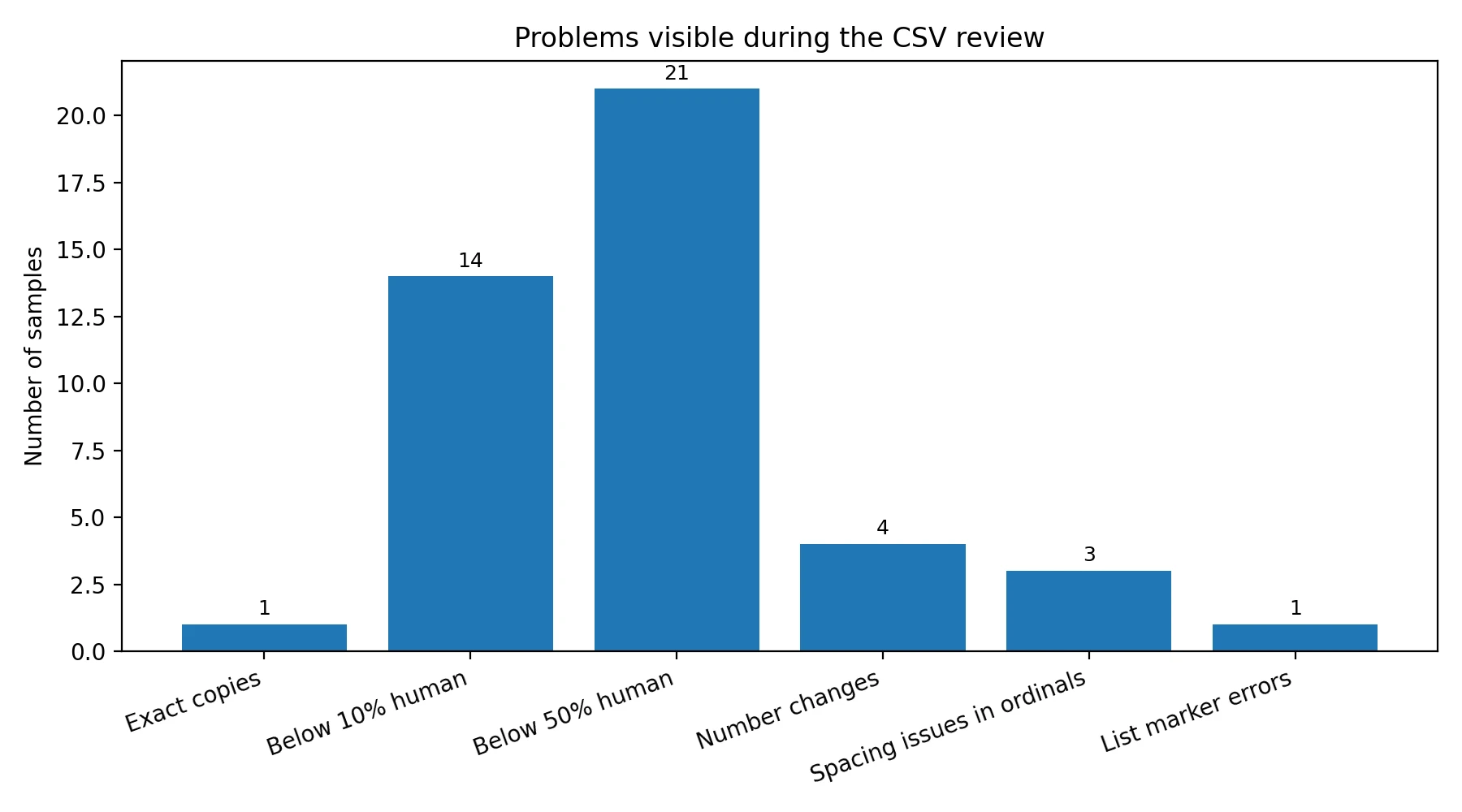

What stood out immediately

- Average human score: 74.3%

- Median human score: 93.5% (the middle result, which shows many samples scored high even though some failed badly)

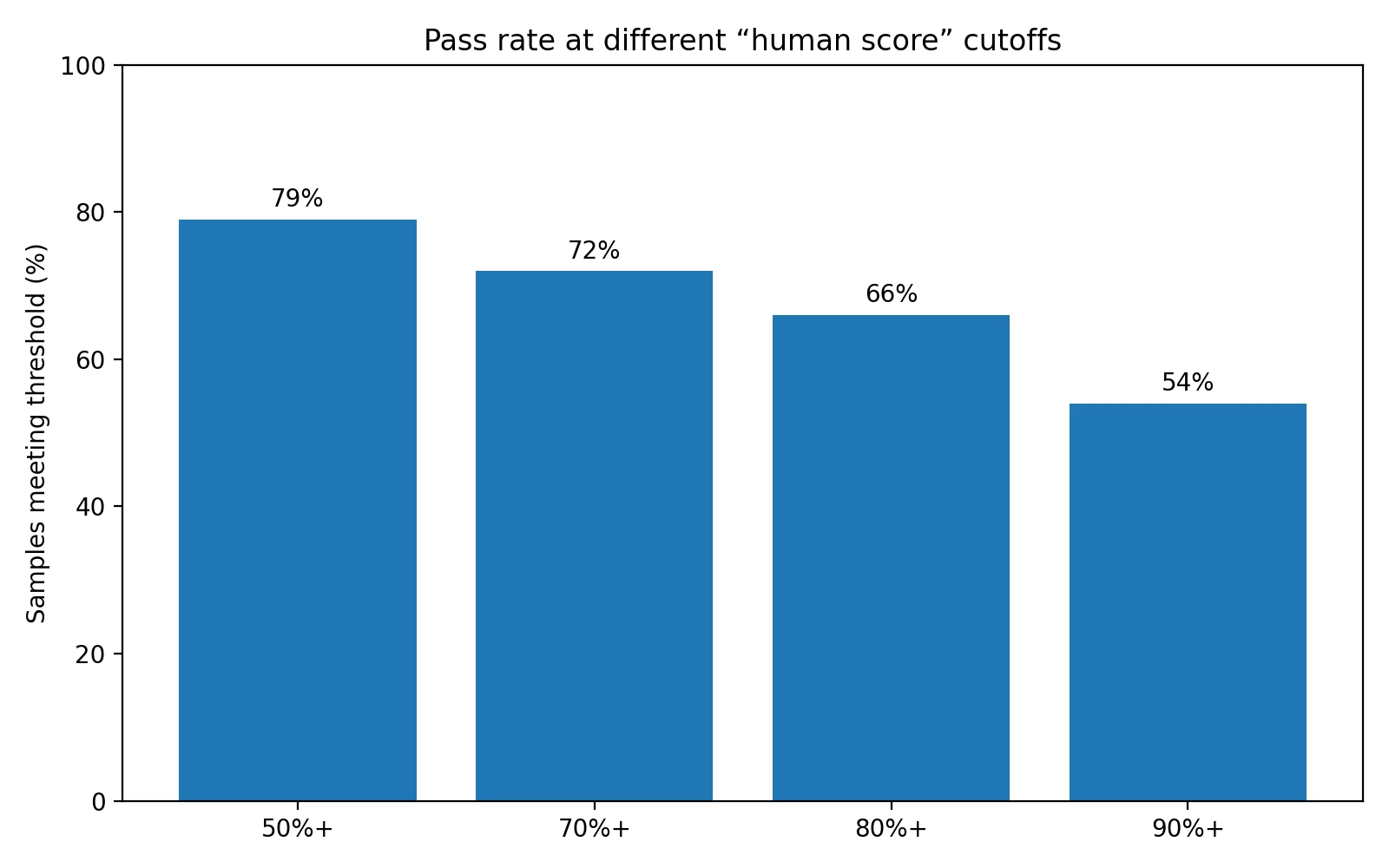

- Only 54% of rewrites reached a very strong 90%+ human score

- 21% fell below 50% human, and 14% crashed below 10%

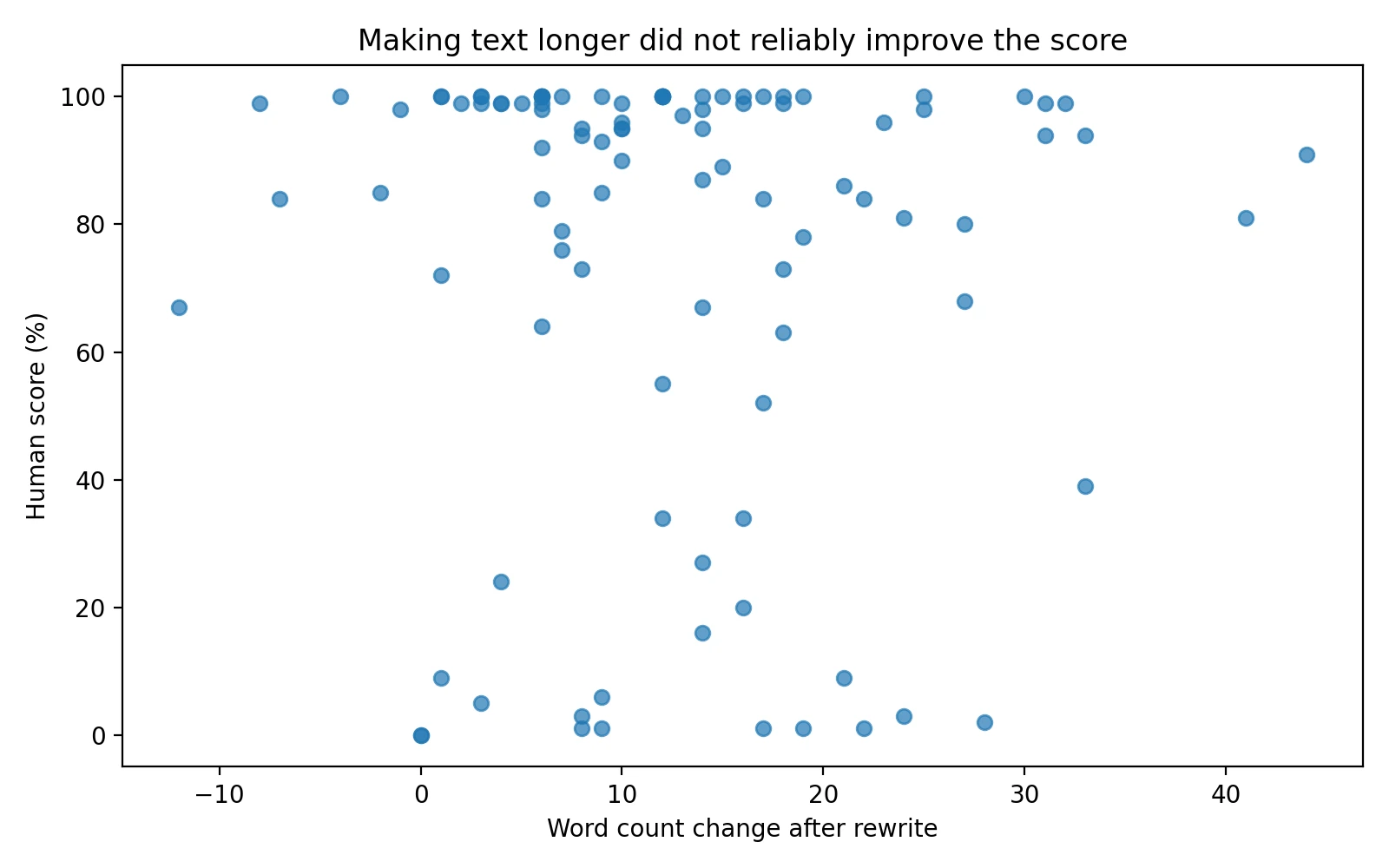

- On average, rewrites were 6.9% longer, but making them longer did not meaningfully improve the score
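The length figure above can be computed in more than one way. The sketch below assumes length is measured in whitespace-separated words and that the change is expressed relative to the original; both choices are assumptions, and the helper name `length_change_percent` is hypothetical.

```python
def length_change_percent(original: str, rewrite: str) -> float:
    """Percent change in word count from the original to the rewrite.

    Assumption: length means whitespace-separated words, not characters.
    """
    original_words = len(original.split())
    rewrite_words = len(rewrite.split())
    if original_words == 0:
        raise ValueError("original passage must contain at least one word")
    return 100.0 * (rewrite_words - original_words) / original_words


# Made-up passages for illustration only.
before = "Frogs begin life as tadpoles."
after = "Frogs begin their life as small tadpoles."
print(f"{length_change_percent(before, after):+.1f}% words")
```

Averaging this value across all samples would give a single "rewrites were X% longer" figure of the kind reported in the list.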

The biggest lesson: Stealthwriter is not steady

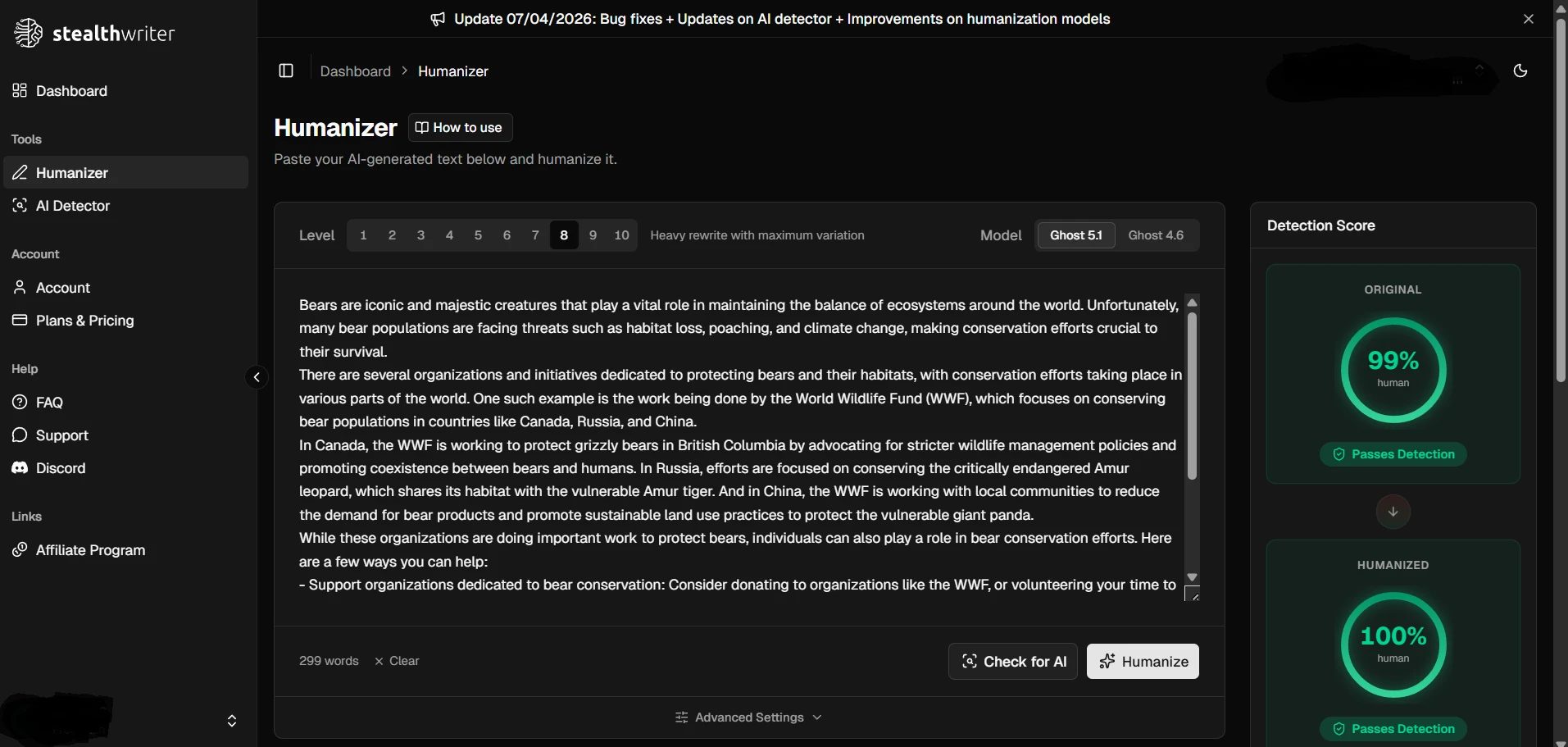

If you only look at the best examples, Stealthwriter seems extremely effective. In this dataset, 24 out of 100 rewrites scored a perfect 100% human. That is enough to create the impression that the tool “works.” But that is only half the picture.

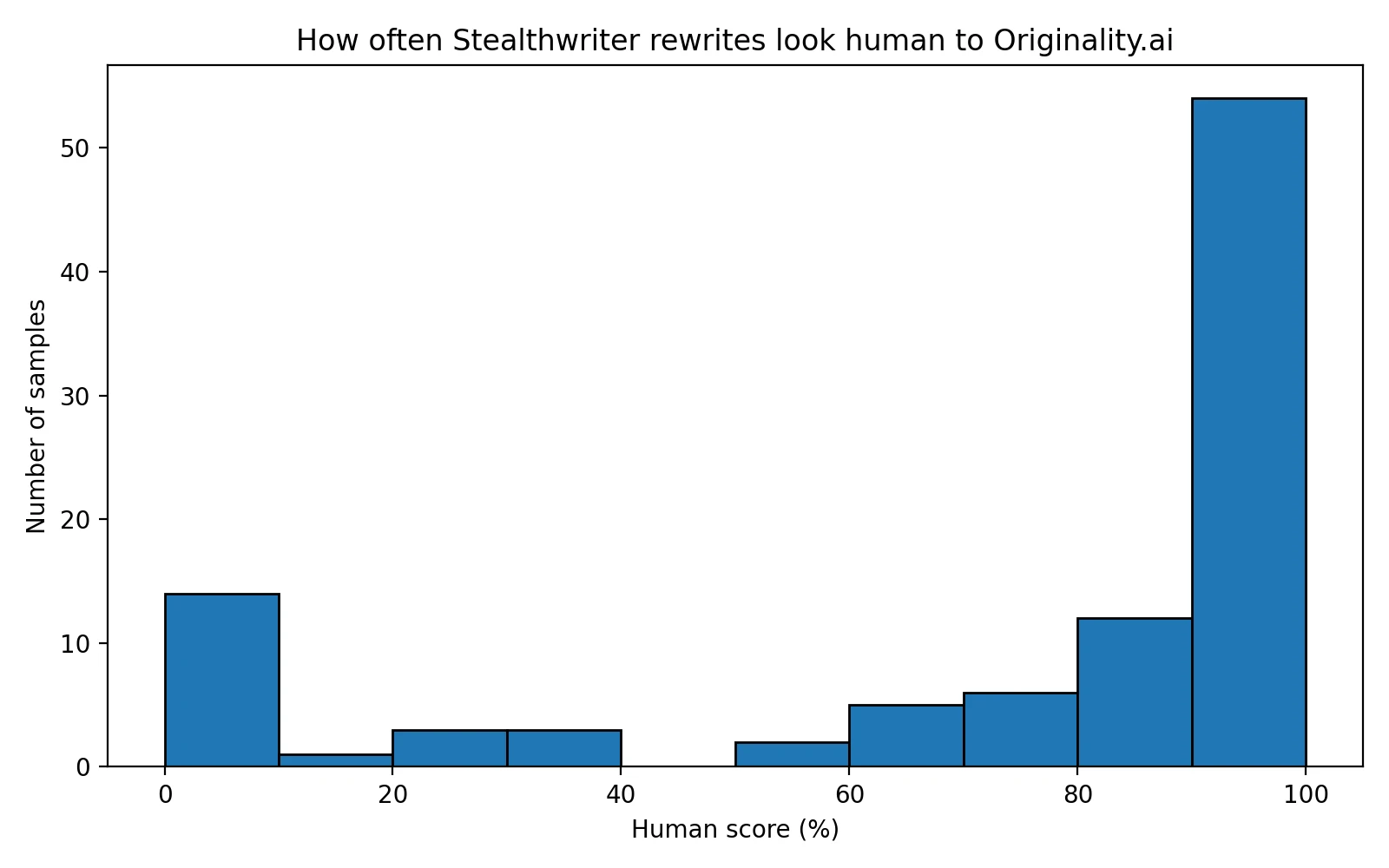

The full distribution tells a more cautious story. More than half of the rewrites landed in the top band, but there was also a long tail of failures. In plain English, Stealthwriter often did well, then suddenly did very badly. For anyone hoping for reliable bypass performance, that is a problem. A tool that succeeds on Monday and collapses on Tuesday is hard to trust.

The threshold chart makes that even clearer. About 79% of rewrites cleared a basic 50% human score, but once the bar became stricter, the pass rate fell. Only 66% reached 80% human, and only 54% crossed 90% human. That drop matters because most users of bypass tools are not aiming for “barely acceptable.” They want a result that looks safe and convincing.
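The threshold idea is easy to check on any list of human scores. This is a rough sketch, assuming "cleared" means a score at or above the bar; the scores below are invented for illustration and are not the dataset behind the chart.

```python
def pass_rate(scores, threshold):
    """Percentage of scores that are at or above the threshold."""
    if not scores:
        raise ValueError("scores must not be empty")
    passed = sum(1 for s in scores if s >= threshold)
    return 100.0 * passed / len(scores)


# Made-up human scores for illustration; not the article's data.
example_scores = [100.0, 97.0, 93.5, 85.0, 62.0, 41.0, 8.0, 0.0]

for bar in (50, 80, 90):
    print(f"{bar}%+ human: {pass_rate(example_scores, bar):.1f}% of samples")
```

The pass rate can only stay flat or fall as the bar rises, so the useful signal is how steeply it falls.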

Why the average and the middle score tell different stories

The average score was 74.3%, while the median was much higher at 93.5%. That gap is useful. It means the dataset was pulled in two directions at once: many samples performed very well, but a smaller group performed so badly that they dragged the average down. This is what people sometimes call a split distribution. Basically, the results bunch up near the top and also near the bottom instead of clustering in one reliable range.
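To see how a few failures can pull the average away from the median, here is a small sketch using Python's standard `statistics` module. The ten scores are invented to mimic a split distribution; they are not the test data, and `summarize` is a hypothetical helper name.

```python
from statistics import mean, median


def summarize(scores):
    """Return the mean, the median, and the gap between them."""
    avg = mean(scores)
    mid = median(scores)
    return {"mean": avg, "median": mid, "gap": mid - avg}


# Made-up scores: most cluster near the top, a few sit near zero.
example_scores = [100, 100, 98, 96, 94, 92, 90, 5, 2, 0]

summary = summarize(example_scores)
print(f"mean={summary['mean']:.1f} median={summary['median']:.1f} "
      f"gap={summary['gap']:.1f}")
```

In this toy example, three very low scores are enough to push the mean far below the median, even though seven of the ten samples scored 90 or higher.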

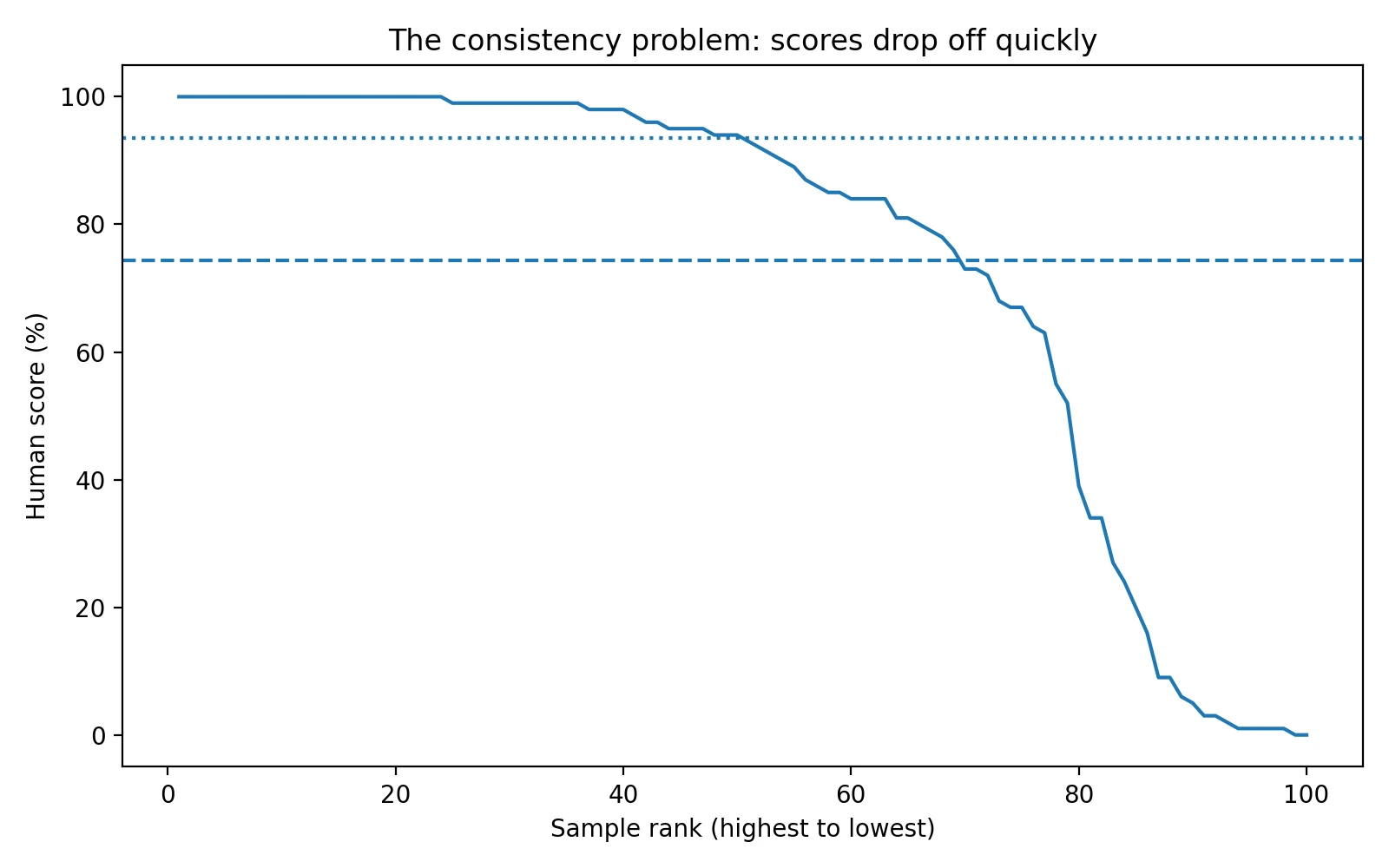

That pattern shows up in the ranked score curve. The line stays high for a while, then drops sharply. For students, the takeaway is simple: Stealthwriter can produce strong-looking rewrites, but it does not do so evenly. A few dramatic failures can ruin the value of many earlier wins.

Passing a detector is not the same as producing a good rewrite

This was the most interesting part of the CSV review. Some rewrites scored well but still introduced visible problems. In other words, the detector score and the writing quality did not always move together.

One perfect-scoring rewrite changed “British Columbia” to “British Colombia”. Another turned “Chang'e” into “Changyu”. A different sample rewrote “From Tadpole to Frog: The Amazing Metamorphosis Process” into the clumsy title “The Wonderful Metamorphosis Process: Frog (Tadpole).” There was also a breakfast list where item 1 became item 2, creating duplicate numbering, and a King Tut sample that used the awkward formatting “18 th dynasty”.

These are not tiny cosmetic details. They affect trust. If a rewrite changes a place name, breaks a title, or damages list formatting, the text may look less natural to a human reader even when the detector gives it a high score.

Where the rewrites broke down

The weak samples were not just “a little AI-looking.” Several had more obvious rewrite damage. In the lowest-scoring group, I found cases where the output stayed almost unchanged, cases where sentences were clipped or shuffled, and cases where wording became awkward enough to distract from the message.

One rewrite in the dataset was an exact copy of the original passage and scored 0% human. That is the clearest possible miss. Other failures were messier. A travel article about waterfalls looked as if parts of its introduction had been cut and glued back in the wrong place. A battery safety piece contained broken phrasing. Some samples read like clean paraphrases at first glance, but on closer reading they had odd constructions, lost context, or moved ideas into less sensible order.
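Unchanged or barely changed outputs like these can be flagged automatically before any detector is involved. The sketch below uses `difflib.SequenceMatcher` from Python's standard library; the 0.95 cutoff and the helper names are assumptions chosen for illustration, not the method used in this review.

```python
from difflib import SequenceMatcher


def similarity(original: str, rewrite: str) -> float:
    """Character-level similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, original, rewrite).ratio()


def is_nearly_unchanged(original: str, rewrite: str, cutoff: float = 0.95) -> bool:
    """Flag rewrites that are identical or almost identical to the original.

    Assumption: a ratio of 0.95 or higher counts as "almost unchanged".
    """
    return similarity(original, rewrite) >= cutoff


# Made-up passages for illustration only.
source = "Lithium batteries should be stored away from heat."
print(is_nearly_unchanged(source, source))
print(is_nearly_unchanged(source, "Keep these cells somewhere cool and dry."))
```

A check like this only catches rewrites that stayed too close to the source. Clipped sentences, shuffled paragraphs, and awkward wording still need a human read.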

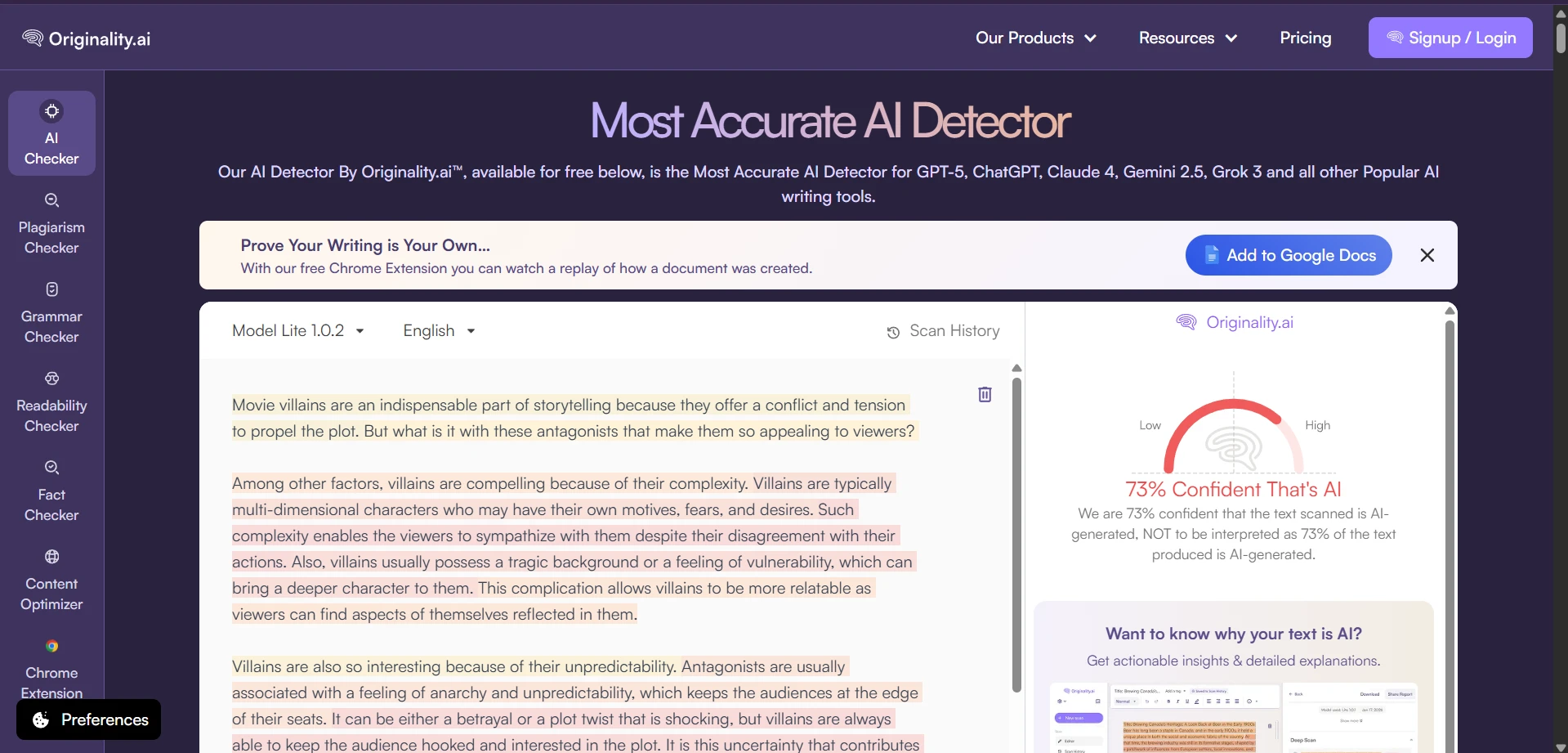

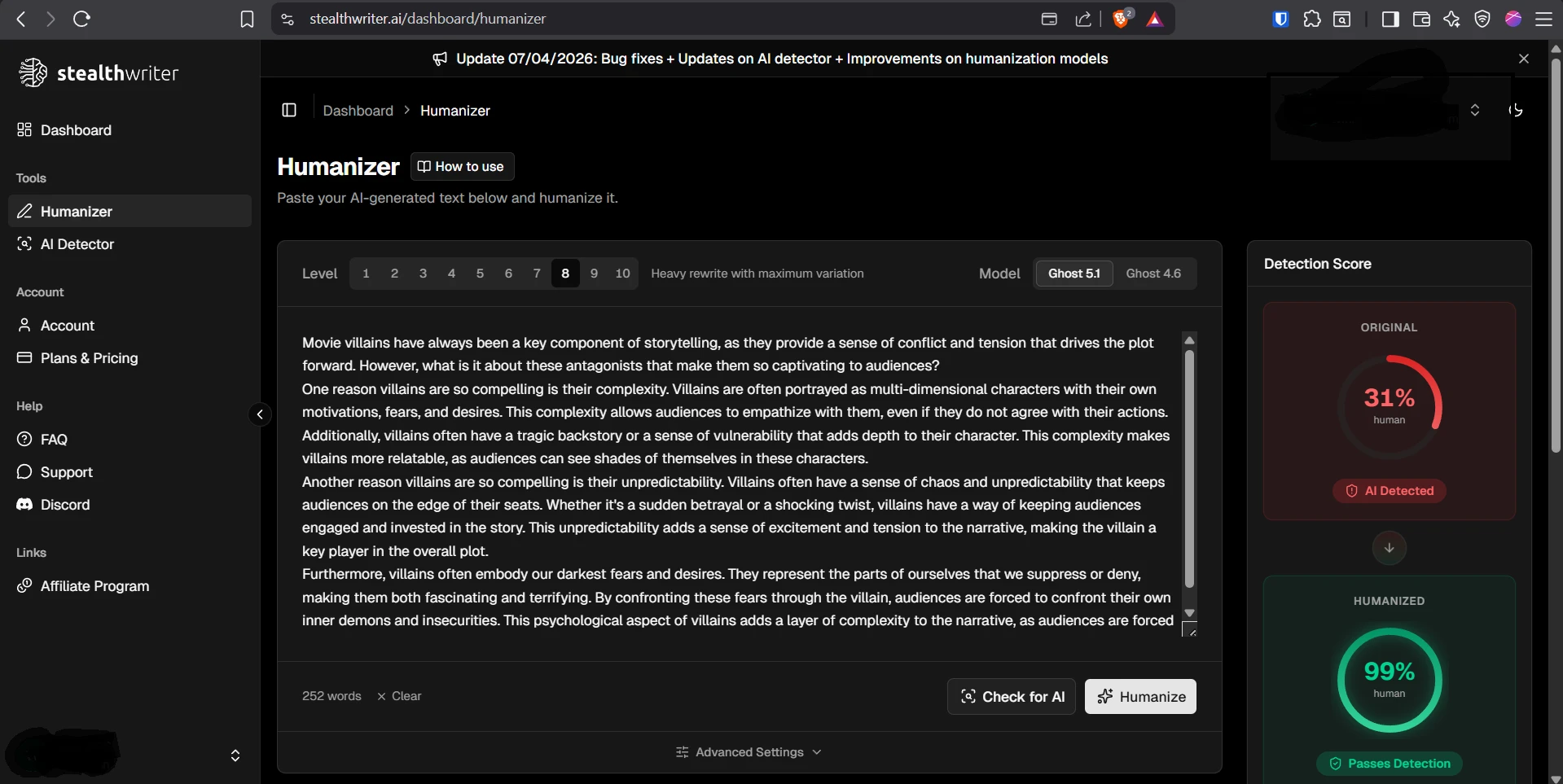

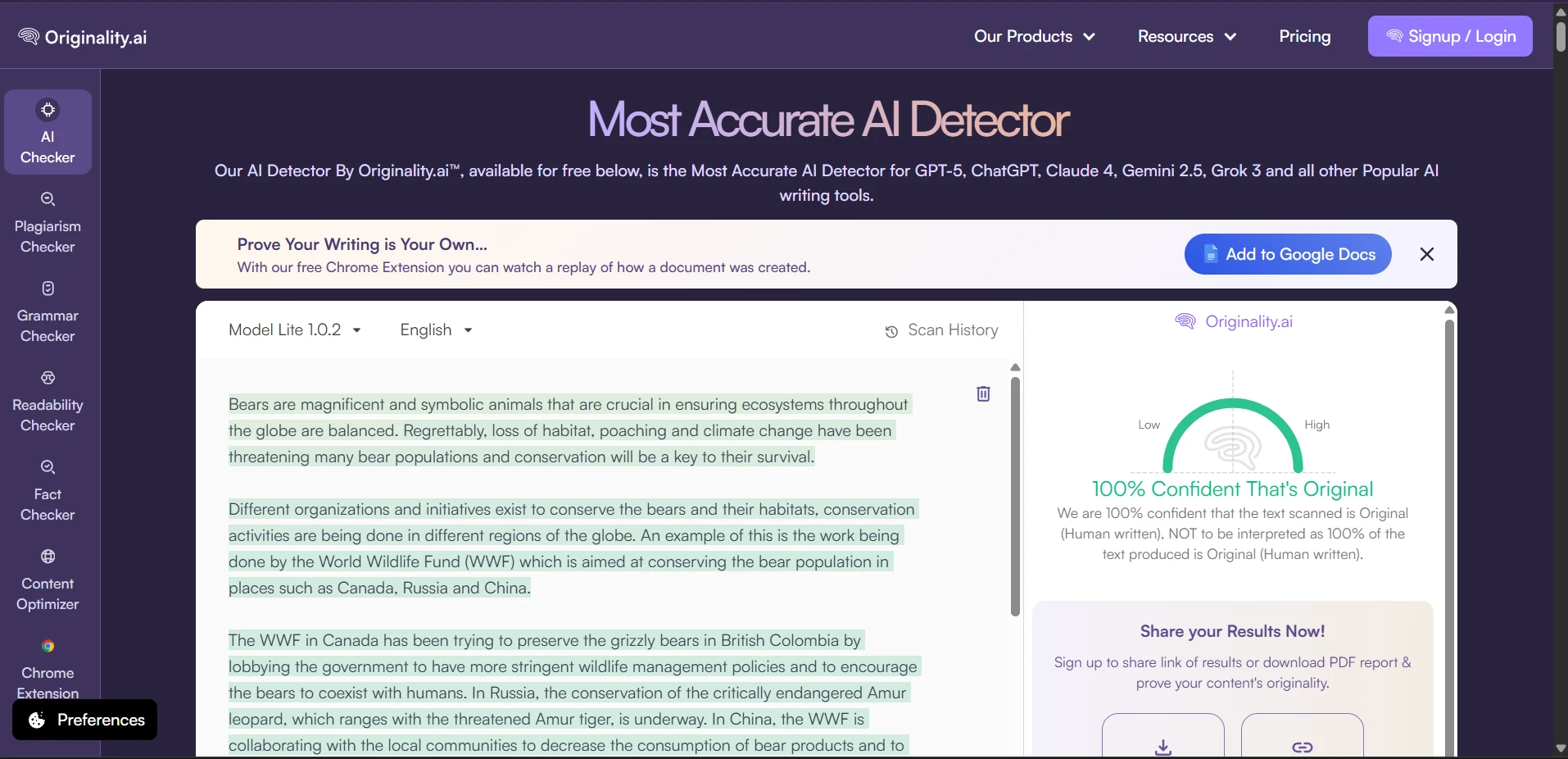

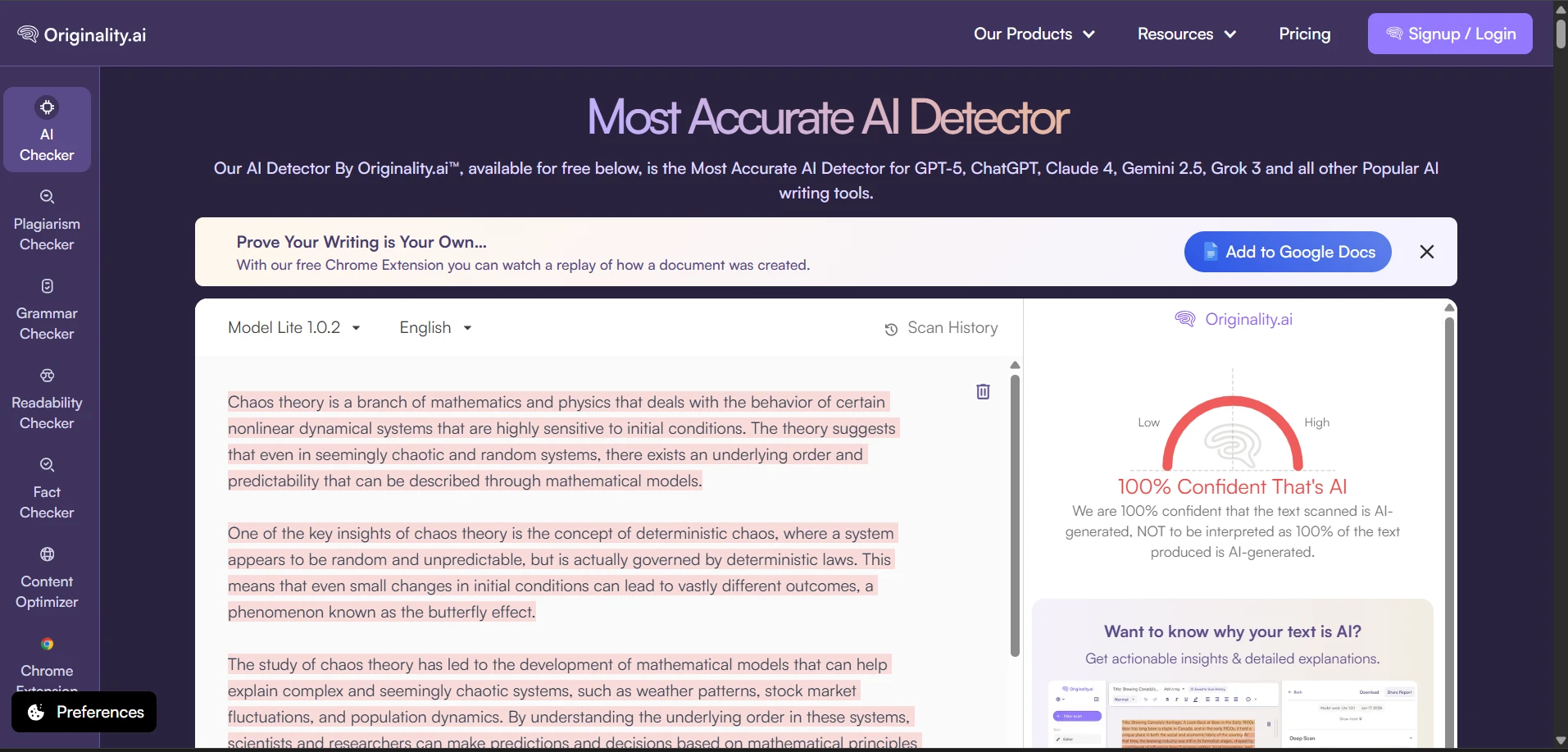

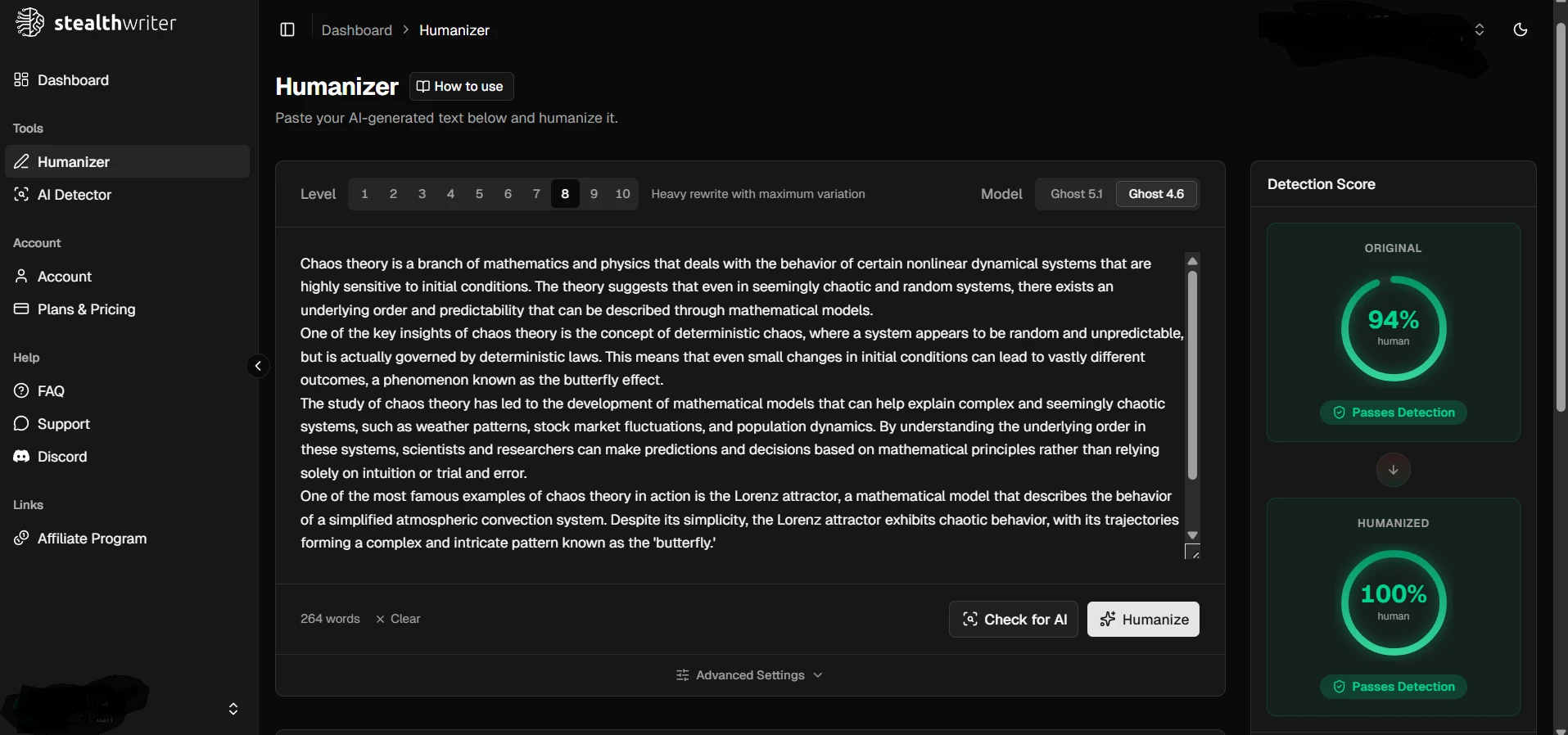

That is why the screenshots matter too. In selected examples, Stealthwriter clearly improved the detector result. Some passages that Originality.ai strongly flagged as AI were later shown as highly human after rewriting. But screenshots highlight possibilities, not consistency. A broad dataset tells you whether those wins are dependable. In this test, they were not dependable enough to call the tool safe.

So, how effective is Stealthwriter against Originality.ai?

Stealthwriter is effective often enough to look impressive, but not reliable enough to feel secure. In this 100-sample test, it produced many high human scores, yet it also generated a meaningful number of sharp failures. That makes the tool less like a guaranteed bypass and more like a gamble.

For students, that distinction matters. A detector score is only one layer of the story. If the rewrite introduces strange wording, broken formatting, or factual drift, the text can still feel off even when the score looks good. The strongest lesson from this dataset is not that bypassing is impossible. It is that high scores can hide weak writing, and low scores can appear without much warning.

If your goal is dependable, readable, believable writing, the safest conclusion from this dataset is clear: Stealthwriter sometimes beats Originality.ai, but it does not do so consistently enough to treat it as a sure thing.

![[STUDY] Can Stealthwriter really slip past Originality.ai? I tested 100 rewrites to find out.](/static/images/stealthwriter-ai-vs-originality-ai-featured-imagepng.webp)